Y2K Echoes in 2002: An Infrastructure Manager's Perspective

December 16th, 2002. I still remember the Y2K panic and the early-morning alarms on our systems as if it were yesterday. Yet here we are, with the echoes of that event lingering like a phantom from the past. It’s funny how history repeats itself in strange ways.

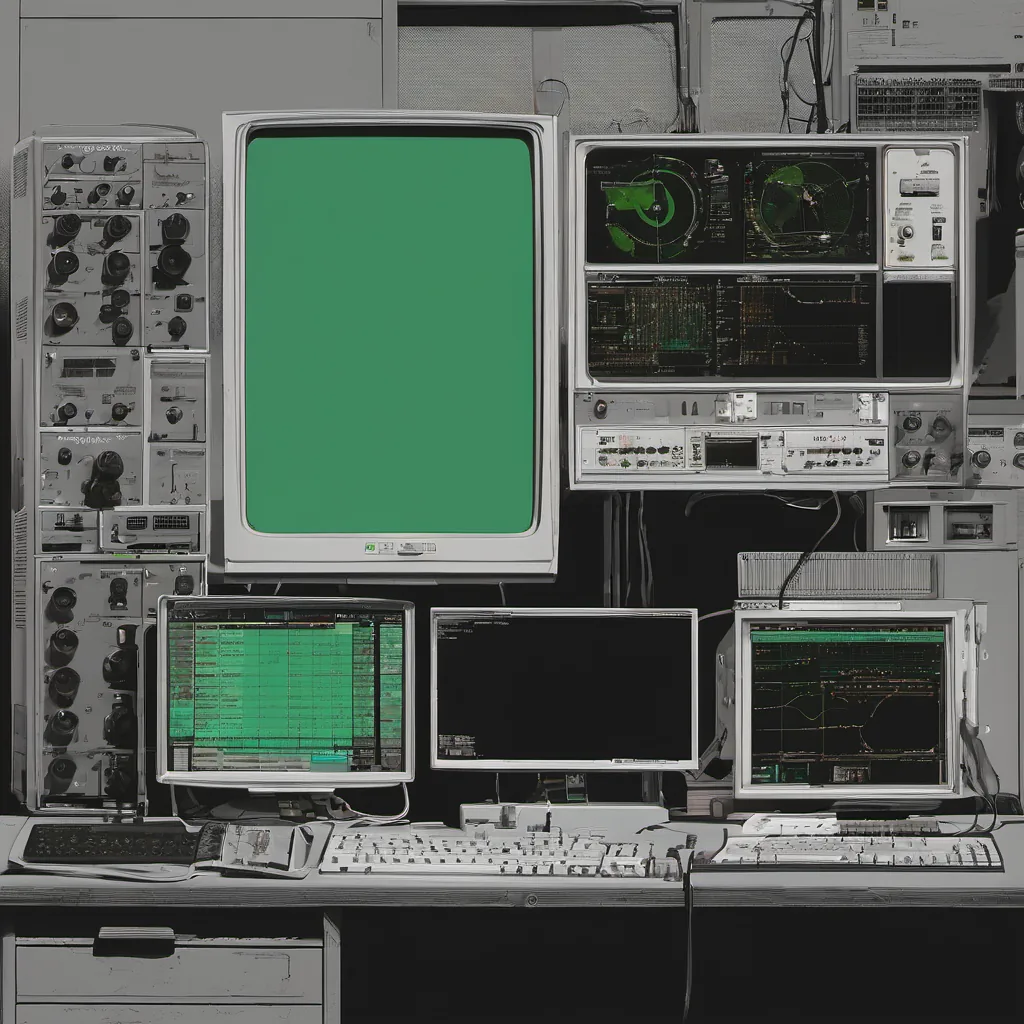

As an infrastructure manager for a mid-sized tech company, my days were filled with the usual mix of mundane tasks and occasional heart-stopping moments. We were dealing with aging hardware, migrating from our ancient Sun servers to newer x86 systems with VMware, and slowly but surely moving towards a more Linux-centric desktop environment. Apache still ruled the server world, and Sendmail was a constant source of debugging nightmares.

One particularly memorable day, I found myself in a war-room scenario that felt eerily familiar, albeit far less dramatic than Y2K. We were dealing with a mysterious issue affecting our e-commerce platform. Sales had been going strong over the holiday season, but suddenly we started getting reports of orders not being processed correctly. The logs didn’t show anything unusual, and from the outside the application servers appeared to be running smoothly.

I spent hours poking around, trying to reproduce the problem locally. It was like a detective story in which every piece of evidence seemed inconclusive. Finally, one evening, while browsing through the system’s configuration files, I noticed a line in our database connection settings: our application was configured to use an older version of MySQL with known date-handling issues.

The realization hit me like a freight train. The problem wasn’t in our code; it was in the environment. A quick update and some careful testing later, we were back up and running. The orders started processing correctly again, and for a moment, I felt relieved. But then came the question: How could such an issue slip through?

Reflecting on this incident, I couldn’t help but think about Y2K. Back then, everyone was so focused on the rollover from 1999 to 2000, when two-digit years wrapped from 99 to 00, that we almost forgot to look elsewhere, especially at how our systems handled dates in the future. Now here we were, dealing with a similar issue without even realizing it.

This experience underscored something I had been thinking about for years: the importance of continuously reviewing and updating our infrastructure components. Just because something worked last year didn’t mean it would work this year, or next year. The tech world moves fast, and if you’re not constantly pushing forward, you risk being caught off guard.

It’s easy to get stuck in a maintenance cycle where you’re just keeping the lights on. But true leadership means finding that balance between stability and innovation. You need to know when it’s time to replace a perfectly functional old server with something newer and better, even if it feels like a luxury at the moment.

As I typed away at my keyboard, making notes for our next team meeting, I realized how much this incident had taught me. Y2K was a reminder of the importance of disaster planning and attention to detail. This 2002 issue reinforced the need for continuous learning and for keeping our tech stack up to date.

In the end, it wasn’t just about fixing the bug; it was about making sure we were prepared for whatever challenges lay ahead. As I signed off on the resolution report, I made a mental note: we needed to revisit our date-handling mechanisms across all services, old and new.

The future always comes knocking in unexpected ways. And sometimes, the best way to prepare is by looking back at where we came from and ensuring we don’t repeat past mistakes.