$ cat post/ssh-key-accepted-/-we-ran-it-on-bare-metal-once-/-the-pipeline-knows.md

ssh key accepted / we ran it on bare metal once / the pipeline knows

Title: Y2K Residue & Apache Benchmarks

It’s been a few months since the Y2K scare faded from daily headlines, but its echoes are still ringing in the halls of tech. The year 2001 started with more than just a fresh calendar page; it brought new challenges and opportunities. As an engineer in the heart of Silicon Valley, I found myself navigating the aftermath of one disaster while preparing for another.

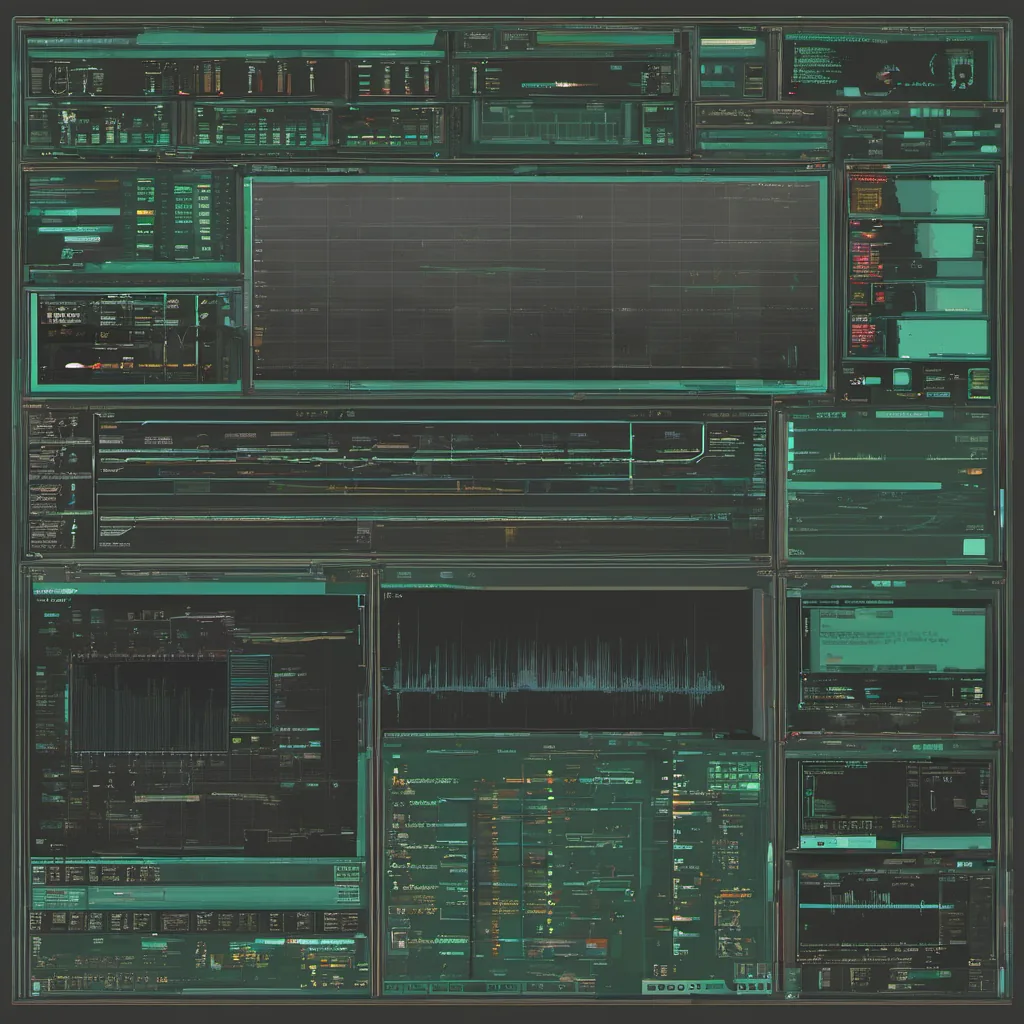

On my desk is a copy of Apache Bench (`ab`), the little tool that helps you stress-test your web servers. It’s simple: type `ab -n 10000 -c 100 <your-site-url>` and watch the numbers roll in. That sends 10,000 requests in total, 100 at a time; note that `ab` wants a full URL including a path, even if the path is just a trailing `/`. I used it daily to fine-tune our server setup, but little did I know that this humble utility would soon become my ally against a more insidious threat.
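If you run `ab` often, it helps to pull the headline numbers out of its report instead of eyeballing them. Here is a minimal Python sketch of that idea, not anything we had at the time. It assumes the summary lines look the way recent `ab` releases print them ("Requests per second", "Failed requests", "Time per request ... (mean)"); the function names `parse_ab_summary` and `run_ab` are my own.

```python
import re
import subprocess

# Summary lines as printed by recent ab releases (assumed format), e.g.
#   Requests per second:    123.45 [#/sec] (mean)
_PATTERNS = {
    "requests_per_second": re.compile(r"^Requests per second:\s+([\d.]+)", re.M),
    "failed_requests": re.compile(r"^Failed requests:\s+(\d+)", re.M),
    # Only the plain "(mean)" line, not "(mean, across all concurrent requests)".
    "mean_ms_per_request": re.compile(
        r"^Time per request:\s+([\d.]+)\s+\[ms\]\s+\(mean\)\s*$", re.M
    ),
}


def parse_ab_summary(output: str) -> dict:
    """Extract the headline metrics from ab's text report as floats."""
    result = {}
    for name, pattern in _PATTERNS.items():
        match = pattern.search(output)
        if match:
            result[name] = float(match.group(1))
    return result


def run_ab(url: str, requests: int = 10000, concurrency: int = 100) -> dict:
    """Run ab against url and return the parsed summary.

    Requires the ab binary on PATH; raises CalledProcessError if ab fails.
    """
    completed = subprocess.run(
        ["ab", "-n", str(requests), "-c", str(concurrency), url],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_ab_summary(completed.stdout)
```

Calling `run_ab("http://localhost/")` would then return a small dictionary you can log or compare between runs. Any metric whose line is missing from the report is simply left out of the result.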

Our company was rapidly scaling, serving millions of users with a web application built on Apache, MySQL, and Perl. The tech stack was robust enough for our needs, but as we grew, so did the demands on the system. We were starting to see some performance issues under load—pages that should have been lightning fast were taking seconds to render. The blame game began: was it the database? Could our app server handle more requests?

One evening, I found myself arguing with a colleague over whether Apache’s process model was causing the slowdowns. “We need to switch to mod_perl for better concurrency!” he argued passionately. I countered that our application code wasn’t optimized and could be made faster without moving it to mod_perl at all. We were both frustrated: I because I felt that every optimization in our own code was being overlooked, he because he saw a clear path forward.

To settle the debate, we decided to use Apache Bench to benchmark various configurations: mod_perl versus plain Perl CGI scripts, different process pool sizes, and even a few other web servers for comparison. We ran endless tests, each time refining our approach, trying to find that elusive performance boost without breaking anything.
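A comparison like this is easier to keep honest if every configuration gets exactly the same set of runs. The sketch below, in Python, just builds the matrix of `ab` command lines; it does not run them. The endpoint URLs, labels, and concurrency levels are hypothetical stand-ins, not the ones we actually used.

```python
from itertools import product

# Hypothetical endpoints standing in for the configurations under test.
TARGETS = {
    "cgi": "http://localhost:8080/cgi-bin/report.pl",
    "mod_perl": "http://localhost:8080/perl/report.pl",
}

# Hypothetical concurrency levels to sweep for each target.
CONCURRENCY_LEVELS = [10, 50, 100]


def build_ab_commands(targets, levels, requests=10000):
    """Return (label, concurrency, argv) for every target/level combination."""
    commands = []
    for (label, url), concurrency in product(targets.items(), levels):
        argv = ["ab", "-n", str(requests), "-c", str(concurrency), url]
        commands.append((label, concurrency, argv))
    return commands


if __name__ == "__main__":
    for label, concurrency, argv in build_ab_commands(TARGETS, CONCURRENCY_LEVELS):
        print(f"{label:>10} c={concurrency:<4} {' '.join(argv)}")
```

Holding the request count fixed and varying only one thing at a time (the endpoint or the concurrency) is the design choice that matters here; otherwise two runs are not really comparable.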

After days of tweaking and re-running benchmarks, we finally hit upon something. It turned out that the culprit wasn’t Apache at all; it was our database queries! Some complex reports were hitting the database in a way that caused significant delays. By rewriting those queries to be more efficient, we saw an immediate improvement in performance. The argument with my colleague? He was partly right: mod_perl would have helped, but only after we fixed the root cause.

That experience taught me that sometimes, the tools and technologies you choose are just the surface. It’s the underlying architecture and optimizations that matter most. We learned to use Apache Bench not just as a performance testing tool, but as a diagnostic instrument. By breaking down our application into smaller components and measuring each piece under load, we could identify bottlenecks quickly and address them effectively.
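The "measure each piece under load" idea can be sketched in a few lines of Python. This is an illustration under assumptions, not our original tooling: the component names are hypothetical, and `time.sleep` stands in for real work such as rendering a page or running a report query.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def timed(func):
    """Call func once and return how long it took, in seconds."""
    start = time.perf_counter()
    func()
    return time.perf_counter() - start


def measure_under_load(components, calls=200, workers=20):
    """Time each component with `workers` concurrent callers.

    Returns {name: {"median_s": ..., "p95_s": ...}}.
    """
    report = {}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for name, func in components.items():
            durations = sorted(pool.map(timed, [func] * calls))
            report[name] = {
                "median_s": statistics.median(durations),
                # Simple nearest-rank style 95th percentile on the sorted list.
                "p95_s": durations[int(0.95 * (len(durations) - 1))],
            }
    return report


def slowest(report):
    """Name of the component with the highest median latency."""
    return max(report, key=lambda name: report[name]["median_s"])


if __name__ == "__main__":
    # Hypothetical stand-ins for real application pieces.
    components = {
        "render_template": lambda: time.sleep(0.001),
        "run_report_query": lambda: time.sleep(0.01),
    }
    report = measure_under_load(components, calls=50, workers=10)
    print("slowest component:", slowest(report))
```

Looking at the 95th percentile alongside the median is deliberate: a component can look fine on average and still be the one that makes some pages take seconds.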

As 2001 progressed, other forces were at play too. Linux was gaining serious ground, especially on servers, and open source projects like Apache continued to mature and dominate server-side web development. VMware was making waves with virtualization, promising better resource management for our servers. And of course, there was the ongoing discussion about IPv6, a topic that still feels relevant today despite its gradual adoption.

Looking back, those early days of 2001 were a mix of chaos and opportunity. We faced Y2K-sized challenges again, only in a subtler form: performance and scalability problems. The tools and technologies we used then, while not perfect, set the stage for what was to come. Apache Bench taught me that sometimes, you just have to roll up your sleeves and dig deep into the code.

And so, as I sit here with my trusty laptop running Apache Bench against an old server (which surprisingly still works), I reflect on those days. Technology moves fast, but the lessons we learn about performance and optimization stay with us.