$ cat post/compile-errors-clear-/-the-monorepo-grew-too-wide-/-config-never-lies.md

compile errors clear / the monorepo grew too wide / config never lies

Title: Debugging Digg’s Dirty Little Secret

November 15, 2004 was just another day when I woke up to the early morning sun streaming through my window. As a platform engineer at a small startup in the heart of San Francisco, I was already deep into my routine of debugging and optimizing our web services infrastructure. Our primary technology stack was LAMP—Linux, Apache, MySQL, and PHP—and we were serving as much traffic as a small social network could muster back then.

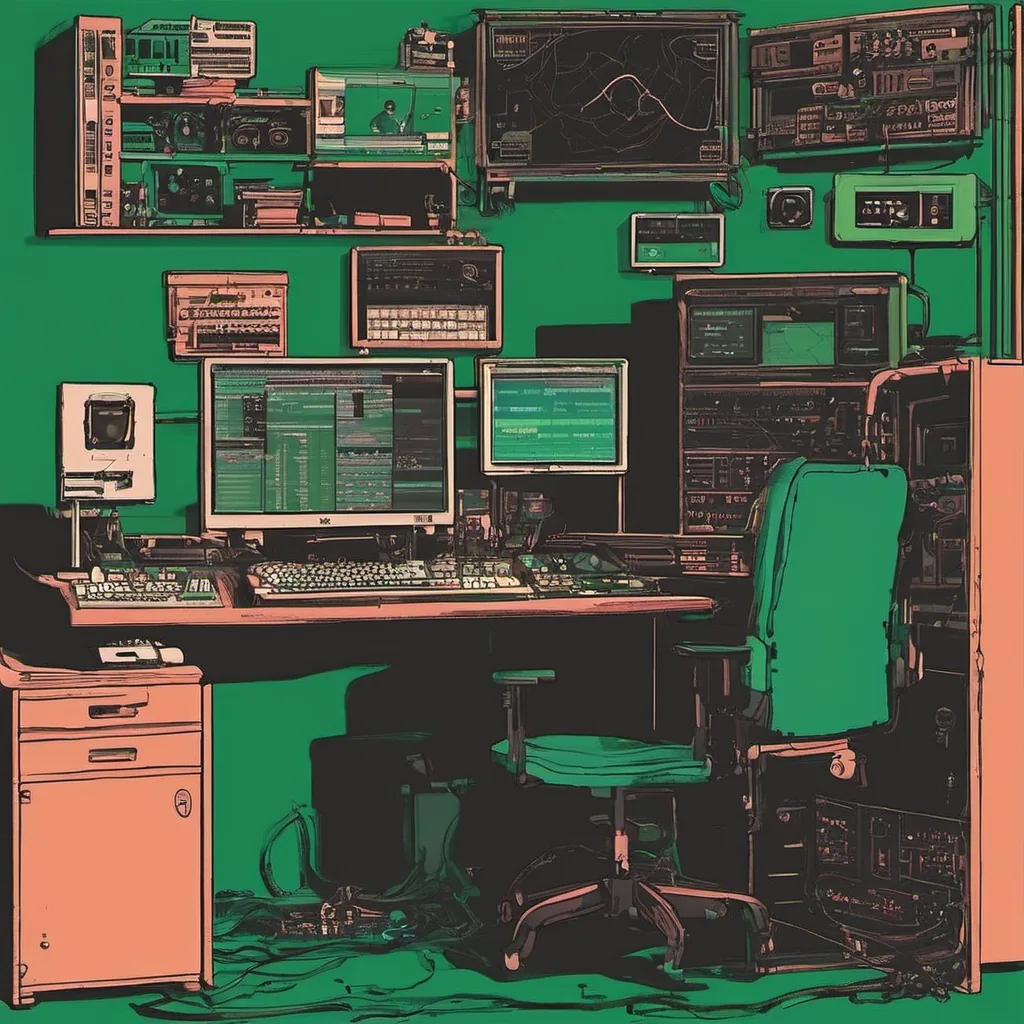

That morning, as I sat at my desk staring at the server logs, something felt off: load times for our user-facing pages had doubled over the past few days. Our application ran on Xen virtual machines with Ubuntu guests, and we used Python scripts to manage many of our backend processes. I pulled up my favorite command-line tools (top, htop, and a custom Perl script I had written to monitor database queries) and started digging.

The first thing that jumped out at me was an unusually high number of MySQL queries during peak hours. Our database had been growing quickly, but now every page request seemed to hit several tables instead of the one or two it actually needed. That pattern didn’t fit our application’s architecture, so my first suspicion was a caching issue.
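One way to check the "every request hits more tables than it should" suspicion is to tally, per request, how many statements ran and which tables they touched. This is a minimal sketch in modern Python 3, not the Perl script mentioned above. It assumes a hypothetical log format with one `<request_id> <SQL>` entry per line and uses a deliberately rough regex for table names that will miss comma joins and subqueries. The `max_tables=2` threshold is an illustrative choice.

```python
import re
from collections import Counter

# Hypothetical log format: one "<request_id> <SQL statement>" entry per line.
LINE_RE = re.compile(r"^(?P<req>\S+)\s+(?P<sql>.+)$")
# Rough heuristic for table names; misses comma joins and subqueries.
TABLE_RE = re.compile(r"\b(?:FROM|JOIN|INTO|UPDATE)\s+`?(\w+)`?", re.IGNORECASE)


def summarize(lines):
    """Return {request_id: (query_count, sorted table names)}."""
    counts = Counter()
    tables = {}
    for line in lines:
        m = LINE_RE.match(line.strip())
        if not m:
            continue  # skip lines that don't fit the assumed format
        req = m.group("req")
        counts[req] += 1
        tables.setdefault(req, set()).update(
            t.lower() for t in TABLE_RE.findall(m.group("sql"))
        )
    return {req: (counts[req], sorted(tables[req])) for req in counts}


def suspicious(summary, max_tables=2):
    """Request ids whose queries touched more than `max_tables` tables."""
    return sorted(req for req, (_, t) in summary.items() if len(t) > max_tables)
```

Flagging requests that touch more than the expected one or two tables turns a vague "this feels slow" into a short list of pages to inspect.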

I spent the next few hours tracing through the codebase to find where the extra queries were coming from. The culprit turned out not to be the cache but our logging framework, which was far less optimized than it should have been: we were logging every single SQL statement, and that overhead was causing an enormous performance hit. The fix seemed obvious: we needed a better logging strategy.

I quickly whipped up a Python script to filter the logged statements by query pattern, keeping only the ones we actually cared about, and re-ran the results through our database monitoring tools. Sure enough, once we stopped logging most of the irrelevant queries, load times and overall system performance improved significantly.
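A pattern-based filter of that kind might look like the sketch below. It is not the original script: it assumes one SQL statement per log line, and the patterns in `KEEP_PATTERNS` (writes and joins) are hypothetical stand-ins for whatever counts as "relevant" in a given application.

```python
import re

# Hypothetical "worth keeping" patterns: writes and multi-table joins.
# Everything else (e.g. routine single-row SELECTs) is dropped.
KEEP_PATTERNS = [
    re.compile(r"^\s*(?:INSERT|UPDATE|DELETE)\b", re.IGNORECASE),
    re.compile(r"\bJOIN\b", re.IGNORECASE),
]


def is_relevant(statement):
    """True if the logged statement matches any KEEP pattern."""
    return any(p.search(statement) for p in KEEP_PATTERNS)


def filter_log(lines):
    """Yield only the logged statements worth keeping (one statement per line)."""
    for line in lines:
        if is_relevant(line):
            yield line
```

Wired to standard input and output (for example `sys.stdout.writelines(filter_log(sys.stdin))`), it can sit in a shell pipeline between the raw log and whatever tool reads it. Keeping the patterns in one list makes it easy to tune what counts as relevant without touching the filtering loop.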

This wasn’t only about performance, though; it was also a chance to rethink our logging strategy going forward. We implemented a more selective logging mechanism: full logging during development, and only critical information in staging and production. That way we still got the data we needed without compromising performance.
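Switching log verbosity by environment can be sketched with Python’s standard `logging` module: each environment maps to a level, and per-statement SQL logging is emitted at `DEBUG`, so it only reaches the log where that environment allows it. The environment names, the `APP_ENV` variable, and the levels in `LEVELS` are hypothetical; this illustrates the mechanism, not the setup we actually shipped.

```python
import logging
import os

# Hypothetical environment-to-level table: every SQL statement in development,
# only critical events in staging and production.
LEVELS = {
    "development": logging.DEBUG,
    "staging": logging.CRITICAL,
    "production": logging.CRITICAL,
}


def configure_sql_logger(env=None):
    """Return the 'sql' logger with a level chosen by environment.

    Falls back to the (hypothetical) APP_ENV variable, then to "production";
    unknown environments get the quiet CRITICAL level.
    """
    env = env or os.environ.get("APP_ENV", "production")
    logger = logging.getLogger("sql")
    logger.setLevel(LEVELS.get(env, logging.CRITICAL))
    return logger


def log_query(logger, sql):
    # Emitted at DEBUG, so this returns early wherever DEBUG is disabled.
    logger.debug("SQL: %s", sql)
```

Passing the statement as a `%s` argument instead of pre-formatting the string means the quieter environments never build the message text for the statements they skip.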

Reflecting on this experience, I realized how much the sysadmin role was evolving. It was no longer just about fixing bugs or setting up servers; it required a deep understanding of both the application code and the infrastructure beneath it. Open-source tools like Xen and Python had made us more agile, but they also demanded that we keep our problem-solving skills sharp.

As I sat back to watch the logs, feeling a sense of accomplishment, I couldn’t help but think about how quickly the tech landscape was changing. Google was hiring aggressively, Firefox 1.0 had just shipped, and Web 2.0 was starting to take shape, with early players like Digg and Reddit just around the corner. The sysadmin role was becoming more strategic, not just tactical.

That day taught me a lot about debugging complex systems and about the importance of a well-thought-out logging strategy. It also showed how quickly the tools we relied on were turning from open-source projects into integral parts of our daily work. And as always, the real test wasn’t the technology itself but how we used it to solve real-world problems.