
Debugging a Y2K Aftermath: A Mysterious Network Glitch

January 15, 2001. I was fresh out of college, the dot-com bubble had just burst, and we were still in Y2K recovery mode. My team at a small tech company was tasked with maintaining our internal network infrastructure—Apache, Sendmail, BIND—a mix that seemed to be holding together well enough until one fateful morning.

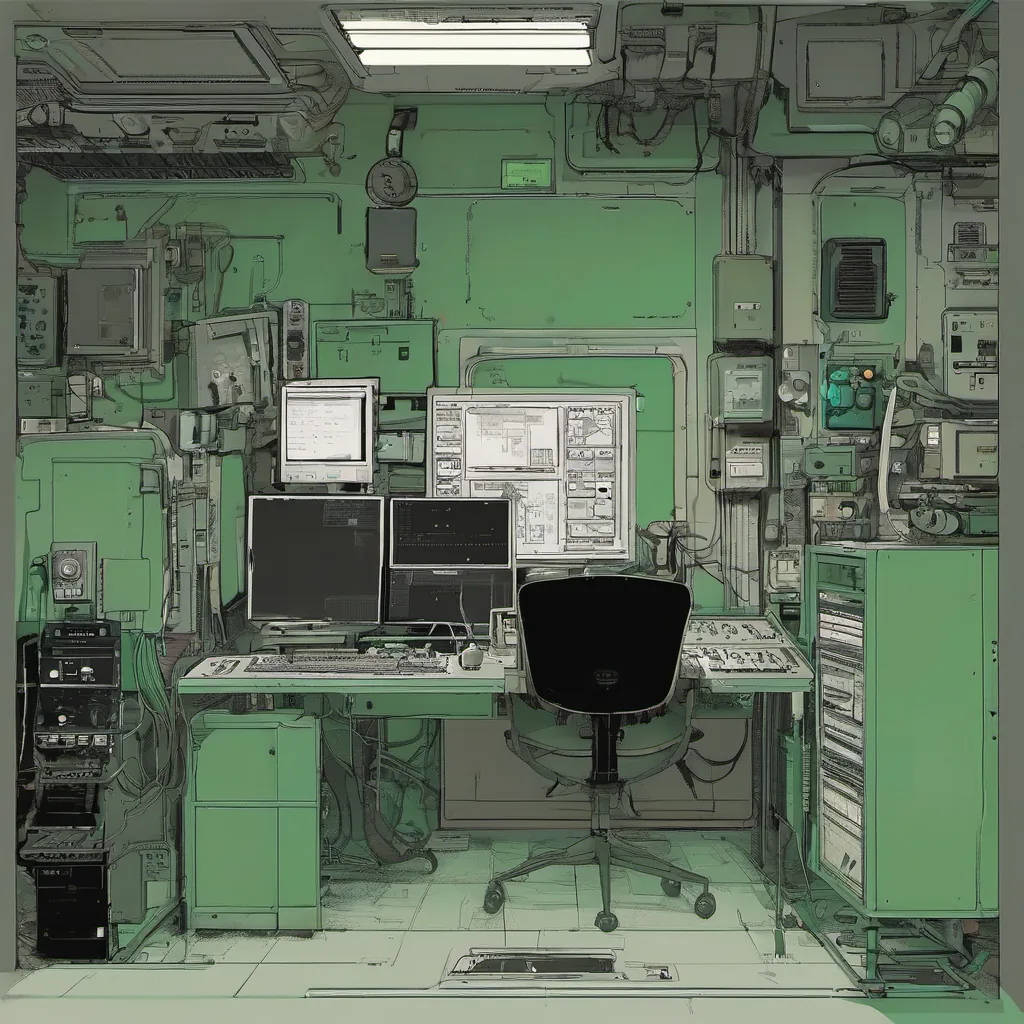

That morning, I walked into the office expecting just another workday. But as soon as I plopped down at my desk and fired up my terminal, something was off. Our network performance had taken a dive. Pages were loading slowly on our internal web applications, emails weren’t being delivered promptly, and DNS resolution times were spiking.

I started with the usual suspects: Apache logs for errors, Sendmail queues to see if anything was stuck, and BIND log files for any strange behavior. Nothing jumped out at me; everything looked normal. I decided to take a broader approach by running some network diagnostics from my laptop, which sat on the same subnet as our web servers.

Ping showed something peculiar: requests would time out for a few seconds at a stretch, then replies would come back fine for a minute or two. The failures were intermittent and erratic. I knew right away that this wasn’t just a slow host; there had to be more going on under the hood.
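A minimal sketch of how gaps like these can be spotted automatically, assuming ping output in the modern Linux iputils reply format (`icmp_seq=N`). The function name `missing_sequences` and the sample address are hypothetical, and other ping implementations print different lines.

```python
import re
from typing import List

# Matches the sequence number in reply lines such as:
# "64 bytes from 10.0.0.5: icmp_seq=3 ttl=64 time=0.41 ms"
SEQ_RE = re.compile(r"icmp_seq=(\d+)")


def missing_sequences(ping_output: str) -> List[int]:
    """Return the icmp_seq numbers between the first and last reply
    that never received a reply."""
    seen = sorted({int(m.group(1)) for m in SEQ_RE.finditer(ping_output)})
    if not seen:
        return []
    expected = set(range(seen[0], seen[-1] + 1))
    return sorted(expected - set(seen))


# Hypothetical sample: replies 3 and 4 were lost.
sample = """\
64 bytes from 10.0.0.5: icmp_seq=1 ttl=64 time=0.41 ms
64 bytes from 10.0.0.5: icmp_seq=2 ttl=64 time=0.39 ms
64 bytes from 10.0.0.5: icmp_seq=5 ttl=64 time=0.44 ms
64 bytes from 10.0.0.5: icmp_seq=6 ttl=64 time=0.40 ms
"""

if __name__ == "__main__":
    print(missing_sequences(sample))
```

Since ping sends one request per second by default, runs of consecutive missing sequence numbers translate directly into the length of each outage in seconds.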

I started capturing traffic between my laptop and our web servers with tcpdump. The output looked normal at first glance, but something caught my eye as I scrolled through it: packets were occasionally being lost. The losses weren’t random, either. They seemed to recur roughly every 30 seconds. This was starting to sound like a routing issue, and that got me thinking about our network topology.
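Eyeballing a capture for a rhythm is error-prone, so here is a rough sketch of how the "every 30 seconds or so" hunch could be checked numerically. It assumes you have already extracted the timestamps (in seconds) at which losses occurred; the function names, the jitter threshold, and the sample values are all made up for illustration.

```python
from statistics import mean, pstdev


def loss_intervals(timestamps):
    """Gaps, in seconds, between consecutive loss events."""
    ts = sorted(timestamps)
    return [later - earlier for earlier, later in zip(ts, ts[1:])]


def looks_periodic(timestamps, max_jitter=0.1):
    """Return True if the gaps between losses are nearly constant.

    max_jitter is the allowed standard deviation of the gaps,
    expressed as a fraction of the mean gap (an arbitrary threshold).
    """
    gaps = loss_intervals(timestamps)
    if len(gaps) < 3:
        return False  # too few events to call it a pattern
    avg = mean(gaps)
    return avg > 0 and pstdev(gaps) / avg <= max_jitter


# Hypothetical loss timestamps, in seconds since the capture started.
periodic = [12.1, 42.0, 72.3, 101.9, 132.2]
random_ish = [3.0, 9.5, 47.0, 51.2, 120.8]

if __name__ == "__main__":
    print(looks_periodic(periodic), round(mean(loss_intervals(periodic))))
    print(looks_periodic(random_ish))
```

A steady interval like this points toward something timer-driven, such as a protocol timer or a scheduled job, rather than congestion or a flaky cable.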

We had recently moved from static routing to dynamic routing with OSPF (Open Shortest Path First) for redundancy. I ran the show ip ospf database command on one of our routers and noticed something strange: the link-state database was being updated frequently, but the actual routes weren’t being recalculated as expected.

I decided to manually trigger a route update by forcing the router to redistribute its routes. This seemed to fix the issue temporarily, but it was just a band-aid that did not address the root cause. I realized we needed to dive deeper into our OSPF configuration and potentially revisit our network design.

This led me on a journey through hours of logging into various routers, running diagnostics, and making small adjustments. I spent days trying to understand why this intermittent glitch was happening. The frustration built up as every fix seemed temporary at best. Yet, in the middle of all this chaos, there was a moment when everything clicked.

It turned out that one of our switches had been configured with an OSPF metric setting that caused it to become less preferable for traffic routing after 30 seconds. This setting was part of an old configuration script from before we switched to dynamic OSPF, and no one had bothered to revisit it in the rush of the upgrade.
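To see why a single metric matters so much, recall that OSPF picks routes by running a shortest-path (Dijkstra) calculation over link costs. The sketch below is a simplified illustration, not our actual topology: the node names (`A`, `S1`, `S2`, `W`) and costs are invented, and links are treated as having the same cost in both directions, whereas real OSPF costs are set per outgoing interface.

```python
import heapq


def make_graph(links):
    """Build an adjacency map from (node_a, node_b, cost) tuples,
    treating each link as symmetric (a simplification)."""
    graph = {}
    for a, b, cost in links:
        graph.setdefault(a, {})[b] = cost
        graph.setdefault(b, {})[a] = cost
    return graph


def shortest_path(graph, source, target):
    """Dijkstra's algorithm: return (total_cost, path) or (None, [])."""
    dist = {source: 0}
    prev = {}
    heap = [(0, source)]
    visited = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in visited:
            continue
        visited.add(node)
        if node == target:
            break
        for neighbor, cost in graph.get(node, {}).items():
            candidate = d + cost
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                prev[neighbor] = node
                heapq.heappush(heap, (candidate, neighbor))
    if target not in dist:
        return None, []
    # Walk the predecessor chain back from the target.
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return dist[target], path[::-1]


# Hypothetical topology: two paths from A to W, via S1 or via S2.
healthy = make_graph([("A", "S1", 10), ("S1", "W", 10),
                      ("A", "S2", 10), ("S2", "W", 20)])
# Same topology, but the A-S1 link now carries an inflated cost.
degraded = make_graph([("A", "S1", 100), ("S1", "W", 10),
                       ("A", "S2", 10), ("S2", "W", 20)])

if __name__ == "__main__":
    print(shortest_path(healthy, "A", "W"))
    print(shortest_path(degraded, "A", "W"))
```

With the inflated cost, the preferred path shifts from S1 to S2. If a cost keeps changing, the best path keeps flipping, and traffic in flight during each recalculation can be dropped.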

I made the necessary adjustment, reconfigured the switch, and watched as the network performance stabilized. The ping timings became consistent, Apache logs showed normal requests, and Sendmail queues started processing emails faster than before.

Reflecting on this experience, I couldn’t help but feel a mix of relief and frustration. The relief came from solving the problem, while the frustration stemmed from realizing how much time could have been saved if someone had simply re-evaluated our configuration scripts in light of the new routing protocol. This episode taught me that no matter how many times you think you’ve covered your bases, there’s always something to revisit and improve.

In our early days with dynamic routing protocols like OSPF, the lessons were hard-earned but valuable. It was a reminder that while technology evolves rapidly, the fundamentals of network design and management don’t change much. Debugging these issues was a humbling experience, but it solidified my understanding of how interconnected all these components are in a functioning network.

That’s what you get when you wake up on January 15th, 2001, and stumble into the mysterious world of network glitch debugging. Sometimes, even after Y2K is behind us, you can still find yourself dealing with unexpected challenges.