$ cat post/the-old-server-hums-/-we-blamed-the-cache-as-always-/-it-ran-in-the-dark.md

the old server hums / we blamed the cache as always / it ran in the dark

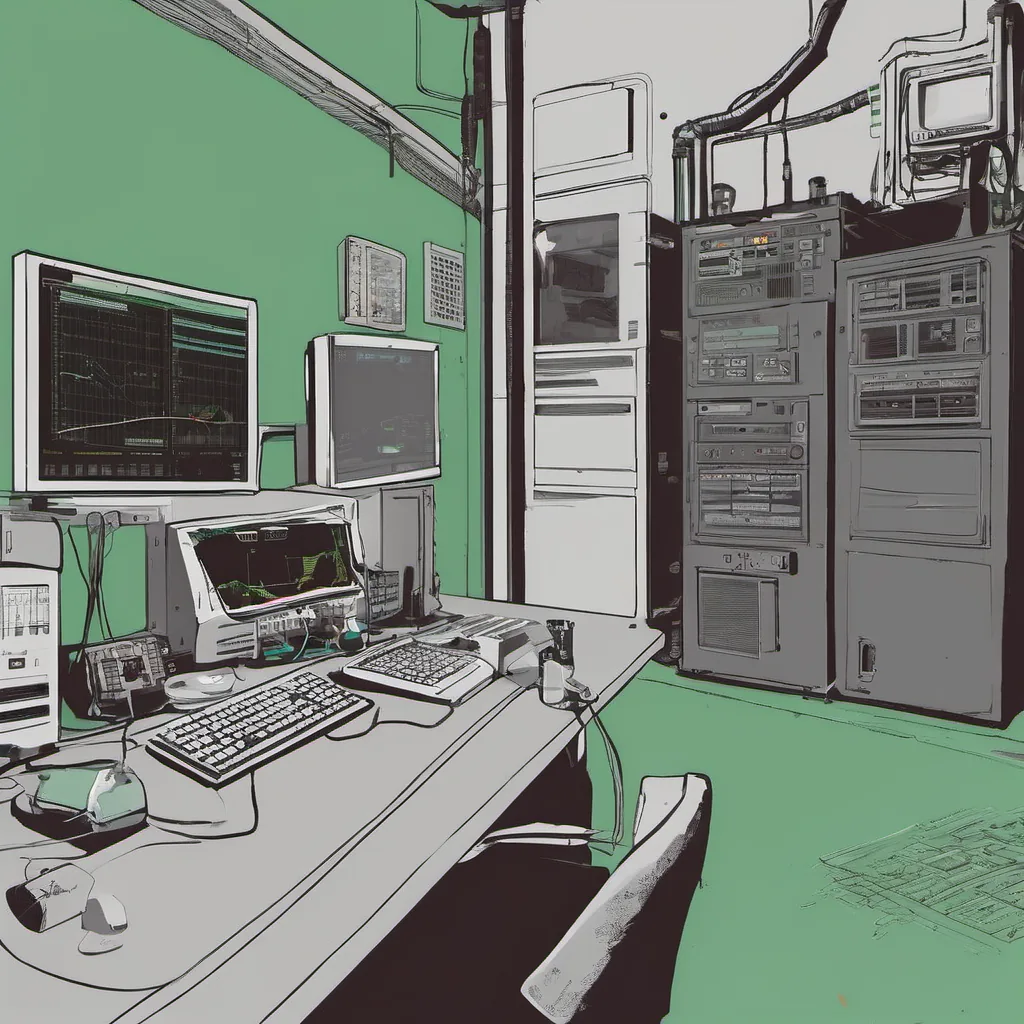

Title: The December 15, 2008 Crunch: A Day in the Life of a DevOps Engineer

December 15, 2008 was a typical Monday for me. I had just gotten back to my desk after a quick lunch in the break room and was trying to catch up on some code reviews when the alarms started going off. “Oh great,” I thought, “another emergency.”

The first alert came from our monitoring system, New Relic: our primary web app was showing an uptick in latency. The next came from Splunk: hundreds of errors rolling in. And as if that weren’t enough, my pager went off with a warning about an overloaded EC2 instance.

It seemed like every service we had running on AWS was under strain at the same time. The economic crash was really hitting home for us, as our client base was cutting back. But still, the infrastructure had to stay up and running.

I quickly logged into one of my servers via SSH, trying to figure out what was going wrong. “Let’s see… top, htop, ps aux.” Nothing jumped out at me. I tried connecting to our database instance but got a timeout error. Hmm, something wasn’t right here.

Deciding it was time for a deeper dive, I switched over to the AWS management console and started checking on my instances. A few of them were indeed overloaded, but not as badly as the alarms suggested. “Wait, are these alarms set correctly?” I wondered aloud. I went back to our monitoring configuration files and found that the thresholds had been tweaked recently, perhaps a little too aggressively.
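To make “too aggressively” concrete, here is a minimal Python sketch. It is not our actual monitoring configuration: the `should_alert` function, the 90% threshold, and the sample readings are all made up for illustration. The idea is that an alarm firing on a single reading above the threshold is far noisier than one that waits for several readings in a row.

```python
from typing import Sequence


def should_alert(samples: Sequence[float], threshold: float, consecutive: int) -> bool:
    """Return True if `consecutive` samples in a row exceed `threshold`.

    Hypothetical helper for illustration; real monitoring tools expose
    similar knobs under their own names.
    """
    if consecutive < 1:
        raise ValueError("consecutive must be at least 1")
    streak = 0
    for value in samples:
        # Extend the streak on a breach, reset it on a healthy reading.
        streak = streak + 1 if value > threshold else 0
        if streak >= consecutive:
            return True
    return False


# Made-up CPU utilisation readings (percent), one per minute.
cpu = [62.0, 91.0, 70.0, 93.0, 68.0, 92.0, 95.0, 71.0]

# Fires on the first brief spike.
aggressive = should_alert(cpu, threshold=90.0, consecutive=1)

# Stays quiet: the load never exceeds 90% for three readings in a row.
relaxed = should_alert(cpu, threshold=90.0, consecutive=3)
```

With the same data, the first rule pages someone and the second does not. Which one is right depends on how much a short spike actually hurts the service.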

I fired off an email to my team: “Attention all ops. We’ve got some issues with latency and errors. Please check your services.” As the minutes ticked by, more alarms started going off, this time for our S3 buckets, which were showing increased read/write times.

Just as I was about to jump into one of our chat channels to discuss potential solutions, my phone rang. It was the client’s support team: “We’re seeing issues with our service.” Great, another PR crisis on top of this.

I quickly fired up a couple of new EC2 instances and started redirecting traffic from the overloaded ones. The process was slow, as we had to carefully balance the load without causing a complete failure. It was like threading a needle under a time crunch.
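The careful part of that rebalancing can be sketched as moving traffic in small steps rather than all at once. The Python below is an illustrative assumption, not the procedure we ran that day: the `shift_traffic` helper, the instance names, and the 25-point step are all hypothetical, and a real rollout would check the health of the new instances between steps.

```python
from typing import Dict, List


def shift_traffic(weights: Dict[str, int], source: str, target: str, step: int) -> Dict[str, int]:
    """Move up to `step` weight points from `source` to `target`.

    Returns a new mapping; the total weight is unchanged.
    """
    moved = min(step, weights[source])
    updated = dict(weights)
    updated[source] -= moved
    updated[target] = updated.get(target, 0) + moved
    return updated


# Hypothetical starting point: all traffic on the overloaded instance.
weights = {"web-old": 100, "web-new": 0}
history: List[Dict[str, int]] = [weights]

while weights["web-old"] > 0:
    weights = shift_traffic(weights, "web-old", "web-new", step=25)
    history.append(weights)
    # In practice: pause here and confirm the new instance is healthy
    # before moving the next slice of traffic.
```

Small steps keep the blast radius limited: if the new instance struggles at 25% of the traffic, only that slice is affected and the shift can be reversed.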

Meanwhile, I couldn’t help but think about everything else happening in tech at the time: GitHub launching, AWS gaining serious traction, the iPhone getting an SDK, and Git adoption spreading. The cloud vs. colo debates raged on as everyone tried to figure out where they fit into this new world.

The economic crash had hit hard, too. Tech hiring seemed to be on a downturn, making the pressure even greater for us ops folks to stay lean and efficient. And here I was, dealing with yet another firefighting session while trying to keep up with the latest tech trends.

After about an hour of intense debugging and some frantic server management, things started calming down. The errors stopped rolling in, and our latency dropped significantly. The alarms went back to normal, but we knew it wasn’t over yet. We’d have to do a post-mortem on this and make sure our monitoring was more accurate.

As I sat there with my pager turned off for the night, reflecting on another day of dealing with unforeseen issues, I couldn’t help but chuckle at how much had changed since I first started in ops back in 2005. Back then, we didn’t have this level of automation and real-time monitoring. We relied heavily on physical servers and manual scaling.

Now, AWS made it easier to manage resources, Git made version control more accessible, and GitHub was changing the way developers collaborated. But even with all these advances, ops still required a lot of hands-on work, even if configuration management tools like Puppet and CFEngine made it more efficient.

As I turned off my computer and left for home, I couldn’t shake the feeling that despite all the technological advancements, we were still dealing with some very old problems. The race was on to build better monitoring systems, faster deployment pipelines, and more robust architectures. But until then, the pager would always ring at odd hours, demanding our attention.

December 15, 2008 felt like any other day in ops, but it was a reminder that no matter how much technology advances, the fundamental challenges of building and maintaining reliable infrastructure never truly go away.