a patch long applied / the repo holds my old mistakes / the cron still fires

Title: Y2K Echoes and a Wobbly Red Hat Server

May 14, 2001. I can still recall the atmosphere of the office that day. The air was thick with a mix of relief and uncertainty. The dot-com bust had left its mark on everyone, but we were all too aware that the internet was here to stay. Linux was making waves, and Apache had become a household name among web developers.

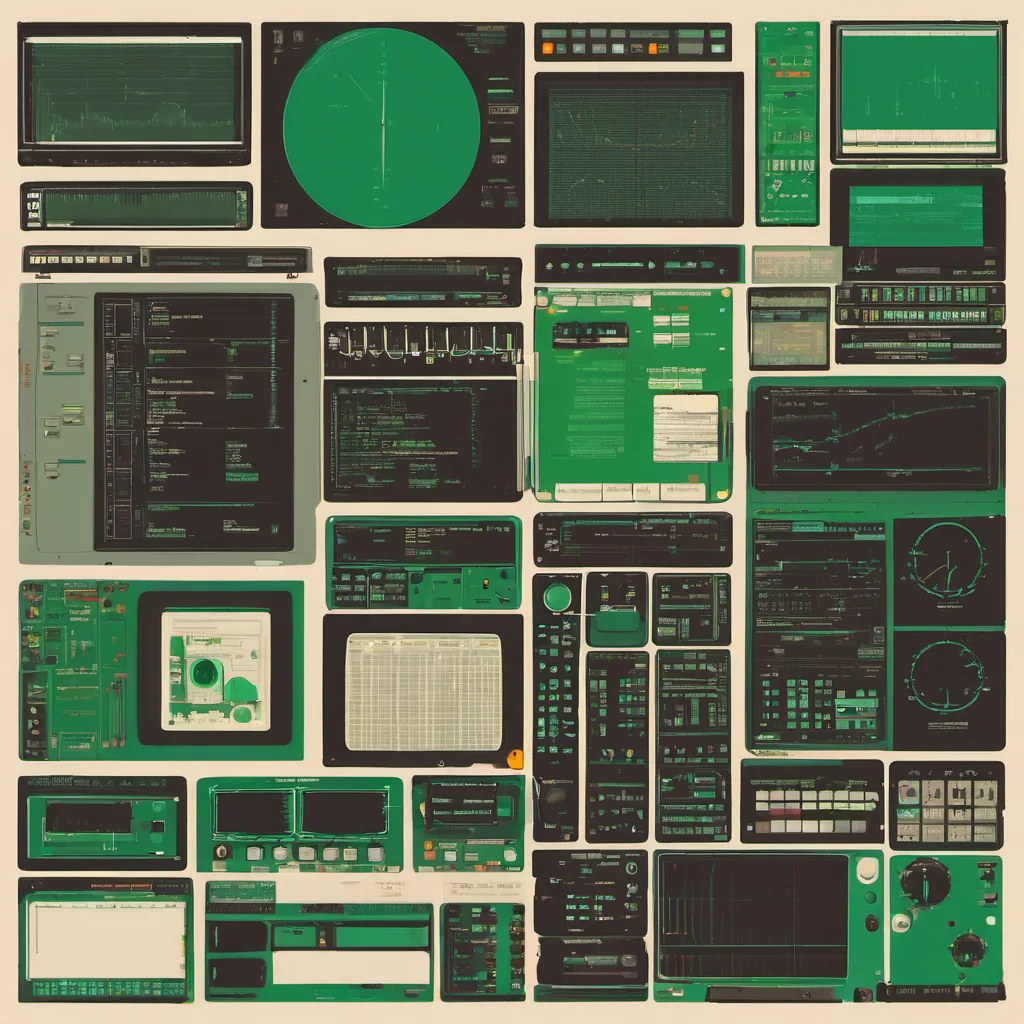

I spent most of my time wrangling servers at a small startup. Our tech stack included Red Hat 6.2 (yes, the version number sticks in my head), Sendmail for email, BIND for DNS, and Apache for serving websites. It was a good mix back then. We were using VMware to run our test environments on top of Windows boxes, which felt like science fiction at the time.

That day, I was sitting in front of a wobbly Red Hat server that had been running non-stop since the previous November. It housed a critical web application that was seeing increased traffic thanks to some content that had gone viral the month before. The load was creeping up, and I could feel the tension building as we prepared for an expected spike in users.

A Day in the Life

The morning started with a nagging feeling about this server. I decided to take a closer look at its health. Opening a terminal session, I typed top to see what was going on under the hood. The CPU utilization was steady at 30%, which is expected for a mid-sized server, but memory usage was at an alarming 95%. Paging through processes with ps aux, I found that Apache was hogging most of the RAM.
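The per-process check described above can be sketched in a few lines of Python. This is an illustration, not a record of the original session: it assumes the usual eleven-column ps aux layout, where RSS is the sixth column and is reported in KiB. The function name rss_by_command and every number in SAMPLE are hypothetical, made up for the example.

```python
from collections import defaultdict


def rss_by_command(ps_output: str) -> dict[str, int]:
    """Sum resident memory (RSS, in KiB) per command name from `ps aux` text."""
    totals: dict[str, int] = defaultdict(int)
    lines = ps_output.strip().splitlines()
    for line in lines[1:]:  # skip the header row
        # COMMAND is the 11th column and may itself contain spaces,
        # so split at most 10 times.
        fields = line.split(None, 10)
        if len(fields) < 11:
            continue  # ignore malformed or truncated rows
        rss_kib = int(fields[5])
        command = fields[10].split()[0]  # drop the arguments
        totals[command] += rss_kib
    return dict(totals)


# Illustrative sample only; these numbers are NOT from the server in the post.
SAMPLE = """\
USER       PID %CPU %MEM   VSZ  RSS TTY      STAT START   TIME COMMAND
root         1  0.0  0.1  1120  480 ?        S    Nov02   0:04 init
apache     812  2.1 11.4 42000 29184 ?       S    09:12   0:31 /usr/sbin/httpd
apache     813  1.9 10.9 41000 27904 ?       S    09:12   0:29 /usr/sbin/httpd
named      640  0.0  0.9  3900 2304 ?        S    Nov02   1:10 named -u named
"""

if __name__ == "__main__":
    ranked = sorted(rss_by_command(SAMPLE).items(), key=lambda kv: -kv[1])
    for command, kib in ranked:
        print(f"{kib:>8} KiB  {command}")
```

Grouping by command rather than by PID is a deliberate choice here: Apache forks many children, and a single child rarely looks alarming on its own.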

I drilled down into the Apache process tree. The culprit turned out to be a poorly written script in our application code. It ran unnecessary database queries on every single page request and never released the data it loaded, so memory leaked over time. Each time a user accessed the site, a little more data stayed in RAM, slowly filling up the available space.

Debugging and Patching

I quickly made some changes to the script, reducing the number of queries per page and optimizing how data was fetched from the database. This brought down the server’s memory usage significantly. But I knew this wasn’t a permanent solution. I needed to write more efficient code and possibly upgrade our infrastructure.

To test the changes, I spun up a new virtual machine using VMware and deployed the patched application there. Everything seemed to be working as expected with no memory leaks. With some careful planning, I scheduled the deployment for after business hours to minimize disruption.

Reflections on the Era

Looking back, it’s fascinating how much has changed since 2001. Back then, we were still dealing with Y2K issues and worrying about whether our systems would handle rollovers correctly. Now, of course, those fears seem quaint compared to today’s cybersecurity challenges.

The tools have evolved dramatically too. We’re no longer limited to command-line tools like top and ps. Today, we have monitoring systems that give real-time insight into system health without anyone needing to log in manually. But the core principles remain: ensure your code is efficient, keep an eye on resource usage, and be prepared for unexpected spikes in traffic.

The Future

As I saved my changes and pushed them live, I couldn’t help but think about where we were headed next. IPv6 discussions were heating up, and cloud computing was starting to gain traction. But for the time being, Red Hat 6.2 was still holding strong.

I’m proud of the work we’ve done so far, but there’s always more to learn and adapt to. The tech landscape may have changed, but the core practices of ensuring system stability and performance remain as crucial as ever.
