Debugging the Magic in Production

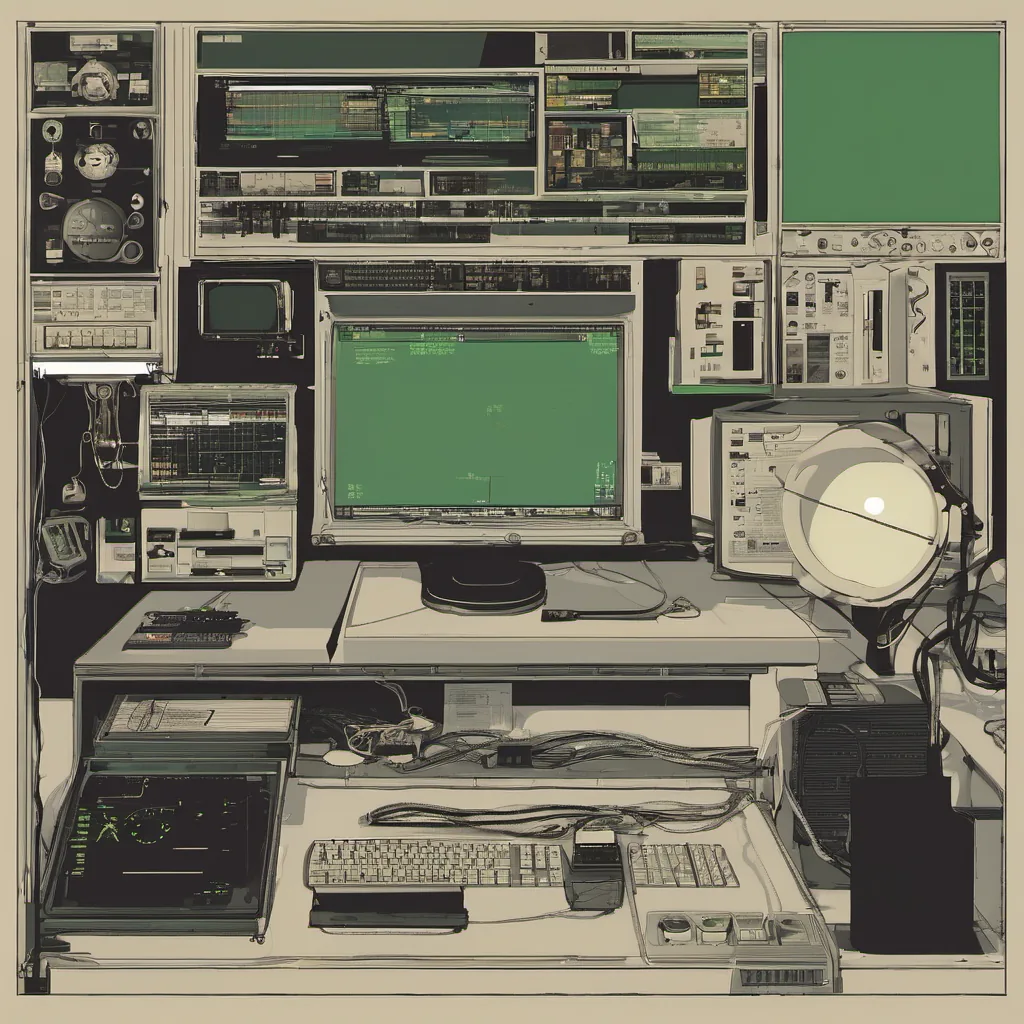

July 14, 2025. It’s been a week since I sat down at my desk and pulled up the dashboard for our AI-native platform. Today, as usual, the dashboard showed everything green: no alarms, no errors, just the sea of calm you would expect from a platform this mature. But here’s the funny part: the platform itself was anything but calm.

Last night, we deployed a new version of one of our copilot tools. The plan was straightforward: add more robust error handling to catch those pesky edge cases where LLMs go haywire. Simple enough in theory, right?

Wrong.

Sure, we managed to deploy the changes without any immediate crashes or outages. But an unexpected issue slipped through the cracks: one of our production environments started to slowly degrade. The copilot’s performance wasn’t just subpar; it was downright sluggish. Requests were timing out more frequently, and responses were lagging.

I found myself staring at the logs late into the night, trying to pin down a problem that refused to sit still. I spent the first few hours with the usual suspects: kubectl commands, ps aux, and good old curl. But nothing seemed out of place. It felt like searching for a needle in a haystack where every piece of hay was green.

Then it hit me: eBPF. The eBPF tools we’ve been using to monitor our applications are known for their precision and minimal overhead. Maybe they could get me closer to the root cause than any kubectl command could. So I dove into kernel-level tracing, following the calls that were happening in the background.

What I found was both frustrating and enlightening. The copilot’s performance issues weren’t coming from a single misbehaving line of code or even a bug in the LLM itself. It turned out to be a subtle race condition in how we were handling context switching between tasks. With so much going on under the hood, it was easy for small delays to accumulate and snowball into noticeable performance degradation.
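I can’t share the real code, so here is a minimal sketch of the general shape of this kind of bug, not our actual implementation. It assumes an asyncio-style scheduler, and every name in it (unsafe_update, safe_update, run_tasks, the "handled" counter) is hypothetical. Each task reads shared state, gives up control at a task switch, and then writes back a stale value, so updates made by other tasks in between are silently lost.

```python
import asyncio


async def unsafe_update(state):
    # Read shared state, then give up control before writing it back.
    snapshot = state["handled"]
    await asyncio.sleep(0)  # task switch: other tasks run here
    state["handled"] = snapshot + 1  # overwrites updates made in the meantime


async def safe_update(state, lock):
    # Same logic, but the read-switch-write sequence is serialized by a lock.
    async with lock:
        snapshot = state["handled"]
        await asyncio.sleep(0)
        state["handled"] = snapshot + 1


async def run_tasks(n, safe):
    # Hypothetical harness: run n concurrent updates and report the final count.
    state = {"handled": 0}
    lock = asyncio.Lock()
    if safe:
        jobs = [safe_update(state, lock) for _ in range(n)]
    else:
        jobs = [unsafe_update(state) for _ in range(n)]
    await asyncio.gather(*jobs)
    return state["handled"]


if __name__ == "__main__":
    n = 1000
    print("unsafe:", asyncio.run(run_tasks(n, safe=False)), "of", n)
    print("safe:  ", asyncio.run(run_tasks(n, safe=True)), "of", n)
```

The unsafe version finishes with fewer recorded updates than tasks, while the locked version records all of them. Nothing crashes and nothing logs an error in either case, which is roughly why this class of problem can hide behind a green dashboard.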

Debugging this wasn’t just about finding a fix; it was also about understanding how our AI-native platform behaves at scale. We had to re-evaluate some of the assumptions we made during design. The fact that every piece of an AI system works perfectly in isolation can be deceiving when thousands of tasks are running concurrently.

As I sat there, tweaking and retesting with eBPF, it occurred to me how far we’ve come since the early days of this platform. We started with simple scripts and bash commands, then moved on to Kubernetes and containers. Now, everything is so intertwined with AI that debugging feels like solving a complex puzzle where each piece moves in real time.

By 3 AM, I had a fix ready. It wasn’t just a patch; it was an understanding of how our system operates under the hood. The next day, as I watched the production dashboard turn green again, I felt a mix of relief and pride. We’ve come a long way, but we’re still just a team of engineers trying to make sense of this ever-changing landscape.

Debugging the magic in production isn’t about finding that one bug; it’s about understanding the complexity of the systems we build. It’s about learning from our failures and using those lessons to push the boundaries even further. In 2025, AI-native tools are everywhere, but they’re only as good as the people who keep them running smoothly.

That’s my day in the life of a platform engineer on July 14, 2025. Here’s to more adventures with eBPF and copilots!