Kubernetes Wars: A Battle I’ve Fought—and Lost

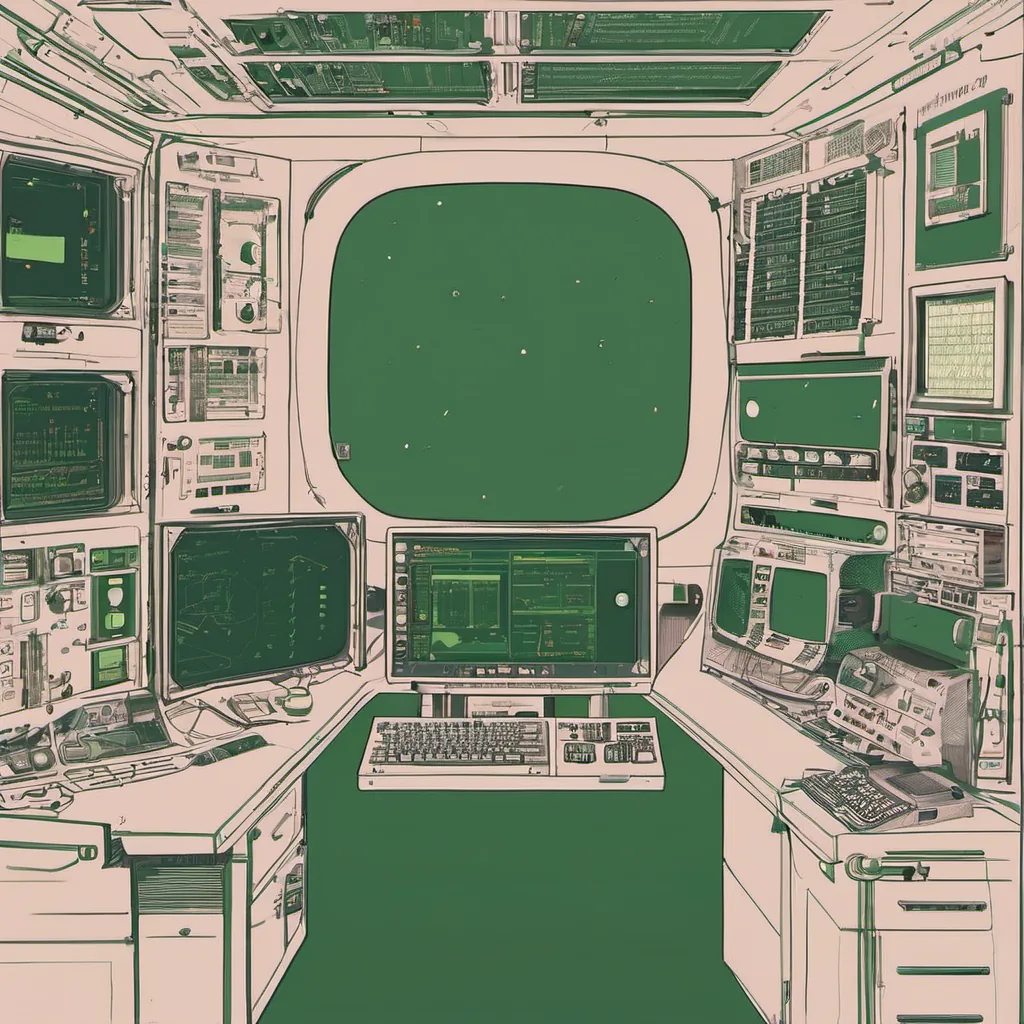

November 13th, 2017. It’s another cold morning as I sit in my home office, sipping a weak cup of coffee and staring at the screen showing our microservices cluster running on Kubernetes. I can feel the tension building. The “Kubernetes wars” have raged for months now, and it feels like we’re one battle away from being overrun.

Let’s take a step back. A few months ago, our team decided to make the leap from Docker Swarm to Kubernetes. We were excited about all the features—orchestration, automatic scaling, self-healing services—but also wary of the complexity that comes with it. After months of hard work, we had a working cluster up and running. However, as we started to move our applications over, things began to unravel.

First, there was resource management. Our application pods were crashing left and right because their memory limits were too tight. We spent days tweaking and fine-tuning, only to find that no single adjustment held. The system’s behavior seemed to shift with network latency and other factors we couldn’t predict.
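As a rough illustration of the kind of tuning involved, here is a minimal Python sketch that derives a memory request and limit from observed peak usage. The heuristic (request equals the highest observed peak, limit adds a fixed headroom), the function name `suggest_memory_resources`, and the sample numbers are all assumptions made for this example, not what we actually ran. Only the `requests`/`limits` layout and the `Mi` suffix follow the Kubernetes container `resources` field.

```python
import math


def suggest_memory_resources(peak_samples_mib, headroom=0.25):
    """Suggest a memory request and limit from observed peaks (in MiB).

    Hypothetical heuristic: the request is the highest observed peak and
    the limit adds a fractional headroom on top of it.
    """
    if not peak_samples_mib:
        raise ValueError("need at least one usage sample")
    peak = max(peak_samples_mib)
    request_mib = math.ceil(peak)
    limit_mib = math.ceil(peak * (1 + headroom))
    # Same shape as the `resources` section of a container spec.
    return {
        "requests": {"memory": f"{request_mib}Mi"},
        "limits": {"memory": f"{limit_mib}Mi"},
    }


# Made-up peak readings for one pod, in MiB.
resources = suggest_memory_resources([310, 342, 401, 388])
print(resources)
```

A fixed headroom like this only helps if the samples cover the workload’s worst case, which was exactly what we struggled to pin down.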

Then came the Kubernetes networking nightmare. Service discovery and routing became an absolute headache. We tried troubleshooting with kubectl alone, but it was like debugging a complex web application with nothing but command-line tools. Enter Istio, which promised to simplify things with a service mesh. But even with Istio, we found ourselves wrestling with sidecars and custom configurations just to get basic functionality working.

On top of all this, Helm started gaining traction. It was supposed to make deploying applications easier by packaging up Kubernetes manifests into charts. However, our first attempts at using Helm resulted in more problems than solutions. The learning curve was steep, and the community support was still lacking compared to Docker Swarm’s ecosystem.

One day, while trying to debug a service that had suddenly started behaving erratically, I stumbled upon an error message that read: “Unable to create pod: timeout”. After hours of digging through logs and configurations, we finally traced it back to a network issue. It turned out that our internal DNS server was misconfigured, causing delays in resolving service names. Fixing this took us days, and by then, the frustration level had reached its peak.
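A quick way to spot this kind of problem is to time name lookups directly. The sketch below is a minimal Python example under stated assumptions: the helper names `time_lookups` and `slow_lookups` and the 0.5 second threshold are invented for illustration, and `localhost` stands in for real service names. It relies only on the standard library’s `socket.getaddrinfo`.

```python
import socket
import time


def time_lookups(hostnames, resolve=socket.getaddrinfo, port=80):
    """Return {hostname: seconds}, or None where the lookup failed."""
    results = {}
    for name in hostnames:
        start = time.perf_counter()
        try:
            resolve(name, port)
            results[name] = time.perf_counter() - start
        except OSError:  # socket.gaierror is a subclass of OSError
            results[name] = None
    return results


def slow_lookups(timings, threshold_s=0.5):
    """Names whose lookup failed or took longer than the threshold."""
    return sorted(
        name for name, t in timings.items()
        if t is None or t > threshold_s
    )


if __name__ == "__main__":
    # Replace "localhost" with the service names you want to check.
    timings = time_lookups(["localhost"])
    for name, seconds in timings.items():
        status = "failed" if seconds is None else f"{seconds:.3f}s"
        print(f"{name}: {status}")
    print("slow or failing:", slow_lookups(timings))
```

Run from inside a pod, a check like this would show whether resolution itself is slow before anyone starts digging through application logs.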

I remember arguing with some team members who still defended Docker Swarm as “easier” despite its known issues. They pointed to its simplicity and the stability of our current setup. But I couldn’t help feeling that we were fighting a losing battle. The momentum was shifting towards Kubernetes, and it looked like we would have to adapt or fall behind.

In retrospect, I realize how naive some of these choices might seem today. Kubernetes has evolved significantly since 2017, with better documentation, more robust tools, and a larger community backing it up. But at the time, we were just trying to survive each day’s challenges.

Looking back, I think what hurt most wasn’t losing this battle but feeling like we had to fight it in the first place. The hype around Kubernetes was real (every tech blog talked about it), but few seemed to acknowledge the complexity and instability we were struggling with.

As I sit here now, looking at my cluster still running but knowing we’ll probably need to overhaul it soon, I can’t help but feel a mix of pride, frustration, and resignation. Pride in the hard work we put in, frustration at the endless debugging sessions, and resignation to the fact that some battles are tougher than they look.

And so, here’s to the Kubernetes wars, fought and lost, but hopefully learned from. The journey continues, and I’ll be ready for whatever comes next.