$ cat post/first-loop-i-ever-wrote-/-we-named-the-server-badly-then-/-i-wrote-the-postmortem.md

first loop I ever wrote / we named the server badly then / I wrote the postmortem

Debugging the MySQL Slump

March 13, 2006

Alright, let’s get to it. It’s been a while since I’ve written anything, and today feels like the perfect day. The team is quiet, the server logs are a bit noisy but not alarming, and the coffee’s just right.

A Bit of Context

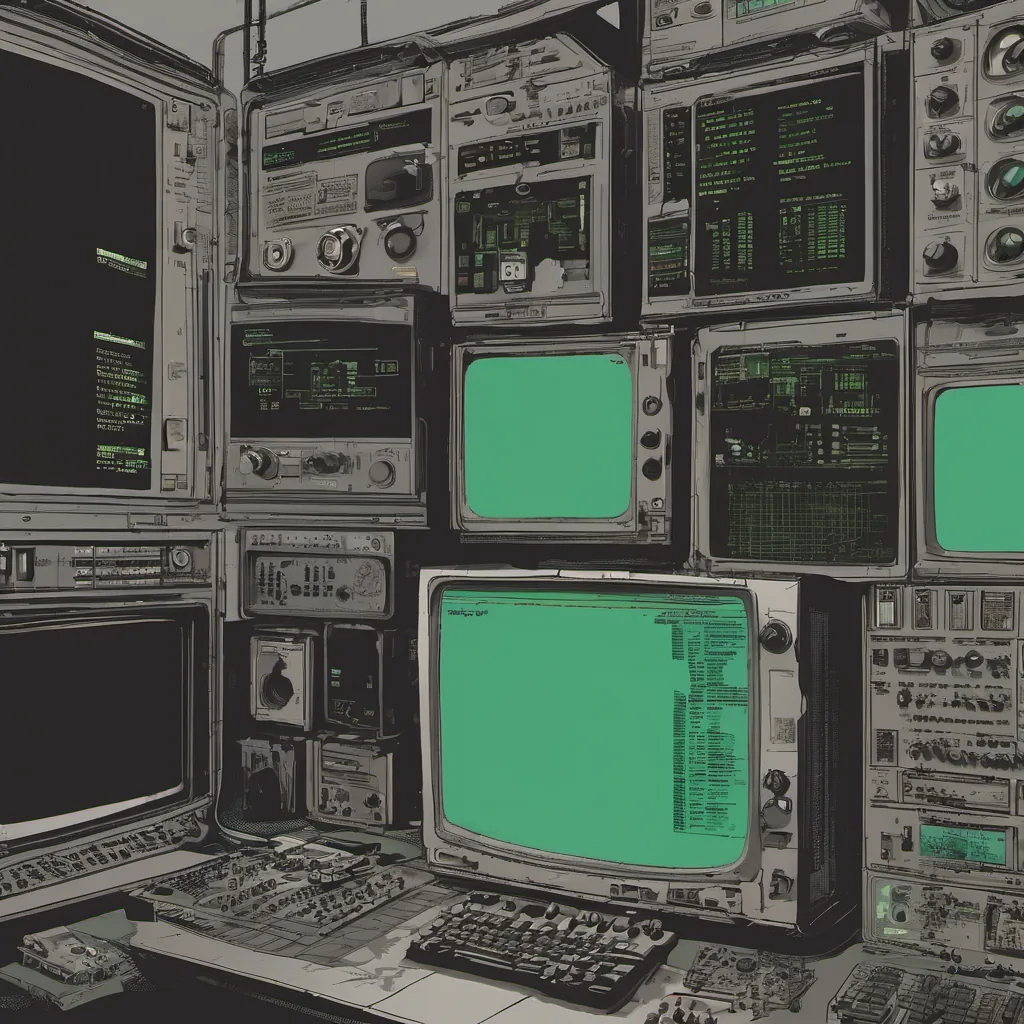

In 2006, open-source stacks were all the rage, and ours was no exception. We were running on LAMP: Linux, Apache, MySQL, and PHP. Oh, and Perl for some oddball scripts we hadn’t quite outgrown yet. Xen hypervisors were starting to show up in labs, but they were still a bit of an experiment for us.

The Problem

Today was the day I finally got a handle on that pesky issue that had been bugging our database servers. We’d been seeing some weird behavior lately: certain queries would take an eternity to execute, and writes were getting delayed. It wasn’t reliably reproducible either, which made debugging a challenge.

The Symptoms

Let’s be clear: it wasn’t just one query causing the problem; we had a whole set of them. MySQL helps here: the slow query log shows which statements are dragging, and EXPLAIN shows how the server plans to execute each one. Even so, figuring out why these queries were taking so long took some digging.

The Investigation

I started by looking at the queries themselves. They weren’t overly complex, just simple joins on a few tables. But there was something fishy going on. I used SHOW FULL PROCESSLIST; to see what was running and noticed that the writes seemed to be piling up behind these reads.
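To make the "writes piling up behind reads" pattern concrete, here is a minimal Python sketch that scans rows shaped like SHOW FULL PROCESSLIST output. The sample rows, table names, and helper name are all made up for illustration. It also assumes table-level locking, where a waiting statement shows the state "Locked"; the post doesn't say which storage engine was in use.

```python
# Hypothetical rows shaped like SHOW FULL PROCESSLIST output.
# The values are invented for illustration, not taken from the real servers.
SAMPLE_PROCESSLIST = [
    {"Id": 101, "Command": "Query", "Time": 42, "State": "Sending data",
     "Info": "SELECT o.id, c.name FROM orders o "
             "JOIN customers c ON c.id = o.customer_id"},
    {"Id": 102, "Command": "Query", "Time": 40, "State": "Locked",
     "Info": "UPDATE orders SET status = 'shipped' WHERE id = 7"},
    {"Id": 103, "Command": "Query", "Time": 38, "State": "Locked",
     "Info": "INSERT INTO orders (customer_id) VALUES (3)"},
    {"Id": 104, "Command": "Sleep", "Time": 5, "State": "", "Info": None},
]

WRITE_VERBS = ("INSERT", "UPDATE", "DELETE", "REPLACE")


def blocked_writes(rows):
    """Return write statements that are waiting on a table lock.

    Assumes the "Locked" state used with table-level locks.
    """
    blocked = []
    for row in rows:
        # Info is NULL (None) for idle connections, so guard against it.
        statement = (row["Info"] or "").lstrip().upper()
        if row["State"] == "Locked" and statement.startswith(WRITE_VERBS):
            blocked.append(row)
    return blocked


for row in blocked_writes(SAMPLE_PROCESSLIST):
    print(row["Id"], row["Time"], row["Info"])
```

If the blocked writes have Time values just under that of a long-running SELECT on the same table, that is a decent hint the read is the one holding things up.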

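Then it hit me: caching! We were using MySQL’s query cache, but we hadn’t configured it properly. The settings we were running with were way too aggressive for our needs. Every write to a table throws away all cached results for that table, so on busy tables an oversized cache mostly adds churn. In a moment of self-deprecation, I thought, “How did this slip through?”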
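Why can a query cache hurt on a write-heavy table? MySQL's query cache drops every cached result that reads a table as soon as that table is written to. The toy model below imitates only that rule. It is a sketch for intuition, not MySQL's implementation, and the class, table, and query names are invented.

```python
class ToyQueryCache:
    """Toy model: results keyed by exact query text, evicted on table writes."""

    def __init__(self):
        self.results = {}     # query text -> cached result
        self.tables = {}      # query text -> set of tables the query reads
        self.hits = 0
        self.misses = 0
        self.invalidated = 0

    def select(self, query, tables, run):
        """Serve from cache if possible, otherwise run the query and store it."""
        if query in self.results:
            self.hits += 1
            return self.results[query]
        self.misses += 1
        result = run()
        self.results[query] = result
        self.tables[query] = set(tables)
        return result

    def write(self, table):
        """A write to `table` evicts every cached query that reads it."""
        stale = [q for q, read in self.tables.items() if table in read]
        for q in stale:
            del self.results[q]
            del self.tables[q]
        self.invalidated += len(stale)


cache = ToyQueryCache()
for _ in range(5):
    cache.select("SELECT COUNT(*) FROM orders", ["orders"], run=lambda: 0)
    cache.select("SELECT name FROM customers", ["customers"], run=lambda: [])
    cache.write("orders")   # hot table: written after every read

print(cache.hits, cache.misses, cache.invalidated)
```

In this run the query against the rarely written customers table is served from cache after its first execution, while the query against orders never gets a single hit because each write evicts it first.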

The Solution

After some quick math and tweaking of query_cache_size and query_cache_limit, things started to look better. But it wasn’t just about setting those variables; the cache only helps while its entries stay valid, so the queries underneath had to be fast on their own. Indexes had to be in place, and queries needed to avoid doing full table scans.
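The post doesn't spell out the "quick math", so what follows is one common way to do it rather than the exact calculation used that day. It works from the counters returned by SHOW STATUS: Qcache_hits counts SELECTs served from the cache, Com_select counts SELECTs that actually executed, and Qcache_lowmem_prunes counts entries evicted because the cache ran out of memory. The sample numbers and function names are invented.

```python
def query_cache_hit_ratio(status):
    """Fraction of SELECTs answered from the query cache.

    Cached SELECTs bump Qcache_hits but not Com_select, so the two
    counters together give the total number of SELECTs seen.
    """
    hits = status["Qcache_hits"]
    total = hits + status["Com_select"]
    return hits / total if total else 0.0


def prunes_per_insert(status):
    """Entries evicted for lack of memory, per entry inserted into the cache."""
    inserts = status["Qcache_inserts"]
    return status["Qcache_lowmem_prunes"] / inserts if inserts else 0.0


# Invented counter values, for illustration only.
sample_status = {
    "Qcache_hits": 1200,
    "Com_select": 4800,
    "Qcache_inserts": 4000,
    "Qcache_lowmem_prunes": 1000,
}

print(f"hit ratio: {query_cache_hit_ratio(sample_status):.0%}")
print(f"prunes per insert: {prunes_per_insert(sample_status):.2f}")
```

A low hit ratio suggests the cache is not earning its keep, and a high prune rate suggests entries are being pushed out before they can be reused. Either reading is a cue to revisit query_cache_size and query_cache_limit, though the right thresholds depend on the workload.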

I spent a few hours making changes and watching the server closely. It felt like being a detective trying to solve a mystery. Eventually, the logs started to show more consistent query times, and writes were no longer getting delayed.

The Aftermath

Debugging this issue was a good reminder of why it’s important to have solid monitoring in place. Without proper logging and alerting, these issues can linger for far too long. I also realized that while open-source tools are powerful, they require an understanding of their inner workings to be used effectively.

The Reflection

Looking back at this era, the web was still finding its footing: Web 2.0 was the buzzword, and Digg and Reddit were starting to make waves. It’s crazy how much has changed since then. But for now, I’m happy that our database performance issue is under control. Tomorrow’s another day, and there will always be more to learn.

So, that’s my two cents from a quiet day in the data center. Debugging can sometimes feel like a never-ending cycle, but it’s also incredibly rewarding when you get that satisfying “aha!” moment. Here’s to more of those moments ahead!