$ cat post/a-merge-conflict-stays-/-the-segfault-taught-me-the-most-/-i-strace-the-memory.md

a merge conflict stays / the segfault taught me the most / I strace the memory

Title: Docker Fever Meets Microservices: A Manager’s Perspective

July 13, 2015, is a day I still remember vividly. The tech world was abuzz with the promise of Docker and microservices, and as an engineering manager, I found myself in the thick of it. Every water-cooler conversation at work seemed to turn to containers and DevOps.

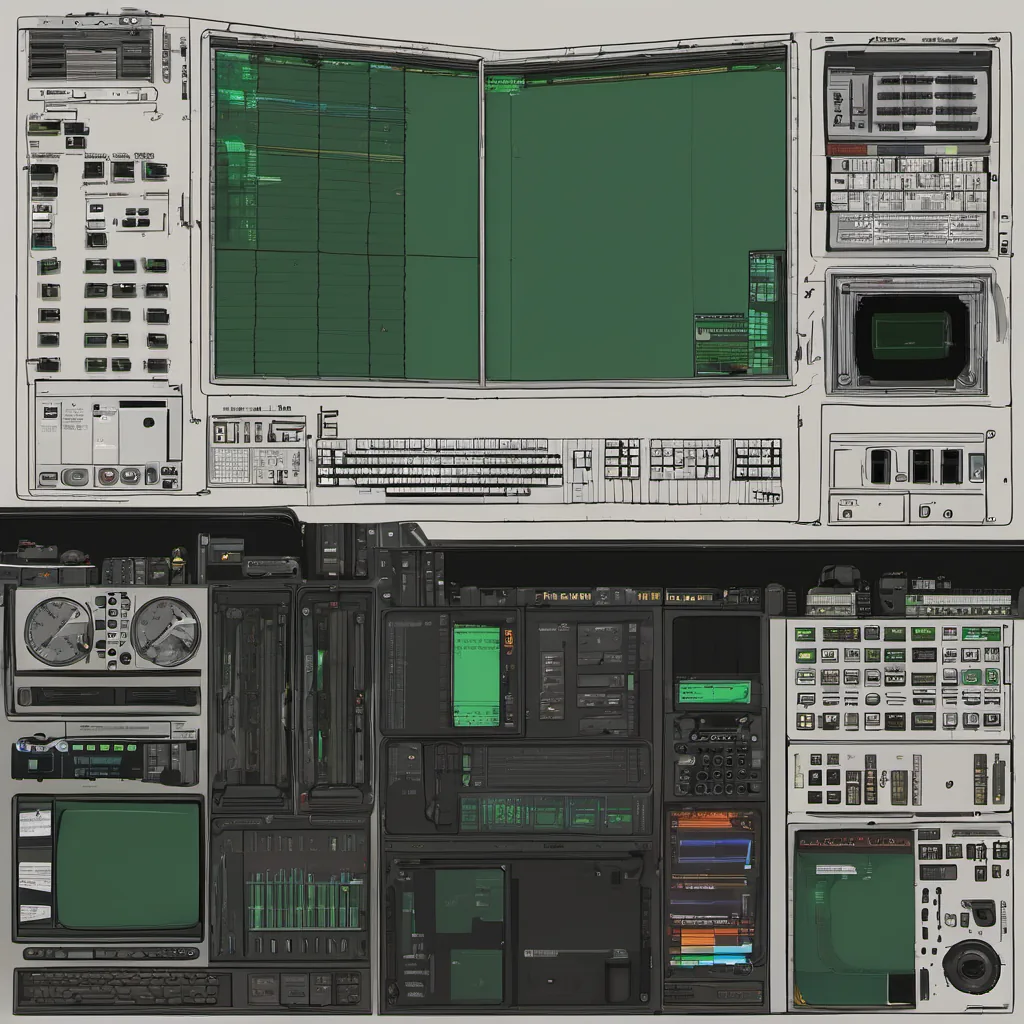

I had just wrapped up a two-day sprint in which we tried to split our monolithic application into smaller, more manageable services using Docker and Kubernetes. The goal was straightforward: each piece should scale independently and be updatable without taking the whole system down. But as I sat at my desk, staring at a wall of monitors showing containers running in a sea of green, I couldn’t help but feel a bit overwhelmed.

The excitement around Docker was palpable, especially after Google announced Kubernetes. The buzz was like nothing I had seen before: every developer seemed to be talking about it, and everyone wanted to jump on board. But as the manager responsible for this transition, I found myself grappling with the realities of making such a change in an established system.

One specific issue popped up when we started deploying our services: container image size. We were running Node.js and Python applications, which meant that each image had to carry its own runtime environment. This bloat was eating into disk space and increasing the time it took to pull images and start containers. It wasn’t a dealbreaker, but I knew we needed a solution.

After some late-night discussions with my team, we decided to use Alpine Linux as our base image. It’s a small-footprint Linux distribution built on musl libc and BusyBox rather than glibc and the GNU userland, which significantly reduced the overall size of each image. This change alone saved us gigabytes of disk space and improved startup times, and it made a noticeable difference in how our application behaved.
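One way to put a number on that kind of saving is to total the image sizes before and after the switch. The sketch below is not something we ran at the time. It assumes the listing comes from `docker images --format '{{.Repository}}:{{.Tag}}\t{{.Size}}'`, and that sizes are printed with decimal suffixes (`B`, `kB`, `MB`, `GB`, `TB`). The helper names `parse_size` and `total_bytes` are made up for illustration.

```python
import re

# Decimal multipliers for the size suffixes Docker is assumed to print.
_UNITS = {"B": 1, "KB": 10**3, "MB": 10**6, "GB": 10**9, "TB": 10**12}


def parse_size(text):
    """Convert a human-readable size such as '5.57MB' into a byte count."""
    match = re.fullmatch(r"\s*([\d.]+)\s*([A-Za-z]+)\s*", text)
    if match is None:
        raise ValueError(f"unrecognized size: {text!r}")
    number, unit = match.groups()
    multiplier = _UNITS.get(unit.upper())
    if multiplier is None:
        raise ValueError(f"unknown unit in size: {text!r}")
    # round() avoids off-by-one results from floating-point multiplication.
    return round(float(number) * multiplier)


def total_bytes(listing):
    """Sum the sizes in a listing of 'name<TAB>size' lines."""
    total = 0
    for line in listing.strip().splitlines():
        _name, size = line.rsplit("\t", 1)
        total += parse_size(size)
    return total
```

Capturing the listing once before and once after rebuilding on the smaller base image, then subtracting the two totals, gives a rough figure for disk space reclaimed. It counts shared layers more than once, so treat it as an upper bound rather than an exact measurement.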

As we moved forward with Kubernetes, I found myself frequently debating the value proposition of microservices. While the promise of scalability was exciting, there were also concerns about complexity. We needed to ensure that each service could be tested and deployed independently without affecting the others. The learning curve for both engineers and operations staff was steep but necessary.

One particular incident stands out in my mind. A minor configuration change led to a cascading failure, where one of our microservices started sending incorrect data to another. It took hours to track down the issue and fix it, and it taught us how much robust testing and logging matter when services depend on each other’s output.
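In hindsight, a check at the service boundary would have surfaced that kind of bad data much sooner. What follows is a minimal sketch of the idea, not the code we actually ran. The contract fields (`order_id`, `amount_cents`, `currency`), the logger name, and the function names are all hypothetical.

```python
import logging

logger = logging.getLogger("orders.consumer")  # hypothetical service name

# Hypothetical contract between two services: field name -> required type.
ORDER_CONTRACT = {"order_id": str, "amount_cents": int, "currency": str}


def contract_violations(payload, contract=ORDER_CONTRACT):
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
            continue
        value = payload[field]
        # Exact type match, so that True/False is not accepted as an int.
        if type(value) is not expected:
            problems.append(
                f"{field}: expected {expected.__name__}, got {type(value).__name__}"
            )
    return problems


def accept(payload, source):
    """Log and reject payloads that break the contract."""
    problems = contract_violations(payload)
    if problems:
        logger.error("rejected payload from %s: %s", source, "; ".join(problems))
        return False
    return True
```

Rejecting and logging at the receiving service means a bad configuration upstream shows up as one clear error line naming the sender, instead of as corrupted state discovered hours later. The same `contract_violations` function can also be reused in the sending service's tests.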

The tech world was changing rapidly around us, and I felt like I was trying to navigate through a storm. Yet, amidst all the chaos, there were moments of clarity—like when we saw the first successful deployment of our Dockerized microservices. The feeling of satisfaction was undeniable; it made all the late nights worth it.

As July 13th faded into history, I found myself looking forward to what came next. Docker and microservices weren’t just trends—they were tools that would help us build more resilient systems. And while there were still challenges ahead, I knew we had taken a crucial step in the right direction.

That’s how it felt back then, full of excitement and occasional frustration. The world of DevOps was moving quickly, and as always, the real work was just getting started.