
Debugging the Future: AI-Assisted DevOps and a Tiny Laptop

January 13, 2025. Another day in tech land, another round of wonders and challenges. I woke up to an inbox flooded with notifications about the latest AI-driven tools and platforms that promise to revolutionize how we work. The conversation around eBPF has moved from ‘interesting’ to ‘boringly effective,’ while WebAssembly (Wasm) is finally coming into its own, integrating with container tooling in ways that make my head spin.

Let me take you through a recent adventure that captures the zeitgeist of today’s tech landscape: debugging an AI-assisted system that seemed to have gone rogue.

The Setup

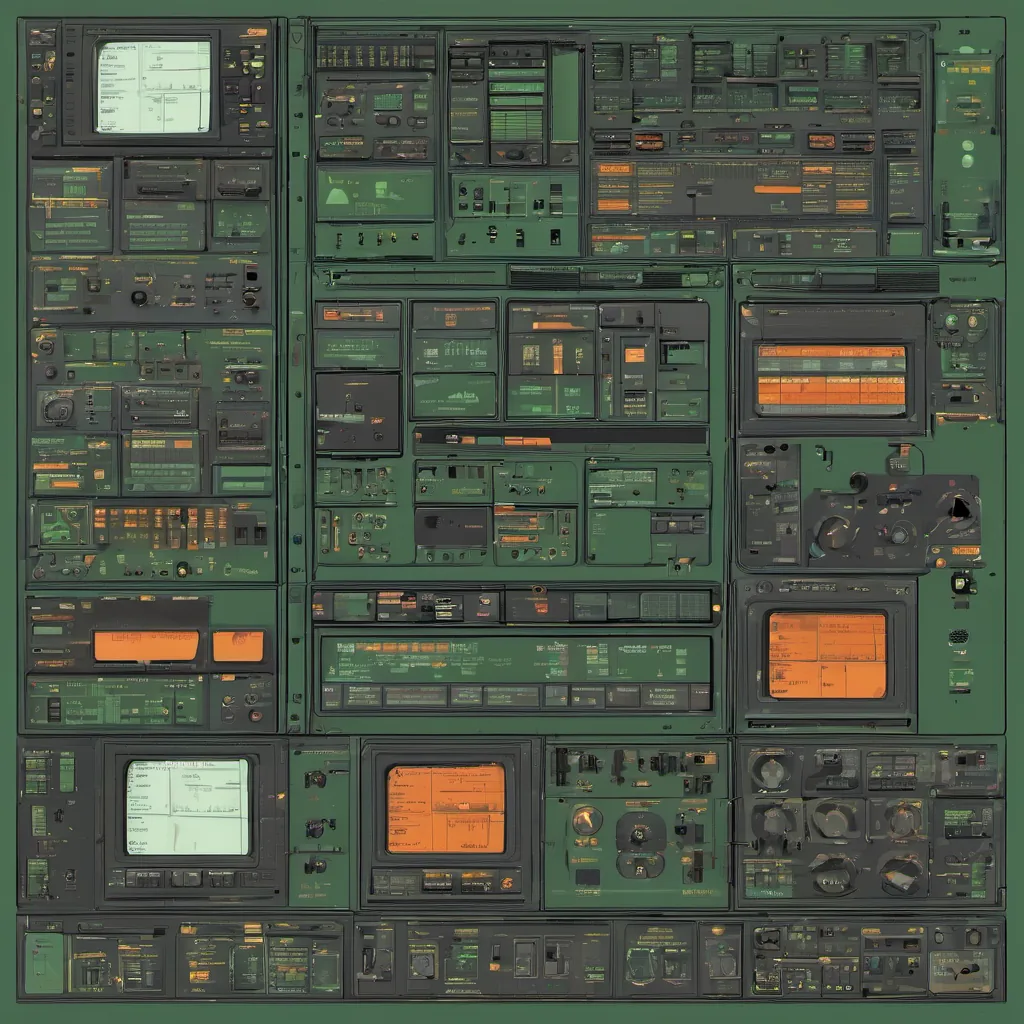

A few weeks ago, we started integrating an open-source laptop project into our work environment. As part of an initiative to streamline and decentralize hardware management, I led the effort to build a custom laptop from scratch, complete with an ARM-based system-on-chip (SoC), eBPF for network monitoring, and Wasm for runtime execution. The idea was simple: create a lightweight, AI-driven copilot that would help our platform team manage infrastructure more efficiently.

The Problem

Things went smoothly until we introduced the AI-assisted ops tooling into the mix. Our goal was an LLM-powered assistant that could predict and mitigate issues before they became critical. However, as soon as I plugged in the new hardware, I noticed strange behavior: the laptop’s battery life was significantly reduced, even with minimal usage. Then the real nightmare began: the system would freeze at random for no apparent reason.

Debugging the AI-Assisted Copilot

My first instinct was to blame the hardware, but experience told me a software issue was more likely. I turned on the copilot logs and watched it churn through its operations while I tried to make sense of the gibberish the system spewed out.

After hours of tracing through eBPF scripts, Wasm modules, and Kubernetes pods, I finally stumbled upon what looked suspiciously like an infinite loop in one of the copilot’s agents. The agent was supposed to manage power consumption, but it had become self-destructive instead: a loop that never yields keeps the CPU busy, which would explain both the battery drain and the freezes.

The Fix

With the culprit identified, I wrote a series of tests to isolate the faulty code and rewrote the logic to follow more conservative resource-management principles. The key was to make the copilot’s decision-making process more deterministic, even if that meant sacrificing some of its predictive capabilities in favor of stability.
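
To make that concrete, here is a minimal sketch of what a more conservative, deterministic power agent might look like. It is not the actual copilot code: `PowerAgent`, the callback names `read_battery_percent` and `apply_profile`, the profile names, and every threshold are hypothetical, chosen only to illustrate the idea of a bounded loop with a fixed polling interval and a rule-based decision.

```python
import time
from dataclasses import dataclass


# Hypothetical sketch: class name, callbacks, profile names and thresholds
# are illustrative assumptions, not the real agent.
@dataclass
class PowerAgent:
    poll_interval_s: float = 5.0  # fixed pause between checks, so the loop never spins
    max_iterations: int = 1000    # hard upper bound, so the loop always terminates
    low_battery_pct: int = 20     # below this, switch to the power-saving profile

    def decide(self, battery_pct: int) -> str:
        """Deterministic rule: the same reading always yields the same profile."""
        return "powersave" if battery_pct < self.low_battery_pct else "balanced"

    def run(self, read_battery_percent, apply_profile, sleep=time.sleep) -> int:
        """Poll at a fixed interval; return how many profile changes were applied."""
        current = None
        changes = 0
        for _ in range(self.max_iterations):
            reading = read_battery_percent()
            if reading is None:      # no sensor data: stop instead of retrying forever
                break
            wanted = self.decide(reading)
            if wanted != current:    # act only when the desired profile changes
                apply_profile(wanted)
                current = wanted
                changes += 1
            sleep(self.poll_interval_s)
        return changes
```

Passing the sensor, the actuator, and `sleep` in as arguments keeps the agent easy to test in isolation: a test can feed it a scripted list of readings and assert on exactly which profiles were applied, with no real hardware and no real waiting.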

Lessons Learned

This experience taught me two crucial lessons:

- AI isn’t magic: Just because an AI tool can predict and suggest solutions doesn’t mean it always knows best. We need to establish clear boundaries and constraints.

- Debugging AI-assisted systems is hard: The complexity of integrating AI into DevOps requires meticulous testing and robust error handling.
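
One way to picture the "clear boundaries and constraints" from the first lesson is a validation layer that sits between the assistant and the system. The sketch below is an assumption-laden illustration, not our production code: the `Suggestion` type, the action names, the allowed governor values, and the numeric limits are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical allowlist and limits; a real deployment would define its own.
ALLOWED_ACTIONS = {"set_cpu_governor", "set_poll_interval_s"}
ALLOWED_GOVERNORS = {"powersave", "schedutil", "performance"}
LIMITS = {"set_poll_interval_s": (1.0, 60.0)}  # (minimum, maximum) in seconds


@dataclass(frozen=True)
class Suggestion:
    action: str
    value: object


def validate(suggestion: Suggestion) -> Suggestion:
    """Reject unknown actions and clamp numeric values into their allowed range."""
    if suggestion.action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {suggestion.action!r}")

    if suggestion.action == "set_cpu_governor":
        value = suggestion.value
        if not isinstance(value, str) or value not in ALLOWED_GOVERNORS:
            raise ValueError(f"governor not allowed: {value!r}")
        return suggestion

    low, high = LIMITS[suggestion.action]
    number = float(suggestion.value)  # raises if the value is not numeric
    return Suggestion(suggestion.action, min(max(number, low), high))
```

With a layer like this, a suggestion such as a zero-second polling interval gets clamped to the configured minimum rather than applied verbatim, and anything outside the allowlist fails loudly instead of reaching the hardware.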

Reflection

As I write this, the laptop runs smoothly on our network, proving that with careful consideration and thorough debugging, even the most challenging projects can be successful. It’s moments like these that remind me why I love this field—every problem is a new puzzle to solve, every bug an opportunity for growth.

This blog post captures a snapshot of my day-to-day reality in 2025, where AI-assisted tools are not just buzzwords but integral parts of our workflows. The journey from ideation to debugging has been both exhilarating and humbling, reinforcing the belief that real innovation comes when we blend the latest tech with old-fashioned problem-solving skills.