$ cat post/tail-minus-f-forever-/-the-service-mesh-confused-us-all-/-we-kept-the-old-flag.md

tail minus f forever / the service mesh confused us all / we kept the old flag

Title: February 13, 2012 - A Day in the Life of a DevOps Pioneer

February 13, 2012, was another day like any other. I woke up to the sound of my alarm blaring, and the first thought that crossed my mind was, “Did I forget about that bug?” The truth is, the DevOps movement was in full swing, but my world was still very much about maintaining a balance between infrastructure and application development.

I started my day with a quick review of the overnight alerts. A few services were flapping, which is never a good sign. One of them caught my eye: our internal messaging app was having message delivery delays. This wasn't something we could afford to sit on; users would start to notice, and that was the last thing I wanted.

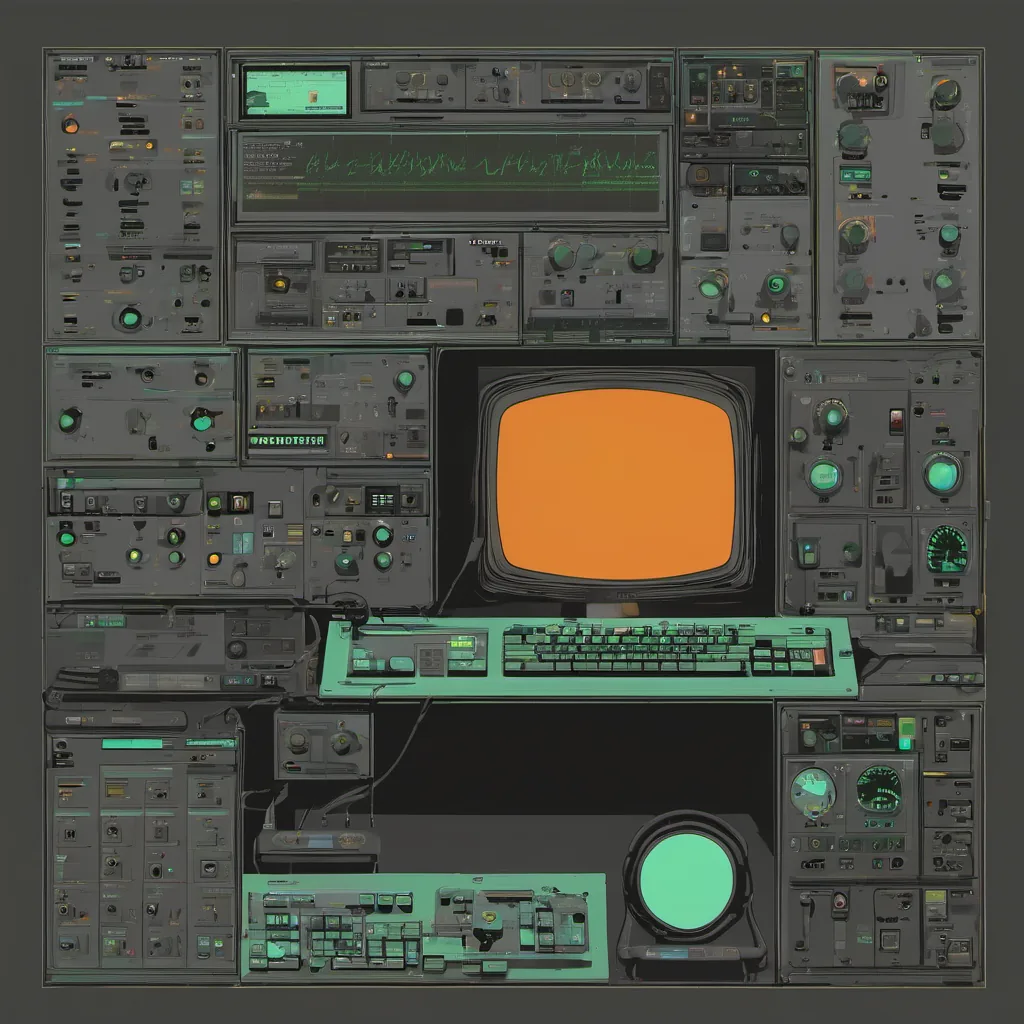

I logged into our Graphite monitoring dashboard and started looking at the metrics. The error rate looked high, so I dug into the logs we were collecting with Fluentd. They were a bit of a mess, but some clear patterns emerged: HTTP 500 errors correlated with increased load on our database server.

I reached out to our operations team via IRC (yes, we still used IRC back then) and shared my findings. They had noticed the spike in database activity too. We decided to work through it together, since our app and infrastructure teams had been collaborating more and more closely.

We spent a few hours analyzing the data and brainstorming solutions. One of the senior ops guys suggested using a load balancer with better health checks, which would help distribute traffic away from the overloaded instances. I liked the idea but knew we needed to test it first. I quickly whipped up a script to simulate the load and monitored the effects.
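To make the health-check idea concrete: the balancer keeps routing round-robin but skips any backend whose most recent check failed, so traffic drains away from an overloaded instance. This is a minimal Python sketch, not the balancer we actually deployed; the backend names and the `check` callback (standing in for a real HTTP health probe) are hypothetical.

```python
import itertools
from collections import Counter
from typing import Callable, Dict, List


class HealthCheckedBalancer:
    """Round-robin balancer that skips backends whose last health check failed."""

    def __init__(self, backends: List[str], check: Callable[[str], bool]) -> None:
        self.backends = list(backends)
        self.check = check
        # Assume every backend is healthy until the first check says otherwise.
        self.healthy: Dict[str, bool] = {b: True for b in self.backends}
        self._ring = itertools.cycle(self.backends)

    def run_health_checks(self) -> None:
        # A real balancer would run this on a timer and probe each backend over HTTP.
        for backend in self.backends:
            self.healthy[backend] = self.check(backend)

    def pick(self) -> str:
        # Walk the ring at most once and return the next healthy backend.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if self.healthy[candidate]:
                return candidate
        raise RuntimeError("no healthy backends")


# Hypothetical scenario: app-2 is overloaded and failing its health check.
overloaded = {"app-2"}
lb = HealthCheckedBalancer(["app-1", "app-2", "app-3"], lambda b: b not in overloaded)
lb.run_health_checks()

first_picks = [lb.pick() for _ in range(4)]
print(first_picks)  # ['app-1', 'app-3', 'app-1', 'app-3']

# Crude load simulation: 1,000 requests, none of which reach the unhealthy backend.
counts = Counter(lb.pick() for _ in range(1000))
print(counts["app-2"])  # 0
```

Once the overloaded instance recovers and the next round of checks passes, it rejoins the rotation automatically; that self-healing behavior is the main reason health checks beat manually pulling a node out of the pool.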

By mid-afternoon, things were looking good. We had deployed our changes and everything seemed stable for now. But I wasn’t done yet; I wanted to make sure we could handle sudden spikes in traffic without running into similar issues again.

That's when the ops team mentioned Netflix's Chaos Monkey, the tool Netflix had described for randomly terminating production instances to prove their systems could survive failures. They hadn't tried anything like it themselves but thought it might be a good idea. I agreed, and we spent some time fleshing out our own version of the idea for our services. We decided to start by load testing our message service and gradually introduce more complex failure scenarios.
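The practice Netflix popularized with Chaos Monkey is to kill an instance on purpose and check that the service keeps working. The toy Python simulation below is not what we built; it assumes a hypothetical pool of three message-service instances and a hypothetical client that, on failure, retries against another randomly chosen instance. It removes one instance at random and measures how many messages still get through.

```python
import random
from typing import List, Set


def send_message(pool: List[str], dead: Set[str], rng: random.Random, retries: int) -> bool:
    """Hypothetical client: try a random instance, retrying on a fresh random pick."""
    for _ in range(retries + 1):
        if rng.choice(pool) not in dead:
            return True
    return False


def chaos_round(pool: List[str], requests: int, retries: int, seed: int) -> float:
    """Kill one randomly chosen instance, replay traffic, return the success rate."""
    rng = random.Random(seed)  # seeded so a failing experiment can be replayed
    victim = rng.choice(pool)  # the "monkey" picks its victim at random
    dead = {victim}
    delivered = sum(send_message(pool, dead, rng, retries) for _ in range(requests))
    return delivered / requests


pool = ["msg-1", "msg-2", "msg-3"]  # hypothetical message-service instances
no_retry = chaos_round(pool, requests=10_000, retries=0, seed=42)
with_retry = chaos_round(pool, requests=10_000, retries=2, seed=42)
print(f"no retries: {no_retry:.1%}, two retries: {with_retry:.1%}")
```

With one of three instances down, a single attempt succeeds about two-thirds of the time, while two retries raise the expected success rate to 1 - (1/3)^3, roughly 96%. That gap is exactly the kind of weakness a deliberate failure drill is meant to surface before a real outage does.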

As the day wore on, I started wrapping up my work and preparing for a meeting about migrating one of our microservices to Heroku, which Salesforce had bought about a year earlier. The idea was that moving it would simplify our deployment process and free us from some of the complexity of running our own infrastructure.

I left the office around 6 PM, feeling pretty good about what we'd accomplished. It wasn't a groundbreaking day, but small victories like fixing bugs and improving processes are what keep me going. Tomorrow would be another day to learn, adapt, and push forward.

Looking back at the Hacker News stories from that month—like “Path uploads your entire iPhone address book to its servers”—it’s clear how much data security and privacy were becoming major concerns. It’s a topic that will continue to be relevant as we build more connected systems.

But that day was just about fixing an app, and I'm glad I could make a small difference in our team's day-to-day operations. That's the real work of DevOps: finding solutions that keep things running smoothly while constantly looking for ways to improve.

That’s how February 13, 2012, unfolded for me. A typical day with some ups and downs, but ultimately filled with the satisfaction of making progress.