man page at two AM / I pivoted the table wrong / the patch is still live

Title: Debugging the Red

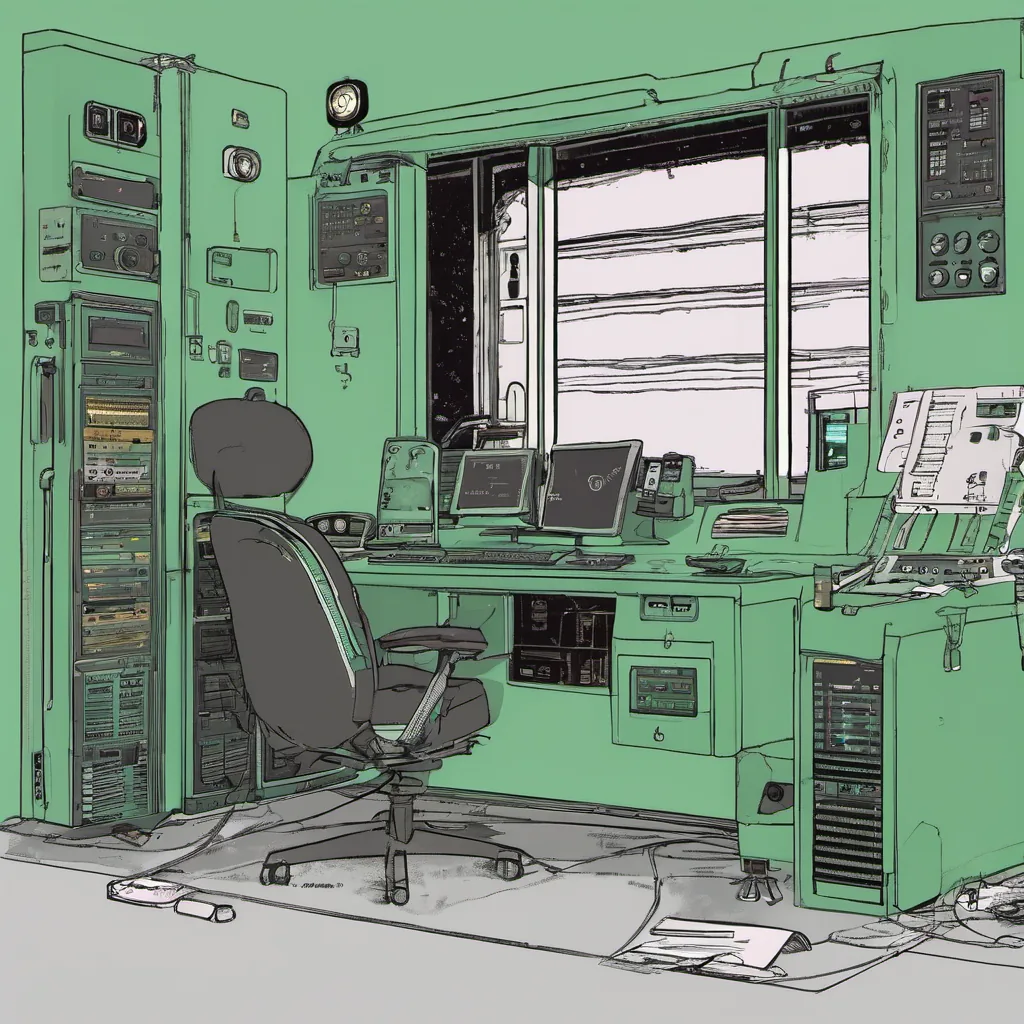

December 13, 2004. A crisp winter day with a hint of snow in the air. The office is filled with the usual hustle and bustle, but something feels different. It's the kind of day when you feel like you're at the heart of it all, and I've just been tasked with debugging one of our most critical services.

Our application had recently started behaving strangely: slow response times and occasional crashes. It was affecting both internal operations and customer service, which meant we needed to get a handle on it pronto. The service in question handles user authentication for multiple applications, so its stability is crucial.

I spent the morning sifting through logs, looking for patterns that would give me a clue about what was going wrong. There were too many entries to wade through manually, so I quickly whipped up a Python script to parse the logs and pull out the relevant entries. It was one of those moments where I felt like a true hacker, digging through the data with my trusty tools.
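The post doesn't show the script, so here is a minimal sketch of that kind of log filter, written in modern Python 3. The log line format, the `auth` component name, and the helper names `filter_lines` and `errors_per_hour` are all assumptions for illustration. The idea is to keep only warnings and errors from one component and count them per hour so that clusters stand out.

```python
import re
from collections import Counter

# Hypothetical log format (an assumption, not the real service's format):
# "2004-12-13 09:15:02 ERROR auth: query timeout"
LINE_RE = re.compile(
    r"^(?P<date>\d{4}-\d{2}-\d{2}) (?P<hour>\d{2}):\d{2}:\d{2} "
    r"(?P<level>\w+) (?P<component>\w+): (?P<msg>.*)$"
)

def filter_lines(lines, component="auth", levels=("WARN", "ERROR")):
    """Yield parsed entries for one component at the given severity levels."""
    for line in lines:
        m = LINE_RE.match(line.rstrip("\n"))
        if m is None:
            continue  # skip lines that don't match the assumed format
        if m["component"] == component and m["level"] in levels:
            yield m.groupdict()

def errors_per_hour(lines, **kwargs):
    """Count matching entries per hour to show when problems cluster."""
    return Counter(entry["hour"] for entry in filter_lines(lines, **kwargs))

if __name__ == "__main__":
    sample = [
        "2004-12-13 09:15:02 ERROR auth: query timeout",
        "2004-12-13 09:47:10 INFO auth: login ok",
        "2004-12-13 10:02:33 WARN auth: slow query",
        "2004-12-13 10:05:41 ERROR auth: query timeout",
        "2004-12-13 10:06:00 ERROR billing: card declined",
    ]
    print(errors_per_hour(sample))
```

Filtering by component first keeps noise from other services (like the `billing` line above) out of the counts.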

By mid-afternoon, I had narrowed it down to a few suspicious lines that hinted at an issue with how our authentication service talked to the database. The script showed that those queries were taking longer than they should have, which led to timeouts and, eventually, application crashes.
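Spotting slow queries works the same way once durations show up in the log. This sketch assumes a hypothetical `query=<name> took <N> ms` message format and a 1000 ms threshold; neither comes from the original service. It lists queries over the threshold and the median duration per query name, which helps tell a steady slowdown apart from a single spike.

```python
import re
import statistics

# Hypothetical message format (an assumption): "query=<name> took <N> ms"
DURATION_RE = re.compile(r"query=(?P<name>\w+) took (?P<ms>\d+) ms")

def slow_queries(messages, threshold_ms=1000):
    """Return (name, ms) pairs for queries slower than threshold_ms, in log order."""
    hits = []
    for msg in messages:
        m = DURATION_RE.search(msg)
        if m and int(m["ms"]) > threshold_ms:
            hits.append((m["name"], int(m["ms"])))
    return hits

def summarize(messages):
    """Median duration per query name across all matching messages."""
    by_name = {}
    for msg in messages:
        m = DURATION_RE.search(msg)
        if m:
            by_name.setdefault(m["name"], []).append(int(m["ms"]))
    return {name: statistics.median(ms) for name, ms in by_name.items()}
```

A query with a low median but a few huge outliers points at contention (for example, waiting on a resource) rather than a missing index.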

The next step was diving into the code itself. We were using MySQL back then, which wasn't the most performant option for high-load environments, but we had tuned it as much as we could with indexes and query optimization. Still, something didn't add up.

I reached out to our DBA team, and after a quick discussion, they suggested the problem might lie in how we were handling connections. We used a connection pool, which is supposed to reuse a fixed set of database connections instead of opening a new one for every request, but something seemed off in how that pool was being used.

After a bit more digging, I found that our application code wasn't releasing connections when it was done with them. Instead of going back to the pool, those connections sat idle but checked out, so the pool slowly ran dry; new requests then waited for a free connection until they timed out. The fix turned out to be simple, a few lines of code to make sure every connection was closed (returned to the pool) after use, but it took some time to track down.
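The post doesn't say which language or pool library the service used, so this is a toy model in Python rather than the actual code; `ConnectionPool`, `FakeConnection`, `leaky_lookup`, and `fixed_lookup` are hypothetical names. A pool hands out a fixed number of connections. If a caller never gives one back, the pool shrinks with every request until `acquire()` times out, which matches the timeouts described above. Wrapping the checkout in a context manager with `try`/`finally` returns the connection even when the query raises.

```python
import queue
from contextlib import contextmanager

class FakeConnection:
    """Stand-in for a real database connection (hypothetical)."""
    def __init__(self, ident):
        self.ident = ident

    def execute(self, sql):
        return f"conn{self.ident}: {sql}"

class ConnectionPool:
    """Toy fixed-size pool: acquire() waits up to `timeout` seconds when empty."""
    def __init__(self, size, timeout=0.1):
        self._idle = queue.Queue(maxsize=size)
        self._timeout = timeout
        for i in range(size):
            self._idle.put(FakeConnection(i))

    def acquire(self):
        try:
            return self._idle.get(timeout=self._timeout)
        except queue.Empty:
            raise TimeoutError("no free connection: pool exhausted") from None

    def release(self, conn):
        self._idle.put(conn)

    @contextmanager
    def connection(self):
        conn = self.acquire()
        try:
            yield conn
        finally:
            self.release(conn)  # runs even if the caller's code raises

    def available(self):
        return self._idle.qsize()

def leaky_lookup(pool):
    conn = pool.acquire()
    return conn.execute("SELECT 1")  # bug: conn is never returned to the pool

def fixed_lookup(pool):
    with pool.connection() as conn:
        return conn.execute("SELECT 1")  # conn is released when the block exits
```

With a two-connection pool, two calls to `leaky_lookup` leave nothing to hand out and the third raises `TimeoutError`, while any number of `fixed_lookup` calls leave both connections available.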

The whole experience was humbling. In hindsight, it’s one of those moments where you realize that even with all the best practices and optimizations in place, human error can still trip you up. But we made it through, and by late afternoon, our service was back online, running smoothly once again.

As I look around the office, I see others working away on their own projects—some writing code, others debugging, a few collaborating over Skype calls. It’s a reminder that even though we’re all doing different things, we share a common goal: to build something robust and reliable.

And so, as 2004 draws to a close, I’m left with the satisfaction of having debugged another issue, the thrill of diving into complex systems, and the knowledge that there’s always more to learn. The tech world moves fast, but for now, we’ve got this little victory under our belts.

Happy debugging!