$ cat post/a-patch-long-applied-/-i-ssh-to-ghosts-of-boxes-/-the-repo-holds-it-all.md

a patch long applied / I ssh to ghosts of boxes / the repo holds it all

Title: Kubernetes, Helm, and a Late Night with Prometheus

August 13th, 2018 was another unassuming day in the tech world, but for me and my team at Platform Engineering Land, it marked an ongoing struggle with Kubernetes. This month had been tough: Helm seemed like a good idea, but we were still working out how best to use it on top of our clusters.

The Night Shift

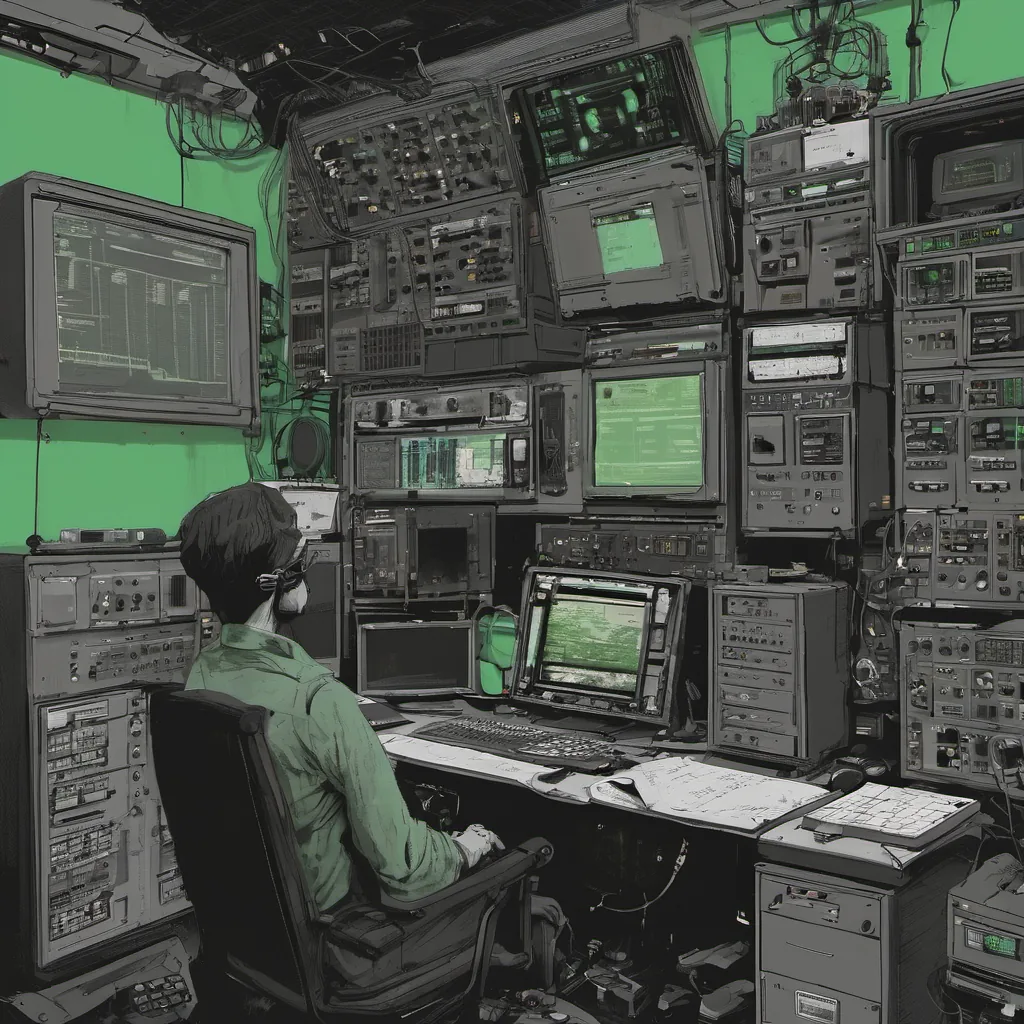

Around 10 PM, I was paged because one of our critical services went down. It wasn’t immediately clear which pod or service was flapping, and I was short on sleep after the previous night’s Netflix binge. I opened my laptop, fired up kubectl, and started checking logs.

$ kubectl get pods -n prod

The output showed a few pods in a CrashLoopBackOff state, which was unusual. A quick glance at the pod logs revealed an error:

[error] 2018-08-13T22:45:07+00:00 [pid 9321] something went wrong with the database connection.

This pointed to a misconfigured environment variable. I double-checked our Helm chart and saw that someone had accidentally used the old DATABASE_URL instead of the new one we rolled out last week.
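
For anyone repeating this kind of triage, here is a rough sketch of how it could be scripted. It is not what I actually typed that night: the function names `crashlooping_pods` and `show_crash_logs` are made up for illustration, and the filter assumes the default `kubectl get pods` table layout, where STATUS is the third column.

```shell
# Print the names of pods whose STATUS column reads CrashLoopBackOff.
# Reads `kubectl get pods` table output on stdin:
#   NAME  READY  STATUS  RESTARTS  AGE
crashlooping_pods() {
  awk 'NR > 1 && $3 == "CrashLoopBackOff" { print $1 }'
}

# For every crash-looping pod in namespace $1, print the logs of the
# previous (crashed) container. Needs kubectl and cluster access.
show_crash_logs() {
  kubectl get pods -n "$1" | crashlooping_pods | while read -r pod; do
    echo "== $pod =="
    kubectl logs "$pod" -n "$1" --previous
  done
}
```

Calling `show_crash_logs prod` would then dump the last crash output for each affected pod. The `--previous` flag matters here because the current container in a crash loop has often not logged anything yet.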

I fixed the mistake, ran helm upgrade, and everything came back online within minutes. Phew! That was close.
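
In hindsight, a small pre-deploy check could have caught this before it paged anyone. The sketch below is illustrative only: the function name `reject_stale_value`, the `values.yaml` file, the `OLD_DATABASE_URL` variable, and the release and chart names in the usage comment are all hypothetical, not taken from our real chart.

```shell
# Fail if a Helm values file still contains a retired value.
# $1: path to the values file
# $2: the old string that must no longer appear (matched literally)
reject_stale_value() {
  if [ -z "$2" ]; then
    echo "no stale value given" >&2
    return 2
  fi
  if grep -qF -- "$2" "$1"; then
    echo "stale value found in $1" >&2
    return 1
  fi
}

# Usage (release "myservice" and chart "./chart" are placeholders):
#   reject_stale_value values.yaml "$OLD_DATABASE_URL" &&
#     helm upgrade myservice ./chart --namespace prod -f values.yaml
```

Chaining the check with `&&` means the upgrade only runs when the old value is gone, which is the failure mode we actually hit.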

Helm’s Growing Pains

While that issue got resolved relatively quickly, I couldn’t help but feel like we were still swimming upstream with Helm. It felt like every time a team wanted to deploy something new, they had to learn yet another set of commands and flags. The Helm documentation seemed to change overnight, and our internal documentation was getting outdated fast.

We started exploring alternatives—Operator SDK seemed promising, but it was still in alpha at the time. We decided to stick with Helm for now, hoping that it would mature over the next few months.

Prometheus, Our New Best Friend

On a brighter note, we’ve been increasingly using Prometheus and Grafana for monitoring. We’ve seen firsthand how much better they are compared to our old Nagios setup. The query language is powerful, and visualizing metrics with Grafana has made troubleshooting a breeze.

One of the coolest parts was when I set up alerts for memory usage on one of our key services. A spike in memory consumption had led to an unexpected crash, and now we have real-time visibility into that metric. We’ve been able to proactively address issues before they become major pain points.
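
A memory alert of this kind might look roughly like the rule file written below. Treat it as a sketch rather than our production rule: the alert name, the file name, the `prod` namespace selector, and the 1.5 GB threshold are assumptions, and it presumes the Prometheus 2.x rule format with the cAdvisor metric `container_memory_working_set_bytes` being scraped.

```shell
# Write a Prometheus 2.x alerting rule file (names and threshold are illustrative).
cat > memory-alerts.yml <<'EOF'
groups:
  - name: memory
    rules:
      - alert: HighMemoryUsage
        # Working-set memory above ~1.5 GB for any container in the prod namespace.
        expr: container_memory_working_set_bytes{namespace="prod"} > 1.5e9
        # Only fire if the condition holds for five minutes, to skip short spikes.
        for: 5m
        labels:
          severity: page
        annotations:
          summary: "Container memory above 1.5 GB for 5 minutes"
EOF

# Validate the file if promtool (shipped with Prometheus) is installed.
if command -v promtool >/dev/null 2>&1; then
  promtool check rules memory-alerts.yml
fi
```

The `for: 5m` clause is the part worth tuning: too short and every garbage-collection blip pages someone, too long and the service may already be dead by the time the alert fires.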

The Docker Drama

Speaking of pain points, the incident where Docker couldn’t be downloaded without logging in was a bit of a wake-up call. We realized how dependent our development environment had become on Docker Hub being available and secure. It reinforced the importance of having local builds and avoiding single points of failure for critical services.

Looking Forward

As we move into the fall, I’m looking forward to seeing what new tools and technologies emerge in the world of container orchestration and monitoring. Kubernetes is definitely winning the war, but there’s still a lot of work to be done. The Helm community needs to stabilize its releases, and I hope we see more mature operator frameworks.

For now, I’ll keep working through the late nights with Prometheus and figuring out how best to integrate all these moving pieces into our platform engineering practices. There’s no shortage of challenges, but that’s what makes this job so exciting.

That was my take on August 13th, 2018. The tech world keeps evolving, and it feels like every day there’s something new to learn or debug. But at the end of the day, it’s all about making our platform more robust and reliable for everyone who uses it.