$ cat post/when-your-server-goes-down-in-a-startup-sprint.md

When Your Server Goes Down in a Startup Sprint

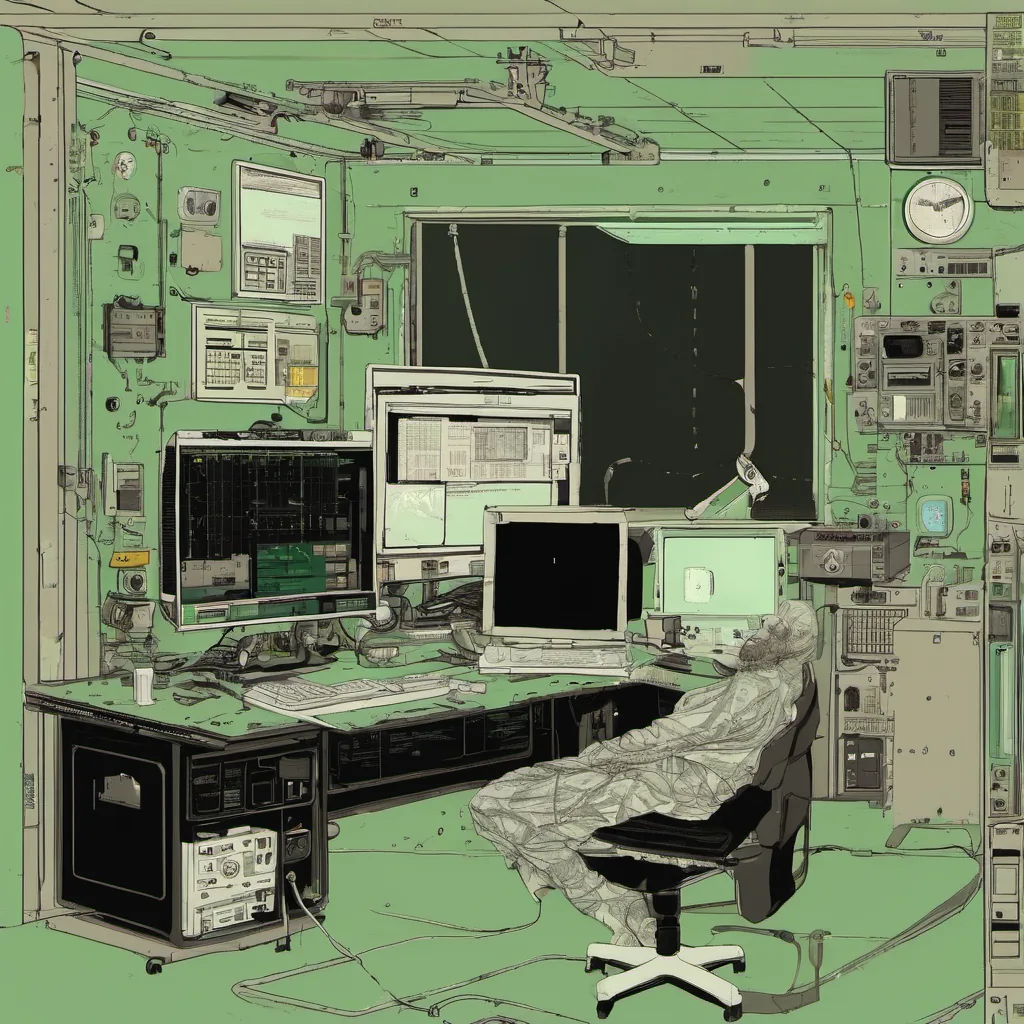

May 12, 2008, started out as just another day at the office. I remember it well because that’s when our server went down during one of those epic startup sprint nights. The scene was something out of a horror movie: red lights flickering on the servers, alarms blaring, and dead silence from our normally bustling development team.

I’m Brandon Camenisch, and this is my technical ramble for 2008. Let me take you back to that night.

It all started innocently enough with a late-night deployment. We were pushing a new feature that was supposed to make our app fly. The excitement of seeing your baby grow was palpable, but so was the pressure. Our app had been growing rapidly since launching in March, and we knew this release could be the one that tipped us over into the big leagues.

The servers were humming along smoothly when suddenly, all hell broke loose. The logs showed a flurry of 502 Bad Gateway errors, and a quick check confirmed that the load balancer was receiving those 502 responses from our web app servers. Huh? Something wasn’t right.
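For anyone staring at a similar flurry, here is a minimal sketch in Python of how you might tally status codes from an access log to see how bad things are. It assumes the log is in Common Log Format, which may not match your setup, and the names `count_statuses` and `share_of` are made up for illustration; this is not the tooling we had that night.

```python
import re
from collections import Counter

# Assumption: Common Log Format lines, for example
# 10.0.0.5 - - [12/May/2008:23:41:07 +0000] "GET /feed HTTP/1.1" 502 173
CLF_LINE = re.compile(r'^\S+ \S+ \S+ \[[^\]]+\] "[^"]*" (\d{3}) \S+')


def count_statuses(lines):
    """Tally HTTP status codes across an iterable of access-log lines."""
    counts = Counter()
    for line in lines:
        match = CLF_LINE.match(line)
        if match:  # lines that do not fit the assumed format are skipped
            counts[int(match.group(1))] += 1
    return counts


def share_of(counts, status):
    """Fraction of parsed requests that ended with the given status code."""
    total = sum(counts.values())
    return counts[status] / total if total else 0.0
```

Feeding it the last few thousand lines of a log and checking `share_of(counts, 502)` gives a rough sense of whether you are looking at a blip or a full outage.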

I quickly pulled my ops team together to get to the bottom of it. We knew we had some caching issues, but nothing this bad. As we pored over logs and metrics, I couldn’t shake the feeling that we were missing something obvious. After what felt like hours of frustration, one of our junior devs pointed out a problem with the web server configuration.

Turns out, we’d inadvertently disabled error logging on one of our servers during a previous configuration tweak. Without those logs, we had been debugging blind. The first fix was simple: re-enable logging and restart the server.
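In hindsight, even a tiny check could flag a server whose logs have gone quiet. The sketch below is one way to do it in Python, under the assumption that a healthy server writes to its log file at least every so often; the function name `log_is_stale`, the example path, and the threshold are all hypothetical and not taken from our actual setup.

```python
import os
import time


def log_is_stale(path, max_age_seconds, now=None):
    """Return True if the log file is missing or has not been written recently."""
    now = time.time() if now is None else now
    try:
        last_write = os.path.getmtime(path)
    except OSError:  # a missing or unreadable file counts as stale
        return True
    return (now - last_write) > max_age_seconds


# Example use (hypothetical path and threshold):
# if log_is_stale("/var/log/webapp/error.log", 15 * 60):
#     print("error log has been silent for 15 minutes, check the logging config")
```

One caveat: a quiet error log can also mean nothing is going wrong, so a check like this is probably better pointed at an access log or a periodic heartbeat line than at errors alone.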

Once that was done, we watched in horror as our servers struggled to keep up with traffic. CPU usage spiked and memory consumption kept climbing. It took some frantic coding and tweaking of our database queries to get things under control. By 3 AM, we had a working fix, but the damage was already done.

That night, I learned the hard way that even with all the tools in the world (GitHub, AWS EC2/S3, you name it), basic ops knowledge can make or break your app. We had grown so fast that we had never really embraced full-stack development. Our codebase was a mess of quick hacks and spaghetti logic, which made every problem harder to trace.

Looking back, I wish we had taken more time to lay down proper foundations from day one. Agile methodologies were becoming popular in 2008, but we were all still grappling with how to implement them effectively. In our rush to build and ship, we often skipped the boring parts like writing tests or setting up continuous integration.

The cloud was starting to gain traction too. AWS EC2 and S3 were game-changers, but we had yet to take advantage of them. We stuck with our own private servers because “we knew best” – a common attitude back then, and one that kept us from the benefits of modern infrastructure.

That night taught me a valuable lesson about the importance of ops in software development. It’s not just about writing code; it’s also about building and maintaining systems that can scale and handle real-world traffic. We needed to invest more time in setting up robust monitoring, logging, and automated deployments. The economic crash was hitting hard at the time too, but we couldn’t afford to cut corners on infrastructure.

In the end, we got through it. The app stabilized, and our user base continued to grow. But that night left an indelible mark on me. It made me realize that as much as I love coding, there’s more to building a successful product than just writing lines of code.

So here’s to those late nights when your server goes down – may they teach you valuable lessons and push you to improve your craft. And remember, even in the darkest moments, there’s always a way back up if you’re willing to put in the effort.