$ cat post/a-shell-i-once-loved-/-the-alert-fired-at-three-am-/-disk-full-on-impact.md

a shell I once loved / the alert fired at three AM / disk full on impact

Title: Why I Kept Waking Up at 3 AM Thinking About Containers

It was January 2015, and the tech world was buzzing about containerization. Docker was just beginning its climb to mainstream adoption, and teams everywhere were getting their first taste of what microservices could mean for development workflows. I found myself awake late at night, not because of a pesky cat or an endless stream of emails, but because containers were keeping me up. Specifically, I couldn’t stop thinking about how Docker might disrupt our infrastructure.

At work, we had just completed a major migration from monolithic Java applications to a microservices architecture. The transition was challenging but necessary for scaling the application and keeping it healthy. As part of this effort, we started looking into containerization as a way to package and deploy our services more efficiently. Our initial tests were promising; Docker containers seemed like the perfect fit for our needs.

However, as I dug deeper into Docker, I found myself questioning every assumption. How do you manage hundreds or thousands of these lightweight processes? What about networking between containers? And when one container goes down, does it bring the entire system with it?

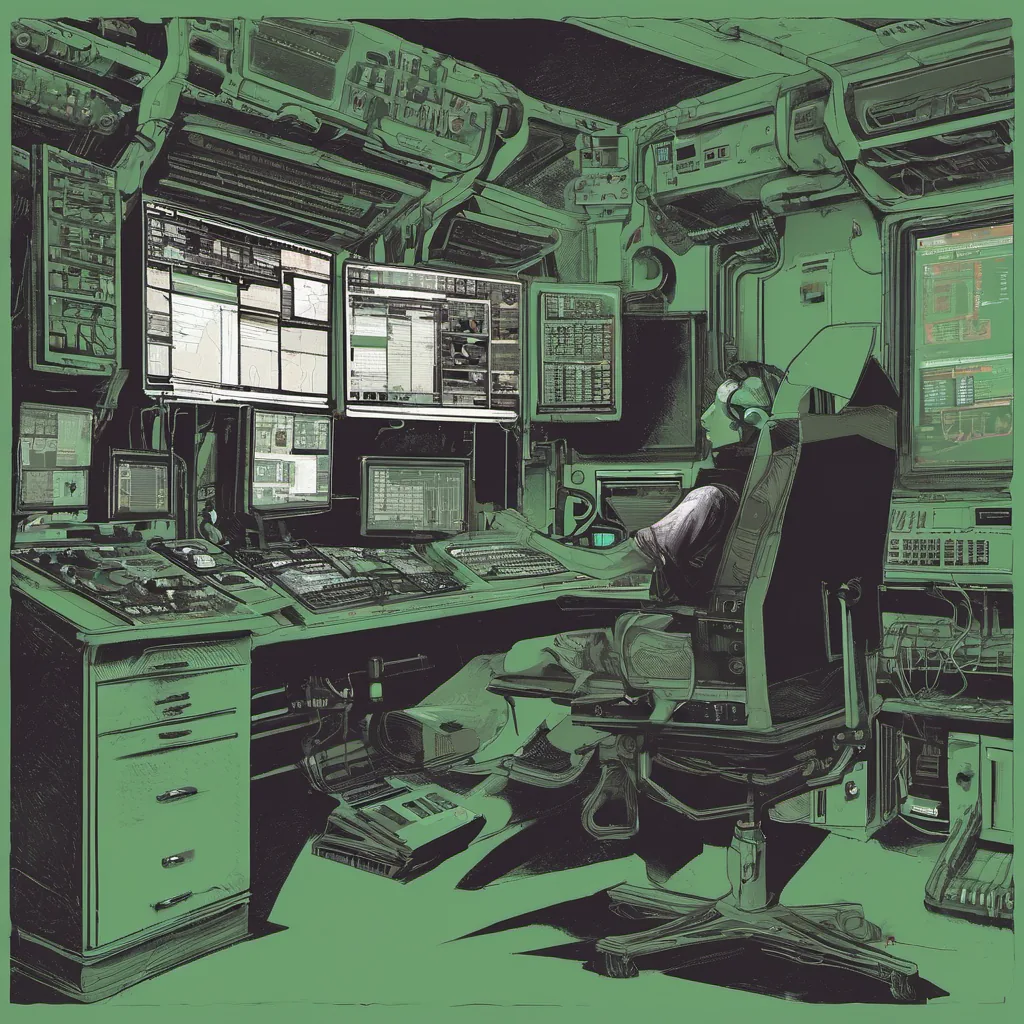

I remember sitting in front of my screen late one night, staring at the command-line output of `docker run`, trying to wrap my head around how this tool was going to revolutionize our infrastructure. The simplicity of starting a new instance seemed almost too good to be true, and I couldn’t shake the feeling that we were missing something.

One evening, I found myself arguing with colleagues about whether we should run Docker on its own or build a custom container orchestration solution on top of Kubernetes. Some argued for sticking with Mesos/Marathon because it was familiar and had a proven track record in our organization. Others pushed back, pointing to the growing momentum behind Kubernetes, Google’s open-source project.

In the end, I wrote a script to automate the deployment of Docker containers using Marathon, just to see if we could get by without Kubernetes. It worked, but there were subtle issues with scaling and network configuration that made me question whether this was really the best path forward.
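For readers who have never seen one, here is a minimal sketch of what a script that deploys a Docker container through Marathon might look like. It is not the original script. It assumes Marathon's REST API is reachable and accepts app definitions at `/v2/apps`; the app ID, image name, resource numbers, and the `build_marathon_app` and `deploy` helpers are all hypothetical.

```python
import json
import urllib.request


def build_marathon_app(app_id, image, instances=2, cpus=0.5, mem=256,
                       container_port=8080):
    """Build a Marathon app definition that runs a Docker image.

    All values here are illustrative placeholders, not tuned settings.
    """
    return {
        "id": app_id,
        "instances": instances,
        "cpus": cpus,
        "mem": mem,
        "container": {
            "type": "DOCKER",
            "docker": {
                "image": image,
                "network": "BRIDGE",
                # hostPort 0 asks Marathon to assign a free port on the host.
                "portMappings": [
                    {"containerPort": container_port, "hostPort": 0}
                ],
            },
        },
    }


def deploy(marathon_url, app):
    """POST the app definition to Marathon and return the parsed response."""
    request = urllib.request.Request(
        marathon_url.rstrip("/") + "/v2/apps",
        data=json.dumps(app).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical service name and image; print the payload instead of
    # deploying so the sketch runs without a Marathon cluster.
    app = build_marathon_app("/orders-service", "example/orders-service:1.0")
    print(json.dumps(app, indent=2))
```

Scaling and networking, the two areas that caused trouble, both live in this one payload: `instances` controls how many copies run, and the `network` and `portMappings` fields decide how each container is reached.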

Meanwhile, the industry buzz around containerization only seemed to grow. Unrelated stories, like the one about YouTube defaulting to HTML5 video, kept popping up in my Hacker News feed and distracting me from the real work at hand. I couldn’t help but feel a mix of excitement and dread as I read about new tools and technologies coming out almost daily.

By then, it was clear that containers were more than just a cool new toy; they represented a fundamental shift in how we think about application deployment and scaling. But like any new technology, they came with growing pains. Debugging container interactions, managing dependencies across multiple services, and ensuring consistent environments all became real challenges.

One particularly frustrating night, I spent hours trying to diagnose why a seemingly simple command was failing. The error message from Docker was cryptic, and after much head-scratching, it turned out that the issue lay in an obscure configuration detail that had nothing to do with containers themselves, but rather with how our logging system interacted with them.

This experience taught me two important lessons: first, that even when things look simple on the surface, there are always complexities beneath; and second, that persistence and a willingness to dig deeper can often uncover the root cause of problems. In the end, I shipped a fix for the issue, but not before waking up in the middle of the night because my brain was still trying to figure it out.

Looking back on that time, I realize how much containerization has evolved since then. Today, Docker and Kubernetes are practically synonymous with modern DevOps practices. But even as those technologies matured, so did our understanding of what they could—and couldn’t—do for us. And while I might not have all the answers today, I know that every challenge we face brings us closer to finding better solutions.

So here’s to staying up late thinking about containers and figuring out how best to manage them. Because even if it means sacrificing a few hours of sleep, it’s worth it when you’re building something truly innovative.