November 11, 2013 – The Container Revolution's Dawn

November 11, 2013, was a significant date for me and my team. It marked the start of our move to containers, a shift that would change how we thought about software deployment and infrastructure management.

We had just wrapped up a project to migrate our existing application from a monolithic deployment to a set of microservices running in Docker containers. The decision to embrace containers was not driven by grand visions or hype; it came from the practical need to scale more efficiently and reduce the complexity of our deployment pipeline.

Our first steps with Docker were tentative, but the benefits became clear as we went deeper. We could package our applications in lightweight, portable containers that deployed consistently across environments, from development to production. That meant fewer integration issues and a faster feedback loop during development.

One of the challenges we faced early on was ensuring the stability and performance of these new containerized services. As we began deploying them, we noticed some unexpected behavior. Our microservices were hitting resource limits more frequently than expected. We found ourselves wrestling with CPU and memory constraints as our containers scaled up and down in response to demand.

I remember the late nights spent debugging and optimizing our Docker images. We had to fine-tune our resource settings and carefully monitor our containerized services to ensure they didn’t starve or monopolize resources. It was a learning curve, but an essential one that would pay off as we continued to scale.
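To make the tuning concrete, here is a minimal sketch of how per-container limits can be passed to `docker run`. It is an illustration, not the tooling we actually used: the helper `docker_run_args` and the image name `example/web:latest` are hypothetical, and the sketch only builds the command line rather than executing it. The `--memory` and `--cpu-shares` flags are the ones I am assuming here; check them against the Docker version you run.

```python
from typing import List, Optional


def docker_run_args(image: str,
                    memory: Optional[str] = None,
                    cpu_shares: Optional[int] = None) -> List[str]:
    """Build an argv list for `docker run` with optional resource limits.

    Hypothetical helper for illustration; it does not start a container.
    """
    args = ["docker", "run", "--detach"]
    if memory is not None:
        # Hard memory cap for the container, e.g. "256m".
        args += ["--memory", memory]
    if cpu_shares is not None:
        if cpu_shares <= 0:
            raise ValueError("cpu_shares must be a positive integer")
        # Relative CPU weight; it only matters when containers compete for CPU.
        args += ["--cpu-shares", str(cpu_shares)]
    args.append(image)
    return args


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch has no side effects.
    print(" ".join(docker_run_args("example/web:latest",
                                   memory="256m", cpu_shares=512)))
```

Building the argument list separately from running it keeps the limits easy to review and to unit test before anything is deployed.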

Another issue we encountered was the complexity of managing multiple containers across different machines. Docker provided the foundation for containerization, but it lacked the higher-level orchestration capabilities that were becoming increasingly important in our rapidly growing infrastructure. We started exploring tools like CoreOS’s fleet and, later, Kubernetes, which was first released in 2014 and went on to become the mainstream choice.

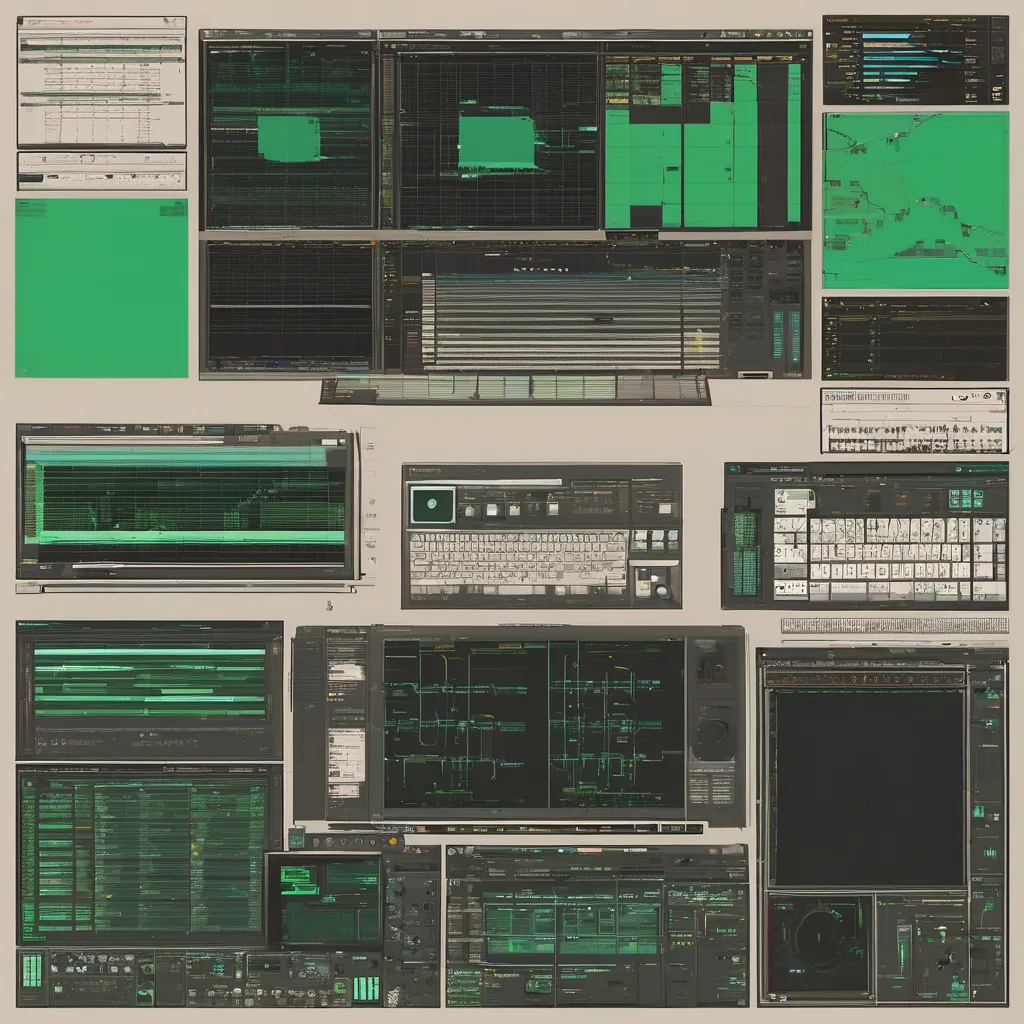

As the number of services grew, we also had to scale up our operations team. With more services running in containers, we needed a more robust monitoring and alerting system. Over time, we integrated Prometheus and Grafana into our stack, which gave us better visibility into our containerized applications and their performance characteristics.

Reflecting on this time, I see how far we’ve come since those early days of containerization. Back then, Docker was less than a year old, and the idea of microservices was gaining currency but was not yet widely put into practice. We were part of a small community of early adopters working without much of a map.

The stories from Hacker News that month added to the zeitgeist of this era. The spreadsheet challenge, the failed company, and the shutdown of Winamp all felt like snippets of our lives at work: tinkering with new technologies, experimenting, and sometimes hitting roadblocks. These stories reminded us that innovation is as much about failure and learning as it is about success.

In many ways, November 11, 2013, was just another day in the life of a tech company, but looking back, it marks the beginning of a significant shift in how we approach software deployment and infrastructure. It was a humbling journey filled with challenges, but also with growth and the promise of more efficient, scalable systems ahead.

This blog post reflects my personal experiences during that time, providing a candid look at the practical aspects of containerization and its impact on our operations.