$ cat post/the-prod-deploy-froze-/-i-watched-the-memory-climb-slow-/-i-kept-the-old-box.md

the prod deploy froze / I watched the memory climb slow / I kept the old box

Title: AI Copilot Debugging: When LLMs Misbehave

August 11, 2025. Another day in the life of an engineer in a world where AI copilots are everywhere. I woke up to the hum of my office and the constant background chatter of language models assisting with everything from coding to meetings. Today’s challenge? Debugging an LLM that kept getting confused about context.

Last night, during our platform engineering meeting, we discussed how our infrastructure was scaling beautifully under the load of AI-native tooling. We were running eBPF in production and had successfully integrated WebAssembly (Wasm) with containers. It felt like everything was coming together as planned. But sometimes, things don’t go according to plan.

Around lunchtime, I received a notification that our internal chatbot, which is supposed to assist developers by suggesting code snippets, was misbehaving in some scenarios. Developers were reporting that it was giving them incorrect suggestions and, in some cases, outright erroneous code. This wasn’t just frustrating; it could lead to significant bugs if not addressed quickly.

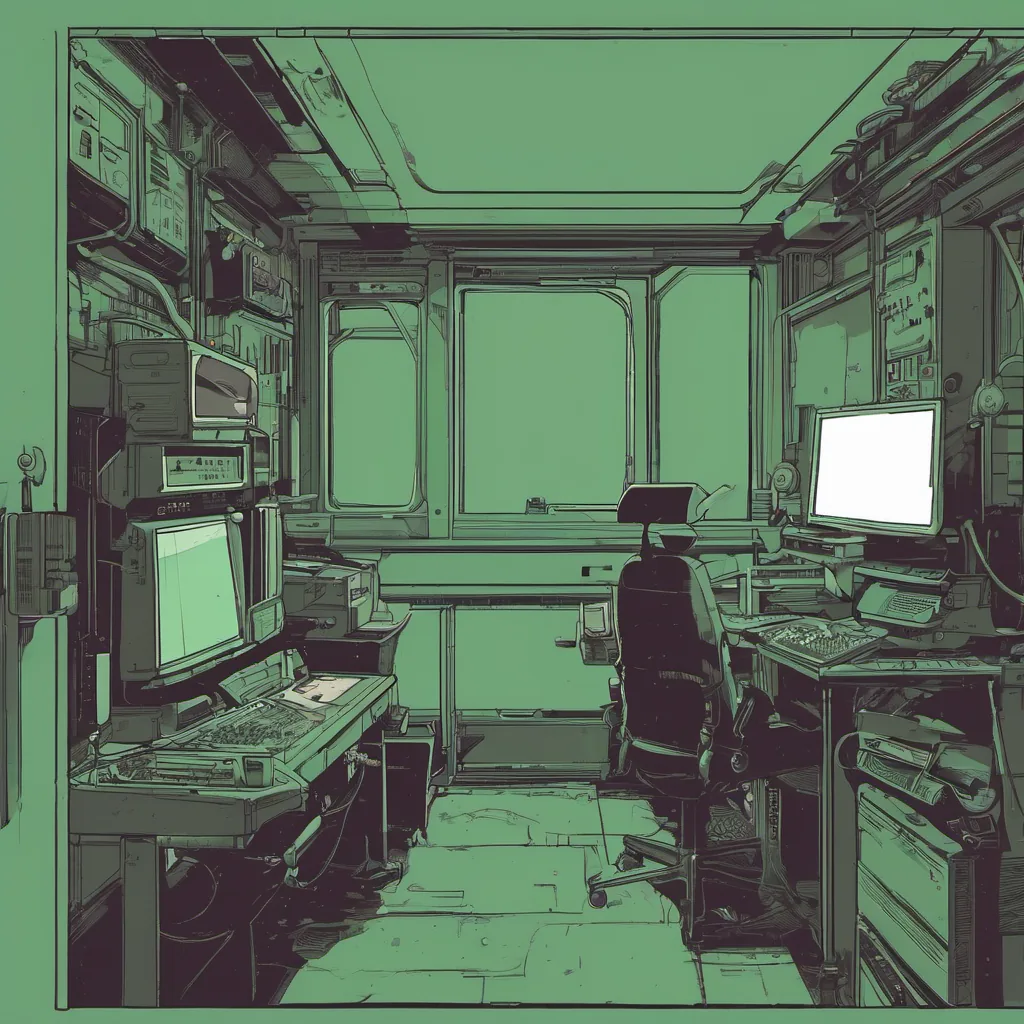

I sat down at my desk and fired up the LLM debugging tool we had been developing for a while now. The tool allowed us to inspect the state of the model, trace its reasoning process, and even step through parts of its code. I started by looking at the context where it went wrong. In this particular case, it was suggesting a map function where the code called for an array.reduce method. The distinction matters: map returns a new array with one element per input, while reduce folds the whole array into a single value.
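For anyone who has not hit this mix-up before, here is a minimal sketch of the difference. It is written in Python rather than the JavaScript-style array.reduce from the bug report, and the order data and function names are invented purely for illustration; none of it comes from our actual chatbot or codebase.

```python
from functools import reduce

# Hypothetical order data, made up for this example only.
orders = [
    {"sku": "a", "qty": 2, "price": 5.0},
    {"sku": "b", "qty": 1, "price": 12.5},
    {"sku": "c", "qty": 3, "price": 1.0},
]


def line_totals(items):
    """map: one output per input, so the result is still a list."""
    return list(map(lambda o: o["qty"] * o["price"], items))


def grand_total(items):
    """reduce: fold every input into one accumulated value."""
    return reduce(lambda acc, o: acc + o["qty"] * o["price"], items, 0.0)


if __name__ == "__main__":
    print(line_totals(orders))  # a list with three per-line totals
    print(grand_total(orders))  # a single number
```

If the caller wants one total and gets back a list, the code may still run, which is what makes this kind of wrong suggestion easy to miss in review.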

I decided to run a few tests manually, feeding in similar input contexts and observing how the model responded. It seemed like the model wasn’t properly handling certain edge cases or complex data types. This is where things got interesting.

The LLM combined pre-trained models with real-time training on recent user interactions. However, the feedback loop for that real-time training did not appear robust enough to handle these more nuanced situations. The model was essentially overfitting to simpler patterns and missing the subtleties.

I spent some time tweaking the hyperparameters of the LLM’s real-time training module. I adjusted the weight given to recent interactions versus historical data and tuned the feedback mechanisms. It wasn’t a straightforward fix; several moving parts needed careful calibration.
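To make the recent-versus-historical trade-off concrete, here is a rough sketch of one way such a weight could behave. It assumes a simple linear blend of two acceptance scores per pattern; the function names, the scores, and the blend itself are hypothetical and are not how our module is actually implemented.

```python
def blended_score(recent: float, historical: float, recent_weight: float) -> float:
    """Linear blend of a recent signal and a historical signal.

    recent_weight is the hypothetical hyperparameter: 1.0 trusts only
    recent interactions, 0.0 trusts only historical data.
    """
    if not 0.0 <= recent_weight <= 1.0:
        raise ValueError("recent_weight must be between 0 and 1")
    return recent_weight * recent + (1.0 - recent_weight) * historical


def pick_pattern(scores: dict, recent_weight: float) -> str:
    """Return the pattern name with the highest blended score."""
    return max(
        scores,
        key=lambda name: blended_score(*scores[name], recent_weight),
    )


# Invented (recent, historical) acceptance scores for two patterns.
scores = {
    "map": (0.9, 0.4),     # popular lately, weaker track record
    "reduce": (0.5, 0.8),  # less frequent lately, stronger track record
}

if __name__ == "__main__":
    print(pick_pattern(scores, recent_weight=0.8))  # leans on recent data
    print(pick_pattern(scores, recent_weight=0.3))  # leans on history
```

With these made-up numbers, a high recent weight favors map and a lower one favors reduce, which is the kind of shift I was trying to calibrate.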

After a few iterations, things started looking better. The model was still suggesting map in some places, but less often and less aggressively. I decided to test it more rigorously by deploying it temporarily to our internal CI/CD pipeline. If anything broke, we would catch it quickly and have time to revert.
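A revert decision like this can be reduced to a small guard. The sketch below is an assumption about how one might write it, not our pipeline's real logic: SuggestionStats, should_revert, and the threshold defaults are all hypothetical names and values.

```python
from dataclasses import dataclass


@dataclass
class SuggestionStats:
    """Counts of suggestions reviewed and how many were judged erroneous."""
    total: int
    erroneous: int

    @property
    def error_rate(self) -> float:
        # Avoid dividing by zero when nothing has been reviewed yet.
        return self.erroneous / self.total if self.total else 0.0


def should_revert(
    baseline: SuggestionStats,
    candidate: SuggestionStats,
    max_regression: float = 0.02,  # hypothetical tolerance
    min_samples: int = 50,         # hypothetical minimum before judging
) -> bool:
    """Revert only if the candidate is clearly worse than the baseline."""
    if candidate.total < min_samples:
        return False  # too little data to make a call
    return candidate.error_rate > baseline.error_rate + max_regression


if __name__ == "__main__":
    baseline = SuggestionStats(total=1000, erroneous=120)
    candidate = SuggestionStats(total=200, erroneous=16)
    print(should_revert(baseline, candidate))
```

The minimum sample count is there so that a handful of early bad suggestions does not trigger a revert before there is enough evidence either way.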

The results were encouraging. The number of erroneous suggestions dropped significantly, and the code quality metrics improved. However, there was still room for improvement. I realized that one of the key issues was the lack of domain-specific knowledge in some parts of the model’s reasoning process. We needed a way to integrate more specialized knowledge into our models.

This led me to dive back into some research papers on multi-modal learning and domain adaptation techniques. The goal was to find ways to train the LLMs on specific domains, like different programming languages or frameworks, so they could better understand context-specific nuances.

As I sat there late into the night, tweaking and testing, I couldn’t help but feel a mix of excitement and frustration: excitement because we were making real progress in how AI assists engineers, and frustration because this kind of debugging was far from glamorous and often required deep technical insight rather than just pushing buttons.

By the time morning rolled around, our LLM had stabilized enough to go back into production. Developers reported a noticeable improvement, and I was proud that we were taking these incremental steps toward smarter, more reliable AI assistants in the workplace.

This experience underscored for me just how much work goes into making even the most seemingly simple tools work reliably. As an engineer navigating this era of AI-native tooling, it’s a constant reminder that while the technology is incredible, it still requires human insight and ingenuity to get right.

In the end, it was just another day in tech—full of challenges and rewards, all wrapped up in a neat LLM debugging session.