November 10, 2008 - Debugging the Cloud

November 10, 2008 seemed like a normal enough day, yet it marked a turning point in my career, at a moment when the tech industry as a whole was shifting.

Earlier that year, GitHub had launched with a bang, promising to revolutionize code collaboration. The iPhone SDK was just starting to gain traction, and Hadoop was slowly making its way into big data circles. Cloud computing was still a buzzword for many, but AWS EC2 and S3 were proving their worth in the enterprise. Agile/Scrum was becoming the de facto standard for project management, and I found myself deep in the trenches of a project that demanded both technical expertise and organizational change.

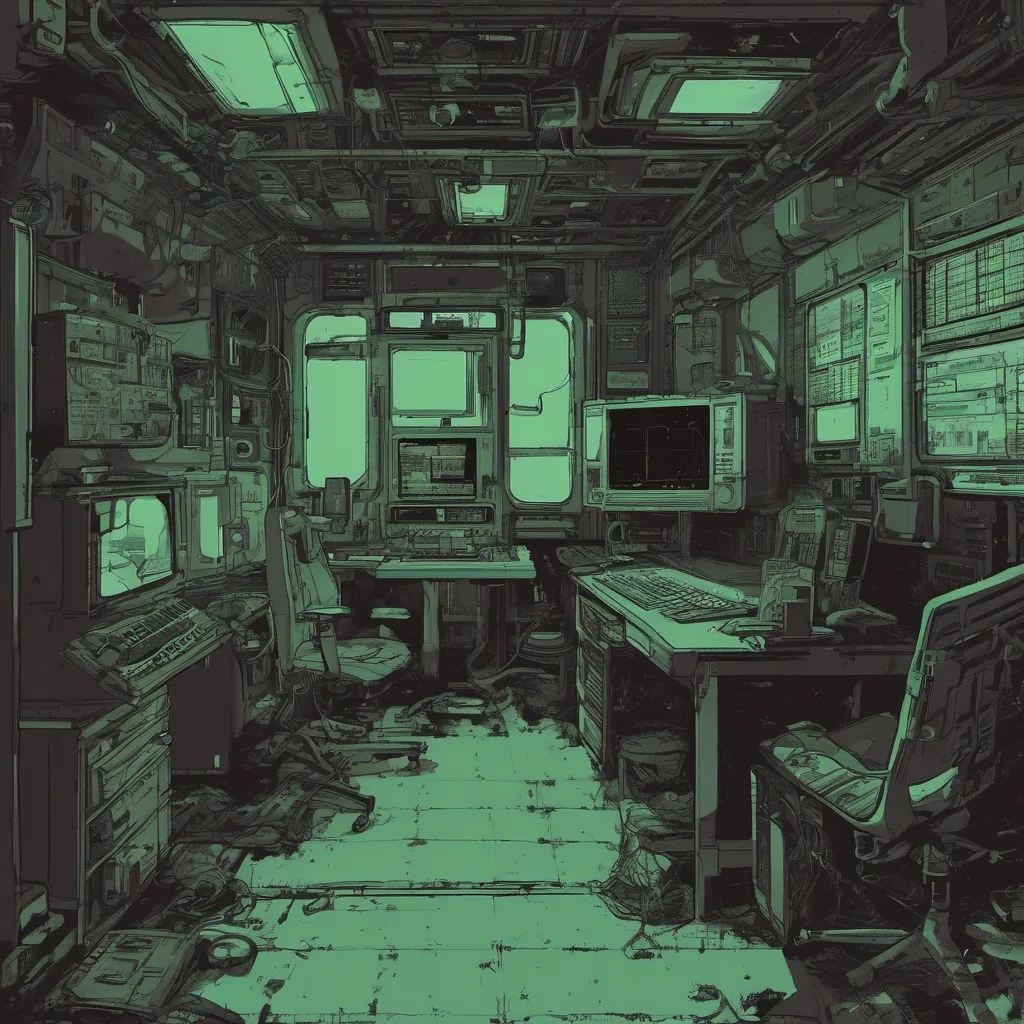

I remember sitting in my office at 8 AM, staring at my screen as our application went down. “This can’t be happening,” I thought to myself, but it was already too late. Users were flooding Twitter with complaints about downtime. The team scrambled—developers, ops, and product managers all converging on the monitoring dashboard.

We quickly identified a bottleneck in one of the EC2 instances running our critical backend service. AWS had been under scrutiny for its reliability ever since the S3 outages earlier that year. We knew we needed to get this fixed fast, but there was also an underlying tension about whether cloud-based infrastructure could truly be as robust as traditional colocation.

I dove into the logs, looking for any anomalies. The culprit was a misconfigured autoscaling setup: instances were being launched and terminated faster than they should have been, which caused performance problems and, eventually, service degradation. I made the necessary adjustments and watched as the metrics started to stabilize. Relieved sighs echoed through the office as we pushed the changes live.
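To make that failure mode concrete, here is a minimal Python sketch of how a cooldown period keeps a scaling loop from reacting to every swing in load. It is illustrative only, not the configuration from that day: the thresholds, the `ScalingPolicy` class, and the `decide` and `simulate` helpers are all hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    # All values below are hypothetical, chosen only for illustration.
    scale_out_above: float = 0.75  # CPU fraction above which we add an instance
    scale_in_below: float = 0.30   # CPU fraction below which we remove one
    cooldown_seconds: int = 300    # minimum time between two scaling actions
    min_instances: int = 2
    max_instances: int = 10


def decide(policy, cpu, instances, now, last_action_at):
    """Return the change in instance count (-1, 0, or +1) for one evaluation."""
    # Inside the cooldown window: hold steady so the last change can take effect.
    if last_action_at is not None and now - last_action_at < policy.cooldown_seconds:
        return 0
    if cpu > policy.scale_out_above and instances < policy.max_instances:
        return 1
    if cpu < policy.scale_in_below and instances > policy.min_instances:
        return -1
    return 0


def simulate(policy, samples, instances=2):
    """Replay (timestamp, cpu) samples and return the scaling actions taken."""
    actions = []
    last_action_at = None
    for now, cpu in samples:
        delta = decide(policy, cpu, instances, now, last_action_at)
        if delta != 0:
            instances += delta
            last_action_at = now
            actions.append((now, delta))
    return actions


# CPU load that swings every minute, the kind of signal that causes flapping.
samples = [(t * 60, 0.9 if t % 2 == 0 else 0.1) for t in range(10)]
no_cooldown = simulate(ScalingPolicy(cooldown_seconds=0), samples)
with_cooldown = simulate(ScalingPolicy(cooldown_seconds=300), samples)
print(len(no_cooldown), len(with_cooldown))  # prints "10 2"
```

With no cooldown, the loop acts on all ten samples and the fleet never settles. With a five-minute cooldown, the same ten minutes of noisy load produce only two actions. The trade-off is slower reaction to genuine spikes, so the window has to be tuned against how long a new instance takes to start serving traffic.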

But just as we thought the storm had passed, another front emerged: a sudden spike in traffic from a popular social media site that was linking to one of our blog posts. Our app wasn’t optimized for this kind of traffic surge, and it quickly overloaded our servers. I knew immediately what the short-term fix was: vertical scaling on AWS, moving the service to larger instance types. That still left the question of a long-term solution.

The cloud vs. colo debate was heating up in tech circles, with many arguing that the cloud offered more flexibility and cost savings while others clung to the perceived stability of colocation centers. For us, the reality was somewhere in between—we needed a hybrid approach that combined the best of both worlds.

This incident highlighted the challenges we faced as engineers trying to keep up with the rapid pace of technological change. While tools like AWS were powerful, they required a deep understanding of their nuances and limitations. We couldn’t afford to rely on magic; every line of code and configuration mattered, and we needed to be ready to adapt quickly when things went wrong.

As I sat back in my chair that evening, watching the logs stabilize again, I felt both exhausted and invigorated. The tech landscape was evolving so rapidly that it often felt like we were just trying to keep up. But this experience taught me something invaluable: resilience is key in this industry. No matter how well you plan or how much you know, Murphy’s Law will always find a way to assert itself.

So here’s to November 10, 2008—the day I learned not only about the bugs and challenges of cloud computing but also about the importance of staying adaptable and vigilant in a rapidly changing world.