$ cat post/the-prod-deploy-froze-/-the-load-average-climbed-alone-/-the-cron-still-fires.md

the prod deploy froze / the load average climbed alone / the cron still fires

Title: February 10, 2014 - Docker Fever and the Microservices Debacle

Today was a day filled with Docker fever, and my team went through yet another microservices argument. I decided to write this down while it's fresh in my mind.

I woke up to all sorts of chatter about Docker on Hacker News. The containerization buzz seemed to be everywhere, and people were already predicting that containers would take over the world within a year or two. Little did they know what was really going through our team's minds.

Our engineering department had been debating microservices for months. One camp wanted to go all in and break our monolithic beast into a collection of small services. The other side argued that doing so would just add complexity and overhead. I found myself in the latter camp, but sometimes felt like the odd one out. The argument was passionate on both sides, with valid points from each.

In my previous project, we had tried to build a service-oriented architecture using the 12-factor app methodology. It worked well for small teams, but as our projects grew, the overhead of managing multiple services became daunting. The promised benefits of microservices, such as better scalability and easier deployment, seemed too good to be true once large-scale operations entered the picture.

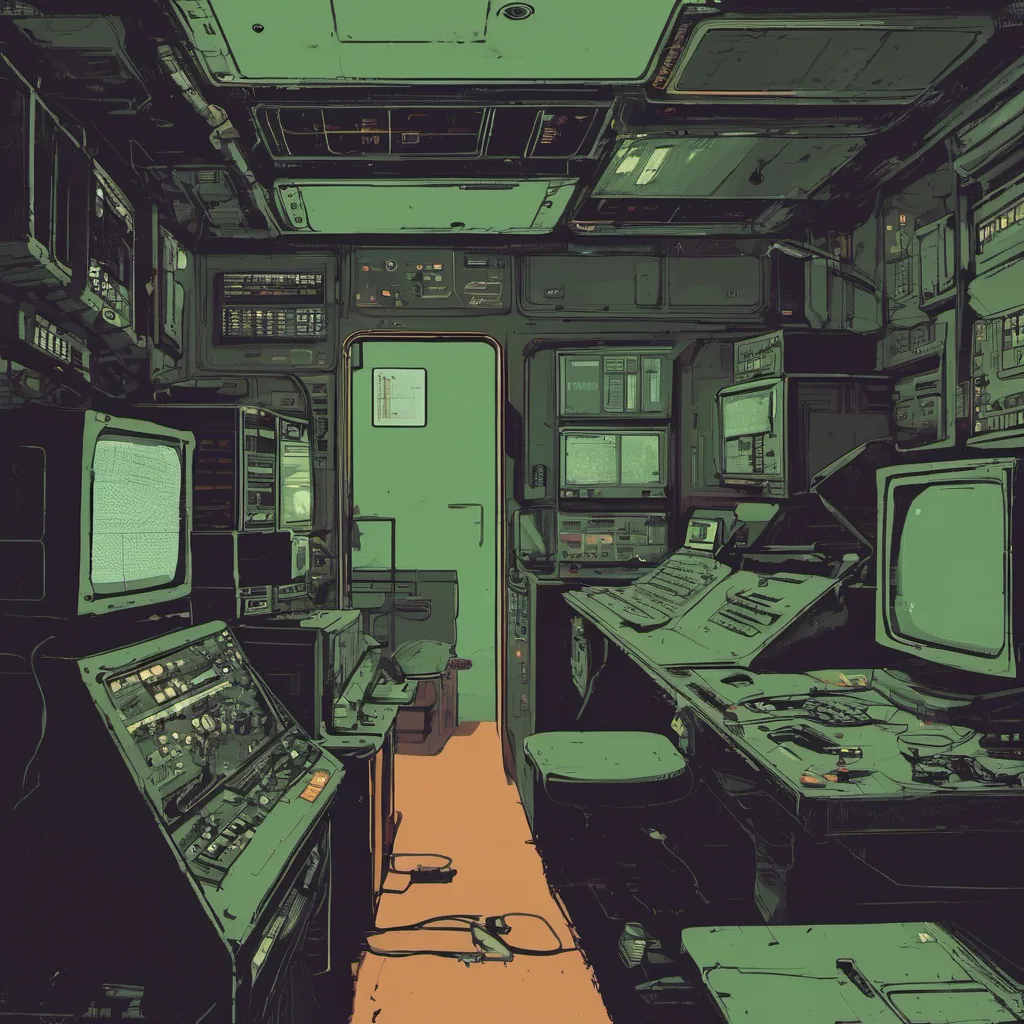

Today, we were discussing another project that required a robust, scalable solution. Our dev lead proposed using Docker containers for rapid prototyping and easy testing. I had mixed feelings about the suggestion. On one hand, Docker did offer a lot of flexibility; on the other, we needed to ensure that our production environment didn’t become a chaotic mess of isolated services.

We decided to give it a shot for this project. Each service would run in its own container and talk to the others over APIs. That way, we could scale individual components as needed and keep the services better isolated from one another. The trade-off was the usual set of challenges: container orchestration, network configuration, and keeping environments consistent.

After our meeting, I spent some time setting up a basic Docker environment for testing. It was a bit clunky at first, but the simplicity of typing `docker run` to start a container was hard to argue with. As I set things up, I couldn't help but think about the complexity that comes with managing many containers at once.

Later in the day, I joined a quick standup where we discussed some recent issues. One of our services had failed because of an unexpected configuration change in another service. This was exactly what we feared: inter-service communication breaking down because of a misconfiguration. We spent half an hour figuring out which environment had the wrong setting, a stark reminder that microservices come with their own set of challenges.
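Hunting for that mismatch by hand is the kind of check that is easy to script. Here is a minimal Python sketch, assuming each environment's settings can be loaded into a flat dictionary of strings. The environment names, the keys `ORDERS_API_URL` and `TIMEOUT_S`, and the `diff_configs` helper are all hypothetical, not what we actually ran.

```python
from typing import Dict

# Hypothetical shape: one flat {setting: value} dict per environment.
# Where these come from (env vars, files) is an assumption left open here.
Config = Dict[str, str]

MISSING = "<missing>"


def diff_configs(envs: Dict[str, Config]) -> Dict[str, Dict[str, str]]:
    """Return every setting whose value differs, or is absent, across environments.

    Maps each divergent key to {environment_name: value}; MISSING marks an
    environment that does not define the key at all.
    """
    all_keys = set()
    for cfg in envs.values():
        all_keys.update(cfg)

    divergent: Dict[str, Dict[str, str]] = {}
    for key in sorted(all_keys):
        values = {name: cfg.get(key, MISSING) for name, cfg in envs.items()}
        # More than one distinct value means at least one environment disagrees.
        if len(set(values.values())) > 1:
            divergent[key] = values
    return divergent


if __name__ == "__main__":
    envs = {
        "dev": {"ORDERS_API_URL": "http://orders:8080", "TIMEOUT_S": "5"},
        "staging": {"ORDERS_API_URL": "http://orders:8080", "TIMEOUT_S": "5"},
        "prod": {"ORDERS_API_URL": "http://orders:9090", "TIMEOUT_S": "5"},
    }
    for key, values in diff_configs(envs).items():
        print(key, values)
```

Only `ORDERS_API_URL` would be reported for this sample data, since `TIMEOUT_S` agrees everywhere. Running a check like this before a deploy would not prevent misconfiguration, but it turns "which environment is wrong?" into a one-line answer.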

As I left the office for the day, my mind was still swirling with thoughts about Docker, microservices, and the trade-offs we make as engineers. The excitement around new technologies is hard to ignore, but sometimes the real work is in balancing those shiny tools against the complexity they can introduce.

Tomorrow brings more debates, more code, and hopefully, a better understanding of how to best utilize these powerful tools. For now, I’ll hit the hay, knowing that whatever solution we choose will be part of an ongoing journey.

Goodnight, Docker.