
Xen Nightmares: The Day My Server Fell Apart

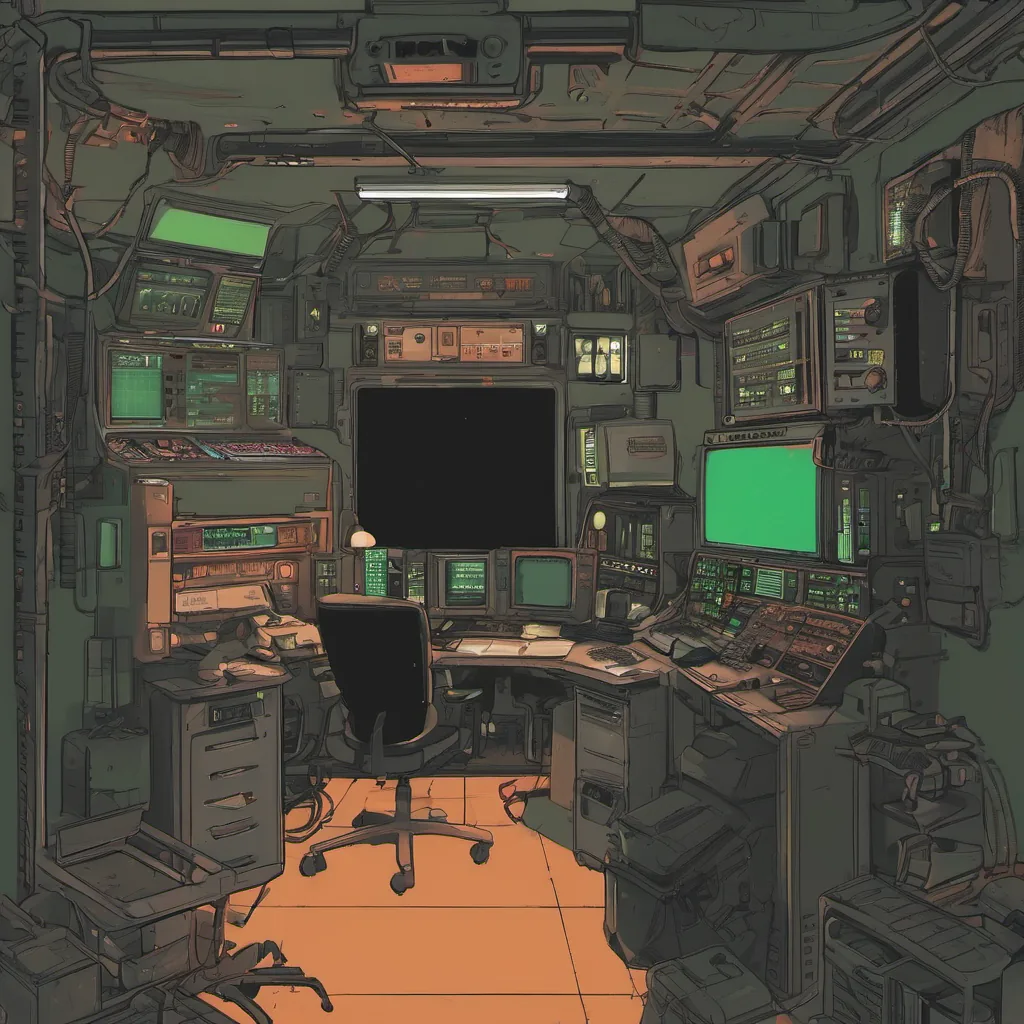

April 10, 2006. A day that will live in infamy for me as a system admin. I had just finished a late night of crunching numbers and running scripts on my work machine when the alarm clock went off to welcome another early morning. My phone buzzed with an alert: “Server 101 is down.” Server 101 was one of our production servers, a reliable beast that had been serving us well for years. But that day? It wasn’t playing by the rules.

I logged in and quickly realized this wasn’t just any old outage. It felt like a full-on meltdown. Disk utilization was through the roof and processes were running wild. I fired up `top` and watched CPU usage sit at 100% while memory usage kept ballooning. It was clear: something had gone terribly wrong.

The first thing that came to mind was a misconfigured cron job or some rogue script eating resources. I dove into `/var/log/cron` looking for suspicious activity but couldn’t find anything out of the ordinary. Then it hit me, and I switched my focus to the Xen hypervisor logs. Xen, our chosen virtualization technology, had been a lifesaver during the days of over-provisioned physical servers, but that day it seemed like a demon.

I ran `xm list` and saw multiple VMs with high memory consumption, but no immediately obvious culprit. I decided to reboot Server 101 to see if that would clear things up. After a few moments of intense waiting, the console screen flashed back to life. My relief was short-lived: while booting from our standard initrd, the server froze on a critical module load. This wasn’t just about resource exhaustion; something fundamental had failed.
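Eyeballing `xm list` output at that hour is error-prone, so here is a minimal sketch of how the same check could be scripted. It assumes the usual six-column layout (name, ID, memory in MiB, VCPUs, state, CPU time); the sample text, domain names, and numbers are made up for illustration, and `parse_xm_list` and `top_by_memory` are hypothetical helper names, not part of Xen.

```python
def parse_xm_list(output):
    """Parse `xm list`-style text into a list of dicts, one per domain."""
    domains = []
    for line in output.strip().splitlines()[1:]:  # skip the header row
        # Split from the right so the domain name stays in one piece.
        parts = line.rsplit(None, 5)
        if len(parts) != 6:
            continue  # ignore lines that do not match the assumed layout
        name, dom_id, mem_mib, vcpus, state, cpu_time = parts
        domains.append({
            "name": name.strip(),
            "id": int(dom_id),
            "mem_mib": int(mem_mib),
            "vcpus": int(vcpus),
            "state": state,
            "time_s": float(cpu_time),
        })
    return domains


def top_by_memory(domains, n=3):
    """Return the n domains with the largest memory allocation."""
    return sorted(domains, key=lambda d: d["mem_mib"], reverse=True)[:n]


# Made-up sample output; names and numbers are illustrative only.
SAMPLE = """\
Name                              ID Mem(MiB) VCPUs State  Time(s)
Domain-0                           0      512     2 r-----   4821.3
web01                              3     1024     1 -b----    912.7
db01                               4     2048     2 r-----   3310.0
"""

if __name__ == "__main__":
    for dom in top_by_memory(parse_xm_list(SAMPLE)):
        print(dom["name"], dom["mem_mib"])
```

On a real host the text would come from running the command itself rather than from a sample string. Memory allocation alone will not name a culprit, as that day showed, but a sorted list is a faster starting point than a raw table.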

I called a meeting with the team and walked them through what I had found so far. We spent hours going over logs and configs, but nothing pointed to an obvious fix. The consensus was that we needed to get this server back online quickly or risk taking down our entire service. So we decided on a risky strategy: boot into single-user mode and manually start services one by one.

I spent the next few hours doing exactly that—starting Apache, MySQL, and other critical services. It wasn’t pretty; some processes crashed and needed to be restarted multiple times before they stayed up. By dawn, we had Server 101 back online, but the stress was palpable.
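The start-it, watch-it, start-it-again routine is easy to get wrong by hand. Here is a rough sketch of the same loop in code, not what we actually ran that day. The `start` and `is_running` callables are placeholders you would wire to whatever init scripts and process checks your host uses, and the retry count and settle time are arbitrary assumptions.

```python
import time


def start_with_retries(name, start, is_running, attempts=3,
                       settle_seconds=5.0, sleep=time.sleep):
    """Try to start one service.

    Returns the attempt number on which the service stayed up,
    or 0 if it never did.
    """
    for attempt in range(1, attempts + 1):
        start(name)
        sleep(settle_seconds)  # give the process time to crash if it is going to
        if is_running(name):
            return attempt
    return 0


def bring_up(services, start, is_running, **kwargs):
    """Start services in order, stopping at the first one that will not stay up."""
    results = {}
    for name in services:
        results[name] = start_with_retries(name, start, is_running, **kwargs)
        if results[name] == 0:
            break  # later services likely depend on this one; stop and investigate
    return results
```

Stopping at the first failure is a deliberate choice in this sketch: starting the web tier on top of a database that keeps crashing only adds noise to the logs.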

As I looked at the logs once more, something caught my eye: a warning from our monitoring system about a failed backup job for another server. It turned out that while Server 101 was in its death throes, this other critical task had been quietly failing. Our alerting had let one loud failure drown out a quiet one, and it needed to be able to surface both at once.

In the days following, I wrote scripts to automate backups and created more robust monitoring around our critical services. We started using Nagios to keep a closer eye on everything and added more redundancy to our Xen configurations. It was a steep learning curve, but it made us stronger as an ops team.
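To give a flavor of that kind of check, here is a minimal sketch of a backup-freshness test written in the style of a Nagios plugin. Nagios plugins report through their exit status (0 OK, 1 WARNING, 2 CRITICAL, 3 UNKNOWN) plus a one-line message. Everything else here is an assumption for illustration: the function name, the 26 and 50 hour thresholds, and the idea that a backup's health can be judged from a file's modification time.

```python
import os
import time

# Exit codes defined by the Nagios plugin convention.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3


def check_backup_age(path, warn_hours=26.0, crit_hours=50.0, now=None):
    """Return (exit_code, message) based on how old the file at `path` is."""
    now = time.time() if now is None else now
    try:
        mtime = os.path.getmtime(path)
    except OSError as exc:
        # A missing or unreadable backup is treated as a hard failure.
        return CRITICAL, "BACKUP CRITICAL - cannot stat %s: %s" % (path, exc)

    age_hours = (now - mtime) / 3600.0
    if age_hours >= crit_hours:
        return CRITICAL, "BACKUP CRITICAL - %s is %.1f hours old" % (path, age_hours)
    if age_hours >= warn_hours:
        return WARNING, "BACKUP WARNING - %s is %.1f hours old" % (path, age_hours)
    return OK, "BACKUP OK - %s is %.1f hours old" % (path, age_hours)


def main(argv):
    """Plugin entry point: print the status line and return the exit code."""
    if len(argv) != 2:
        print("BACKUP UNKNOWN - usage: check_backup_age.py <path>")
        return UNKNOWN
    code, message = check_backup_age(argv[1])
    print(message)
    return code

# To run it as a plugin, end the script with:
#     import sys; sys.exit(main(sys.argv))
```

The warning threshold sits a little past 24 hours so that a nightly job running slightly late does not page anyone, while a job that has missed two nights in a row goes critical.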

Looking back at that day, I can’t help but chuckle at how far we’ve come since those early days of Xen and the LAMP stack. The sysadmin role has evolved so much in just a few short years—scripting and automation are now table stakes. But even with all the tools at our disposal, there’s still something undeniably raw about staring down an out-of-control server.

So here’s to all those who’ve faced Xen nightmares: we’re in this together, and we’ll figure it out. That’s what makes us ops people—resilient, resourceful, and always learning.