Y2K Glitch? More Like Y2K+10 Glimpse

The Year 2000: An Ops Manager’s Nightmare

April 10, 2000. I remember it like it was yesterday. The dot-com bubble had only just begun to deflate (the NASDAQ had peaked a month earlier), and everyone had a mix of hope and skepticism about what would come next. Linux was making serious inroads in the server room, we were still cleaning up lingering Y2K issues, and discussions about IPv6 were just starting to gain momentum.

I was working as an operations manager at a small startup, trying to keep our little server farm running smoothly amidst all the chaos. Our main stack consisted of Apache for serving web content, Sendmail for email, and BIND for DNS management. We were also wrestling with early VMware virtualization technology, which promised to change how we managed hardware but was still quite buggy.

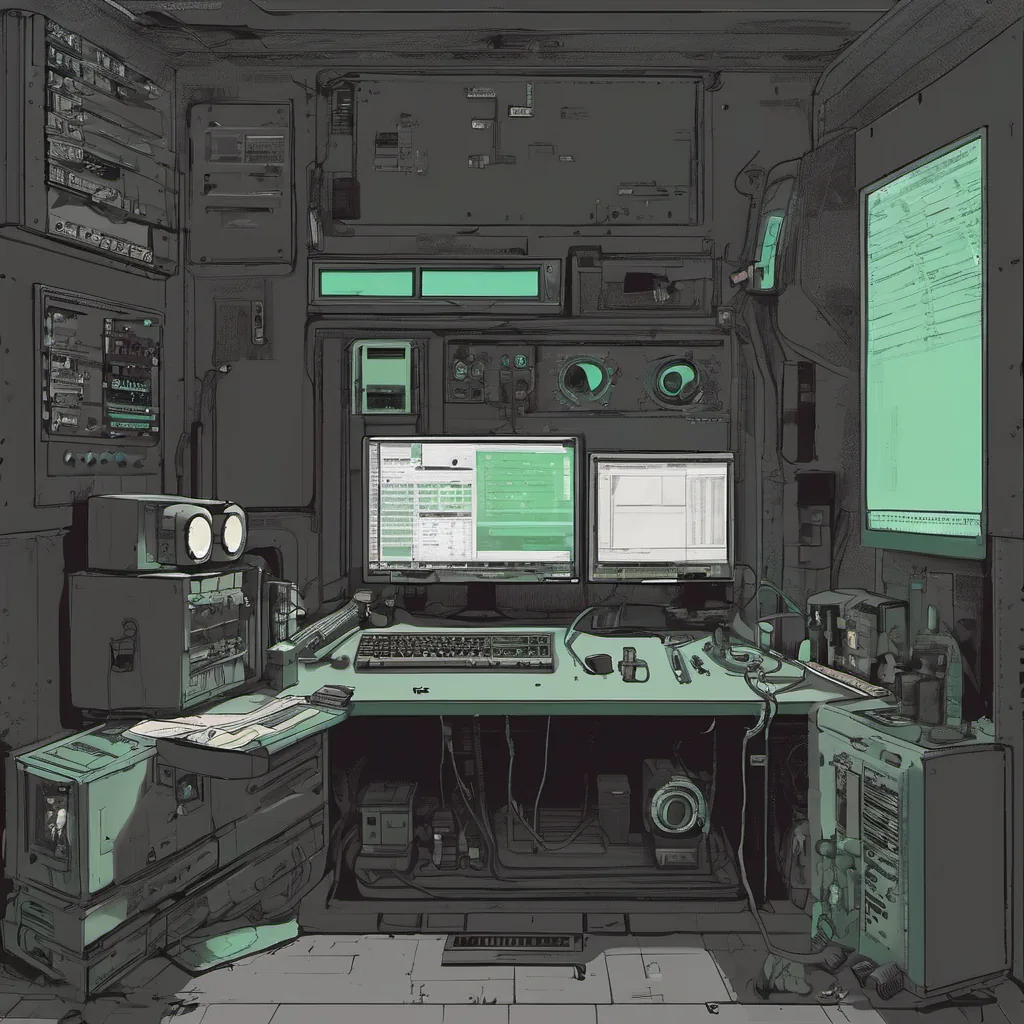

That Monday, our production server just up and died. I found myself staring at a screen full of error messages from Sendmail, one of the most critical services for our application. The logs were telling me that the mail service had gone down because of an obscure bug related to file permissions.

I remember feeling like a bit of a dunce. We had been running this setup for months, and I was supposed to know better. But there it was: our entire infrastructure grinding to a halt over one small detail we hadn’t accounted for.

After a few tense hours of digging through the logs and experimenting with different configurations, I finally found the problem. An update had been applied during our maintenance window, but the script that managed file permissions hadn’t run properly afterward. That left Sendmail without the read/write access it needed to its log files.

I fixed it quickly by manually adjusting the permissions and restarting the service. Our server came back online just as we were starting our Monday meeting. The relief was palpable; I could almost taste the satisfaction of a job well done, but there was also that nagging feeling that this wasn’t an isolated incident.
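In hindsight, a small post-maintenance check could have flagged the problem before the mail service fell over. The following is a minimal sketch in Python, not the script we actually ran back then. The function name `files_missing_owner_rw` is made up for illustration, and it only inspects the owner’s read/write permission bits; it assumes the service runs as the file’s owner and does not verify ownership.

```python
import os
import stat


def files_missing_owner_rw(paths):
    """Return the paths whose owner lacks read or write permission.

    Paths that do not exist are reported as problems too, since a
    service cannot use a file that is not there.
    """
    required = stat.S_IRUSR | stat.S_IWUSR  # owner read + owner write bits
    problems = []
    for path in paths:
        try:
            mode = os.stat(path).st_mode
        except FileNotFoundError:
            problems.append(path)
            continue
        # Both bits must be set; otherwise flag the file.
        if mode & required != required:
            problems.append(path)
    return problems
```

Run against the list of files a service depends on right after a maintenance window, an empty result means the owner bits look sane, and anything returned deserves a look before the service is restarted.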

In those early days, managing servers felt like herding cats. Every update brought new challenges, and keeping everything running smoothly required constant vigilance. We were all still learning on the job, navigating a rapidly changing landscape where tools and best practices were evolving faster than any of us could keep up with them.

As I sat back after fixing our little crisis, I thought about how far we had come since the early days of Linux and Apache. It was amazing to see such open-source projects gain mainstream acceptance, but it also felt like a reminder that no matter how much we relied on these tools, they were still just another layer in a complex system.

In hindsight, this event was more than just an operational hiccup. It was a microcosm of the broader tech industry, and it taught me valuable lessons about resilience and about the importance of detailed documentation and testing before making changes to production systems.

Those early days were filled with late nights, debugging sessions, and plenty of self-deprecation. But they also laid the groundwork for the operational practices that define today’s cloud-based architectures. Looking back, I’m grateful for those challenges because they shaped me into a better engineer.

In the years since, technology has moved on, but some things remain the same: the importance of thorough testing, the value of open-source communities, and the necessity of maintaining vigilance in our operations.

So here’s to 2000—the year that taught us so much about what it means to manage infrastructure in a rapidly changing world.