$ cat post/stack-trace-in-the-log-/-a-rollback-took-the-data-too-/-the-merge-was-final.md

stack trace in the log / a rollback took the data too / the merge was final

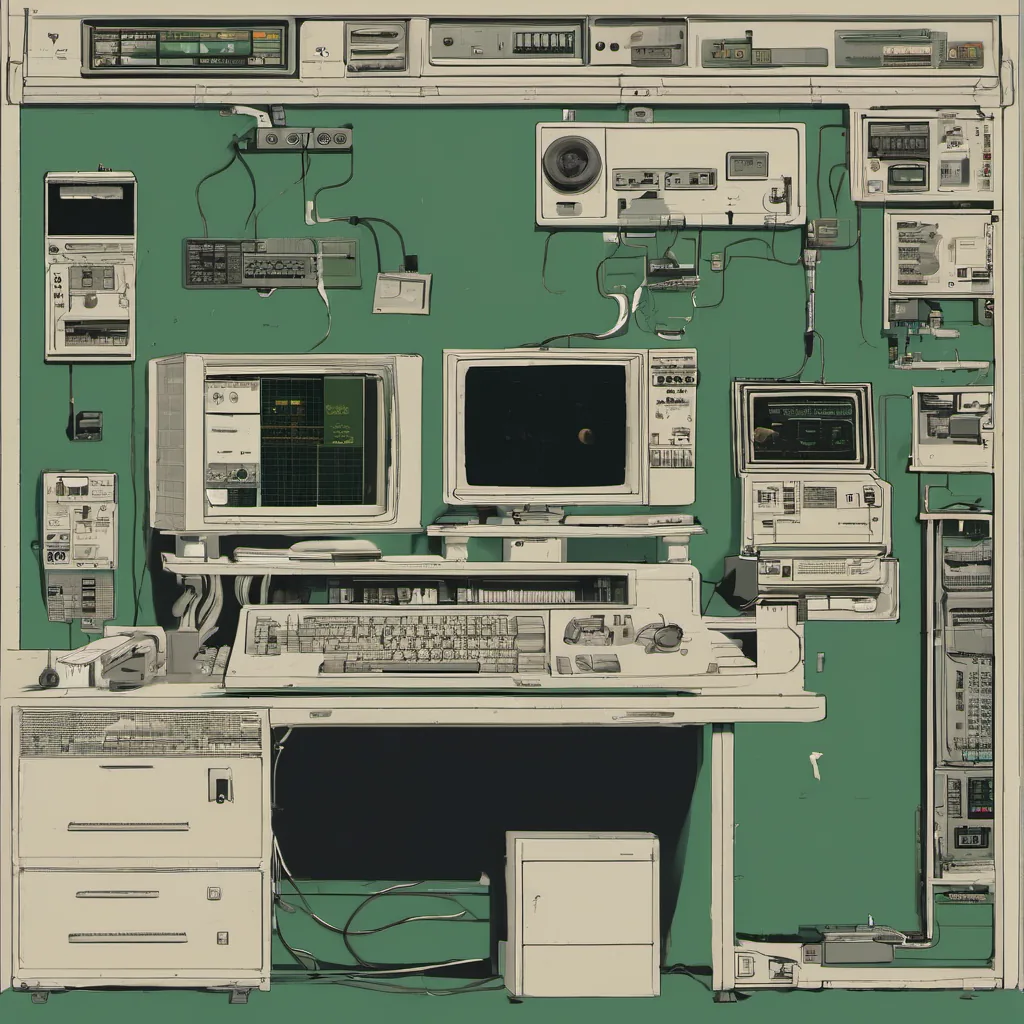

Title: Config Management Wars and the Messy Reality of Scaling

July 9, 2012 was just another day in my never-ending battle with technology. I spent the morning on a particularly stubborn server issue that seemed to defy logic. It wasn't until lunchtime that I realized the chaos wasn't isolated; it was part of something bigger, the movement people were starting to call DevOps.

The Config Management Wars

Back then, Puppet and Chef were duking it out for supremacy in the configuration management space. My team had chosen Puppet, but every other conversation felt like a religious debate. I remember arguing about whether Puppet’s idioms were better or worse than Chef’s. The truth is, both tools could drive you mad with their quirks and limitations.

I spent a good part of the day untangling a nasty configuration issue that had crept in during an update. Puppet kept complaining about a file it believed should be under its management but wasn't. After hours of tracing dependencies and digging through logs, I found the culprit: a stale cache that hadn't been cleared properly.

The fix was to clear out the stale cache and run puppet apply several times, flushing out the old configuration and reapplying the new one. It was tedious, but eventually the server came back online without further issues.
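The stale-cache failure is easier to see in a toy model. This Python sketch is purely illustrative and assumes a drastically simplified world. Desired state is a dict mapping file paths to contents, and the "disk" is another dict. The names `plan`, `apply_state`, the two catalogs and the file paths are all hypothetical; this is not Puppet's internals or API.

The sketch shows three things. Applying a stale cached catalog reverts a file and never manages a file that was added in the update. Applying the fresh catalog converges. A second run then changes nothing.

```python
# Toy model of declarative configuration management (hypothetical; NOT Puppet internals).
# The "filesystem" is a dict of path -> contents; a "catalog" is the desired state.

Files = dict[str, str]


def plan(desired: Files, actual: Files) -> Files:
    """Return only the paths whose actual contents differ from the desired contents."""
    return {path: body for path, body in desired.items() if actual.get(path) != body}


def apply_state(desired: Files, actual: Files) -> Files:
    """Converge `actual` toward `desired` in place and return what changed."""
    changes = plan(desired, actual)
    actual.update(changes)
    return changes


# Hypothetical catalogs: the cached copy predates the update and describes the old world.
stale_catalog: Files = {"/etc/app.conf": "workers=4"}
fresh_catalog: Files = {"/etc/app.conf": "workers=8", "/etc/app.d/extra.conf": "debug=false"}
disk: Files = {"/etc/app.conf": "workers=8"}  # part of the update already landed

# The stale catalog reverts app.conf and knows nothing about extra.conf,
# so a file that should be managed simply isn't.
print(apply_state(stale_catalog, disk))  # {'/etc/app.conf': 'workers=4'}

# Discarding the stale copy and applying the fresh catalog converges...
print(apply_state(fresh_catalog, disk))
# ...and a second run is a no-op, the idempotence these tools promise.
print(apply_state(fresh_catalog, disk))  # {}
```

That empty second run is what makes it safe to rerun apply until things settle. The hard part in practice is noticing that the cache is stale in the first place.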

The Real World Hits You Hard

On my way home, I couldn't help thinking that these debates felt like academic exercises compared to the real-world challenges we faced every day. Our systems needed to be reliable 24/7, and sometimes a small typo in a Puppet manifest could bring an entire service down.

One of our other services had been set up with Chef, and it had problems of its own. Chef's Windows support still felt immature to us, so we didn't use it on our Windows hosts. That left us with a hodgepodge of scripts that worked but were hard to maintain.

Learning from the Chaos

The chaos didn't stop at configuration management tools, either. With the rise of cloud services like AWS and Heroku, our infrastructure was becoming more dynamic. Continuous delivery practices were starting to take hold, but implementing them across multiple environments was proving difficult.

One of the biggest lessons I learned that month wasn't about a specific tool or technology; it was about the importance of clear communication within a team. Misunderstandings between developers and ops wasted hours. We argued about why something "should" work based on what someone had said, instead of looking at the actual code or logs.

The Industry Landscape

Outside my day-to-day struggles, the broader tech landscape was hard to ignore. Netflix was leading the charge with Chaos Monkey, deliberately killing instances in production to prove its systems could survive failure. That felt like a breath of fresh air compared to the rigid and often brittle systems we had in place. OpenStack was gaining momentum, promising more control over our infrastructure, though it came with its own set of complexities.

Salesforce's acquisition of Heroku a year and a half earlier made me wonder about the future of managed services versus DIY solutions. And let's not forget the hype around NoSQL: in every other conversation, someone was trying to sell you on Cassandra or MongoDB as a quick win.

Conclusion

Looking back, July 2012 was a period of intense activity and change in tech. The Config Management Wars were real, but so was the constant struggle to keep our services running smoothly amidst all this chaos. It’s not always glamorous, but it’s what keeps me going—figuring out how to make sense of a messy world.

Until next time, Brandon