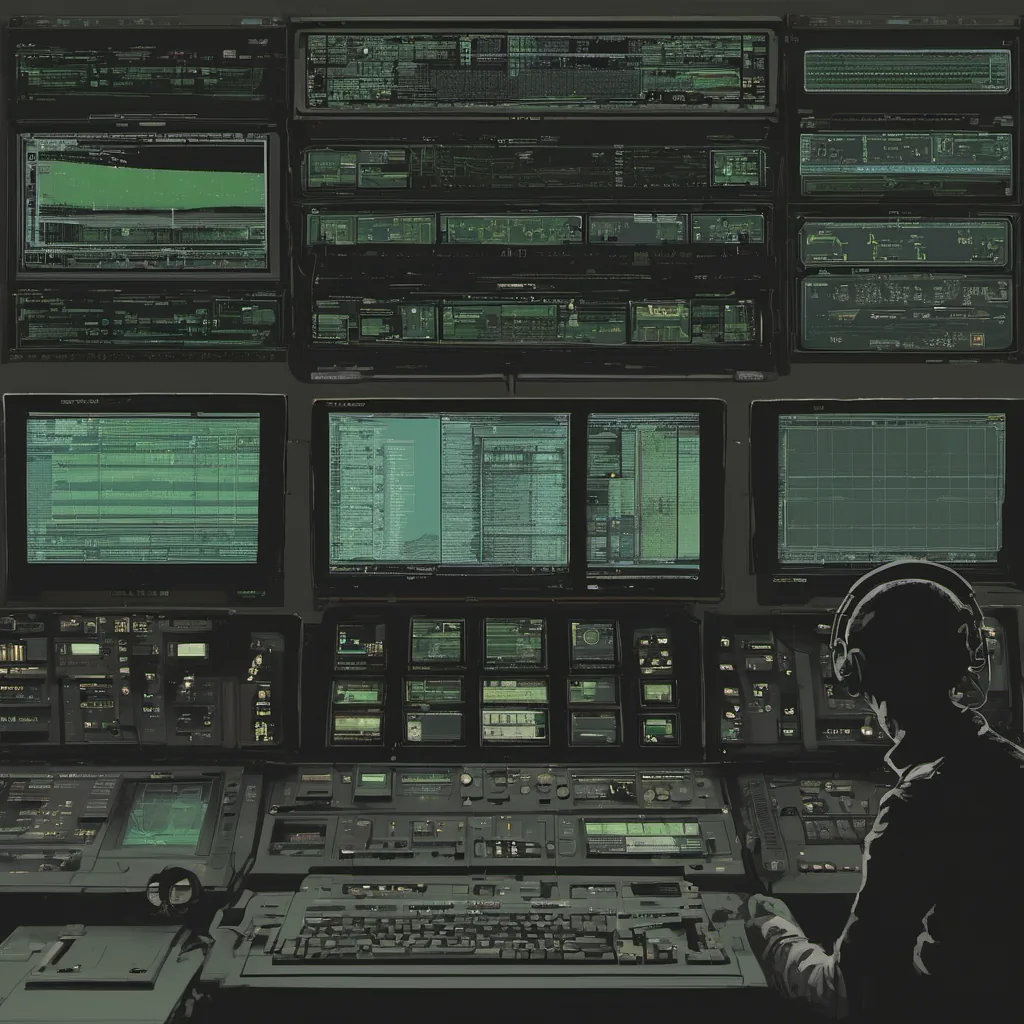

Debugging the DevOps Dilemma with LLMs: An AI Copilot's Perspective

February 9, 2026. Another day, another AI copilot debugging session. This time it’s a real doozy: a mysterious service degradation that had all the makings of an LLM-assisted ops incident. Let me walk you through what happened.

The Setup: A DevOps Ecosystem

We’ve been living in an era where AI copilots from companies like Anthropic and StabilityAI are woven into our day-to-day operations. Every platform team now manages not just its infrastructure but also the AI-infused pipelines that power it. We’re even starting to see eBPF in production for its performance benefits.

But with great AI comes a whole new set of challenges. Today’s issue started when my monitoring alerts went haywire. The alert reported a spike in failed service requests from our application, but there was no obvious pattern and no log entry that pointed to the culprit.

The Initial Investigation

I fired up my LLM copilot and narrated what I saw in the alert. It provided some initial hypotheses: network latency issues, a database bottleneck, or even an unexpected surge in user traffic. But it didn’t give me enough context to make a call without diving deeper.

So, off to the logs we went. I ran kubectl commands, peered into Kubernetes cluster metrics with Prometheus, and checked our application’s error rates with Grafana. Nothing stood out as obvious; there were no clear spikes in CPU usage or memory consumption that could explain the sudden service degradation.
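To make "a spike in failed service requests" concrete, here is a minimal sketch of how such a spike could be picked out of per-interval request counts. It is an illustration only, not the alert rule we actually run: the `find_spikes` helper, the `factor` and `floor` thresholds, and the sample counts are all assumptions made up for this example.

```python
from statistics import median


def failure_ratio(total: int, failed: int) -> float:
    """Fraction of requests that failed; 0.0 when there was no traffic."""
    return failed / total if total else 0.0


def find_spikes(samples, factor=3.0, floor=0.01):
    """Return indices of intervals whose failure ratio exceeds factor * baseline.

    samples: list of (total_requests, failed_requests), one per interval.
    The baseline is the median ratio, clamped to `floor` so that a
    near-zero baseline does not flag trivial noise.
    """
    ratios = [failure_ratio(total, failed) for total, failed in samples]
    baseline = max(median(ratios), floor)
    return [i for i, ratio in enumerate(ratios) if ratio > factor * baseline]


# Hypothetical per-minute counts: (total, failed)
samples = [(1000, 4), (980, 5), (1010, 3), (990, 120), (1005, 140)]
spikes = find_spikes(samples)  # indices of the intervals that look anomalous
```

A ratio-based check like this says when things went wrong, not why, which is exactly where I was stuck at this point.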

Enter the AI Copilot

Given the complexity of a modern stack and how often alerts like this have tangled root causes, I went back to my LLM copilot for a deeper dive. After I explained what I had done so far, it suggested checking the recent code deployments or looking into the eBPF metrics I hadn’t yet reviewed.

I was hesitant at first. After all, didn’t we just have a hit piece on AI agents published by an aggrieved engineer? But the suggestion was worth checking, so I took its advice and dug into the recent Docker image builds and deployments.

The Revelation

After some back-and-forth with my copilot, it finally suggested checking the Wasm modules in our container images. At first, I thought it was just another wild guess, but as we started to inspect the code, something caught my eye. A few lines introduced in one of the recent builds looked like they could be causing the problem.

The lines looked like this:

    import os

    os.system('rm -rf /')

They turned out to be part of an accidental commit during a merge. It was a small slip-up, but the AI copilot had flagged it because of how recent and unusual that change was in our codebase.
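I don’t know how the copilot decides that a change is "unusual", so treat the following as a crude stand-in rather than a description of its method. The sketch collects every call name used in a baseline set of Python sources and reports calls in a changed file that appear nowhere in that baseline. The `call_names` and `novel_calls` helpers and the sample sources are hypothetical; the code only parses the text with `ast` and never executes it.

```python
import ast


def call_names(source: str) -> set[str]:
    """Collect the dotted name of every call in a piece of Python source."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            # ast.unparse (Python 3.9+) turns the callee back into text,
            # e.g. "os.system" or "print".
            names.add(ast.unparse(node.func))
    return names


def novel_calls(baseline_sources, changed_source):
    """Calls in the changed file that appear nowhere in the baseline."""
    seen = set()
    for src in baseline_sources:
        seen |= call_names(src)
    return sorted(call_names(changed_source) - seen)


# Hypothetical sources, held as strings: parsed only, never run.
baseline = ["import os\nprint(os.getcwd())\n"]
changed = "import os\nprint(os.getcwd())\nos.system('rm -rf /')\n"
flagged = novel_calls(baseline, changed)  # calls the codebase has not used before
```

A never-before-seen call is not necessarily a bad one, so a check like this is only a prompt for a human to look closer.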

The Fix

Once identified, fixing the issue was straightforward—reverting the commit and redeploying the affected service did the trick. But the experience left me reflecting on the value of having these AI tools as copilots. While they might occasionally make wrong calls or suggest irrelevant solutions, they also saved us from overlooking subtle issues that could have led to bigger problems down the line.

Lessons Learned

This episode reinforced a few key lessons:

- Human Oversight is Still Essential: Even with the best AI tools, human judgment and attention are still crucial.

- Security in Development Pipelines: Always double-check your code and ensure that your deployments are free from malicious or accidental changes.

- Continuous Learning for Copilots: As we integrate more AI into our workflows, it’s important to continuously train these tools on new data and edge cases.
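The second lesson can be partly automated. As a minimal sketch, assuming changes arrive as unified diffs, a pre-merge check could scan only the added lines for obviously destructive shell commands. The `DANGEROUS` patterns, the `scan_diff` helper, and the `app/cleanup.py` path below are all made up for illustration; a real deny-list would be broader and tuned per team.

```python
import re

# Illustrative patterns only, not a complete deny-list.
DANGEROUS = [
    # Recursive rm aimed at the filesystem root itself (not at /tmp/... etc.).
    re.compile(r"\brm\s+-\w*[rR]\w*\s+/(?=$|[\s'\"])"),
    # dd writing straight to a device node.
    re.compile(r"\bdd\s+.*\bof=/dev/"),
]


def added_lines(diff_text):
    """Yield (line_number_in_diff, text) for lines added by a unified diff.

    Simplification: any line starting with '+++' is treated as a file header.
    """
    for n, line in enumerate(diff_text.splitlines(), start=1):
        if line.startswith("+") and not line.startswith("+++"):
            yield n, line[1:]


def scan_diff(diff_text):
    """Return (diff_line_number, text) for added lines matching a pattern."""
    findings = []
    for n, text in added_lines(diff_text):
        if any(pattern.search(text) for pattern in DANGEROUS):
            findings.append((n, text.strip()))
    return findings


# Hypothetical diff resembling the accidental commit described earlier.
diff = """\
--- a/app/cleanup.py
+++ b/app/cleanup.py
@@ -1,2 +1,4 @@
 import shutil
+import os
+os.system('rm -rf /')
 shutil.rmtree('/tmp/build')
"""
findings = scan_diff(diff)
```

A gate like this would fail the pipeline when `findings` is non-empty. It catches only the patterns it knows about, which is why the first lesson about human oversight still applies.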

Conclusion

As I sit here, looking back at the logs and the resolved issue, I can’t help but feel a mix of relief and appreciation for having that AI copilot by my side. It’s not just about automating tasks; it’s about augmenting our abilities to make sense of complex systems and quickly identify issues before they become crises.

In this era of AI-native tooling, the role of engineers is changing. We’re not just writing code anymore but managing a vast ecosystem of interdependent services and infrastructure where every decision can have profound implications. The key will be finding that balance between trust in our tools and maintaining the human touch that makes us irreplaceable.

Stay curious, stay vigilant, and keep those AI copilots handy.