$ cat post/the-pager-went-off-/-the-monorepo-grew-too-wide-/-the-wire-holds-the-past.md

the pager went off / the monorepo grew too wide / the wire holds the past

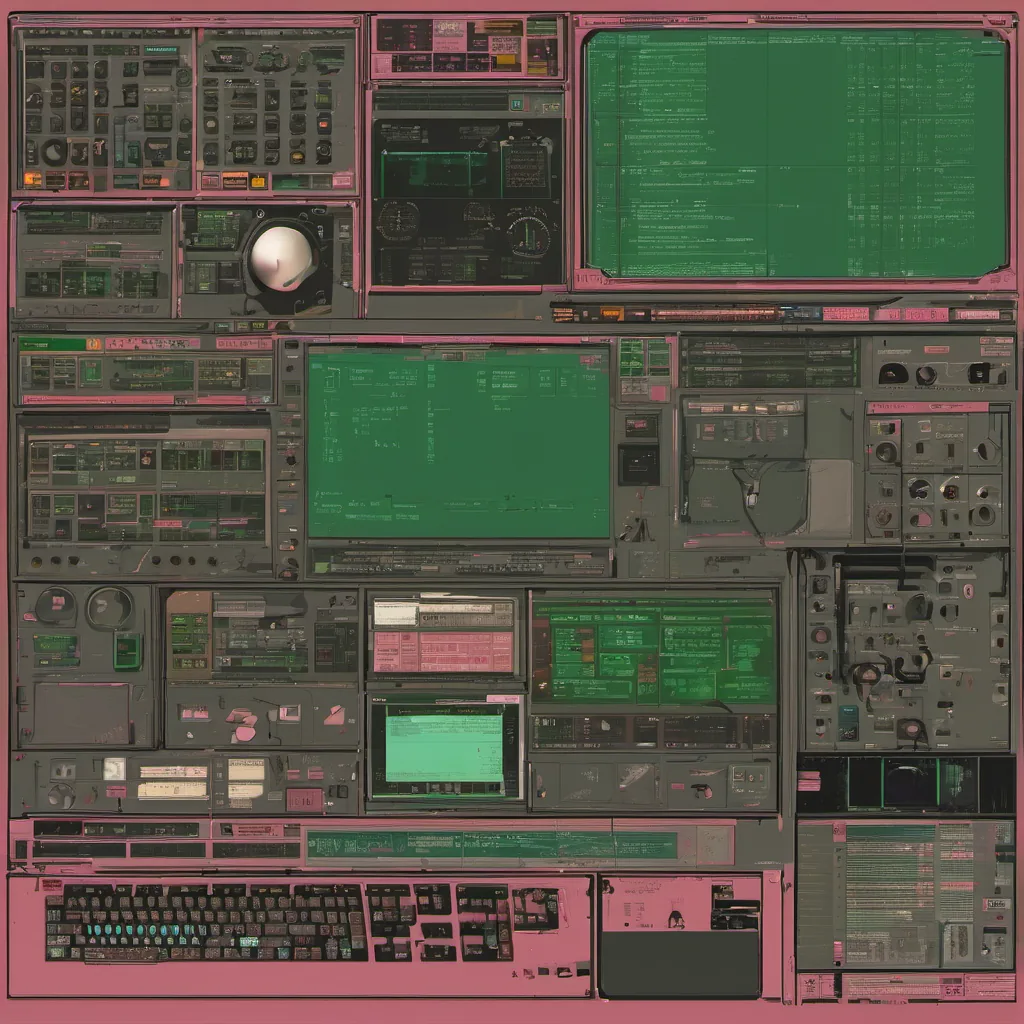

Title: Debugging in the Age of Digg and Firefox

August 9th, 2004 - It’s a Friday afternoon, and I’m sitting in our small office, surrounded by the usual suspects: half-empty cups of coffee, scattered papers, and the faint hum of servers. Today, though, there’s an extra buzz in the air, something that feels like more than just a good Friday.

The tech world is abuzz about Firefox, which is still in preview releases ahead of its 1.0 launch later this year, and the beginnings of what would be known as Web 2.0 are starting to take shape. Sites like Digg and Reddit are just around the corner. In this nascent era of open-source stacks and rapid development cycles, I find myself in the thick of it all.

Today’s challenge? A critical issue with our application that’s causing severe performance drops during peak traffic times. The team has been working non-stop to identify the culprit, and we’ve narrowed it down to a specific piece of code. But here’s the kicker: the code in question is a Perl script that runs every few minutes in the background.

Now, you might think Perl scripts are a relic from the past, but they’re still very much alive and well. In our stack, which includes Apache, MySQL, and Perl, the Perl script handles some complex business logic that’s crucial for user interactions. We use it to fetch data from multiple sources, process it, and then store the results in a cache.

The issue is that this script takes an unusually long time to run on certain datasets, causing a backlog and ultimately leading to server timeouts. The pressure is on because every second counts; we need to figure out what’s going wrong and fix it before our customers start noticing.

After some debugging, I realized the problem was in how we were handling database connections. We weren’t closing them properly, which meant that over time, more and more connections would stay open, eventually hitting the database’s connection limit, at which point new work stalls or times out. It’s a classic resource leak (of connections rather than memory), and one that’s surprisingly easy to miss if you’re not paying close attention.

To fix this, I took a two-pronged approach: optimize the script itself and improve our connection management. The first step was profiling the script with Devel::DProf, the profiler bundled with Perl, which showed us where the time was going. We identified a few bottlenecks where we could tighten database queries and cut unnecessary operations.
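Alongside a profiler, a coarse timer around each phase of a script is often enough to show where the time goes. The following is a minimal sketch of that idea, not our actual script: `time_steps` and the three phase names are hypothetical stand-ins for the fetch, process, and cache stages, and only the core `Time::HiRes` module is assumed.

```perl
use strict;
use warnings;
use Time::HiRes qw(time);

# Run each named step in order and record its wall-clock duration in seconds.
# Steps are passed as a flat list of name => code ref pairs.
sub time_steps {
    my @steps = @_;
    my @timings;
    while (my ($name, $code) = splice(@steps, 0, 2)) {
        my $start = time();
        $code->();
        push @timings, [ $name, time() - $start ];
    }
    return @timings;
}

# Hypothetical phases standing in for the real fetch / process / cache code.
my @timings = time_steps(
    fetch   => sub { select(undef, undef, undef, 0.02) },    # pretend I/O wait
    process => sub { my $n = 0; $n += $_ for 1 .. 50_000 },  # pretend CPU work
    cache   => sub { 1 },                                    # pretend cache write
);

# Slowest step first, so the likely bottleneck is at the top of the report.
for my $t (sort { $b->[1] <=> $a->[1] } @timings) {
    printf "%-8s %.4f s\n", @$t;
}
```

Timings like these are noisy on a busy box, so it is worth running the script several times against the slow datasets before trusting the ranking.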

The second step involved rewriting parts of the script to ensure proper closure of database connections. This meant adding more error handling and making sure every connection we opened had a matching disconnect, including on the error paths. It wasn’t glamorous work, but it made all the difference.
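The "every open has a matching close" rule can be sketched as a small wrapper that owns the connection for the duration of one unit of work. This is an illustration under assumptions rather than the code we shipped: `with_connection` is a hypothetical helper, and the DSN, credentials, and query in the trailing comment are placeholders.

```perl
use strict;
use warnings;

# Obtain a handle from $connect->(), run $work->($dbh), and always
# disconnect afterwards, even if $work dies. Both arguments are code refs.
sub with_connection {
    my ($connect, $work) = @_;

    my $dbh = $connect->();

    # eval traps any exception so the cleanup below is always reached.
    my @result;
    my $ok    = eval { @result = $work->($dbh); 1 };
    my $error = $@;

    # Matching close for the open above, on success and on failure alike.
    $dbh->disconnect;

    # Re-throw the original error once the connection has been released.
    die $error unless $ok;
    return @result;
}

# Usage with DBI (placeholder DSN, credentials, and query):
#
#   use DBI;
#   my @rows = with_connection(
#       sub { DBI->connect('dbi:mysql:database=app', 'user', 'secret',
#                          { RaiseError => 1 }) },
#       sub { my ($dbh) = @_;
#             @{ $dbh->selectall_arrayref('SELECT id, name FROM items') } },
#   );
```

Keeping the connect and disconnect in one place means the individual queries never have to remember the cleanup, which is exactly the detail that slipped in the first place.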

In retrospect, this experience taught me a few valuable lessons. First, no matter how old or insignificant a technology might seem (in our case, Perl), it can still be a critical part of your system and requires thorough understanding and maintenance. Second, performance issues often stem from simple but overlooked details like connection management.

As the weekend looms, I feel both exhausted and satisfied. Exhausted because we worked through the night to get this fix in place; satisfied because not only did we solve a critical issue, but we also improved our overall system stability and reliability.

The tech world moves fast, and tomorrow’s lessons will undoubtedly be different from today’s. But for now, as I sit here with the satisfaction of a job well done, I can’t help but think about how far things have come since Firefox first appeared and where they’ll go in the future. One thing is certain: the sysadmin role continues to evolve, requiring not just technical expertise but also adaptability and persistence.

That’s my Friday reflection for August 9th, 2004. What a week it has been!