$ cat post/grep-through-the-dark-log-/-i-pivoted-the-table-wrong-/-the-wire-holds-the-past.md

grep through the dark log / I pivoted the table wrong / the wire holds the past

Title: Kubernetes & Me: A Love-Hate Relationship

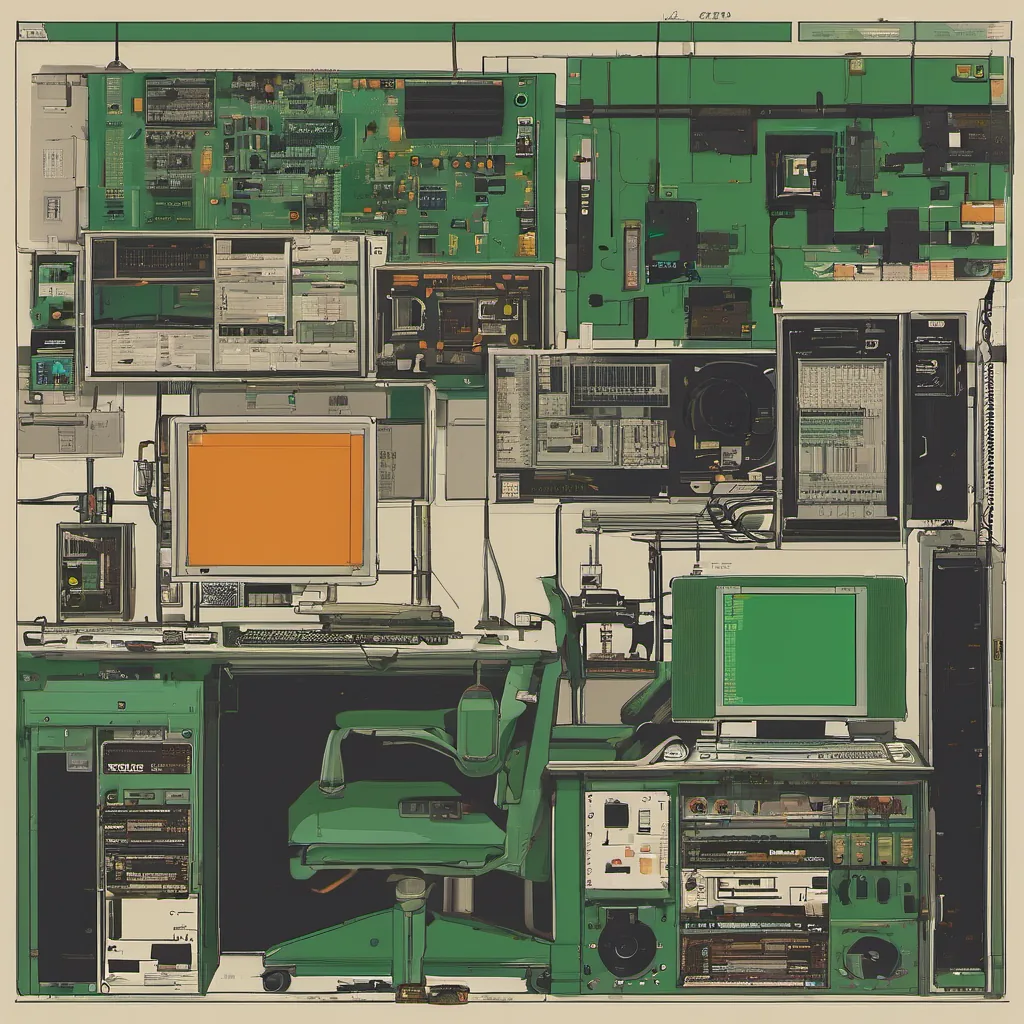

May 8, 2017, was just another day in the life of a platform engineer. I had just finished a debugging session on our production cluster and was staring at my monitor, wondering if I could finally call it a day. The title of today’s post might make it sound like a love story, but let’s be honest: it’s more of a complicated relationship.

You see, Kubernetes has been all the rage since early 2017, and by now, everyone is jumping on the bandwagon. But as someone who has to live with this beast every day, I’m not sure if we really understand what it does for us. Let me tell you about a recent experience that shed some light on its complexity.

A few days ago, we launched a new service using Kubernetes. It was supposed to be the epitome of ease and automation—deploy, forget, and let Kubernetes take care of everything. But oh boy, did it ever complicate things.

To be fair, Kubernetes is great at scaling and managing pods across nodes. I love how it abstracts away the details of container orchestration and provides a simple API to work with. But when something goes wrong, that same abstraction hides the details I need, and diagnosing the problem feels like interrogating a war room full of code monkeys.

I spent hours tracing logs from multiple nodes, running kubectl commands, and pulling my hair out trying to figure out why our application was crashing intermittently. It turned out that one of the pods was leaking memory due to an unoptimized piece of code in our application. But Kubernetes made it incredibly difficult to pinpoint which pod had the issue, because the replicas all appeared identical from the outside.
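One way to tell identical-looking replicas apart is to compare how fast each one’s memory grows over time rather than how much it uses at a single moment. What follows is a minimal sketch of that idea, an illustration rather than a record of the tooling used in this story. It assumes you have already collected per-pod memory samples from somewhere (your metrics stack, or periodic `kubectl top pod` readings); the pod names and numbers are made up, and `growth_rate` and `rank_by_growth` are hypothetical helper names.

```python
from typing import Dict, List, Tuple

# One sample is (seconds since the first reading, memory in MiB).
Sample = Tuple[float, float]


def growth_rate(samples: List[Sample]) -> float:
    """Least-squares slope of memory over time, in MiB per second."""
    n = len(samples)
    if n < 2:
        return 0.0
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return 0.0  # all readings share one timestamp; no trend to fit
    cov = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    return cov / var_t


def rank_by_growth(usage: Dict[str, List[Sample]]) -> List[Tuple[str, float]]:
    """Return (pod, slope) pairs, fastest-growing pod first."""
    rates = [(pod, growth_rate(samples)) for pod, samples in usage.items()]
    return sorted(rates, key=lambda pair: pair[1], reverse=True)


# Hypothetical readings for three replicas of the same service.
usage = {
    "web-1": [(0, 200.0), (60, 201.0), (120, 199.0), (180, 200.0)],
    "web-2": [(0, 200.0), (60, 230.0), (120, 260.0), (180, 290.0)],
    "web-3": [(0, 205.0), (60, 204.0), (120, 206.0), (180, 205.0)],
}

ranked = rank_by_growth(usage)
for pod, rate in ranked:
    print(f"{pod}: {rate * 60:+.2f} MiB/min")
```

A pod whose slope stays clearly positive across a long window is a leak suspect; the other replicas should hover near zero. A straight-line fit is a crude model, so treat the ranking as a hint about where to look first, not as proof.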

This led me down a rabbit hole of debugging tools and practices. We tried using Prometheus for monitoring and Grafana for visualization, but it still wasn’t enough. We needed something that could tell us not just what was happening in our cluster, but why it was happening. That’s when I stumbled upon Istio and Envoy.

Istio seemed like a lifesaver with its service mesh capabilities. It promised to solve our inter-service communication issues and provide better visibility into the network traffic. But integrating it with our existing setup was a nightmare. The learning curve was steep, and every time we made changes, something broke. I spent countless hours wrestling with YAML files, trying to figure out which configuration would make Istio play nicely with Kubernetes.

On top of all this, Helm had emerged as the de facto tool for packaging and deploying applications on Kubernetes. While it simplified some aspects of deployment, it introduced another layer of complexity. Every time we wanted to update our application, we had to navigate a maze of charts, templates, and values files. It felt like we were back in the days of shell scripts, with no end in sight.
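Much of the confusion with values files comes from not knowing which setting wins. As I understand it, Helm layers a chart’s default values under any overrides you supply, merging nested maps key by key and replacing everything else wholesale. The sketch below mimics that behavior in plain Python so the rule is easy to see. It is a simplified model, not Helm’s implementation: `merge_values` is a hypothetical helper, and the chart values are invented for illustration.

```python
from copy import deepcopy
from typing import Any, Dict


def merge_values(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Return base with override layered on top.

    Nested dicts are merged recursively; scalars and lists in the
    override replace the base value outright.
    """
    merged = deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Hypothetical chart defaults (what a chart's values.yaml might hold).
defaults = {
    "replicaCount": 1,
    "image": {"repository": "example/app", "tag": "1.0"},
    "args": ["--log-level", "info"],
}

# Hypothetical production overrides (a second values file).
prod = {
    "replicaCount": 3,
    "image": {"tag": "1.1"},
    "args": ["--log-level", "warn"],
}

effective = merge_values(defaults, prod)
print(effective)
```

The detail that tends to surprise people is the asymmetry: overriding `image.tag` keeps `image.repository` from the defaults, while overriding `args` discards the default list entirely.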

Meanwhile, the serverless hype is still going strong, but for us platform engineers, it’s hard to see the immediate benefits. Sure, AWS Lambda looks cool, but would our users really benefit from yet another abstraction layer? The jury is still out on that one.

As I sit here now, reflecting on the day’s events, I can’t help but feel a mix of frustration and admiration for Kubernetes. It’s like being in a relationship with someone who is both incredibly complex and endlessly fascinating. There are days when it feels like I’m constantly firefighting, dealing with unexpected issues and misconfigurations. But there are also moments of sheer joy when everything clicks into place, and the application runs smoothly.

In this era of rapid technology change, Kubernetes remains a powerful tool in our arsenal. However, we need to be mindful of its complexity and the potential pitfalls that come with it. Maybe one day, I’ll find a way to tame this beast and make it work more seamlessly for us.

For now, though, I’m just grateful for another day of debugging sessions and late-night conversations about how we can improve our setup. Here’s to Kubernetes—and all the love-hate relationships that come with it!

Until next time, Brandon