$ cat post/y2k-redux:-why-i-stayed-up-all-night.md

Y2K Redux: Why I Stayed Up All Night

January 8, 2001. It’s a date that many of us in the tech world will never forget. This was the night we all stayed up with our fingers crossed, waiting to see whether the world had made it through Y2K without any major catastrophes. For me, it was more than a historical event: it was another sleepless night of late-night debugging sessions and cross-checks.

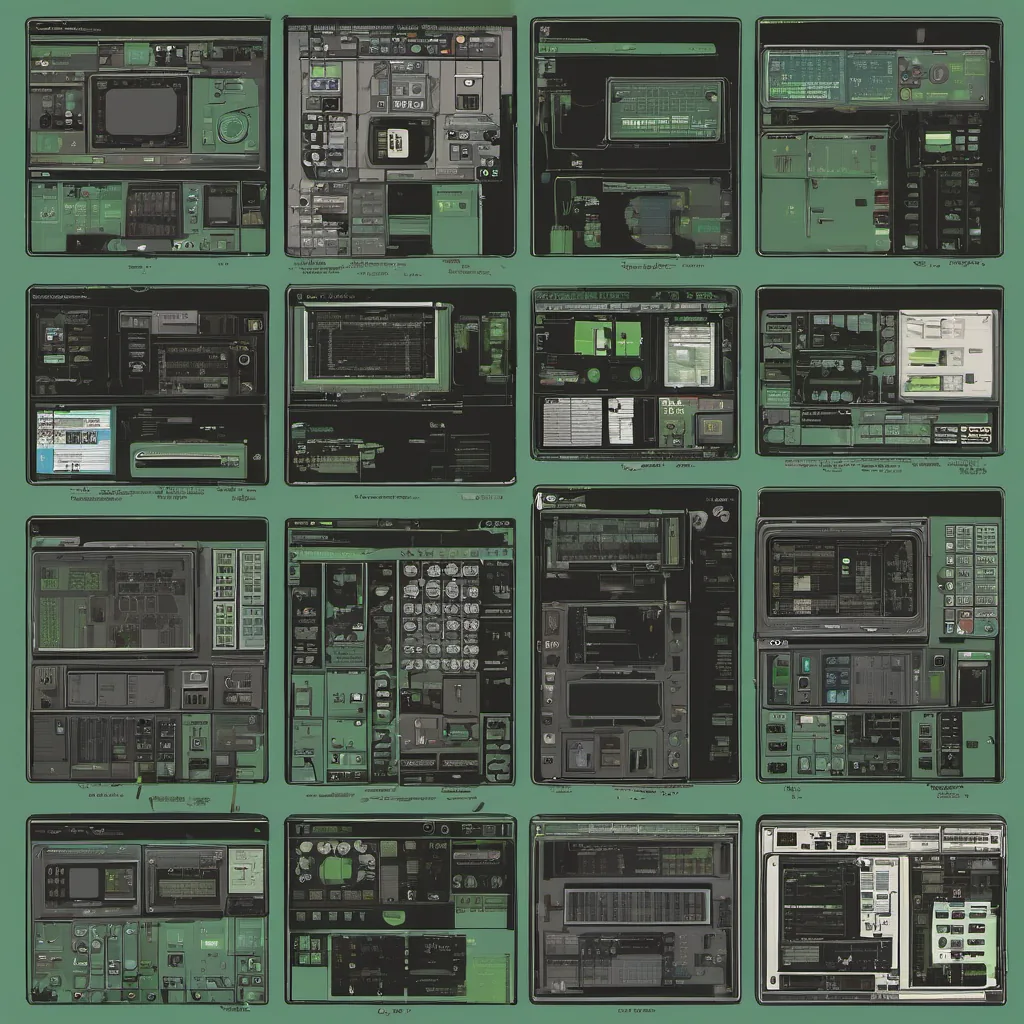

I remember the tension in the air as I sat at my desk in the data center, surrounded by monitors displaying our company’s critical systems. The office next to mine was empty; everyone had either gone home or was huddled over a laptop in a meeting room, preparing for the worst. I felt a mix of excitement and dread, knowing that something could go wrong at any second.

Y2K Redux

The year 2000 didn’t just end on December 31st. It kindled a new wave of concerns about systems built before the turn of the century. Many software applications stored years as two digits, and as we approached January 1, 2001, there was a very real possibility that these systems would interpret “01” as 1901 instead of 2001.
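To make the two-digit ambiguity concrete, here is a minimal Python sketch. It is an illustration only, not code from our systems: the function names and the pivot value of 70 are assumptions made up for the example. It contrasts naively adding 1900 with a fixed "window" that maps low two-digit years into the 2000s.

```python
def naive_expand(yy: int) -> int:
    """Legacy-style expansion: always assume the 1900s."""
    return 1900 + yy


def windowed_expand(yy: int, pivot: int = 70) -> int:
    """Expand a two-digit year with a fixed window.

    Years >= pivot land in the 1900s, years below it in the 2000s.
    The pivot of 70 is a hypothetical choice for this sketch.
    """
    if not 0 <= yy <= 99:
        raise ValueError(f"expected a two-digit year, got {yy}")
    return 1900 + yy if yy >= pivot else 2000 + yy


print(naive_expand(1))      # 1901
print(windowed_expand(1))   # 2001
print(windowed_expand(99))  # 1999
```

Windowing only moves the ambiguity to a different year, so it is a stopgap. Storing four-digit years is the durable fix.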

Our team had spent months preparing for this eventuality. We had gone through every piece of code we owned, manually checking for and fixing date-related issues. But you can only do so much with a manual audit—there were always going to be corner cases that slipped through the cracks. And tonight, as I sat in the data center, I was prepared for anything.

Debugging in Real Time

One of our key systems, which handled critical financial transactions, ran into unexpected trouble. It was supposed to absorb a spike in traffic around midnight and handle the date rollover seamlessly. But at 12:05 AM, it started to slow down significantly. I could see the metrics on my monitoring tools climbing (CPU usage, memory consumption, network latency), all pointing to an overload.

I quickly jumped into the application logs to look for any anomalies. What caught my eye was a series of error messages indicating that certain transactions were failing due to date parsing issues. It looked like some of our custom scripts weren’t handling the rollover correctly and were throwing exceptions.
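The failure mode looked roughly like the sketch below. This is a hypothetical Python reconstruction, not our actual scripts: the `parse_txn_date` name, the two formats, and the sample strings are all assumptions for illustration. A parser that accepts only four-digit years raises on a two-digit value, so a more tolerant version tries a second format before giving up.

```python
from datetime import date, datetime

# Formats tried in order. The four-digit form comes first so that
# "2001-01-01" is never misread as a two-digit year.
# (Illustrative formats, assumed for this sketch.)
FORMATS = ("%Y-%m-%d", "%y-%m-%d")


def parse_txn_date(text: str) -> date:
    """Parse a date string, falling back to a two-digit-year format."""
    for fmt in FORMATS:
        try:
            return datetime.strptime(text, fmt).date()
        except ValueError:
            continue  # this format did not match; try the next one
    raise ValueError(f"unrecognized date: {text!r}")


print(parse_txn_date("2001-01-01"))  # 2001-01-01
print(parse_txn_date("01-01-01"))    # 2001-01-01
```

Python’s `%y` follows the POSIX convention of mapping 00 through 68 to 2000 through 2068 and 69 through 99 to 1969 through 1999, which is why “01” comes out as 2001 here rather than 1901.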

Without much time to spare, I began to patch the code on the fly. I knew this wasn’t ideal—fixing critical issues in a live production environment was always risky—but we had no choice. I made the necessary changes, redeployed the application, and watched as the system gradually recovered. By 1:30 AM, everything seemed to be running smoothly again.

Lessons Learned

As I sat back in my chair, breathing a sigh of relief, I couldn’t help but think about how much we had learned from this experience. The Y2K issue wasn’t just about coding and infrastructure—it was also about preparedness and resilience. We realized that no matter how many code audits or tests you run, there will always be unknowns.

In the days following January 1st, 2001, our team spent even more time reviewing and improving our systems. We implemented better logging practices, set up automated monitoring tools, and created disaster recovery plans. These steps were not just for Y2K issues—they were foundational improvements that would help us in any future crisis.

Moving On

January 8th was a reminder of how quickly things can change. The next day, as I walked out of the data center into the bright morning light, I couldn’t shake the feeling of gratitude. We had made it through another challenge, and with the Y2K bug fixed, we were better prepared for whatever might come our way.

In the years that followed, the tech industry continued to evolve rapidly—Apache, Sendmail, and BIND remained stalwarts in system administration, while new technologies like VMware started to gain traction. But looking back at that night, I realize it was a pivotal moment that shaped my career and instilled in me a sense of urgency and preparedness for unexpected events.

So here’s to all the late nights, debugging sessions, and cross-checks we’ve endured. They may not be glamorous, but they are essential in ensuring that our systems can handle any challenge thrown their way.