
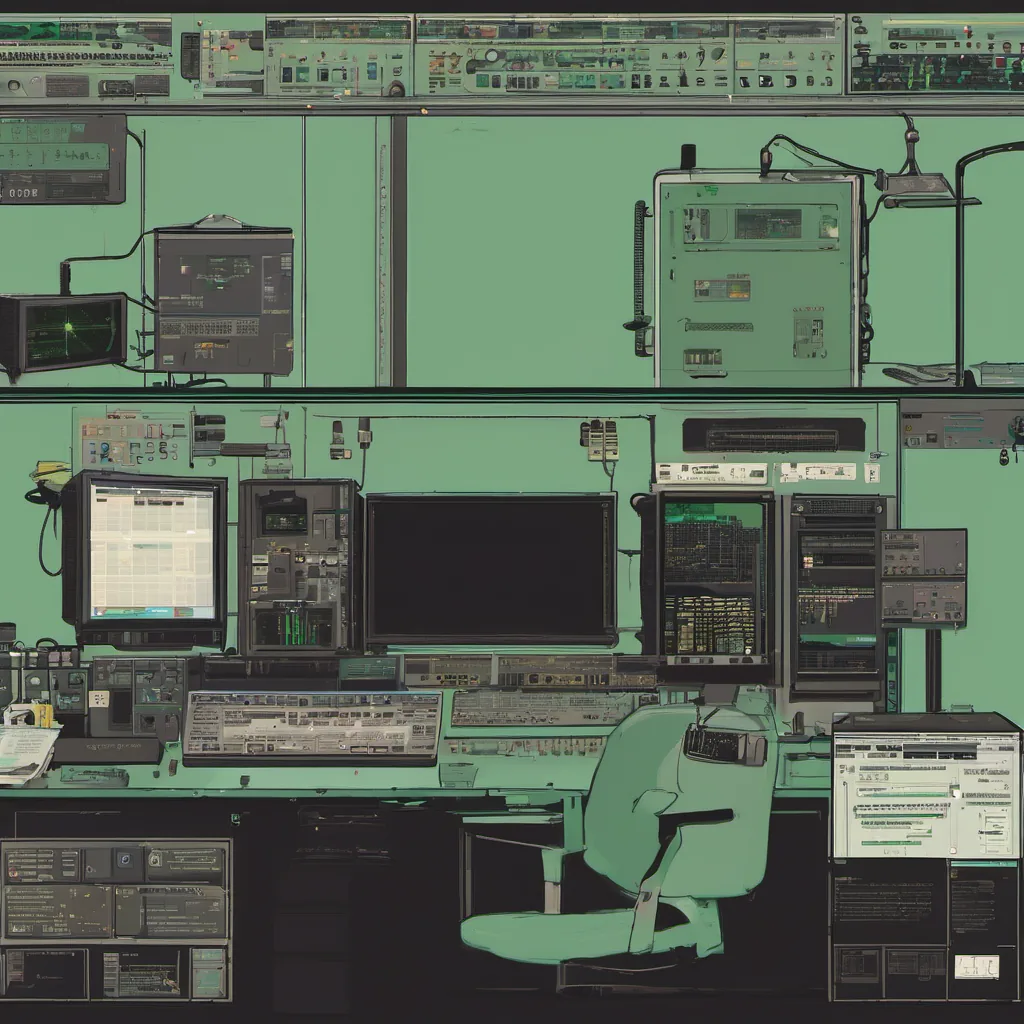

December 8, 2014 - A Day in the Life of a DevOps Engineer

December 8, 2014 was another day on the rollercoaster that is my life as a platform engineer. I woke up to the sound of my cat meowing for breakfast and then checked Twitter to see what kind of chaos had erupted overnight. The Hacker News headlines were the usual mix of tech drivel, but one story stood out: CoreOS was building Rocket, its own container runtime. That's when it sank in that containers were finally going mainstream.

Today started with a quick stand-up where we hashed out priorities for the team. Our project is a microservices architecture built on Docker and Kubernetes (still pre-1.0, and inspired by Google's internal Borg system). We've been experimenting with it for months now, but the real challenge lies in orchestrating these services at scale.

I spent most of my morning debugging a stubborn service that kept crashing on startup. I had added logging galore, yet the logs stayed nearly empty. After an hour of scratching my head and wondering if I'd accidentally forgotten a semicolon somewhere (yes, I have done that before), I decided to take a closer look at how we handle environment variables.

Turns out, our CI/CD pipeline wasn't setting one critical environment variable. Once I fixed that, the service came back up like a champ. It's moments like these that show how much comprehensive logging and proper testing matter. Sometimes it feels like I'm constantly debugging spaghetti code, but it keeps me on my toes.
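The post doesn't say which variable was missing or what language the service was written in, so the following is only a minimal Python sketch of the lesson: check required environment variables at startup and fail loudly, so a misconfigured pipeline shows up in the container logs right away. The variable names `DATABASE_URL` and `ETCD_ENDPOINT` and the helpers `missing_env` and `check_env_or_exit` are hypothetical.

```python
import os
import sys

# Hypothetical variable names; the post doesn't say which one was missing.
REQUIRED_VARS = ("DATABASE_URL", "ETCD_ENDPOINT")


def missing_env(required, environ=None):
    """Return the required variable names that are unset or empty."""
    env = os.environ if environ is None else environ
    return [name for name in required if not env.get(name)]


def check_env_or_exit(required=REQUIRED_VARS):
    """Exit with status 1 and a clear message if any required variable is missing."""
    missing = missing_env(required)
    if missing:
        # Write to stderr so the message lands in the container's log stream
        # before the process exits, instead of a vague crash later on.
        print("missing required environment variables: " + ", ".join(missing),
              file=sys.stderr)
        sys.exit(1)
```

Calling `check_env_or_exit()` as the first step of the service's entry point turns a silent crash into a one-line error naming the missing variable. Accepting `environ` as a parameter keeps `missing_env` easy to test without touching the real process environment.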

Around lunchtime, we had a team meeting to discuss how to handle our growing number of microservices. We’ve been running into some issues with state management, especially as services start talking to each other more frequently. CoreOS’s etcd seemed like the way to go for managing shared state across multiple containers. However, opinions were mixed on whether we should use etcd or stick with a traditional database solution.

I argued in favor of etcd because it's a highly available, strongly consistent key-value store built on the Raft consensus algorithm, which is crucial when many microservices need to agree on shared state. But some team members pointed out that it might add unnecessary complexity to our system. The debate raged on until someone mentioned that Kubernetes had its own service discovery mechanism based on DNS. That seemed simpler, but DNS only tells a service where its peers are; it doesn't hold shared state, so going that route would require us to rethink how we handle state transitions.
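To make the distinction from that debate concrete: with DNS-based discovery a client looks up a service by a stable name and gets back addresses, but nothing in the lookup stores or coordinates shared state. This is a minimal Python sketch assuming the `<service>.<namespace>.svc.<cluster-domain>` naming used by recent Kubernetes releases (the late-2014 DNS setup may have differed). The cluster domain is a parameter because it is configurable per cluster, and `cluster.local` is only an assumed default; `service_fqdn` and `resolve` are hypothetical helpers.

```python
import socket


def service_fqdn(service, namespace="default", cluster_domain="cluster.local"):
    """Build the DNS name a Kubernetes Service is assumed to be reachable at."""
    # Assumed scheme: <service>.<namespace>.svc.<cluster_domain>
    return f"{service}.{namespace}.svc.{cluster_domain}"


def resolve(host, port):
    """Return the sorted, de-duplicated IP addresses that `host` resolves to."""
    infos = socket.getaddrinfo(host, port, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({info[4][0] for info in infos})
```

Inside a cluster, `resolve(service_fqdn("orders"), 8080)` would return the address of a (hypothetical) `orders` Service; outside one, the lookup raises `socket.gaierror`. Either way, the answer is only a location, which is why state management remained a separate question.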

After the meeting, I spent some time prototyping a small proof-of-concept for integrating etcd with our microservices. It wasn’t glamorous work, just setting up the infrastructure and writing basic scripts to interact with etcd. But sometimes these mundane tasks are where you find the real solutions.
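The post doesn't show those scripts, so here is a minimal Python sketch of what such a prototype might do, using only the standard library against etcd's v2 HTTP keys API (the API of that era; recent etcd releases favor the v3 API and may not serve v2 at all). The endpoint `http://127.0.0.1:2379` is an assumption (older etcd releases listened for clients on port 4001), and the `/services/...` key layout and helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request

# Assumed client endpoint; change it to match your etcd cluster.
ETCD_URL = "http://127.0.0.1:2379"


def _key_url(base, key):
    # v2 keys live under /v2/keys/<path>
    return f"{base}/v2/keys/{key.lstrip('/')}"


def put_request(base, key, value, ttl=None):
    """Build a PUT that sets `key` to `value`, optionally expiring after `ttl` seconds."""
    fields = {"value": value}
    if ttl is not None:
        fields["ttl"] = str(ttl)
    # urllib sends a bytes body as application/x-www-form-urlencoded by default.
    body = urllib.parse.urlencode(fields).encode()
    return urllib.request.Request(_key_url(base, key), data=body, method="PUT")


def get_request(base, key):
    """Build a GET that reads `key`."""
    return urllib.request.Request(_key_url(base, key), method="GET")


def send(req, timeout=5.0):
    """Send a request to etcd and decode its JSON response body."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, `send(put_request(ETCD_URL, "/services/api/instance-1", "10.0.0.5:8080", ttl=30))` would register a hypothetical instance address that etcd drops after 30 seconds unless it is refreshed, and `send(get_request(ETCD_URL, "/services/api/instance-1"))["node"]["value"]` would read it back. Keeping request building separate from sending makes the logic testable without a running etcd.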

By the afternoon, I was feeling pretty good about what we had accomplished. Our service seemed stable, and the team was making progress on our state management issue. As I looked over my logs for the day, I realized that despite all the bugs and debates, there’s something incredibly rewarding about seeing a complex system come together.

As I left work, I couldn't help but think about how fast things were moving in the tech world. Just last year, we were still playing around with Docker containers as an experiment. Now they're part of our core infrastructure. And just when you think you have everything figured out, something new comes along, like Rocket or SRE practices, and shifts your perspective.

But that’s what keeps it exciting. Each day is a fresh challenge, and each problem solved feels like a small victory. On this December 8th, I felt grateful for the chaos of DevOps and the constant evolution of technology. It may be unpredictable, but it’s also full of endless possibilities.