Debugging the Dream Team: A LAMP Stack Nightmare

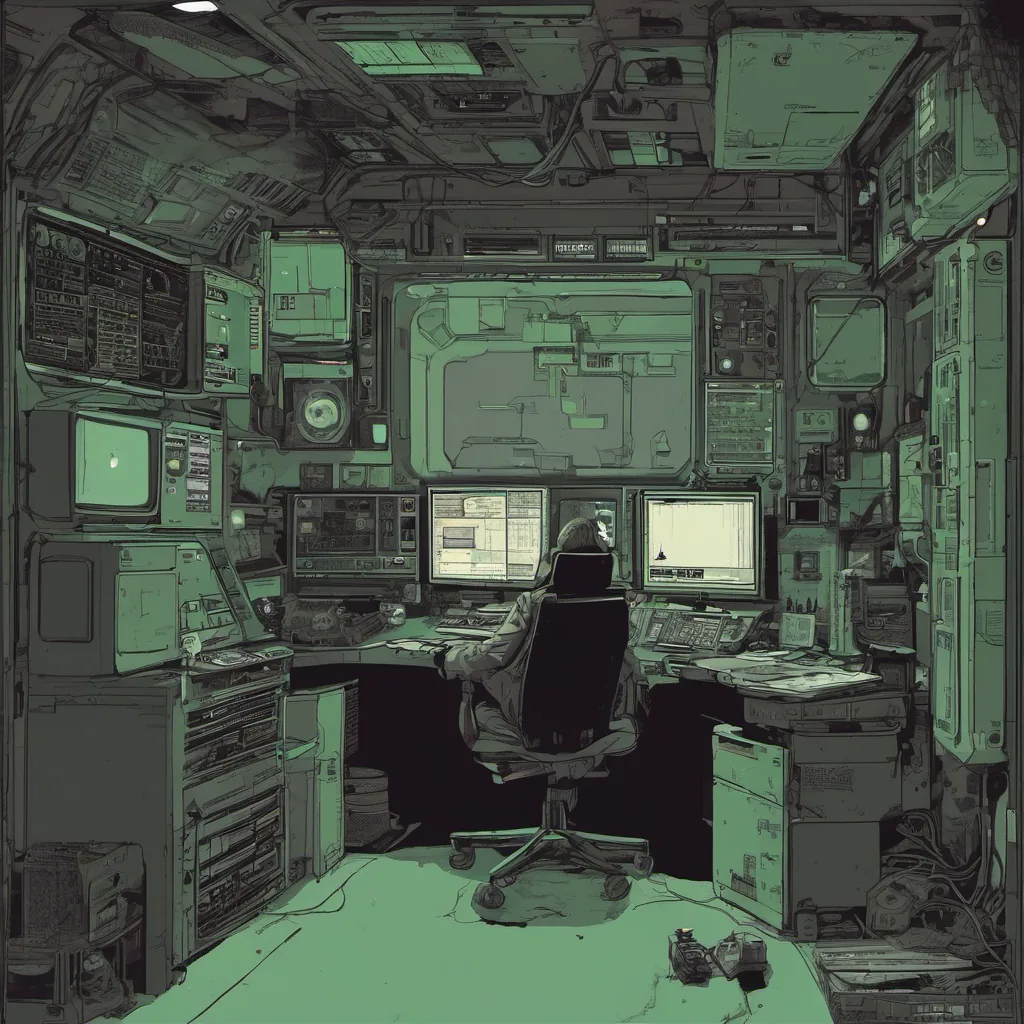

December 8, 2003. It’s a Monday morning, and I’m staring at my screen with the kind of frustration that only comes from too many late nights. I’ve been working on our web application for months now, but it just won’t behave.

This project was supposed to be a showcase: a demo of what we could achieve with open-source technologies like Linux, Apache, MySQL, and PHP (the LAMP stack). Right now, though, everything feels like a nightmare. The application had been running fine until today, when I started getting hundreds of 500 Internal Server Error responses. It’s as if the whole system is conspiring against me.

The Setup

Here’s a quick tour of our setup: Debian Linux as the server OS, Apache as our web server and reverse proxy, MySQL for the database backend, and PHP for the application logic. We’ve got Memcached installed to cache frequently accessed data and lighten the load on the database. Oh, and let’s not forget the Xen virtual machines that handle some of the more resource-intensive tasks.

The Problem

The 500 errors started appearing around midnight, which was odd because our logs showed no unusual traffic or activity at that time. I pulled up my favorite debugging tool, top, to see if anything stood out. At first glance, the overall numbers looked normal: total CPU usage was under 20%, memory usage was steady, and disk I/O wasn’t a factor.

But then I noticed something strange: the MySQL processes sat at the top of the list, consuming far more CPU than anything else. Something was hitting our database harder than it should have been. I dove into mysqladmin proc (short for processlist), which showed several queries that had been running for an abnormally long time. One in particular caught my eye: a large join that was taking over 30 seconds to execute.
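Picking the long-running statements out of the process list is mostly a filter on the Command and Time columns (Time is in seconds). Below is a minimal Python sketch, not the author’s tooling: the sample table is made up for illustration, and `parse_processlist`, `long_running`, and the 10-second threshold are hypothetical. The same rows are also available from the `SHOW PROCESSLIST` statement.

```python
# Made-up sample of `mysqladmin processlist` output, for illustration only.
SAMPLE = """\
+----+------+-----------+-----+---------+------+--------------+------------------+
| Id | User | Host      | db  | Command | Time | State        | Info             |
+----+------+-----------+-----+---------+------+--------------+------------------+
| 12 | web  | localhost | app | Query   | 34   | Sending data | SELECT ... JOIN  |
| 13 | web  | localhost | app | Sleep   | 2    |              |                  |
| 14 | root | localhost |     | Query   | 0    |              | show processlist |
+----+------+-----------+-----+---------+------+--------------+------------------+
"""


def parse_processlist(text):
    """Turn the ASCII table into a list of dicts keyed by column name."""
    header, rows = None, []
    for line in text.splitlines():
        if not line.startswith("|"):
            continue  # skip the +----+ border lines
        cells = [c.strip() for c in line.strip().strip("|").split("|")]
        if header is None:
            header = cells  # first "|" line holds the column names
        else:
            rows.append(dict(zip(header, cells)))
    return rows


def long_running(rows, threshold_s=10):
    """Keep active queries whose Time (seconds) meets the threshold."""
    return [r for r in rows
            if r["Command"] == "Query" and int(r["Time"] or 0) >= threshold_s]


if __name__ == "__main__":
    for r in long_running(parse_processlist(SAMPLE)):
        print(r["Id"], r["Time"], r["Info"])
```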

The Investigation

I started with the obvious questions: Was there a sudden increase in traffic? Had someone accidentally left something running? The server logs showed nothing unusual: no new users, no spikes in requests. It looked as if a slow query had simply hit its stride at midnight and never let up.
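Ruling out a traffic spike comes down to bucketing access-log entries by hour. A minimal sketch, assuming Apache’s Common Log Format (the default `common` log format); the sample lines and IP addresses are invented, and `hourly_stats` is a hypothetical helper that also counts 5xx responses so the error curve can be compared with overall traffic.

```python
import re
from collections import Counter
from datetime import datetime

# host ident user [timestamp] "request" status bytes  (Common Log Format)
CLF_RE = re.compile(r'^\S+ \S+ \S+ \[([^\]]+)\] "[^"]*" (\d{3}) ')


def hourly_stats(lines):
    """Return (requests per hour, 5xx responses per hour) as two Counters."""
    totals, errors = Counter(), Counter()
    for line in lines:
        m = CLF_RE.match(line)
        if not m:
            continue  # skip lines that are not in Common Log Format
        ts = datetime.strptime(m.group(1), "%d/%b/%Y:%H:%M:%S %z")
        hour = ts.strftime("%Y-%m-%d %H:00")  # bucket in the log's own time zone
        totals[hour] += 1
        if m.group(2).startswith("5"):
            errors[hour] += 1
    return totals, errors


# Invented sample lines for illustration.
SAMPLE = [
    '192.0.2.10 - - [07/Dec/2003:23:58:01 -0500] "GET /index.php HTTP/1.0" 200 5120',
    '192.0.2.11 - - [08/Dec/2003:00:02:44 -0500] "GET /index.php HTTP/1.0" 500 612',
    '192.0.2.12 - - [08/Dec/2003:00:17:09 -0500] "GET /search.php HTTP/1.0" 500 612',
]

if __name__ == "__main__":
    totals, errors = hourly_stats(SAMPLE)
    for hour in sorted(totals):
        print(hour, totals[hour], "requests,", errors[hour], "errors")
```

A flat request count alongside a jump in 5xx responses, as in this toy sample, points away from load and toward something inside the stack.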

Next, I checked the PHP scripts that were generating these queries. They seemed fine, with no obvious bugs or inefficiencies, but something still wasn’t right. I added some logging around the database calls and reran the problematic query manually. It took 45 seconds! That’s far too long for a query that a web page is waiting on.
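Logging around database calls can be as small as a wrapper that times each query and flags the slow ones. The original code was PHP; this is a Python sketch with `sqlite3` standing in for MySQL, and the `timed_query` name and the one-second `slow_after` threshold are assumptions for illustration.

```python
import logging
import sqlite3
import time

log = logging.getLogger("db.timing")


def timed_query(conn, sql, params=(), slow_after=1.0):
    """Run a query, log its duration, and raise the log level if it is slow."""
    start = time.perf_counter()
    rows = conn.execute(sql, params).fetchall()
    elapsed = time.perf_counter() - start
    level = logging.WARNING if elapsed >= slow_after else logging.DEBUG
    log.log(level, "%.3fs, %d rows: %s", elapsed, len(rows), sql)
    return rows, elapsed


if __name__ == "__main__":
    logging.basicConfig(level=logging.DEBUG)
    conn = sqlite3.connect(":memory:")  # stand-in for the MySQL connection
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
    conn.executemany("INSERT INTO orders (total) VALUES (?)", [(10.0,), (25.5,)])
    rows, elapsed = timed_query(conn, "SELECT id, total FROM orders ORDER BY id")
    print(rows)
```

MySQL can also do this on the server side with its slow query log, which records statements that take longer than `long_query_time` seconds.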

The Fix

After digging through the code, I realized that the culprit was our caching layer, Memcached. We had set it up to cache the results of certain queries but never properly invalidated those cached values when the underlying data changed. Stale data was being served again and again, and as more requests hit the system, the database had to do more work.
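The missing piece here is invalidation in a cache-aside setup: reads fill the cache on a miss, but writes never touch it. A minimal Python sketch, with dicts standing in for Memcached and MySQL and a hypothetical product price as the cached value, shows a read after an update still returning the old value:

```python
# Illustrative stand-ins: one dict plays the Memcached client, another the MySQL table.
cache = {}
prices = {"product:42": "9.99"}


def read_price(product_id):
    """Cache-aside read: try the cache first, fall back to the database on a miss."""
    key = f"product:{product_id}"
    value = cache.get(key)
    if value is None:  # miss: query the database and remember the result
        value = prices[key]
        cache[key] = value
    return value


def update_price(product_id, price):
    """The bug: writes go to the database, but the cached copy is left in place."""
    prices[f"product:{product_id}"] = price


if __name__ == "__main__":
    print(read_price(42))  # 9.99 (miss, then cached)
    update_price(42, "12.50")
    print(read_price(42))  # still 9.99: the stale cached value wins
```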

I fixed the issue by adding a check to our application logic so that a cached result was used only if the underlying data hadn’t been updated since it was cached. This reduced the load on the database significantly, and the 500 errors started to disappear almost immediately.

The Aftermath

This experience taught me a valuable lesson about the importance of thorough testing and validation, especially when dealing with complex systems like ours. We were so focused on getting things up and running that we sometimes neglected to fully understand how each component interacted with the others.

Looking back, it was a good reminder that no matter how robust your infrastructure or application is, there will always be unexpected challenges. But those challenges can also lead to significant learning opportunities if you approach them with the right mindset.

In the end, the whole ordeal felt like a rite of passage for our team—another step on the path from greenfield projects to production systems. And while it was frustrating at the time, it was ultimately rewarding to see how we could turn problems into solutions and improve our processes along the way.

That’s my tale from December 8, 2003: a day when LAMP wasn’t just a buzzword but an actual stack full of quirks and challenges waiting to be debugged.