$ cat post/tab-complete-recalled-/-the-pipeline-hung-on-step-three-/-the-log-is-silent.md

tab complete recalled / the pipeline hung on step three / the log is silent

Title: Kubernetes Complexity: A Month of Navigating Turbulence

September 7th, 2020. I wake up with the usual coffee and a laptop open to my backlog of emails and tickets from the night before. But there’s something different this morning—something more tangible than the relentless grind that’s been weighing on us over the past few months. It’s the reality that the tech world has been shifting gears, and so have we.

The day starts with a Slack thread about our internal developer portal, Backstage. We’ve been iterating on it for years now, but this time feels different. There are new features being requested by teams who want more control over their infrastructure and a single source of truth for all things related to their apps. I spend the morning hashing out some ideas with the dev portal team, trying to balance usability with flexibility.

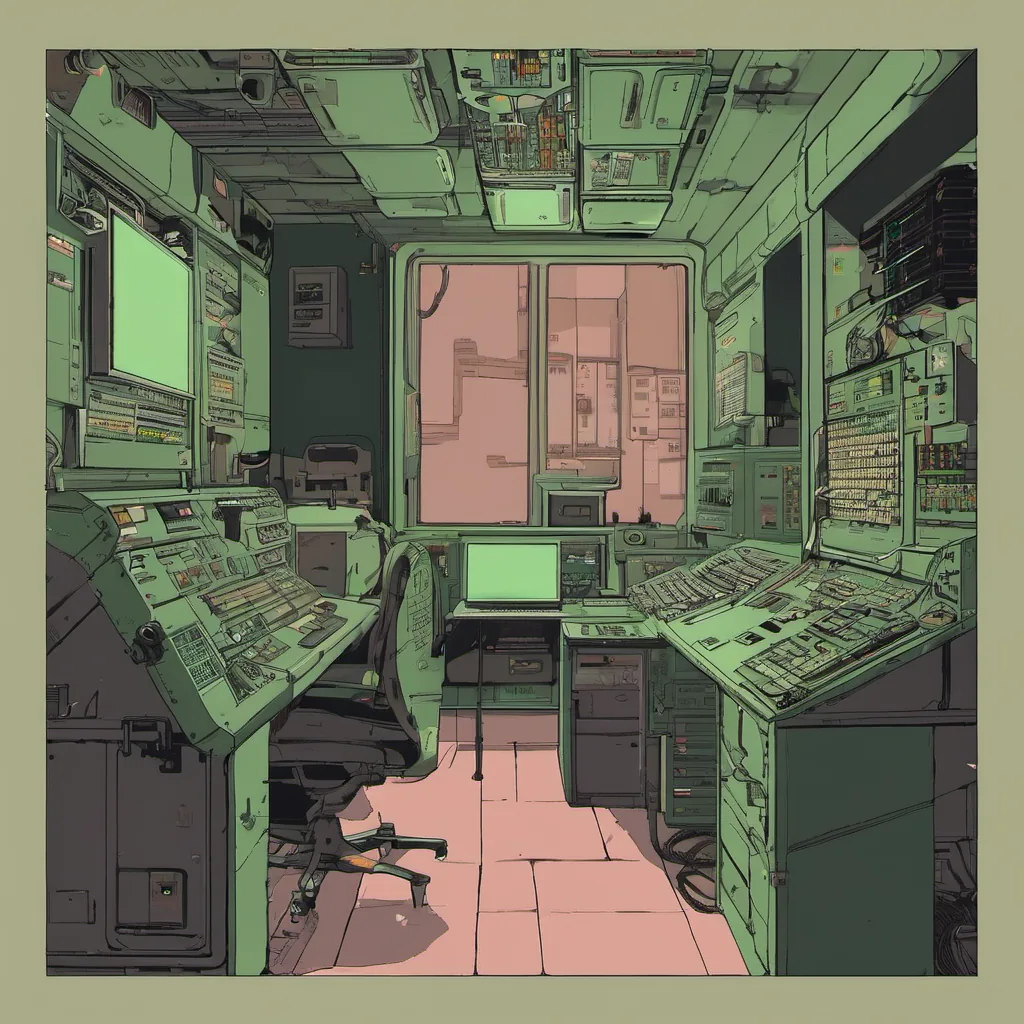

Later, as I’m diving into one of our Kubernetes clusters, I notice something off in one of the services. A pod is stuck in an error state, and it’s not clear why. My first instinct is to check the logs, but there’s a sea of cryptic errors that don’t give much insight. I decide to try eBPF-based tracing to see what the pod is actually doing underneath. This is the first time we’ve really had to dive into eBPF for troubleshooting, and it feels like a rabbit hole: lots of new commands, concepts, and tools to learn on the fly.

I spend hours digging through the trace outputs, cross-referencing with our deployment manifests and service configurations. It’s frustrating because every time I think I’m making progress, I hit another dead end. But finally, after what feels like an eternity, I find a pattern in the traces that points to a specific network issue—a misconfiguration in the ingress controller. Once it’s fixed, everything returns to normal. It’s a small victory, but it’s also a reminder of how much complexity can creep into our infrastructure.

As I sit down for lunch with my team, we discuss some recent changes in the industry. Reports that Nvidia is in talks to acquire Arm are making waves, and there’s been chatter about how this might impact cloud providers and our own architecture. We’re still digesting what it means for us in the short term, but I can’t help but feel a bit anxious about the long-term implications.

The conversation then turns to GitOps tools like ArgoCD and Flux, which have become staples of our platform engineering toolkit. There’s talk of moving more towards automated deployments and leveraging these tools to reduce manual intervention. The consensus is that while they offer great benefits, they also come with their own set of challenges—like figuring out the right blend of automation versus human oversight.

Back at my desk, I’m looking over some code changes for a new service we’re rolling out. It’s been a long month, and it’s easy to feel like every day is a struggle to keep up with the growing complexity. But there are moments like these—small victories that remind me why I love this job.

As I wrap up the day, I reflect on the month. We’ve faced challenges, sure, but we’ve also made progress. The internal developer portal continues to evolve, and our Kubernetes clusters are becoming more robust, with eBPF tracing for troubleshooting and GitOps tools for deployments. And while the world outside is in flux, at least within my little corner of tech, there’s a sense of steady progress.

I look forward to the next month, knowing that whatever comes our way, we’ll face it together—because that’s what this job is all about: navigating the turbulence, learning from each other, and building something better every day.
