Debugging the LLM Apocalypse: A Tale of Two Infrastructure Hells

October 7th, 2024. I sat down with a cup of cold coffee (it had been a long day) and opened my editor to draft what I hoped would be an honest reflection on our current tech landscape.

The AI/LLM Infodemic

Last week, ChatGPT Search was all over Hacker News, spurring a million-dollar war for search market share. Meanwhile, I’ve been knee-deep in the explosion of LLM (large language model) infrastructure at my workplace. Our platform team has been scrambling to keep up with demand and manage the complexity.

A Tale of Two Infrastructure Hells

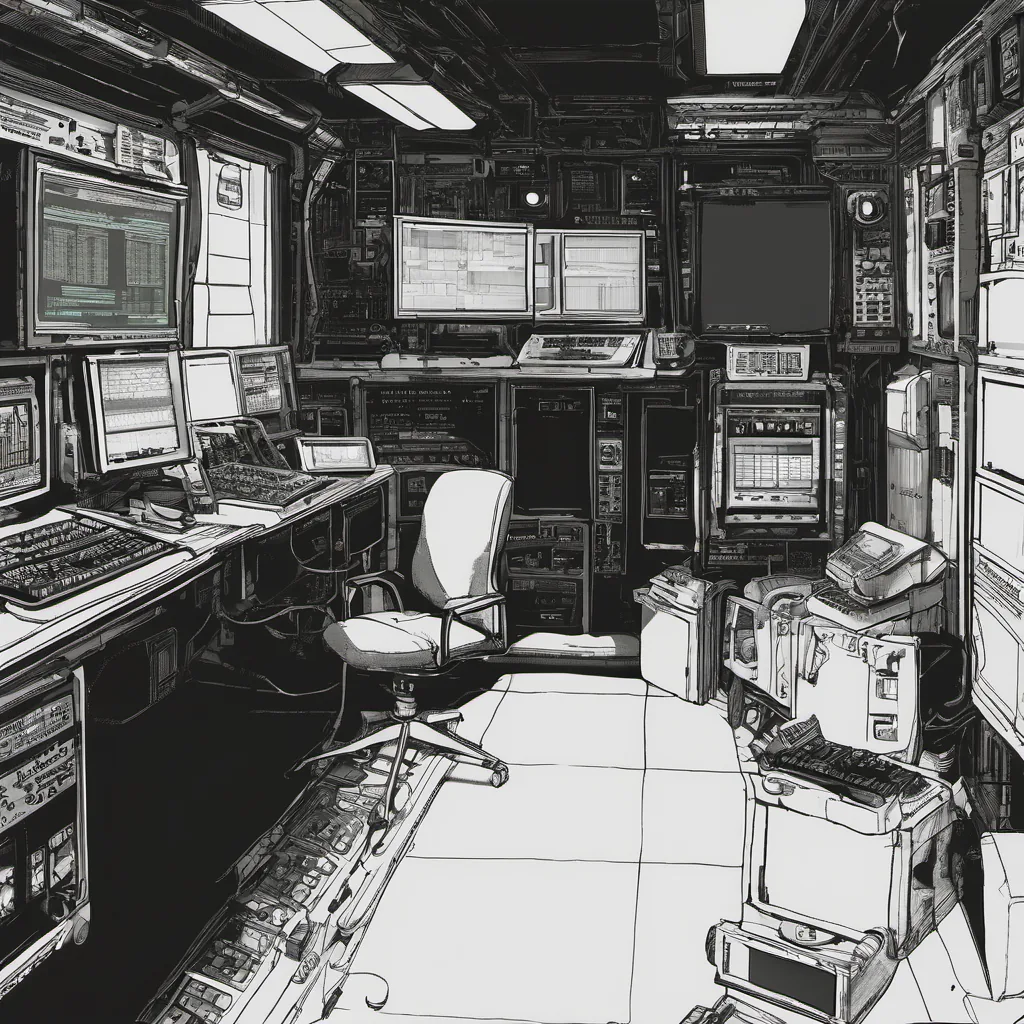

Our latest project involves building an LLM-powered chatbot for customer support. The idea was straightforward enough: make our support agents more efficient by having a bot handle common queries and escalate issues when necessary. But, boy, were we in for it.

Server-Side WebAssembly

We decided to leverage WebAssembly (Wasm) on the server side as part of our platform strategy. The plan was to use Wasm modules to serve dynamic content generated by our LLMs more efficiently. However, the devil is always in the details, and we quickly found ourselves navigating a treacherous landscape.

One day, I spent hours debugging a segmentation fault in one of our Wasm modules. It turned out that an overflow in some vector operations was causing a core dump. Fixing it involved carefully auditing the LLM’s output to ensure no illegal values were being passed into these functions—a task more akin to archeology than programming.
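
For anyone wondering what that kind of audit can look like in practice, here is a minimal Python sketch of a guard that checks model output before it reaches the vector routines. It is not our actual code: `validate_vector`, `InvalidVectorError`, `expected_len`, and the `max_abs` bound are hypothetical names and values picked for illustration, and it assumes the model output has already been parsed into a list.

```python
import math
from typing import List, Sequence


class InvalidVectorError(ValueError):
    """Raised when model output is not safe to hand to the vector routines."""


def validate_vector(
    values: Sequence[object],
    expected_len: int,
    max_abs: float = 1e6,  # hypothetical bound; pick one that fits your data
) -> List[float]:
    """Return the values as floats, or raise if anything looks illegal."""
    if len(values) != expected_len:
        raise InvalidVectorError(
            f"expected {expected_len} values, got {len(values)}"
        )

    checked: List[float] = []
    for i, v in enumerate(values):
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(v, bool) or not isinstance(v, (int, float)):
            raise InvalidVectorError(f"value at index {i} is not a number: {v!r}")
        try:
            x = float(v)
        except OverflowError:
            # Integers too large for a float end up here.
            raise InvalidVectorError(f"value at index {i} is too large") from None
        # Reject NaN and infinities before they reach any arithmetic.
        if not math.isfinite(x):
            raise InvalidVectorError(f"value at index {i} is not finite")
        if abs(x) > max_abs:
            raise InvalidVectorError(f"value at index {i} exceeds {max_abs}")
        checked.append(x)
    return checked
```

The point of failing loudly at the boundary is that a bad value gets rejected with an index and a reason, instead of surfacing hours later as a crash deep inside a module.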

FinOps and Cloud Cost Pressure

On the cost side, our cloud provider had recently announced steep rate increases for compute resources. Our FinOps team was already breathing down our necks about how we could optimize costs while maintaining performance. One of my engineering leads argued that we should move more processing to edge servers rather than running everything in the cloud, but I couldn’t help but feel a bit like Sisyphus pushing a rock up the hill.

Platform Engineering Mainstream

Platform engineering has truly come into its own. We’re now seeing clear paths for growth and development, with staff+ and engineering tracks normalized across the board. Yet, with this newfound stability comes a deluge of choices and tools to manage. From Kubernetes clusters to Istio service meshes, it’s a veritable jungle out there.

The Day I Learned Git Wasn’t Magic

A minor incident on our internal platform highlighted another painful truth: just because something “works” in development doesn’t mean it will hold up in production. During an upgrade, we lost access to one of our databases. After some frantic digging and collaboration with DevOps, we managed to restore service, but I couldn’t help feeling that if I had understood Git’s intricacies better beforehand, this might have been avoided.

The Big Picture

Looking back at the stories from Hacker News, it feels like we’re living through a renaissance of tech innovation. From space travel to AI breakthroughs, there’s so much happening that it can be overwhelming. But amidst all these advancements, I find myself thinking about the real work: debugging, arguing about best practices, and learning on the fly.

Conclusion

As I close my editor for today, I’m reflecting on the challenges of our current era. It’s a time of both incredible progress and immense complexity. The AI/LLM wave is just beginning, and with it comes endless opportunities—and headaches. But that’s what makes this job so rewarding: every day brings something new to learn.

So here’s to debugging, and to the constant evolution of tech—may we all find a way to navigate these hells while still enjoying the ride.