$ cat post/a-patch-long-applied-/-the-deploy-left-no-breadcrumbs-/-the-cron-still-fires.md

a patch long applied / the deploy left no breadcrumbs / the cron still fires

Title: Debugging AI Copilots in a Post-Hype Kubernetes World

July 7, 2025. I wake up to the familiar morning alarm, but this isn’t a usual day. We’ve moved beyond the Kubernetes hype and into an era where platform teams own the AI infrastructure pipelines, which makes our lives both easier and more complex.

Yesterday was another rollercoaster with the LLM Inevitabilism story trending on Hacker News. The discussion was heated: some argued that we’re past the peak, others that the best is yet to come. Personally, I land somewhere in between. Sure, the buzz has died down a bit, but there’s still plenty of excitement around AI copilots and production-proven eBPF tools.

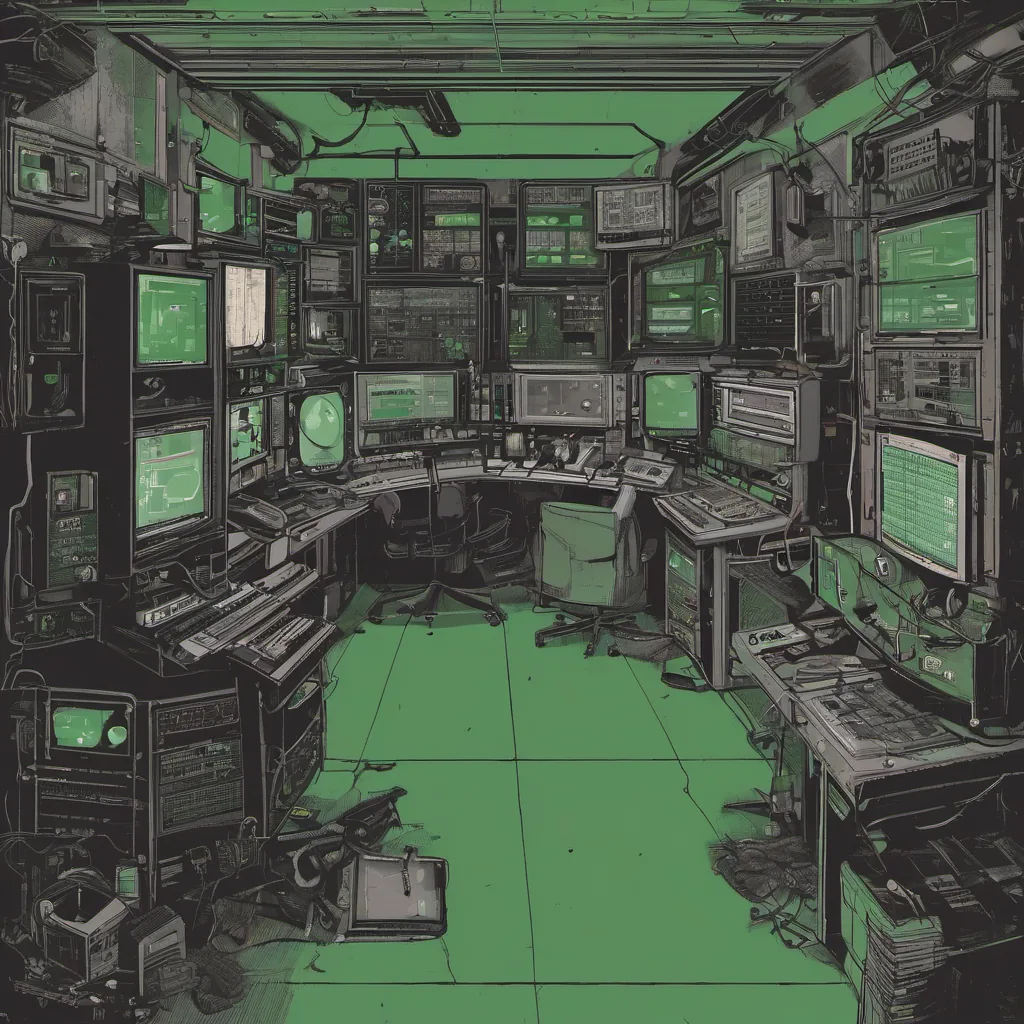

Today, as usual, I start my day with a cup of coffee and an open terminal. My team and I are debugging an issue with our new AI copilot. It’s one of those days where the AI isn’t quite living up to its promises. Specifically, we’re dealing with a misbehaving function that keeps running into edge cases it shouldn’t.

The copilot in question is built on top of LLMs and Kubernetes. We use eBPF for low-level optimizations and Wasm for embedding runtime behavior. The issue at hand is related to an unexpected state transition in one of our microservices. It’s not a simple bug—more like a multi-threaded Rubik’s Cube where each piece seems to move independently.

I step through the code, trying to understand why the copilot’s decisions deviated from what we expected. The trail points to a subtle interaction between Kubernetes service discovery and eBPF tracing. Our AI copilots are learning to adapt in real time, but sometimes they act on assumptions that no longer match the cluster they run in.

After an hour of intense debugging with my team, we finally spot the issue: a race condition introduced by the new version of Kubernetes. The LLM had been trained on older versions and didn’t account for this particular edge case. We quickly patch the code and push out a fix to production.
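
For readers who want something concrete, here is a minimal sketch of the kind of guard that helps with this class of race. It is an illustration, not the patch we shipped. It assumes each discovery update carries a monotonically increasing integer version; `EndpointCache`, `apply`, and the addresses are hypothetical names made up for this example and are not part of any Kubernetes API.

```python
import threading


class EndpointCache:
    """Hypothetical in-memory view of one service's endpoints."""

    def __init__(self):
        self._lock = threading.Lock()
        self.version = 0      # assumed: versions are integers that only grow
        self.endpoints = ()

    def apply(self, version, endpoints):
        """Apply an update only if it is newer than the one we already hold."""
        with self._lock:
            if version <= self.version:
                return False  # stale or duplicate event: ignore it
            self.version = version
            self.endpoints = tuple(endpoints)
            return True


cache = EndpointCache()
cache.apply(2, ["10.0.0.2:8080"])  # the newer event happens to arrive first
cache.apply(1, ["10.0.0.1:8080"])  # the older event arrives late and is dropped
```

The lock keeps the check and the write atomic, so two threads delivering events out of order cannot leave the cache holding the older endpoint list.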

The experience prompts some reflection. As platform engineers, we’re in a unique position: we manage the AI’s context ourselves. It’s not just about writing code anymore; it’s about understanding how different layers interact and ensuring that every component works together. The copilot is more than just an assistant. It has become an integral part of our infrastructure.

As I sit back to watch the logs stabilize, I can’t help but think about the future. Will AI copilots continue to evolve, or will they eventually fade into the background like a well-tuned Kubernetes cluster? For now, we’re in this phase where every bug is a learning opportunity, and every challenge pushes us to innovate further.

On another note, I’ve been following the news about Helsinki going a full year without a single traffic death. It’s amazing what can be achieved when technology aligns with safety goals. Meanwhile, the debate over copyparty is heating up, another reminder that technology is not just about building things; it’s also about culture and responsibility.

Tomorrow is another day, filled with more code reviews and meetings. But for now, I’ll enjoy this moment of stability and reflection on our journey so far.
