Debugging a Nightmarish FinOps Scenario with DORA Metrics

Hey team,

So there I was, in mid-May 2024, and the whole tech landscape felt like it was shifting under my feet. AI/LLM infrastructure had been growing explosively since the early ChatGPT days. Platform engineering had gone mainstream, and everyone was talking about the CNCF’s latest projects. WebAssembly on the server side? Not just hype anymore; it was becoming a reality. FinOps and cloud cost pressure were everywhere, and DORA metrics were being widely adopted.

And then there I was, trying to make sense of our own infrastructure costs in the face of all this. One day, we found ourselves in a bit of a FinOps nightmare. One of our services had been running under an old, inefficient cost model, and nobody had noticed. This service, which was supposed to be optimized and scaled for high traffic, was eating more than 50% of our monthly cloud budget!

The Debugging Journey

I’ll admit, the first reaction wasn’t exactly a team cheer session. We were using DORA metrics, but we hadn’t really drilled down into every service’s performance in detail. So, I decided it was time to take a closer look.

Step 1: Collecting Data

First things first, I dug through our cloud provider’s billing API to gather detailed usage data for each of our services. This wasn’t as straightforward as it sounds; different services had different billing structures, so we had to normalize everything to a common cost figure first. After a few frustrating hours, I finally had a dataset we could compare service by service.
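To give a feel for what "normalizing" meant here, below is a minimal sketch. The row shapes, field names, and rates are all made up for illustration; a real billing export will look different. The idea is just to turn every row into a dollar amount and sum per service.

```python
from collections import defaultdict

# Hypothetical billing rows: each kind of usage is billed in its own unit.
RAW_ROWS = [
    {"service": "checkout", "kind": "compute", "hours": 720, "rate_per_hour": 0.50},
    {"service": "checkout", "kind": "storage", "gb_months": 200, "rate_per_gb_month": 0.10},
    {"service": "search", "kind": "compute", "hours": 720, "rate_per_hour": 0.20},
    {"service": "search", "kind": "requests", "millions": 30, "rate_per_million": 0.40},
]


def row_cost(row):
    """Convert one billing row to a cost in USD, whatever its billing unit."""
    kind = row["kind"]
    if kind == "compute":
        return row["hours"] * row["rate_per_hour"]
    if kind == "storage":
        return row["gb_months"] * row["rate_per_gb_month"]
    if kind == "requests":
        return row["millions"] * row["rate_per_million"]
    raise ValueError(f"unknown billing kind: {kind}")


def monthly_cost_by_service(rows):
    """Sum normalized costs per service, rounded to cents."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["service"]] += row_cost(row)
    return {service: round(cost, 2) for service, cost in totals.items()}
```

Raising on an unknown `kind` is deliberate: a silently skipped row would make a service look cheaper than it is.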

Step 2: Setting Up Metrics

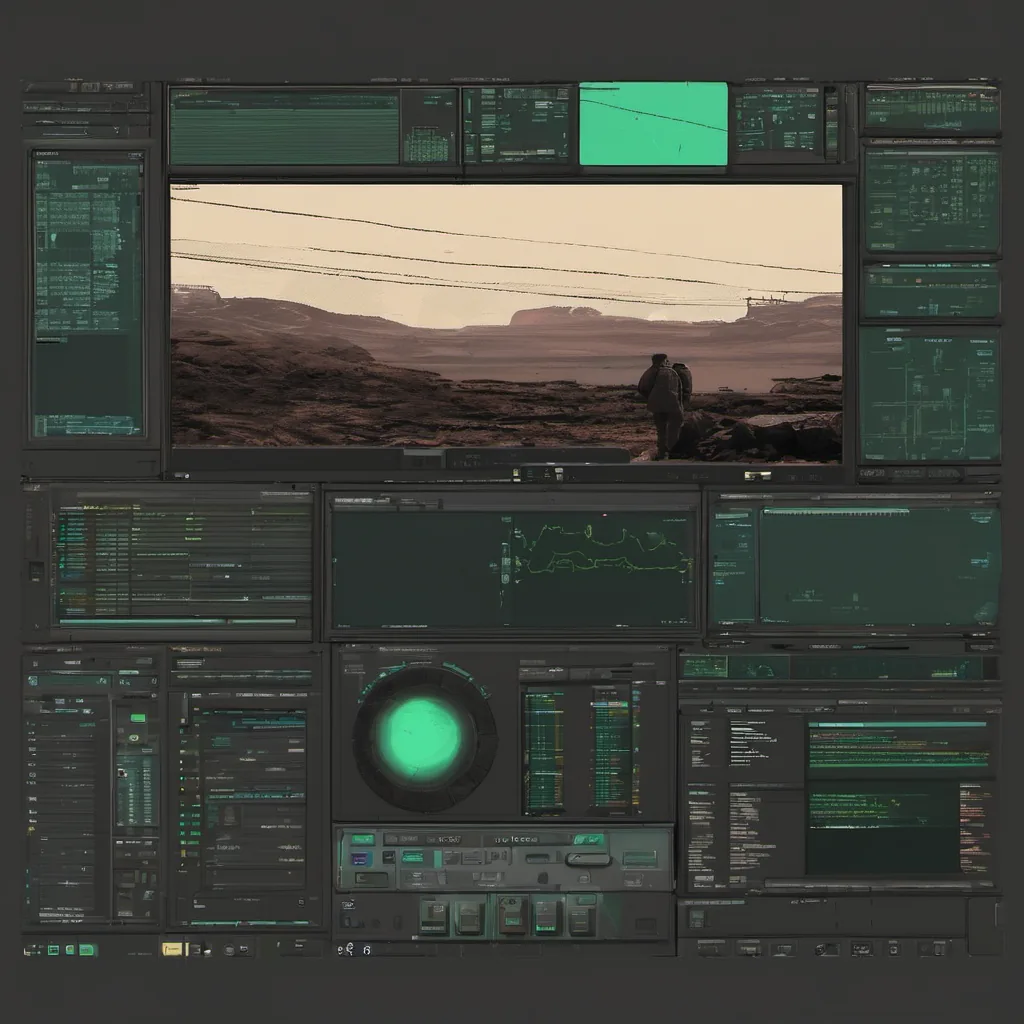

I set up Prometheus on our platform to collect and aggregate these metrics. We decided to use Grafana for visualization, which helped us get a clearer picture of where our costs were going. The initial dashboard was pretty ugly, with all the different services mixed together, but it served its purpose.
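For anyone wondering how cost numbers can end up in Prometheus at all: one option is to publish them in the Prometheus text exposition format and let Prometheus scrape that. The sketch below only renders the text; the metric name `cloud_cost_usd` and the `service` label are my own illustrative choices, not necessarily what our real setup uses.

```python
def escape_label_value(value):
    """Escape backslash, double quote, and newline, as the text format requires."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_cost_metrics(costs, metric="cloud_cost_usd"):
    """Render a {service: cost} mapping as Prometheus text exposition lines."""
    lines = [
        f"# HELP {metric} Monthly cloud cost per service in USD.",
        f"# TYPE {metric} gauge",
    ]
    for service in sorted(costs):
        label = escape_label_value(service)
        lines.append(f'{metric}{{service="{label}"}} {costs[service]}')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_cost_metrics({"checkout": 380.0, "search": 156.0}), end="")
```

With one labeled gauge per service, a Grafana panel can split or stack cost by the `service` label instead of mixing everything together.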

Step 3: Analyzing the Numbers

With the data in hand, we started looking at each service’s cost. It quickly became apparent that one particular microservice was the culprit. This service had been running on a high-performance instance type for years, and while it handled spikes well, it was costing us a fortune.
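The check that surfaces a culprit like this can be very small. Here is a rough sketch, with invented numbers and a hypothetical 50% threshold, that computes each service’s share of total spend and flags anything above the threshold.

```python
def cost_shares(costs):
    """Return each service's fraction of total spend (empty dict if total is 0)."""
    total = sum(costs.values())
    if total == 0:
        return {}
    return {service: cost / total for service, cost in costs.items()}


def over_threshold(costs, threshold=0.5):
    """List (service, share) pairs whose share exceeds threshold, largest first."""
    shares = cost_shares(costs)
    flagged = [(s, share) for s, share in shares.items() if share > threshold]
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Illustrative monthly costs in USD, not our real figures.
    monthly = {"checkout": 5500.0, "search": 2500.0, "auth": 2000.0}
    for service, share in over_threshold(monthly):
        print(f"{service}: {share:.0%} of monthly spend")
```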

Step 4: Optimization

The fix wasn’t just to switch to a cheaper instance; we needed to optimize the code itself. We started profiling the service with X-Ray and noticed some inefficient operations running during peak times. After refactoring those parts of the code, we saw a significant drop in CPU usage and, consequently, cost.
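I can’t paste the real traces here, so as a stand-in, this is what the same kind of hunt looks like locally with Python’s built-in `cProfile` (a different tool from X-Ray, used here only for illustration). The two `dedupe_*` functions are invented examples of an inefficient operation and its refactor, not our actual code.

```python
import cProfile
import io
import pstats


def dedupe_slow(items):
    """Quadratic: every membership check scans the list built so far."""
    seen = []
    for item in items:
        if item not in seen:
            seen.append(item)
    return seen


def dedupe_fast(items):
    """Linear: dict keys keep insertion order and give constant-time lookups."""
    return list(dict.fromkeys(items))


def profile(func, *args):
    """Run func under cProfile and return (result, report text)."""
    profiler = cProfile.Profile()
    result = profiler.runcall(func, *args)
    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats("cumulative").print_stats(5)
    return result, buffer.getvalue()


if __name__ == "__main__":
    data = [i % 500 for i in range(20000)]
    for func in (dedupe_slow, dedupe_fast):
        _, report = profile(func, data)
        print(report)
```

Both functions return the same result, which is the point: the refactor changes how much CPU the work takes, not what it produces.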

Step 5: Implementing DORA

We took this opportunity to bring more of the DORA metrics into our service’s monitoring pipeline. We added Lead Time for Changes, Deployment Frequency, and Change Failure Rate to see whether we could improve not just cost but also overall service reliability and speed of delivery.
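For reference, here is roughly how those three numbers fall out of a deployment log. This is a simplified sketch: the record fields (`committed_at`, `deployed_at`, `failed`) and the sample data are assumptions for illustration, and I’m using the median for lead time, which is only one of several reasonable choices.

```python
from datetime import datetime
from statistics import median

# Hypothetical deployment records for one service over an 8-day window.
DEPLOYS = [
    {"committed_at": datetime(2024, 5, 1, 9), "deployed_at": datetime(2024, 5, 1, 11), "failed": False},
    {"committed_at": datetime(2024, 5, 2, 10), "deployed_at": datetime(2024, 5, 2, 14), "failed": False},
    {"committed_at": datetime(2024, 5, 4, 8), "deployed_at": datetime(2024, 5, 4, 14), "failed": True},
    {"committed_at": datetime(2024, 5, 6, 12), "deployed_at": datetime(2024, 5, 7, 12), "failed": False},
]


def dora_summary(deploys, window_days):
    """Compute deployment frequency, median lead time, and change failure rate."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_seconds = [
        (d["deployed_at"] - d["committed_at"]).total_seconds() for d in deploys
    ]
    failures = sum(1 for d in deploys if d["failed"])
    return {
        "deployment_frequency_per_day": len(deploys) / window_days,
        "median_lead_time_hours": median(lead_seconds) / 3600,
        "change_failure_rate": failures / len(deploys),
    }


if __name__ == "__main__":
    for name, value in dora_summary(DEPLOYS, window_days=8).items():
        print(f"{name}: {value:.2f}")
```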

Lessons Learned

This experience taught me a few things:

- DORA Is More Than Just Metrics: The DORA metrics are great for improving your DevOps practices, but on their own they only provide part of the picture. We had to build them into our day-to-day operations before they made a difference.

- Cost Optimization Needs a Holistic Approach: It’s not just about switching to cheaper hardware; it’s also about optimizing code and processes.

- Regular Audits Are Crucial: After we rolled out these changes, I set up regular audits so we wouldn’t slip back into old habits.
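On the audit point, the check doesn’t have to be fancy. A sketch of the sort of month-over-month comparison I have in mind is below; the 10% tolerance and the sample figures are hypothetical, and a real audit would read both months from the billing data.

```python
def audit_cost_drift(previous, current, max_increase=0.10):
    """Flag services that are new or whose cost grew by more than max_increase."""
    findings = []
    for service, cost in sorted(current.items()):
        baseline = previous.get(service)
        if baseline is None:
            findings.append((service, "new service, no baseline"))
        elif baseline > 0:
            change = (cost - baseline) / baseline
            if change > max_increase:
                findings.append((service, f"up {change:.0%}"))
    return findings


if __name__ == "__main__":
    last_month = {"checkout": 1000.0, "search": 500.0}
    this_month = {"checkout": 1300.0, "search": 510.0, "reports": 50.0}
    for service, note in audit_cost_drift(last_month, this_month):
        print(f"{service}: {note}")
```

Flagging services with no baseline matters as much as flagging increases, since a service nobody is tracking is exactly how we got here.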

The Silver Lining

The silver lining? We saved over 40% of our monthly cloud budget! That’s a lot of money that can be reinvested in other areas. More importantly, it showed us the power of continuous improvement and the importance of staying vigilant about cost optimization.

In the end, it wasn’t just another day in the office; it was a reminder that as tech evolves, so do our challenges. But with the right tools and a bit of hard work, we can overcome them.

Stay tuned for more adventures in ops!

Cheers, Brandon