cold bare metal hum / the health check always lied / the build artifact

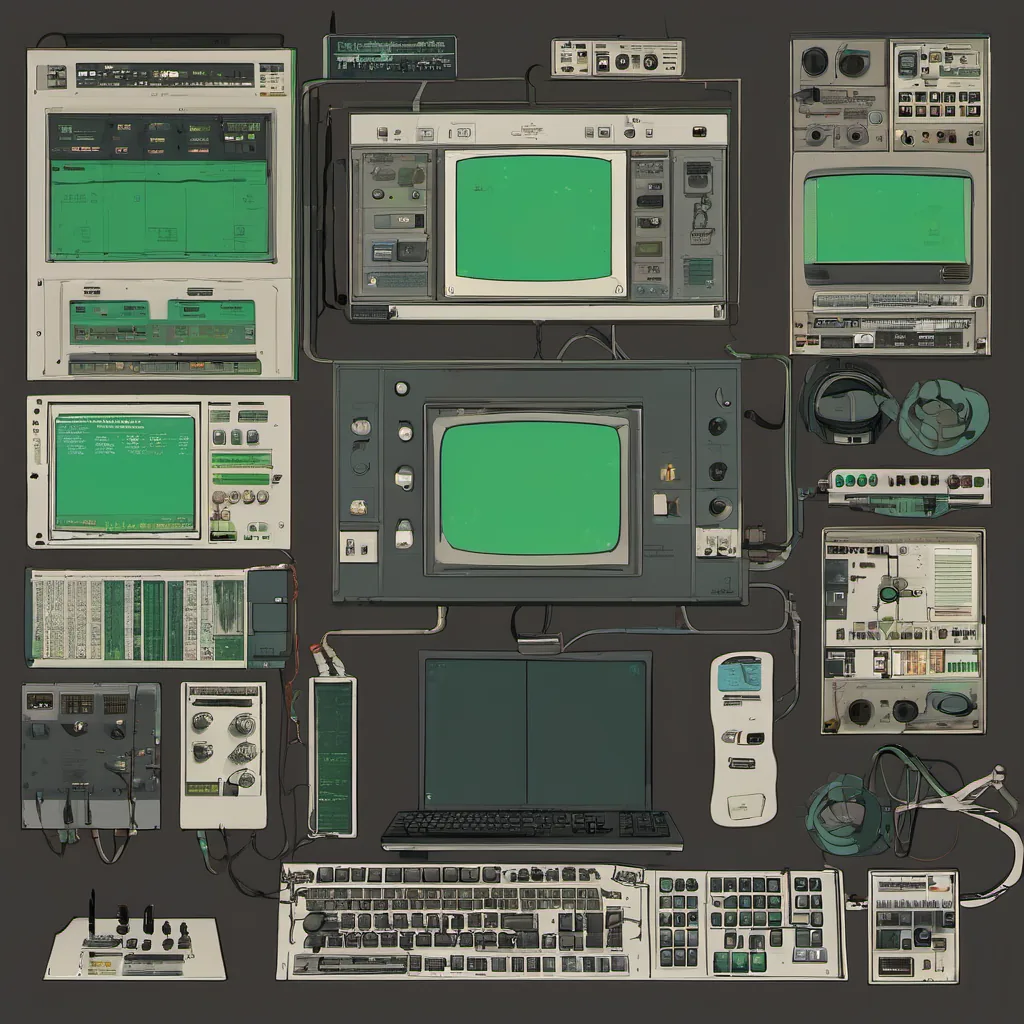

Title: Chaos Engineering on a Budget

One day in late August 2012 was just another day in the life of an engineering manager. I woke up to Twitter notifications about Neil Armstrong’s passing and a stream of tech news that seemed relevant to my work. But what ended up interesting me most was a discussion on chaos engineering.

You see, we were in the middle of our annual disaster recovery test. This time around, we were trying something different: injecting failures into our systems to see how they handled them without breaking anything else. The concept of “chaos engineering” from Netflix was still relatively new to most people outside of their team, but it was catching on fast.

Our platform was a mix of cloud and on-prem infrastructure, with services running everywhere from Heroku to AWS and OpenStack clusters. We were using Chef for configuration management, which allowed us to test our scripts in development before pushing them live. The idea was simple: simulate failures, see how the system handles them, and then fix whatever fails to recover.

The first day of testing went well. We had set up some basic failure scenarios, such as power cycling servers or simulating network partitions, to see if our services could degrade gracefully when faced with such issues. But as the tests progressed, more complex failures started to surface.

One service that handled payment processing kept crashing during a simulated network outage. The logs showed an unhandled exception in a critical code path. We debugged it and found a race condition where two threads were trying to access the same resource at the same time. After fixing this, we started seeing other services fail due to inter-service dependencies that weren’t as resilient as they should have been.
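To make the race condition concrete, here is a minimal Python sketch of the general pattern. It is not the actual payment code: the `Ledger` class, its `credit` method, and `run_concurrent_credits` are hypothetical names invented for illustration, and a plain lock is only one of several ways such a bug could be fixed.

```python
import threading


class Ledger:
    """Hypothetical shared resource; stands in for whatever the threads fought over."""

    def __init__(self):
        self._balance = 0
        self._lock = threading.Lock()

    def credit(self, amount):
        # The read-modify-write below must happen as one unit. Without the
        # lock, two threads can read the same old balance and one update is lost.
        with self._lock:
            current = self._balance
            self._balance = current + amount

    @property
    def balance(self):
        with self._lock:
            return self._balance


def run_concurrent_credits(threads=8, credits_per_thread=1000):
    """Credit the ledger from several threads and return the final balance."""
    ledger = Ledger()

    def worker():
        for _ in range(credits_per_thread):
            ledger.credit(1)

    pool = [threading.Thread(target=worker) for _ in range(threads)]
    for t in pool:
        t.start()
    for t in pool:
        t.join()
    return ledger.balance
```

With the lock in place, the final balance always equals `threads * credits_per_thread`. Removing the `with self._lock:` line in `credit` is what reintroduces the possibility of lost updates.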

It was frustrating to see how brittle our infrastructure was under stress. But on the bright side, it highlighted the importance of thorough testing and the need for better service-to-service decoupling. We used this insight to improve our architecture and ensure that if one part of the system fails, others can still operate without being impacted too severely.
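One common way to get this kind of isolation is a circuit breaker with a fallback. The post does not say which technique was actually used, so treat the following Python sketch as an assumption: `CircuitBreaker`, its parameters, and the injected `clock` are all hypothetical names chosen for illustration.

```python
import time


class CircuitBreaker:
    """Stop calling a failing dependency for a while and serve a fallback instead."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock  # injectable so the cool-down can be tested
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, fallback):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                # Circuit is open: skip the dependency entirely.
                return fallback()
            # Cool-down elapsed: allow one trial call; a single failure reopens.
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            return fallback()
        self._failures = 0
        return result
```

A caller would wrap each cross-service request, for example `breaker.call(fetch_rates, lambda: cached_rates)`, where both arguments are placeholders for whatever the real service does. The design choice is that a broken dependency costs its callers a cheap fallback rather than a pile of timeouts.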

This exercise also brought up a discussion about how much we rely on monitoring tools like New Relic or Datadog versus writing custom checks in Chef scripts. We realized that while monitoring is useful for identifying issues after they happen, proactive chaos testing could help catch problems before they become major incidents.

One of the most interesting conversations came from comparing our setup with Netflix’s more elaborate infrastructure and tooling. They have a full-on Chaos Monkey that injects random failures into their systems to test resilience. While we couldn’t afford such an advanced setup yet, the concept resonated with us—why not automate some of these tests using Chef recipes?

By the end of the week, we had implemented a basic chaos testing framework in our CI pipeline. It would randomly select services and inject simulated failures during nightly builds. The results were promising; it forced us to think critically about how each component interacts with others.
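The core loop of such a framework can be sketched in a few lines. The real version was built around Chef and a CI pipeline, so this Python is only an illustration under assumptions: `pick_targets`, `run_chaos_round`, and the `inject` and `check` callbacks are hypothetical names, and the actual failure injection is left to the caller.

```python
import random


def pick_targets(services, count, seed=None):
    """Randomly choose up to `count` services; a seed makes the run repeatable."""
    rng = random.Random(seed)
    services = list(services)
    return rng.sample(services, min(count, len(services)))


def run_chaos_round(services, inject, check, count=1, seed=None):
    """Inject a simulated failure into each chosen service and record the outcome.

    `inject(name)` must start the failure and return a callable that undoes it.
    `check()` must return True if the rest of the system still looks healthy.
    """
    results = {}
    for name in pick_targets(services, count, seed):
        restore = inject(name)
        try:
            results[name] = bool(check())
        finally:
            # Always undo the failure, even if the check itself blows up.
            restore()
    return results
```

A nightly job could call `run_chaos_round` with the list of deployed services and fail the build if any value in the returned dictionary is `False`. Logging the seed with each run makes a failing combination reproducible the next morning.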

Reflecting on this experience, I realized that while Netflix may have been leading the charge in chaos engineering, we could still apply these principles in a more practical way within our constraints. It wasn’t about spending millions on advanced infrastructure or tools—more about being mindful of potential failure points and testing them proactively.

As for that day’s tech news, the world seemed to be buzzing about Amazon Glacier and other cloud storage services. Meanwhile, we were busy making sure that if something did go wrong in our systems, we had a plan to handle it without panicking. That’s what mattered to us at the end of the day.