Debugging a 24/7 Beast: A Y2K+1 Year Later

August 6th, 2001. The world was still reeling from the dot-com bust, but Linux on the desktop was gaining traction in corporate IT departments, and the Apache web server was already six years old. IPv6 was starting to make its way into discussions, and I was knee-deep in all of it at work.

I remember the Y2K scare vividly; it felt like every engineer’s nightmare. But as we moved past 2000, a new problem loomed: keeping our systems running with minimal fuss. At the small software company where I worked, one of our main servers had just gone down after months of smooth operation.

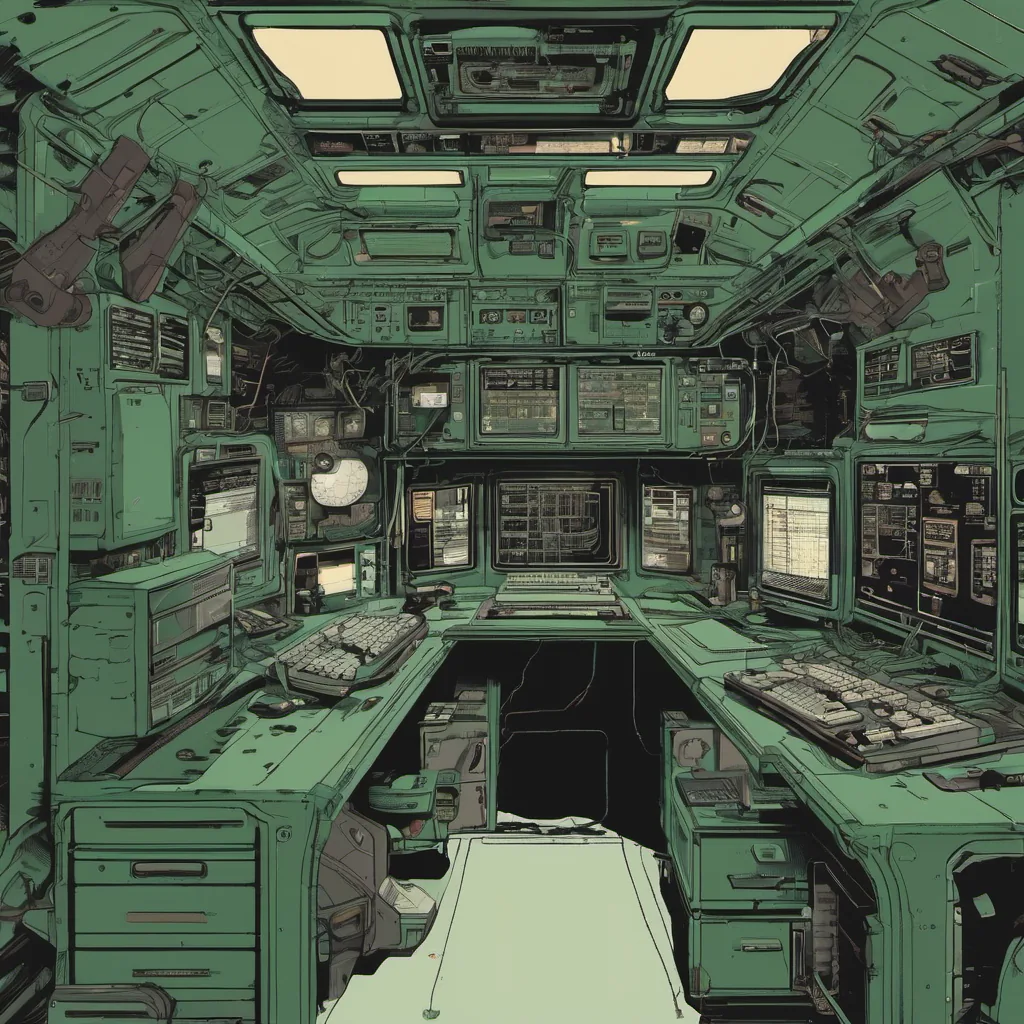

The server was an old Dell box running Red Hat Linux 6.2 and BIND for DNS, with Apache handling most of the web traffic. I found myself in front of it on this sweltering summer day, staring at a screen that displayed nothing but “Server Not Found” messages.

The Setup

Our setup had been rock-solid until now. We had a load balancer directing traffic to multiple Apache instances behind the scenes, and BIND was caching our DNS records locally. But something had gone wrong. Apache wasn’t starting up properly, and when it did, it would crash after about 10 minutes.

I began by checking the logs, but nothing jumped out at me. The system logs showed no errors, and the Apache error log was equally silent. I decided to take a deeper dive into the Apache configuration files, suspecting that some misconfiguration could be causing the issue.

The Journey

After reviewing the config file line by line, I stumbled upon something peculiar: an old IP address for one of our internal servers still lingering in the virtual host directive. It looked like this:

    <VirtualHost 192.168.0.5>
    ServerName example.com
    </VirtualHost>

This IP address was no longer valid, and it seemed to be what made Apache choke and crash. I changed it to a valid address and saved the file.
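
A check like this can be automated. The following is a minimal sketch in Python, not something from the original setup: it pulls the addresses out of every `<VirtualHost ...>` opening tag and reports those missing from a set of addresses known to be in use. The function names and the address `192.168.0.10` are made up for illustration, and the port handling assumes IPv4 addresses or hostnames only.

```python
import re

# Matches an opening <VirtualHost ...> tag and captures everything between
# the directive name and the closing angle bracket.
VHOST_RE = re.compile(r"<VirtualHost\s+([^>]+)>", re.IGNORECASE)

# Wildcard forms that do not name a concrete address.
WILDCARDS = {"*", "_default_"}


def vhost_addresses(config_text):
    """Return every address listed in a <VirtualHost ...> opening tag."""
    found = []
    for line in config_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("#"):  # skip commented-out directives
            continue
        match = VHOST_RE.match(stripped)
        if match:
            # A single tag may list several space-separated addresses.
            found.extend(match.group(1).split())
    return found


def host_part(address):
    """Drop an optional :port suffix (IPv4 addresses and hostnames only)."""
    if address.count(":") == 1:
        return address.split(":", 1)[0]
    return address


def stale_vhosts(config_text, valid_addresses):
    """List VirtualHost addresses that are not in valid_addresses."""
    return [
        addr
        for addr in vhost_addresses(config_text)
        if host_part(addr) not in WILDCARDS
        and host_part(addr) not in valid_addresses
    ]


if __name__ == "__main__":
    sample = """
    <VirtualHost 192.168.0.5>
    ServerName example.com
    </VirtualHost>
    """
    # 192.168.0.10 is a hypothetical "current" address, used for illustration.
    print(stale_vhosts(sample, {"192.168.0.10"}))  # prints ['192.168.0.5']
```

Running something like this against the config before a restart would have flagged the leftover address without a line-by-line read.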

The Aftermath

After making the change, I restarted Apache and crossed my fingers. To my relief, the server came back online without any issues. I checked the logs again; they now showed the errors that had been quietly accumulating for days. It turned out that Apache had also been struggling with some old files on a different drive.

I took this as an opportunity to clean up our file system and ensure everything was properly configured. I also set up more robust monitoring of the server’s health to catch such issues earlier in the future.
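
As a rough idea of what such monitoring can look like, here is a minimal sketch in Python that checks whether a service still accepts TCP connections. It is an illustration under assumptions, not the monitoring described above: the function names, the host, and the choice of ports 80 (Apache) and 53 (BIND) are placeholders.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def failing_services(host, services, timeout=3.0):
    """Return the names of services whose port does not accept connections.

    services maps a label to a TCP port, for example {"apache": 80}.
    """
    return [
        name
        for name, port in services.items()
        if not port_is_open(host, port, timeout)
    ]


if __name__ == "__main__":
    # Hypothetical host and ports; adjust to the machine being watched.
    down = failing_services("127.0.0.1", {"apache": 80, "bind": 53})
    if down:
        print("not responding:", ", ".join(down))
    else:
        print("all services responding")
```

Run from cron every few minutes, a check like this only proves that the port answers, not that pages render correctly, but it would have caught a process that dies ten minutes after starting.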

Reflections

This experience taught me a few valuable lessons:

- Attention to Detail: Even small errors can cause big problems if they go unnoticed.

- Consistency in Configuration Management: Keeping configuration files in sync and up-to-date is crucial.

- Proactive Monitoring: Early detection of issues can save a lot of time and headaches.

As I closed the terminal window, I couldn’t help but think about how far we had come since Y2K. We had gone from worrying about dates and deadlines to dealing with more mundane yet equally critical tasks, like managing server configurations and keeping systems running smoothly.

But that’s what keeps things interesting: figuring out why something doesn’t work when it should is a challenge I’m always ready for. Here’s to another year of debugging!
