$ cat post/a-merge-conflict-stays-/-the-binary-was-statically-linked-/-the-wire-holds-the-past.md

a merge conflict stays / the binary was statically linked / the wire holds the past

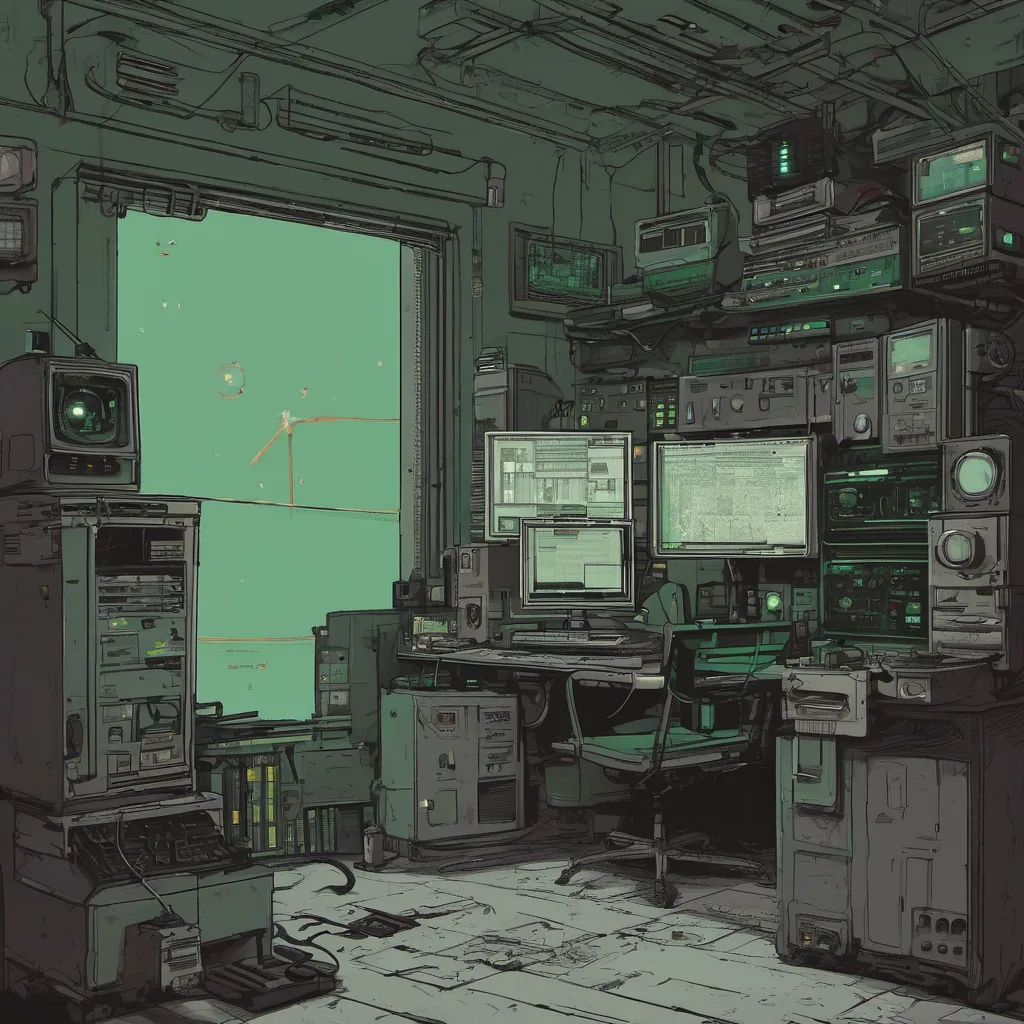

Title: Kubernetes and the Chaos of Deployment

September 5, 2016. The day started like any other Monday, with a cup of coffee in hand as I pondered the endless to-do list waiting on my desk. But this week felt different; there was an undercurrent of excitement about container orchestration that I couldn’t shake.

Last month, Kubernetes emerged victorious from the battle for container management. We’d been kicking the tires on it for a while, but today was going to be the day we committed fully. The switch to Kubernetes meant relearning how we manage our deployments and services. Our platform engineers were already buzzing about new tools like Helm and Istio, and I couldn’t wait to see what Envoy had in store.

Our morning standup kicked off with everyone eager to share their latest discoveries. “I found out that Helm can handle templating,” one engineer chimed in excitedly. “Cool stuff,” I thought, but the real chatter was about how Terraform and Kubernetes were going to integrate seamlessly. The promise of GitOps was tantalizing: managing infrastructure as code seemed like a dream come true.

But dreams sometimes turn into nightmares. During our morning review, one of my team members, Alex, brought up an issue that had been simmering under the surface for weeks: our internal monitoring tools weren’t ready for this transition. Prometheus and Grafana were supposed to replace Nagios, but we hadn’t fully fleshed out all the alerting rules yet.

“Alright,” I said, “let’s get on it today. We need to make sure we can see what’s happening with Kubernetes before the migration.”

The day was a blur of coding and debugging. Alex, our resident Prometheus ninja, worked tirelessly to integrate Grafana dashboards while I wrestled with Helm templates. By mid-afternoon, we had made some progress, but every step seemed to expose another hidden issue.

“Hey Brandon,” said Rachel, my other platform engineer, as she walked by. “Got a sec?”

“Sure thing.”

She handed me her laptop and explained that she was trying to deploy our microservices using Helm. She pointed out that the YAML files were getting more complex with each new dependency we added. “Maybe we should consider some of the newer tooling options, like Kind or Minikube, for local development,” she suggested.

I nodded, considering her point. Local development environments could make a big difference in our workflow. We might save ourselves from the chaos later on.

The day ended with a few compromises and some unresolved questions. Alex had made progress but needed to tweak a couple of alert rules. Rachel’s suggestion about local development tools seemed promising, though we’d need to evaluate them further.

As I sat at my desk, reflecting on the day, I couldn’t help but feel a mix of excitement and trepidation. Kubernetes was going to change how we do things, and while the journey might be bumpy, it was certainly worth the effort. Challenges lay ahead, but with the right tools and teamwork, we could navigate this new landscape.

That’s where I left off for the day. The road ahead looked like a long, winding path, but I was determined to guide us through it. After all, isn’t that what platform engineering is all about—making sure our systems are robust enough to handle any challenge that comes our way?