$ cat post/cold-bare-metal-hum-/-the-repo-holds-my-old-mistakes-/-the-pod-restarted.md

cold bare metal hum / the repo holds my old mistakes / the pod restarted

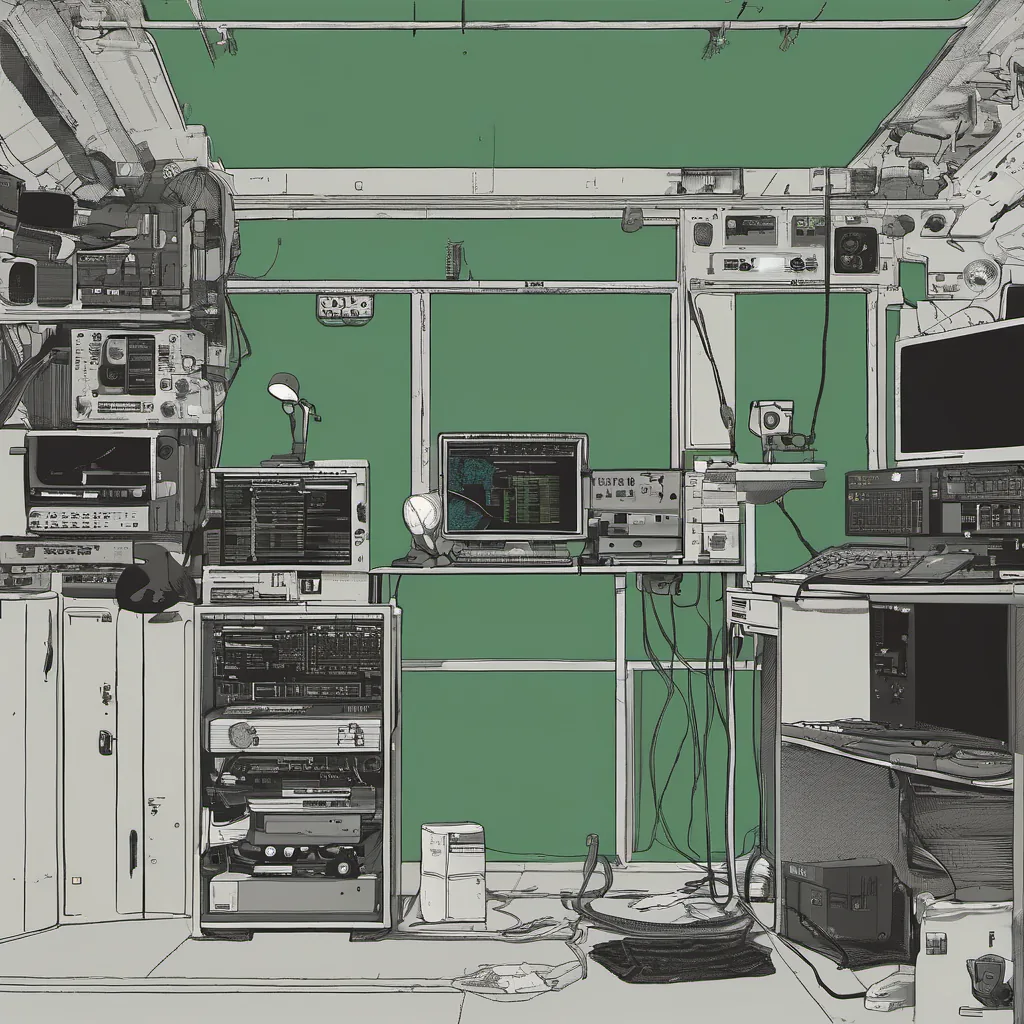

Debugging the Apache Log Files: A Tale of Y2K and Post-Bust Realities

February 5, 2001, was a day like any other in my life at the time. I was just another engineer trying to keep our little e-commerce site running smoothly, but trouble was lurking on the horizon. The dot-com bubble had burst, but Linux and open source were making their way onto more than just developers’ machines: our production servers ran Linux, with Apache as our go-to web server. Amid all this, we were still wrestling with the aftereffects of Y2K.

The Setup

We had a cluster of Red Hat Linux 6.1 boxes serving static content and some dynamic pages for our growing online retail store. Our tech stack was simple but effective: Apache in front of Perl scripts that queried an Oracle database. It worked well enough until the bugs started creeping in, and one day I found myself buried under a mountain of logs.

The Problem

It all started with a sudden spike in server load. Normally we’d see 10 to 20 requests per second during peak hours, but now we were getting over 100 per second, and CPU utilization had gone through the roof. My first thought was “Doh! That’s too much traffic for our little cluster.” But when I checked the access logs, I saw that most of these requests came from bots, particularly ones using wget to spider the site.

The Debugging Journey

I dove into `tail -f /var/log/httpd/access_log` to find out more. A pattern emerged: many requests were arriving from the same addresses with very short intervals between them. Some of these bots were firing off requests back to back, hammering our servers without any real purpose.
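To make that pattern concrete, here is a minimal Python sketch of the kind of log triage described above. It is not the author’s script (the original digging was done by eye with `tail -f`), and it assumes the servers used Apache’s “combined” log format, which records the User-Agent. The names `summarize` and `parse_ts` and the regex are illustrative. For each client IP it counts requests, collects the user agents seen, and reports the smallest gap between consecutive requests, which is where wget-style spidering stands out.

```python
import re
from collections import defaultdict
from datetime import datetime

# ASSUMPTION: Apache "combined" LogFormat: client IP, identd, user, [timestamp],
# "request line", status, bytes, "referer", "user agent".
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] "(?P<req>[^"]*)" '
    r'(?P<status>\d{3}) (?P<bytes>\S+) "(?P<ref>[^"]*)" "(?P<ua>[^"]*)"'
)


def parse_ts(ts):
    """Parse an Apache timestamp such as '05/Feb/2001:14:03:22 -0800'."""
    return datetime.strptime(ts, "%d/%b/%Y:%H:%M:%S %z")


def summarize(lines):
    """Per client IP: request count, user agents seen, and the smallest gap
    in seconds between consecutive requests (None if only one request)."""
    times = defaultdict(list)
    agents = defaultdict(set)
    for line in lines:
        m = COMBINED.match(line)
        if not m:
            continue  # skip lines that don't fit the assumed format
        times[m["ip"]].append(parse_ts(m["ts"]))
        agents[m["ip"]].add(m["ua"])

    report = {}
    for ip, stamps in times.items():
        stamps.sort()
        gaps = [(b - a).total_seconds() for a, b in zip(stamps, stamps[1:])]
        report[ip] = {
            "requests": len(stamps),
            "agents": sorted(agents[ip]),
            "min_gap": min(gaps) if gaps else None,
        }
    return report
```

For a one-off check you could call `summarize(open("/var/log/httpd/access_log"))` and sort the result by request count. Because Apache logs timestamps to the whole second, a `min_gap` of `0.0` means two or more requests from that address landed in the same second.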

The Fix

After a few hours of debugging and testing, I realized we needed rate limiting on the server side. I wrote a simple Perl script to limit the number of requests each IP address could make within a given time window. It was a quick fix that worked wonders: CPU usage dropped, and we were back in business.
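The post doesn’t show the Perl script, so here is a hedged Python sketch of one common way to do per-IP limiting: a sliding window that remembers recent request times for each address. The class name `SlidingWindowLimiter` and its defaults (`limit=10` requests per `window=1.0` seconds) are hypothetical placeholders, and how the original check hooked into Apache isn’t covered here.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests per client IP in any `window`-second span.

    HYPOTHETICAL: name and default values are placeholders, not the
    settings from the original Perl script.
    """

    def __init__(self, limit=10, window=1.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so tests can control time
        self.hits = defaultdict(deque)  # ip -> times of recently allowed requests

    def allow(self, ip):
        now = self.clock()
        q = self.hits[ip]
        # Drop timestamps that have slid out of the window.
        while q and now - q[0] >= self.window:
            q.popleft()
        if len(q) >= self.limit:
            return False  # over the limit; the caller decides how to reject
        q.append(now)
        return True
```

Only allowed requests are recorded, so a client that keeps hammering regains access as soon as its window clears; recording rejected hits as well would punish persistent offenders harder. The `hits` dictionary also grows with every new address, so a long-running version would need to evict idle entries.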

The Aftermath

This experience taught me a lot about reading traffic patterns and managing server resources. It wasn’t just about writing code; it was about understanding how that code behaved in production. We also set up better monitoring so we would catch spikes like this one earlier.
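As one example of the kind of check better monitoring might include, this Python sketch (an assumption, not the tooling the post refers to) buckets access-log lines by timestamp and flags any second whose request count crosses a threshold. The names `requests_per_second` and `spikes` are hypothetical, and the default `threshold=50` is an arbitrary point between the normal 10 to 20 requests per second and the 100+ seen during the incident.

```python
import re
from collections import Counter

# The bracketed timestamp field in Apache's common/combined log formats.
TS = re.compile(r"\[([^\]]+)\]")


def requests_per_second(lines):
    """Count log lines per timestamp string. Apache logs whole seconds,
    so each distinct timestamp is a one-second bucket."""
    counts = Counter()
    for line in lines:
        m = TS.search(line)
        if m:
            counts[m.group(1)] += 1
    return counts


def spikes(counts, threshold=50):
    """Seconds whose request count exceeds `threshold` (HYPOTHETICAL default),
    in the order they first appeared in the log."""
    return [(ts, n) for ts, n in counts.items() if n > threshold]
```

Run periodically (for example from cron) over recent log lines, a non-empty result from `spikes` would be the cue to look at the access log before the CPU pegs.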

A Side Note: Y2K Aftermath

Looking back, I couldn’t help but think about how much we had dodged during the Y2K scare. It was a tense time, with everyone expecting the worst. In many ways, it felt like an exaggerated version of the mood we were in by 2001: overly cautious and slightly paranoid. But hey, at least the computers didn’t all shut down when the clock rolled over to 2000!

Lessons Learned

Debugging these logs taught me to look at what the traffic actually is before blaming capacity: the spike looked like too much load for our hardware, but the access log showed it was mostly bots. Fixing the immediate issue mattered, but so did understanding the broader implications of our technology choices. I’ll keep these lessons in mind as I tackle new challenges.

That’s my day in tech on February 5, 2001. A simple fix to a complex problem, but one that highlighted the real-world impact of our work.