$ cat post/yaml-indent-wrong-/-the-config-file-knows-the-past-/-it-ran-in-the-dark.md

yaml indent wrong / the config file knows the past / it ran in the dark

Debugging a Production Issue with Xen

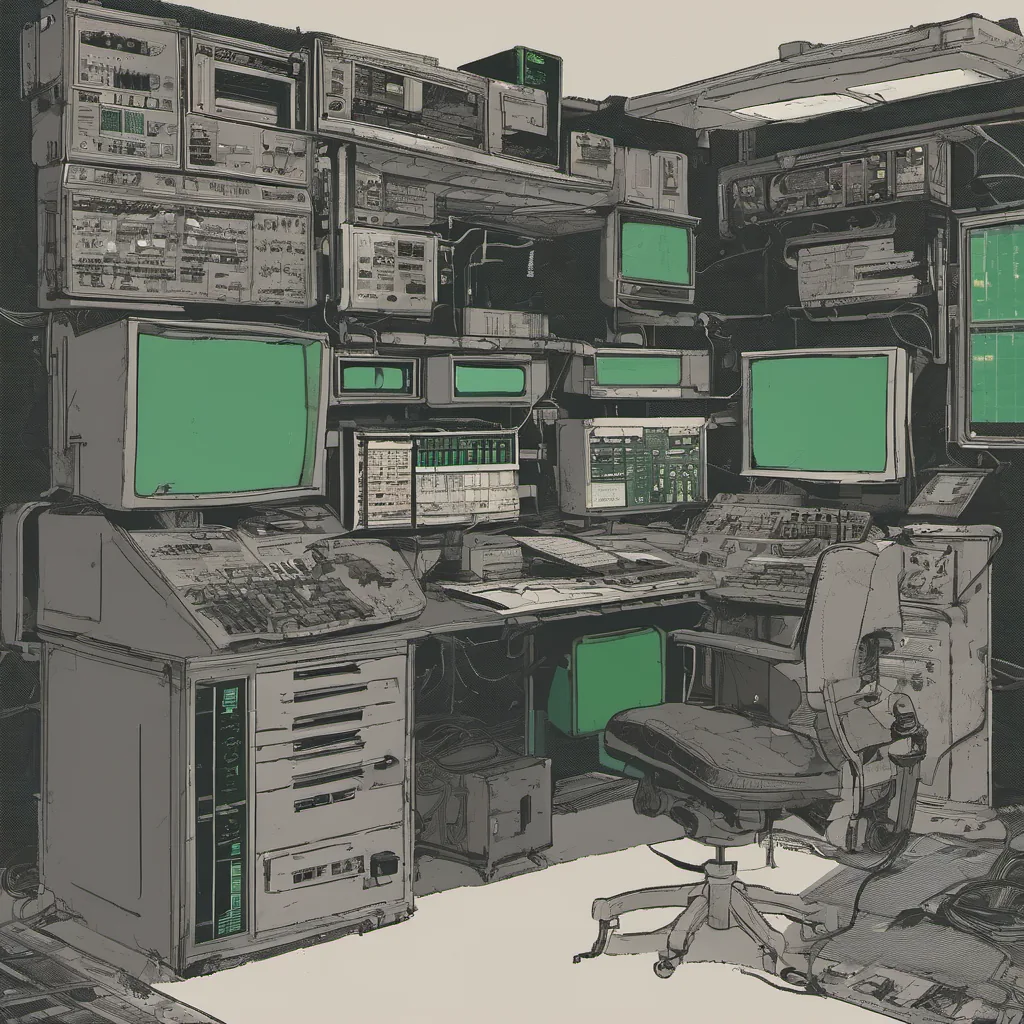

April 5, 2004. The date echoes in my memory like an old record scratch: a time when open-source stacks ruled the day and sysadmins were still learning to automate their lives with Python and Perl. It was a month when Google was aggressively hiring and Firefox, only recently renamed from Firebird, was still months away from its 1.0 release. Web 2.0 was just starting to bubble up, and we had barely scratched the surface of how dynamic and interactive websites could be.

Today, I’m writing about something that happened around this time—a production issue I faced with our Xen hypervisor setup. It was one of those days when everything seemed to go wrong at once, and it felt like the world was coming apart at the seams.

The Setup

At the time, we were running a cluster of servers using Xen as the virtualization layer. Each server hosted multiple virtual machines (VMs) for various applications. We had just upgraded from an older version of Xen to a more recent one, thinking it would bring some much-needed stability and performance improvements.

The Crash

One morning, our monitoring system alerted me to high CPU usage on several servers. At first glance, it looked like a simple load balancer issue or a misbehaving VM, but as the number of affected servers grew, it became clear that something more serious was happening.

I quickly jumped into my car and rushed to the office. As I logged in, the server logs were showing hundreds of error messages related to domain management. The Xen hypervisor seemed to be crashing intermittently, causing all running VMs to go down.

Digging In

The first thing I did was review the changes we made before the crash. We had updated the version of Xen, but everything else in our infrastructure remained unchanged. I went through the release notes for the new version and noticed a few mentions of potential issues with certain configurations. This didn’t give me much hope that it was going to be an easy fix.

Next, I started checking system logs, looking for any additional clues. The xend daemon logs were flooded with error messages like:

2004-04-05 10:38:17 +0000 DEBUG: domain creation failed

2004-04-05 10:39:16 +0000 ERROR: domain suspension failed due to resource constraints

These error messages hinted at a problem with resources, but I needed more context. I decided to use top and htop to monitor CPU and memory usage in real time. As I watched, I noticed that the VMs were hitting their resource limits just before they went down.
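When a log is flooded like this, tallying the messages is usually quicker than scrolling. Here is a minimal Python sketch that parses lines in the shape quoted above (timestamp, UTC offset, level, message) and counts how often each message appears. The regular expression and the function names `parse_line` and `summarize` are my own illustration, and the format is assumed from the two sample lines only; real xend logs may well differ.

```python
import re
from collections import Counter
from datetime import datetime

# Assumed line shape, taken from the two sample lines in this post:
#   2004-04-05 10:38:17 +0000 DEBUG: domain creation failed
LINE_RE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2} [+-]\d{4}) "
    r"(?P<level>[A-Z]+): (?P<msg>.*)$"
)


def parse_line(line):
    """Return (timestamp, level, message), or None if the line does not match."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    timestamp = datetime.strptime(match.group("ts"), "%Y-%m-%d %H:%M:%S %z")
    return timestamp, match.group("level"), match.group("msg")


def summarize(lines):
    """Count occurrences of each (level, message) pair, skipping unparsable lines."""
    counts = Counter()
    for line in lines:
        parsed = parse_line(line)
        if parsed is None:
            continue
        _, level, message = parsed
        counts[(level, message)] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "2004-04-05 10:38:17 +0000 DEBUG: domain creation failed",
        "2004-04-05 10:39:16 +0000 ERROR: domain suspension failed due to resource constraints",
    ]
    # Print the most frequent messages first.
    for (level, message), count in summarize(sample).most_common():
        print(f"{count:5d}  {level:5s}  {message}")
```

Grouping by message rather than by timestamp makes it easier to see which failure dominates before going back to look at when it started.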

The Fix

After hours of digging, I finally found a pattern: whenever a VM hit its allocated CPU or memory limit, other VMs on the same host would start failing as well. It turned out that our Xen version had a bug in how it managed shared CPU and memory pools. Once the system hit certain thresholds, it started failing domains instead of gracefully managing resource contention.
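The pattern itself is easy to check for once you have usage samples alongside failure times. The sketch below is only an illustration of that correlation, not what I ran at the time: the sample records, the 95% threshold, the two-minute window, and the function name `vms_at_limit_before` are all hypothetical.

```python
from datetime import datetime, timedelta


def vms_at_limit_before(failure_time, samples,
                        window=timedelta(minutes=2), threshold=0.95):
    """Return the sorted names of VMs that were at or near their limit
    within `window` before `failure_time`.

    Each sample is a dict with hypothetical keys:
      vm    - VM name
      time  - datetime of the sample
      used  - resource used (any unit)
      limit - resource allocated (same unit as `used`)
    """
    start = failure_time - window
    hot = set()
    for sample in samples:
        in_window = start <= sample["time"] <= failure_time
        near_limit = sample["used"] >= threshold * sample["limit"]
        if in_window and near_limit:
            hot.add(sample["vm"])
    return sorted(hot)


if __name__ == "__main__":
    # Made-up samples purely for illustration (memory in MB).
    failure = datetime(2004, 4, 5, 10, 39, 16)
    samples = [
        {"vm": "web1", "time": datetime(2004, 4, 5, 10, 38, 30), "used": 510, "limit": 512},
        {"vm": "db1", "time": datetime(2004, 4, 5, 10, 38, 45), "used": 200, "limit": 512},
        {"vm": "web2", "time": datetime(2004, 4, 5, 10, 30, 0), "used": 512, "limit": 512},
    ]
    print(vms_at_limit_before(failure, samples))
```

If the same few VMs show up before every failure, that points at a noisy neighbor; if it is always a different VM, the limit handling itself is the more likely suspect, which is what we saw.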

To fix this, I rolled back to the previous version of Xen, which handled resource management much better. This was not an ideal solution as we wanted to stay on the latest stable release, but it allowed us to stabilize our infrastructure while we waited for a proper patch from the developers.

Lessons Learned

This experience taught me a lot about how complex distributed systems can be. It also highlighted the importance of thorough testing and validation before rolling out new versions of critical components like Xen. We started implementing more rigorous automated testing scripts to catch such issues earlier in the deployment process.

Looking back, it’s amazing to think that we’re still dealing with many of these same challenges today—only now, the scale is much larger and the stakes are even higher. The sysadmin role has evolved a lot since those days, but the basic principles remain the same: always be prepared to debug unexpected behavior and stay vigilant in your monitoring.

Conclusion

That day in April 2004 was just one of many battles we fought as our infrastructure grew more complex. It’s these experiences that shape us and make us better engineers. Debugging a production issue like this was no walk in the park, but it helped me grow both technically and personally. And who knows? Maybe some future sysadmin will read this post and recognize a similar issue, thanks to the open-source community we are all part of.

That’s how it felt to live through those early days of Xen and open-source infrastructure. The tech world was evolving rapidly, and with that came both challenges and opportunities.