the config was wrong / we ran it until it melted / the wire holds the past

Title: On May 4, 2015: Embracing the Container Era with Docker

May 4, 2015 was a day that felt like the container revolution had truly arrived. Docker 1.6 had shipped a few weeks earlier, almost a year after the 1.0 release, and it was making waves well beyond the early adopters. I remember thinking, “This is it; we’re going to see a shift.”

At work, we were knee-deep in discussions about how to integrate containers into our existing infrastructure. My team and I had been using virtual machines (VMs) for years, but the promise of lightweight, isolated processes that Docker offered was too compelling to ignore. We decided to give it a shot on one of our less critical services.

The excitement was palpable as we dove in. Setting up Docker for the first time felt like magic; containers were so much faster and simpler than spinning up VMs. But as I started working with them, I quickly realized that “simpler” didn’t mean “trivial.” The learning curve was steep, and there were more moving parts under the hood than I initially anticipated.

One of our first major hurdles was figuring out how to manage dependencies between containers. We had a service that relied on a bunch of external libraries and tools, and getting them all set up in a way that worked reliably across different environments became a nightmare. Docker’s documentation suggested using `docker-compose` for this purpose, but we found it didn’t quite fit our needs. We ended up writing custom scripts to manage the dependencies manually. It was a kludge, but it got the job done.
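
For illustration, here is a minimal sketch of the ordering logic a script like that needs. It is not the script we actually ran: the container names and the `DEPENDS_ON` map are hypothetical, and it assumes Python 3.9+ for the standard-library `graphlib` module.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical containers; each name maps to the containers it must wait for.
DEPENDS_ON = {
    "feed-processor": {"queue", "cache"},
    "queue": set(),
    "cache": set(),
    "dashboard": {"feed-processor"},
}


def start_order(depends_on):
    """Return container names so that every dependency precedes its dependents."""
    try:
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as err:
        # graphlib stores the detected cycle as the second element of args.
        raise ValueError(f"circular dependency: {err.args[1]}") from err


def start_commands(depends_on):
    """Build one `docker start` argument list per container, in dependency order."""
    return [["docker", "start", name] for name in start_order(depends_on)]


if __name__ == "__main__":
    # Print the commands instead of running them, so the sketch is safe to try.
    for argv in start_commands(DEPENDS_ON):
        print(" ".join(argv))
```

A topological sort only fixes the order in which containers are started. It does not wait for a dependency to be ready to accept connections, which a real script would still have to handle.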

Another issue was scaling. Containers started quickly, but running them in production with high availability and failover was tricky. Kubernetes, which Google had announced about a year earlier, was still pre-1.0 and promised to take care of some of these challenges, but we were on the fence about whether to adopt it or stick with our own custom solutions.

Debugging was also a challenge. With a VM you could always attach to a console, but getting an interactive shell inside a container was harder, especially once its main process had crashed. We had to develop new techniques for troubleshooting, such as using `docker logs` to read a container’s output and `docker exec` to run commands inside a container that was still running.
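
As a rough sketch of how those two commands can be wrapped for repeated use, the helpers below build the argument lists and capture the output. The helper names and the `feed-processor` container name are made up for this example; `docker logs --tail` and `docker exec` are the real CLI commands.

```python
import subprocess


def logs_command(container, tail=100):
    """Argument list for reading the last `tail` log lines of a container."""
    return ["docker", "logs", "--tail", str(tail), container]


def exec_command(container, *command):
    """Argument list for running `command` inside a running container."""
    return ["docker", "exec", container, *command]


def run(argv):
    """Run a command and return (exit code, combined stdout and stderr)."""
    # docker logs replays the container's stderr on stderr, so keep both streams.
    result = subprocess.run(argv, capture_output=True, text=True)
    return result.returncode, result.stdout + result.stderr


if __name__ == "__main__":
    # Requires the docker CLI on PATH; "feed-processor" is a hypothetical name.
    code, output = run(logs_command("feed-processor", tail=50))
    print(output)
    code, output = run(exec_command("feed-processor", "env"))
    print(output)
```

Note that `docker exec` only works while the container is running. For a container that has already exited, `docker logs` is usually the first place to look.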

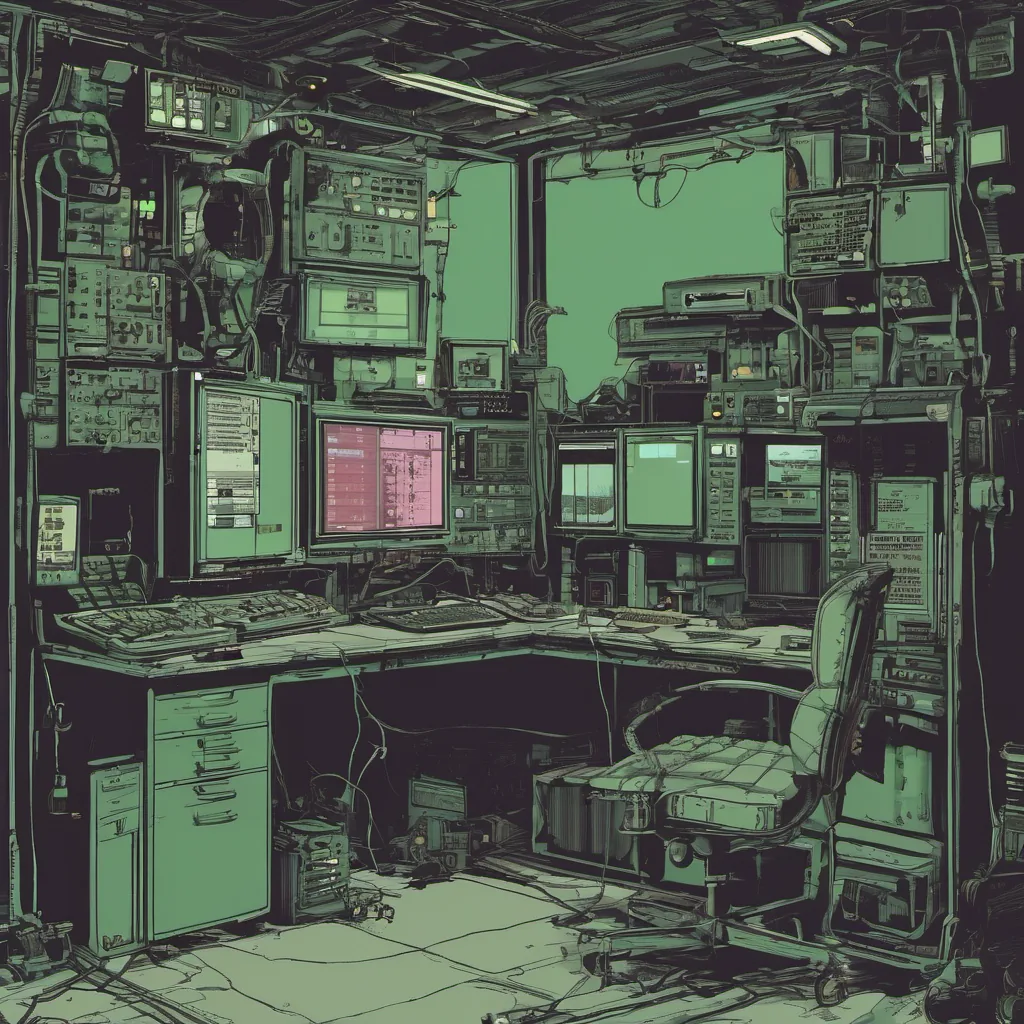

On this day in 2015, I sat at my desk late into the night, trying to debug a tricky issue with one of our services running in a container. The service was supposed to process some real-time data feeds, but it kept crashing with cryptic errors. I spent hours going through logs and checking environment variables before finally realizing that a new version of a dependency we were using had introduced a bug. Fixing the dependency took just a few lines of code, but tracking down the root cause was a battle.
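
In hindsight, a small check for version drift would have shortened that hunt. The sketch below is one way to do it, under the assumption that the service is a Python application with exact `name==version` pins; the package names in the example are placeholders, not the dependency that actually bit us.

```python
from importlib import metadata


def parse_pins(lines):
    """Parse 'name==version' lines, skipping blank lines and # comments."""
    pins = {}
    for line in lines:
        line = line.split("#", 1)[0].strip()
        if not line:
            continue
        name, sep, version = line.partition("==")
        if not sep:
            raise ValueError(f"not an exact pin: {line!r}")
        pins[name.strip().lower()] = version.strip()
    return pins


def installed_versions():
    """Map lower-cased distribution names to the versions installed here."""
    versions = {}
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:
            versions[name.lower()] = dist.version
    return versions


def find_drift(pins, installed):
    """Return {name: (pinned, actual)} where the installed version differs.

    `actual` is None when the package is not installed at all.
    """
    drift = {}
    for name, wanted in pins.items():
        actual = installed.get(name)
        if actual != wanted:
            drift[name] = (wanted, actual)
    return drift


if __name__ == "__main__":
    # Placeholder pins; in practice these would be read from a requirements file.
    pins = parse_pins(["requests==2.7.0", "six==1.9.0  # known-good version"])
    for name, (wanted, actual) in find_drift(pins, installed_versions()).items():
        print(f"{name}: pinned {wanted}, installed {actual}")
```

This is a simplification: it compares names only after lower-casing them, so a package listed with hyphens in one place and underscores in another would be reported as missing.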

Reflecting on this day, I realize how much the tech landscape has changed since then. Docker is now ubiquitous, and Kubernetes has become the default choice for many production deployments. Yet, even with all the advancements, some of those early struggles still echo in my mind. The journey to containerization isn’t just about technology; it’s also about understanding that problems you think are simple can turn out to be complex.

In the end, May 4, 2015 wasn’t just another day; it was a reminder that while we’re always chasing the next big thing in tech, sometimes the journey is as important as the destination. And for me, that’s what makes this industry so fascinating—there’s always something new to learn and figure out.
