June 4, 2007 - A Messy Weekend with Git and MySQL

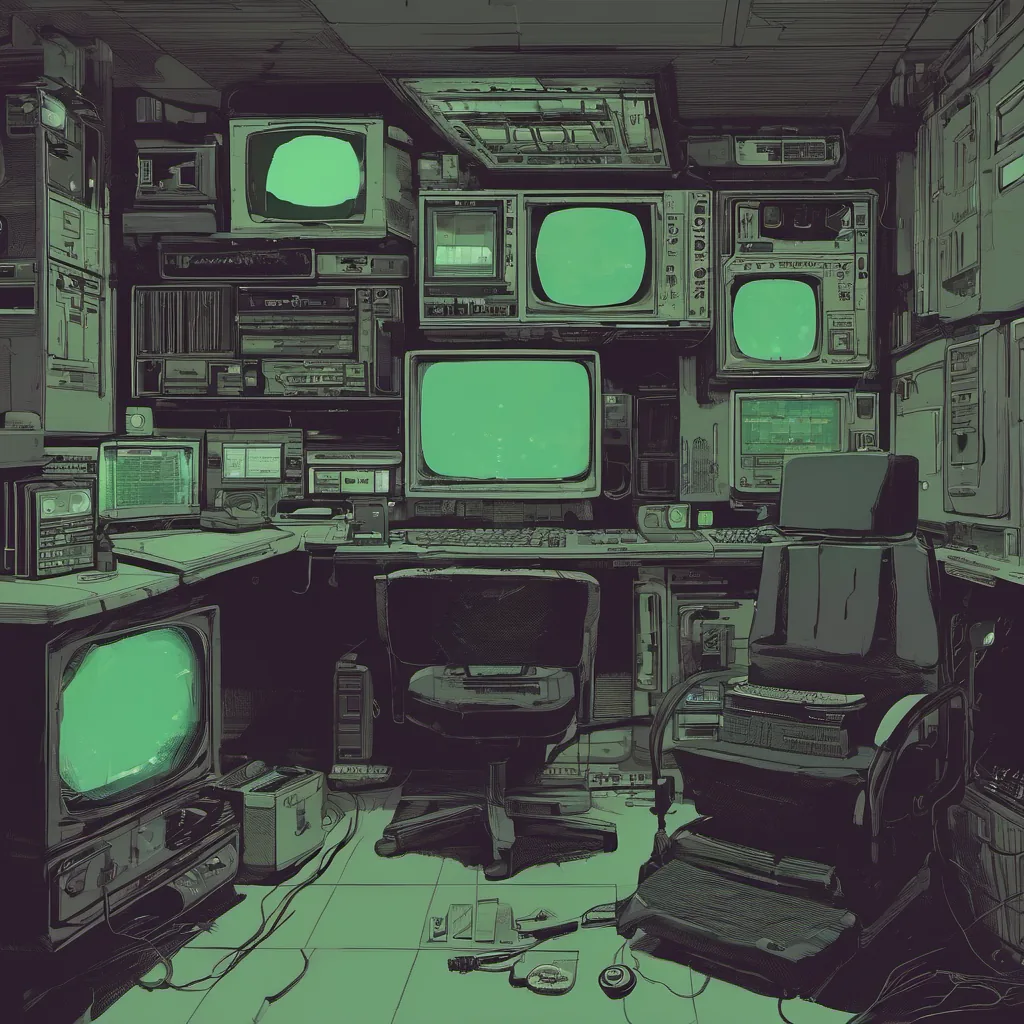

June 4, 2007. The air is thick with the smell of freshly brewed coffee as I sit down at my desk, trying to make sense of a messy weekend spent debugging our database issues. It’s been a rough couple of days, but I’m determined to get this resolved before the week gets any more hectic.

The Setup

We were using MySQL in our application and had been for years. Our deployment process was fairly standard – we’d push code changes to SVN (oh the nostalgia), and then deploy to production servers. However, things started going sideways a few days ago when multiple developers reported data inconsistencies across different environments. This was no small matter; we were handling sensitive user information and financial transactions.

Enter Git

I can never resist playing with new tools, so when one of our senior engineers introduced us to Git for version control about two months back, I was all in. The idea was to get more granular control over our codebase, especially since we were starting to see the benefits of continuous integration. At first it seemed like a no-brainer, but now I’m left with a tangled web of branches and conflicts.

The Problem

Over the weekend, as everyone else was enjoying their well-deserved Sunday, I found myself sifting through our Git history looking for clues. It’s 3 AM, and my brain is fuzzy from hours of caffeine-fueled debugging. Suddenly, it hits me – a merge conflict in one of the branches must have caused this data corruption issue.

I dive into the codebase, tracing the commit history with `git blame`, trying to figure out who introduced the bug. It turns out that it was a developer working on a feature branch who didn’t properly resolve conflicts during a rebase. The change had been pushed to our mainline and caused a cascade of issues.

Fixing the Mess

First, I needed to get everyone’s work up to date. We decided to do a forced push to overwrite the broken history on the shared repository, which meant any local work based on it would have to be re-synced afterwards. This was risky, since a forced push rewrites history that other people may already have pulled, but it was necessary given the urgency. After getting all the developers on board, we proceeded to squash and merge the offending branches.

Once everything was back in sync, I ran some SQL queries to verify data integrity. Phew! It took a while, but eventually the system started behaving as expected. By morning, most of our team had arrived at work, and they were pleasantly surprised to find things working again without any outages.
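
For the curious, the kind of integrity check I mean looks roughly like this. It is a minimal sketch, not the queries I actually ran: the `users` and `transactions` tables, their columns, and the function names are all made up for illustration, and it uses Python’s built-in `sqlite3` module as a stand-in for MySQL so it runs without a database server.

```python
import sqlite3


def find_orphaned_transactions(conn):
    """Return ids of transactions whose user_id has no matching users row."""
    rows = conn.execute(
        """
        SELECT t.id
        FROM transactions AS t
        LEFT JOIN users AS u ON u.id = t.user_id
        WHERE u.id IS NULL
        ORDER BY t.id
        """
    ).fetchall()
    return [row[0] for row in rows]


def build_demo_db():
    """Create a tiny in-memory database with one deliberately broken row."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE transactions (
            id INTEGER PRIMARY KEY,
            user_id INTEGER NOT NULL,
            amount_cents INTEGER NOT NULL
        );
        INSERT INTO users (id, name) VALUES (1, 'alice'), (2, 'bob');
        INSERT INTO transactions (id, user_id, amount_cents) VALUES
            (10, 1, 500),
            (11, 2, 1250),
            (12, 3, 999);  -- user 3 does not exist: an orphaned row
        """
    )
    return conn


if __name__ == "__main__":
    demo = build_demo_db()
    # Hypothetical data: only transaction 12 points at a missing user.
    print(find_orphaned_transactions(demo))
```

The same `LEFT JOIN ... WHERE ... IS NULL` pattern should carry over to MySQL more or less unchanged; the table and column names would obviously be your own.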

Lessons Learned

This incident really drove home the importance of proper Git workflow practices. I’ve always been a firm believer in having clear branching strategies—especially with shared environments like ours where multiple developers are working on different features simultaneously. It’s easy to get lost in the details, especially when you’re juggling multiple tasks.

In hindsight, we should have implemented some form of code review process or automated testing that could catch these kinds of issues before they hit production. But hey, experience is a great teacher, right?
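
As one example of the kind of automated check that might have helped, here is a small sketch that scans text for leftover conflict markers before it gets anywhere near the mainline. This is an illustration under my own assumptions rather than something we had in place: the function name is made up, and the pattern only covers the default `<<<<<<<`, `=======`, and `>>>>>>>` markers.

```python
import re

# Git writes these seven-character markers into a file it cannot merge cleanly.
# Requiring a space or end of line after the marker avoids matching longer
# runs such as a row of eight or more '=' used as an underline.
CONFLICT_MARKER = re.compile(r"^(<{7}|={7}|>{7})( |$)")


def find_conflict_markers(text):
    """Return (line_number, line) pairs that look like leftover conflict markers."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if CONFLICT_MARKER.match(line):
            hits.append((number, line))
    return hits


if __name__ == "__main__":
    # Hypothetical file contents with an unresolved conflict left in.
    sample = (
        "a = 1\n"
        "<<<<<<< HEAD\n"
        "b = 2\n"
        "=======\n"
        "b = 3\n"
        ">>>>>>> feature\n"
        "c = 4\n"
    )
    for number, line in find_conflict_markers(sample):
        print(f"line {number}: {line}")
```

A check like this will not catch a conflict that was resolved badly, only one that was not resolved at all, and a line of exactly seven `=` characters in a text file would be a false positive. It is cheap, though, and it could run in a commit hook or as an early step in a build.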

Looking Forward

As I type this up on Monday morning, I’m feeling relieved but also a bit embarrassed at having to debug something so basic. It’s moments like these that remind me why I love tech—every problem is an opportunity to learn and grow.

The world of technology is buzzing with all sorts of exciting developments right now. AWS EC2 and S3 are quickly becoming must-haves, and the iPhone, due out later this month, is already stirring up plenty of excitement. But for now, I’m just grateful that we managed to resolve this mess before it caused any serious damage.

Stay tuned—more adventures await in the world of tech!