
Debugging Apache in the Y2K Aftermath

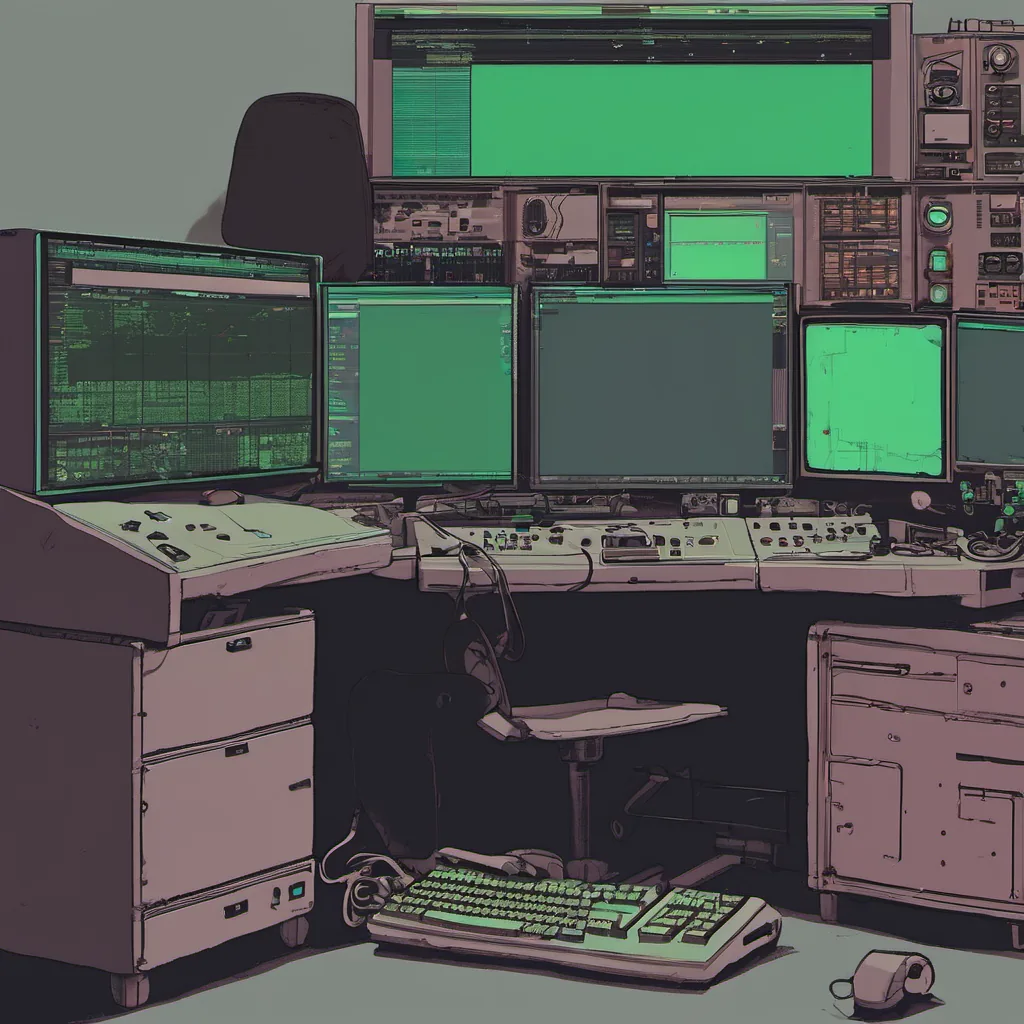

June 4th, 2001 - Today marks another day in the long and often thankless task of keeping the web running. I’ve been working as a platform engineer for the past five years, navigating through the ups and downs of IT. Right now, we’re dealing with Apache - an old friend from back when the web was still a new kid on the block.

I’ve always admired how Apache has held up over the years. Sure, it’s not perfect, but it’s reliable and does its job without too much fuss. We use it heavily for serving static content and some dynamic pages. But sometimes, those pesky bugs rear their heads, and today was one of those days.

It started with a strange bug report from our operations team: “Apache is crashing on heavy load.” My heart sank. Another Apache crash? I’ve seen them before, and they’re always a pain to debug: a segfault kills the process on the spot, and the error log usually records little more than the signal. We also use mod_perl for some of our pages, and it can be finicky.

I grabbed my laptop and logged into one of our production servers. The logs were filled with error messages like “segmentation fault” and “access violation.” I had a good idea where to look first: not Apache’s core as such, but the part of it that interacts with mod_perl.

After a few hours of debugging, I started to notice something odd. The crashes seemed to occur only when we hit high traffic on specific pages. This narrowed down our search significantly. But why were these particular pages causing issues?
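For anyone who wants to look for the same kind of pattern, here is a rough sketch of the idea, not the script we actually ran. It is written in Python and assumes the error log carries child-exit notices of the form `child pid N exit signal Segmentation fault (11)`. The sample lines and the function name `segfaults_per_minute` are made up for illustration. It buckets segfault notices by minute so the spikes can be compared against access-log traffic for the same minutes.

```python
import re
from collections import Counter

# Assumed format of the notice written when a child process dies on SIGSEGV.
SEGFAULT_RE = re.compile(
    r"^\[(?P<stamp>[^\]]+)\] \[notice\] child pid (?P<pid>\d+) "
    r"exit signal Segmentation fault \(11\)"
)


def segfaults_per_minute(lines):
    """Count segfault notices per minute, keyed as 'Mon D HH:MM'."""
    counts = Counter()
    for line in lines:
        match = SEGFAULT_RE.match(line)
        if not match:
            continue  # ignore every other kind of log line
        # Timestamp looks like "Mon Jun  4 10:12:01 2001".
        _weekday, month, day, clock, _year = match.group("stamp").split()
        counts[f"{month} {day} {clock[:5]}"] += 1
    return counts


# Hypothetical sample lines, not taken from real logs.
SAMPLE = [
    "[Mon Jun  4 10:12:01 2001] [notice] child pid 1234 exit signal Segmentation fault (11)",
    "[Mon Jun  4 10:12:40 2001] [notice] child pid 1240 exit signal Segmentation fault (11)",
    "[Mon Jun  4 10:13:05 2001] [error] File does not exist: /var/www/html/favicon.ico",
    "[Mon Jun  4 10:15:59 2001] [notice] child pid 1301 exit signal Segmentation fault (11)",
]

if __name__ == "__main__":
    for minute, count in sorted(segfaults_per_minute(SAMPLE).items()):
        print(minute, count)
```

Minutes with a cluster of segfaults are the ones worth lining up against the access log to see which pages were being requested at the time.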

I dove into the code and found what appeared to be a race condition in how Apache was handling Perl requests. It’s always those edge cases that bite you the hardest. Apache serves many requests at once, so any state shared between them has to be handled carefully, and mod_perl adds another layer of complexity on top of that.

To fix it, I added locks around our critical sections. This wasn’t a simple change: mod_perl is tuned for performance, and locks can introduce contention or, worse, deadlocks. But the risk of Apache crashing on us was too high to leave things as they were.
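The general shape of that kind of fix can be sketched as follows. This is a minimal illustration in Python, not our mod_perl code: the `HitCounter` class, the `/report` page name, and the worker counts are all hypothetical. The point is only that the read-modify-write on shared state happens while a lock is held, so concurrent workers cannot interleave halfway through an update.

```python
import threading


class HitCounter:
    """Hypothetical shared state: per-page hit counts updated by many workers."""

    def __init__(self):
        self._hits = {}
        self._lock = threading.Lock()

    def record(self, page):
        # Critical section: read the old value, add one, write it back.
        # Holding the lock makes the three steps atomic with respect to
        # other threads calling record() or total().
        with self._lock:
            self._hits[page] = self._hits.get(page, 0) + 1

    def total(self, page):
        with self._lock:
            return self._hits.get(page, 0)


def hammer(counter, page, times):
    """Simulate one worker recording many hits on the same page."""
    for _ in range(times):
        counter.record(page)


def run(workers=8, per_worker=10_000):
    """Start several workers against one counter and return the final count."""
    counter = HitCounter()
    threads = [
        threading.Thread(target=hammer, args=(counter, "/report", per_worker))
        for _ in range(workers)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter.total("/report")


if __name__ == "__main__":
    print(run())  # expected: workers * per_worker
```

The trade-off is the one mentioned above: every worker now queues on the same lock, so the critical section should stay as small as possible.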

After implementing the changes, we tested thoroughly in staging environments. The initial results were promising—the crashes stopped! We deployed to production with some trepidation but optimism. For a while, everything seemed fine until…

Just as I thought we had finally fixed it, the ops team reported another crash. This time, it was from a different part of our codebase. It felt like déjà vu all over again.

I decided to take a step back and look at how we were handling requests more broadly. Perhaps there was something deeper going on with our load distribution or cache invalidation strategies. I spent the next few days auditing our entire request flow, from the edge servers to the backend databases.

In the end, it turned out that a subtle interaction between mod_perl and our caching layer had been causing the crashes. Once we isolated this issue and updated both components, everything started working smoothly.

This experience reminded me why I love working with open-source tools like Apache. The community is incredibly supportive, and you learn so much by tackling these challenges head-on. Sure, it’s frustrating when things go wrong, but there’s always a way to find the root cause and fix it.

Today, as we sit in the Y2K aftermath, surrounded by the remnants of the dot-com bubble, I’m reminded that tech is about more than just the latest trends or tools. It’s about problem-solving, persistence, and the satisfaction of making something work when others fail.

Apache will always be a part of my journey, a testament to the hard work and dedication required to keep the web running smoothly. Here’s to many more years of debugging, learning, and growing with it.
