the old server hums / the rollout was never finished / packet loss remains

Title: The Fourth of July Bug Hunt

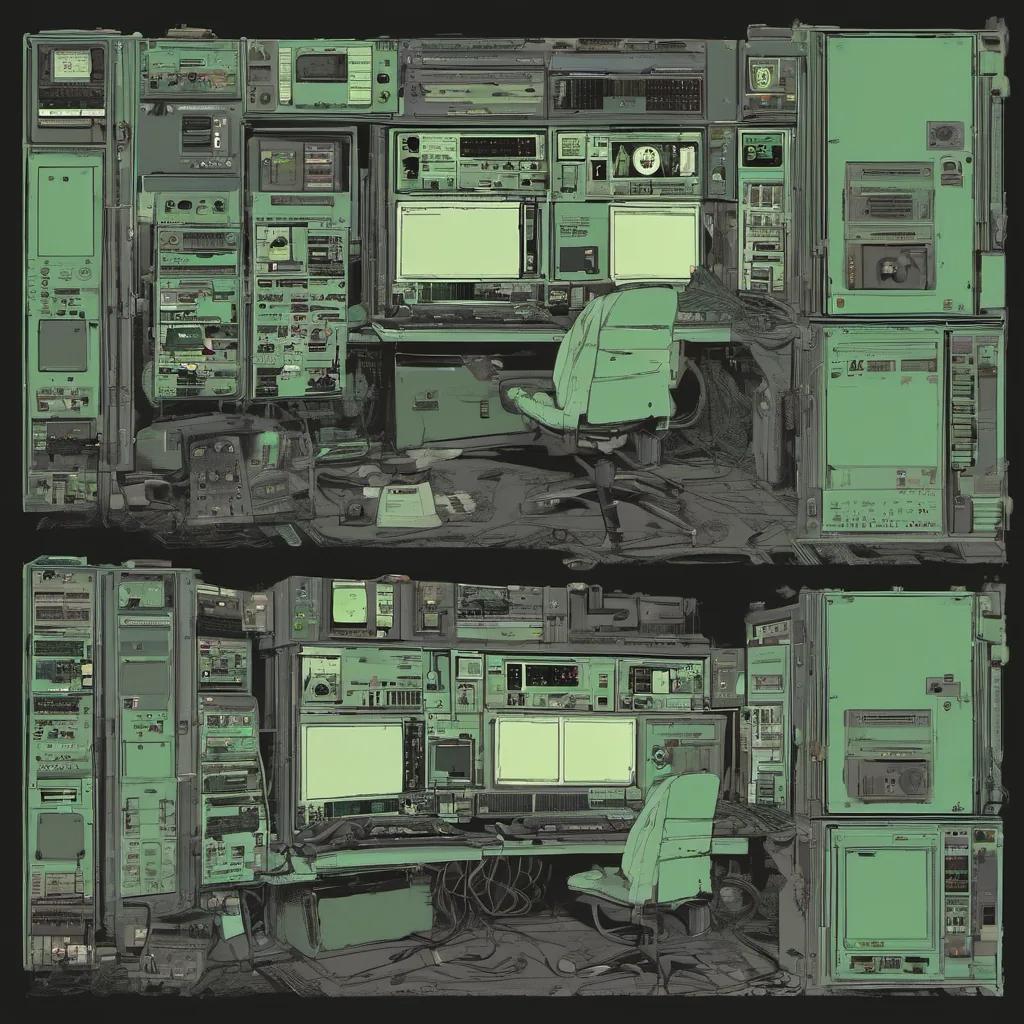

July 4th, 2005: just another day at the office, if a bit more festive than usual, with red, white, and blue shirts draped across the racks in our server room. It was the era of open-source stacks like LAMP (Linux, Apache, MySQL, PHP), and I found myself knee-deep in a bug that wouldn’t let go.

I was part of a small team building an e-commerce platform for a startup, and it wasn’t going smoothly. We served our customers from MySQL, PHP, and Apache, but something kept breaking. The site would crash at random during high-traffic periods, and our logs didn’t tell us much. The frustration was palpable.

That day, I got a call from the ops team. “Something’s going bonkers,” they said. “The server just died on us again.” I grabbed my laptop and rushed over to the server room, trying not to look like someone who had been up all night.

The first thing I did was run `top` on the server console. It showed a high CPU load, but nothing seemed out of place. I then ran `ps aux | grep mysql` to see if any MySQL processes were hogging resources. Nothing jumped out at me, so I decided to dig deeper with `strace`.

After about an hour, something caught my eye—a series of system calls that looked suspicious. They appeared to be related to file operations on a specific directory used for storing temporary files. It hit me then—could it be our temp files were causing the issue?

I quickly checked the permissions and ownership settings on those directories. Everything seemed fine at first glance, but something wasn’t right. I ran `lsof +L1`, which lists open files whose link count has dropped below one, meaning files that have been deleted but are still held open by a process. Sure enough, MySQL was holding on to a bunch of these temp files.
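For readers who want to see what that check amounts to, here is a rough Python approximation of what `lsof +L1` reports. It is a sketch rather than what we ran at the time, and it rests on some assumptions: a Linux `/proc` filesystem, the kernel’s habit of appending ` (deleted)` to the symlink target of an unlinked file, and a hypothetical function name, `deleted_open_files`. A file whose real name happens to end in ` (deleted)` would show up as a false positive.

```python
import os

DELETED_SUFFIX = " (deleted)"


def deleted_open_files(proc_root="/proc"):
    """Yield (pid, path) for open file descriptors that point at deleted files.

    Hypothetical helper: walks <proc_root>/<pid>/fd and inspects each symlink.
    """
    for entry in sorted(os.listdir(proc_root)):
        if not entry.isdigit():
            continue  # skip non-process entries such as "self" or "meminfo"
        fd_dir = os.path.join(proc_root, entry, "fd")
        try:
            fds = os.listdir(fd_dir)
        except OSError:
            continue  # process exited, or we lack permission to look
        for fd in sorted(fds):
            try:
                target = os.readlink(os.path.join(fd_dir, fd))
            except OSError:
                continue  # descriptor closed while we were scanning
            if target.endswith(DELETED_SUFFIX):
                yield int(entry), target[: -len(DELETED_SUFFIX)]


if __name__ == "__main__":
    for pid, path in deleted_open_files():
        print(pid, path)
```

Run as root, this prints one line per deleted-but-open file. Without root, it only sees the processes the current user is allowed to inspect.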

Realizing the problem, I increased the `innodb_log_file_size` setting in our MySQL configuration as a stopgap. Strictly speaking, that setting sizes InnoDB’s redo log files rather than managing temp files, so any relief it gave was probably indirect. After applying the change, the server seemed more stable, but we knew it wasn’t the end of the story. We needed to figure out a more permanent solution.

That night, I sat down and started thinking about how to refactor our codebase to handle these temporary files better. The next morning, I presented my findings to the team and proposed adding a dedicated temp file management module. It was met with some resistance—some argued that it would add unnecessary complexity. But after showing them the impact of not having proper file handling in place, they agreed.
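To give a feel for what such a module does, here is a minimal sketch in Python. Our actual code was PHP and looked different; the names `scratch_file` and `sweep_stale` are hypothetical and exist only for illustration. The idea is simply that every temp file is created through one helper that guarantees cleanup, with a periodic sweep as a safety net for anything left behind by a crash.

```python
import os
import tempfile
import time
from contextlib import contextmanager


@contextmanager
def scratch_file(directory, prefix="job-"):
    """Create a temp file in `directory` and remove it on exit, even on error."""
    fd, path = tempfile.mkstemp(prefix=prefix, dir=directory)
    try:
        # The handle is closed when this inner block exits, before removal.
        with os.fdopen(fd, "w+b") as handle:
            yield handle, path
    finally:
        try:
            os.remove(path)
        except FileNotFoundError:
            pass  # already cleaned up by someone else


def sweep_stale(directory, max_age_seconds, now=None):
    """Delete regular files older than `max_age_seconds`; return removed paths."""
    now = time.time() if now is None else now
    removed = []
    for name in sorted(os.listdir(directory)):
        path = os.path.join(directory, name)
        try:
            if os.path.isfile(path) and now - os.path.getmtime(path) > max_age_seconds:
                os.remove(path)
                removed.append(path)
        except OSError:
            continue  # file vanished or is not ours to delete
    return removed
```

A caller would write `with scratch_file("/some/scratch/dir") as (handle, path):` around the work that needs the file, and a scheduled job would call `sweep_stale` on the same directory with whatever age limit suits the workload.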

The change took weeks to implement and test thoroughly, but it paid off. Our system became more robust, and we finally saw a smoother user experience during peak times. The victory was sweet, but it came with hard work and some late nights.

Looking back at 2005, the tech world was changing rapidly. Open-source tools like LAMP were taking over, and new players like Firefox were shaking things up. But for us on the ground level, the real challenges lay in making these systems reliable every day. Tools like strace became our best friends, and we learned to appreciate the value of good logging and monitoring.

As I sit here today, writing this blog entry, I can’t help but think about how far we’ve come since those days. The sysadmin role has evolved significantly, with scripting languages like Python and Perl playing an ever larger part in automating infrastructure tasks. But no matter how much technology changes, the basics of problem-solving, debugging, and teamwork remain constant.

So here’s to July 4th—may your servers run smoothly on this Independence Day, and may we continue to innovate and push boundaries.
