
Debugging at Scale: A Day in the Life of a Platform Engineer

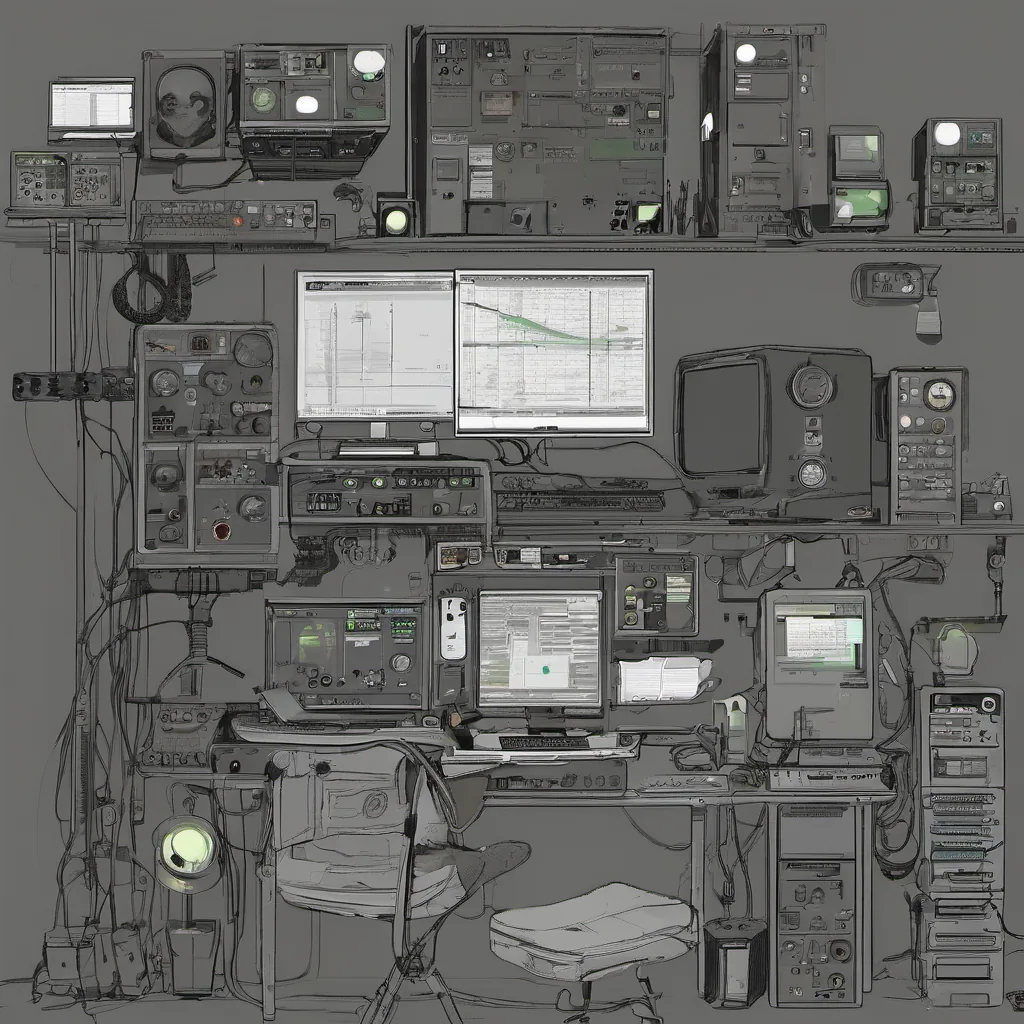

March 3rd, 2014 started as just another day for me as a platform engineer, but in hindsight it felt like a significant moment. Containers were gaining traction, and I was dealing with a cluster of Docker containers that had gone rogue. That day highlights the tension between technology’s power and its unpredictable nature.

The Morning Grumble

I woke up to a notification from our monitoring system: “CPU utilization is spiking on our production environment.” A quick glance at the logs showed hundreds of containers spawning like crazy, each one consuming more resources than it should. This kind of issue can quickly turn into an epic debugging session, or worse, a full-blown incident if left unchecked.

The Hunt Begins

I started digging by looking at the Docker container logs and examining the network traffic with tcpdump. It was clear that something was awry; the containers were making too many unnecessary requests to other services. I needed to figure out which one was causing this behavior, but it wasn’t a simple matter of finding a single bad actor.

I went back to the Docker command line. Kubernetes would not be announced by Google until June of that year, so there was no kubectl to reach for; this was plain Docker. The cluster looked relatively stable until I hit the /metrics endpoint for one of our services and saw exponential growth in its request count. That’s when it clicked: a poorly implemented service was triggering a cascading failure.
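Here is a minimal Python sketch of what exponential growth in requests looks like in raw numbers, and how you might flag it from successive readings of a request counter. It assumes, hypothetically, that the service exposes a monotonically increasing request count sampled at a fixed interval. The function name, the 1.5× threshold, and the three-interval window are illustrative choices, not what we actually ran that day.

```python
from typing import Sequence


def looks_exponential(samples: Sequence[float],
                      threshold: float = 1.5,
                      min_intervals: int = 3) -> bool:
    """Return True if request growth looks like it is compounding.

    `samples` are successive readings of a monotonically increasing request
    counter (hypothetically scraped from a /metrics endpoint at a fixed
    interval). Growth is flagged when each of the last `min_intervals`
    per-interval deltas is at least `threshold` times the one before it.
    """
    # Requests served during each sampling interval.
    deltas = [b - a for a, b in zip(samples, samples[1:])]
    # min_intervals ratios need min_intervals + 1 deltas.
    recent = deltas[-(min_intervals + 1):]
    # Too little data, idle intervals, or a counter reset (negative delta):
    # don't guess.
    if len(recent) < min_intervals + 1 or any(d <= 0 for d in recent):
        return False
    return all(cur / prev >= threshold for prev, cur in zip(recent, recent[1:]))


if __name__ == "__main__":
    doubling = [0, 100, 300, 700, 1500, 3100]  # deltas: 100, 200, 400, 800, 1600
    steady = [0, 100, 200, 300, 400, 500]      # deltas: all 100
    print(looks_exponential(doubling))  # True
    print(looks_exponential(steady))    # False
```

Comparing each interval's delta to the previous one, rather than checking a fixed request-rate ceiling, can flag a runaway while the absolute numbers still look modest.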

The Realization

It dawned on me that the issue wasn’t just about fixing a bad container but about understanding how all these containers interact with each other. I pulled up the service’s configuration files and found the culprit: a misconfigured environment variable that caused it to make excessive API calls. Our static analysis tools would never have caught this; it slipped through because environment variables are only resolved at runtime, a consequence of the dynamic nature of our setup.
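Because an environment variable is only read when the container starts, one way to catch this class of mistake is to validate such values at startup and refuse to run if they are out of range. Below is a minimal Python sketch of that idea. It assumes a hypothetical polling interval named `POLL_INTERVAL_SECONDS` with illustrative bounds; the post does not identify the real variable, and this is not the code from the incident.

```python
import os
from typing import Mapping

# Hypothetical setting and bounds: the misconfigured variable isn't named in
# the post, so POLL_INTERVAL_SECONDS and its valid range are illustrative.
VAR_NAME = "POLL_INTERVAL_SECONDS"
DEFAULT_S = 30.0
MIN_S, MAX_S = 1.0, 3600.0


def load_poll_interval(env: Mapping[str, str] = os.environ) -> float:
    """Read the poll interval from the environment and fail fast if it is bad.

    Raising at startup makes a misconfigured container crash visibly instead
    of quietly hammering the services it polls.
    """
    raw = env.get(VAR_NAME)
    if raw is None:
        return DEFAULT_S
    try:
        value = float(raw)
    except ValueError:
        raise ValueError(f"{VAR_NAME}={raw!r} is not a number") from None
    # The chained comparison is False for NaN, so NaN is rejected too.
    if not MIN_S <= value <= MAX_S:
        raise ValueError(
            f"{VAR_NAME}={value} is outside [{MIN_S}, {MAX_S}] seconds"
        )
    return value


if __name__ == "__main__":
    print(load_poll_interval({}))                # 30.0
    print(load_poll_interval({VAR_NAME: "15"}))  # 15.0
    try:
        # Would poll every 5 ms if accepted; rejected at startup instead.
        load_poll_interval({VAR_NAME: "0.005"})
    except ValueError as exc:
        print(exc)
```

A crash-on-start is noisy, but it surfaces in deployment rather than as a slow-building load spike on neighboring services.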

The Fix

Armed with this knowledge, I edited the container’s Dockerfile to set the correct environment variable and pushed a new version. Watching the logs, I saw the immediate impact: CPU utilization stabilized, and the requests normalized. It was a satisfying moment of resolution, but it also highlighted the challenges we face in managing large-scale, dynamic environments.

Reflections

That day reminded me that while Docker made containerization more accessible, it’s not a silver bullet. Managing services at scale requires constant vigilance, robust monitoring, and an understanding of how each component interacts with the others. The tech landscape was evolving rapidly, but the core principles of good engineering (thorough testing, diligent debugging, and resilience) remained paramount.

The Big Picture

Looking back, this incident felt like a microcosm of what was happening in the tech world at large. Much as 2048, released a few days later, would soon capture people’s imaginations with its simple yet addictive gameplay, containerization and microservices were capturing the imagination of the industry. It was an exciting time to be part of it all.

That day was just a small chapter in the grand narrative of platform engineering, but it encapsulated so much: struggles, successes, and the constant learning process that defines this field.