$ cat post/nmap-on-the-lan-/-we-named-it-temporary-once-/-the-key-still-exists.md

nmap on the lan / we named it temporary once / the key still exists

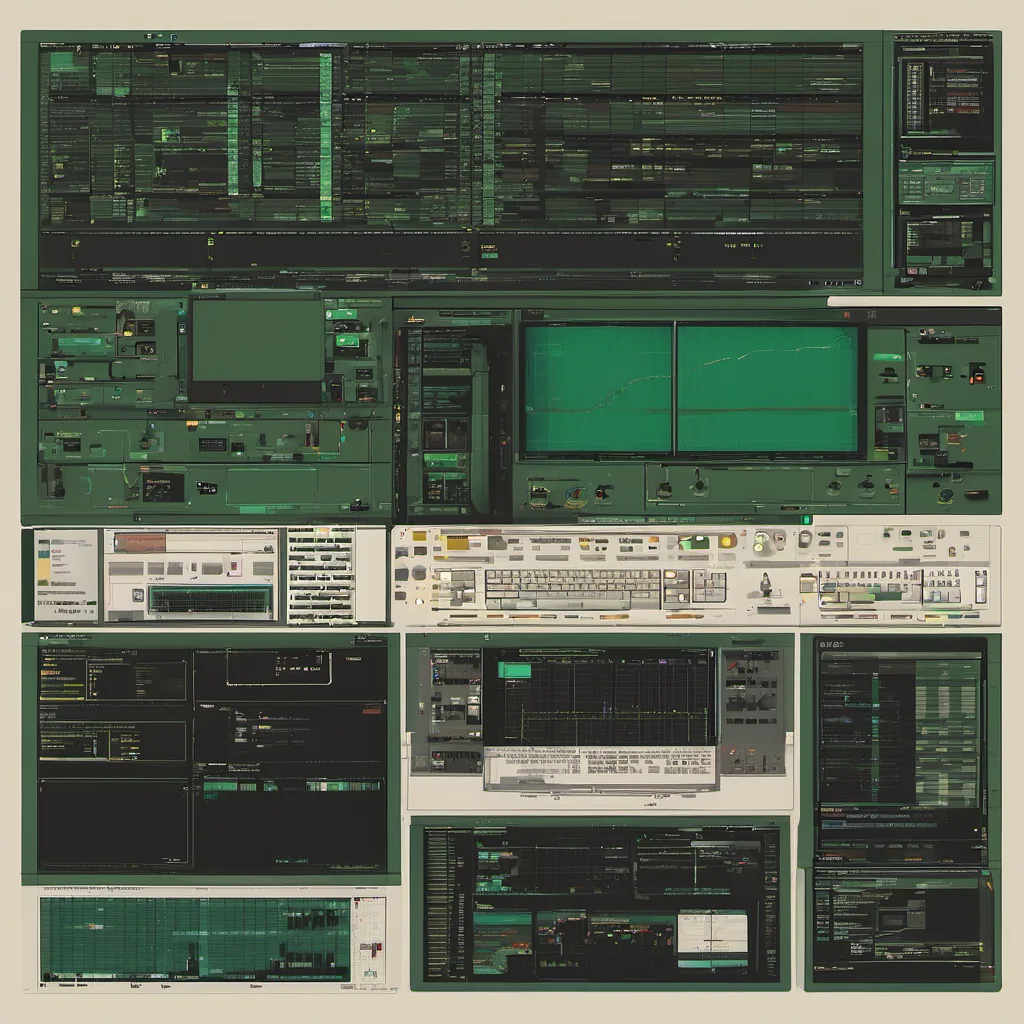

Title: Debugging the Real-Time Dilemma: A LAMP Stack’s Nightmare

February 3, 2003. In tech years that is an eternity ago, but I still remember it as a crisp late-winter day when I sat down with my team to debug a major issue that had been plaguing our web application for weeks. It wasn’t glamorous or sexy; it was just another day of digging through logs and source code.

Our stack? LAMP: Linux (Red Hat 7.2), Apache, MySQL, PHP 4.3. The application itself was a mix of custom scripts and a few open-source gems that had been cobbled together over the past couple of years. At the time it was cutting-edge tech; by today’s standards, it looks like the stone age.

The problem? A sudden surge in real-time data processing requests. Our app was built on a batch-oriented model: queries hit the database, and PHP did the heavy lifting server-side. But our users wanted something faster. They wanted it now. A few years later that expectation would get a name, Web 2.0, but at the time it felt revolutionary.

One day, in the middle of a quiet afternoon (or was it early evening?), we noticed an alarming increase in response times for our core query functions. Users were complaining about lag, which wasn’t normal. We started logging and monitoring everything: network traffic, database queries, server CPU usage, even disk I/O. Nothing seemed out of the ordinary until we got into the nitty-gritty details.

Turns out, it was a classic case of a cache buster gone wrong. We were using query strings to invalidate our caching layer, which worked fine for smaller datasets. But every distinct query string looks like a brand-new request to a cache, so as our dataset grew and traffic increased, more and more requests skipped the cache and hammered the database directly. The kicker? Our PHP scripts were not optimized for this kind of workload. They were slow, and they kept the server’s CPU pegged.
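To make that failure mode concrete, here is a minimal sketch in Python (not the original PHP, which isn't shown in this post). The parameter names in `CACHE_BUSTER_PARAMS`, the `/report.php` URL, and the dictionary standing in for the caching layer are all assumptions for illustration. It compares a cache keyed on the full URL with one keyed on a normalized URL that ignores the buster.

```python
from urllib.parse import urlsplit, parse_qsl, urlencode

# Hypothetical cache-buster parameter names; not taken from the original app.
CACHE_BUSTER_PARAMS = {"_", "ts", "nocache"}


def naive_key(url: str) -> str:
    """Use the full URL as the cache key, cache buster included."""
    return url


def normalized_key(url: str) -> str:
    """Drop cache-buster parameters and sort the rest,
    so equivalent requests share one cache key."""
    parts = urlsplit(url)
    params = sorted(
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name not in CACHE_BUSTER_PARAMS
    )
    query = urlencode(params)
    return parts.path + ("?" + query if query else "")


def count_backend_hits(urls, key_fn) -> int:
    """Simulate a cache in front of a database and count the misses."""
    cache = {}
    misses = 0
    for url in urls:
        key = key_fn(url)
        if key not in cache:
            cache[key] = "result"  # stands in for an expensive query
            misses += 1
    return misses


# 100 requests for the same report, each with a different buster value.
sample_urls = [f"/report.php?id=7&ts={n}" for n in range(100)]

naive_misses = count_backend_hits(sample_urls, naive_key)
normalized_misses = count_backend_hits(sample_urls, normalized_key)
```

With the naive key, every one of the 100 requests misses and reaches the backend; with the normalized key, only the first one does. Whether normalizing keys is appropriate depends on why the buster was added in the first place, so treat this as a way to see the problem rather than a drop-in fix.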

We spent days poring over logs, rewriting code to make it more efficient, and tweaking Apache configuration files to handle requests better. We also looked at the MySQL configuration: increasing buffer sizes, optimizing indexes, you name it. But one piece of the problem just wouldn’t go away: the PHP scripts. They were like a slow-moving train wreck.
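As a rough illustration of what "optimizing indexes" buys you, the sketch below uses Python's built-in `sqlite3` module as a stand-in for MySQL, purely so it runs without a server. The `events` table, its columns, and the index name are hypothetical. It asks the database for its query plan before and after adding an index on the filtered column.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the planner's description of how it will run the query.
    The human-readable detail is the last column of each plan row."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)


conn = sqlite3.connect(":memory:")

# Hypothetical table, invented for this example.
conn.execute(
    "CREATE TABLE events ("
    "id INTEGER PRIMARY KEY, user_id INTEGER, created_at TEXT)"
)
conn.executemany(
    "INSERT INTO events (user_id, created_at) VALUES (?, ?)",
    [(n % 50, f"2003-02-{(n % 28) + 1:02d}") for n in range(1000)],
)

sql = "SELECT id FROM events WHERE user_id = ?"

# Without an index, the planner has to scan the whole table.
plan_before = query_plan(conn, sql, (7,))

# With an index on the filtered column, it can search the index instead.
conn.execute("CREATE INDEX idx_events_user_id ON events (user_id)")
plan_after = query_plan(conn, sql, (7,))

print("before:", plan_before)
print("after: ", plan_after)
```

The exact wording of the plan varies between SQLite versions, but the first plan reports a table scan and the second names `idx_events_user_id`. MySQL's `EXPLAIN` serves the same purpose, with a different output format.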

I remember staying up late, coding, refactoring, and benchmarking. I tried everything from optimizing queries to implementing caching strategies in PHP itself. We even looked into switching from MySQL to something more robust like PostgreSQL, but we didn’t have time for that kind of major overhaul. So, we focused on the immediate problem.

The solution? A combination of techniques. First, we implemented a more efficient query structure and optimized our indexes. Then, we added a caching layer using Memcached, which drastically reduced load times. Finally, we tweaked PHP itself by reducing memory usage and optimizing for speed. The process was arduous but ultimately rewarding. We saw our response times drop significantly, and users noticed the difference almost immediately.
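The caching step follows what is usually called the cache-aside pattern: check the cache first, fall back to the database on a miss, then store the result with an expiry time. Below is a minimal sketch in Python, with an in-process dictionary standing in for Memcached. The `TTLCache` class and `cached_fetch` helper are names invented for this example, not our original code and not a Memcached client API.

```python
import time


class TTLCache:
    """Tiny in-process cache with per-entry expiry.
    A stand-in for an external cache such as Memcached."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            # Entry is stale: evict it and report a miss.
            del self._store[key]
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self.clock() + self.ttl)


def cached_fetch(cache, key, loader):
    """Cache-aside read: return the cached value if present,
    otherwise call loader(key) and cache its result.
    Note: a loader result of None is treated as a miss and never cached."""
    value = cache.get(key)
    if value is None:
        value = loader(key)
        cache.set(key, value)
    return value
```

A call might look like `cached_fetch(cache, "report:7", load_report_from_db)`, where `load_report_from_db` is whatever function runs the expensive query. The time-to-live is the trade-off knob: a longer value means fewer database hits but staler data.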

Looking back, this experience taught me so much about real-time data processing on a LAMP stack. It showed that while open-source tools are powerful, they can also be limiting in certain situations. It highlighted the importance of understanding your system’s bottlenecks and not just slapping on patches without proper analysis.

Debugging this issue felt like solving a puzzle where every piece mattered. We worked together as a team to understand what was going wrong and find solutions that could scale. In the end, it wasn’t about picking the perfect tool; it was about making do with what we had and pushing ourselves to innovate within those constraints.

That’s why I remember this day so vividly. It’s not just because of the problem, but because of how we solved it together. That kind of teamwork is something you can only get from hands-on experience in the trenches.
