$ cat post/the-floppy-disk-spun-/-the-namespace-collision-held-/-the-merge-was-final.md

the floppy disk spun / the namespace collision held / the merge was final

Title: April 2023 Journal: Embracing LLMs in the Cloud

April’s been a busy month. The AI/LLM infrastructure explosion post-ChatGPT has everyone reevaluating their cloud strategies, and I’ve found myself knee-deep in some pretty gnarly ops work to make sure our platform can handle it.

Bob Lee’s passing this month was a stark reminder of how fast things can change. A former CTO of Square and the creator of Cash App, he was most recently chief product officer at MobileCoin; now his absence is felt deeply in the community. It’s humbling, and it reminds me that even with all this technology, we’re still dealing with very human issues.

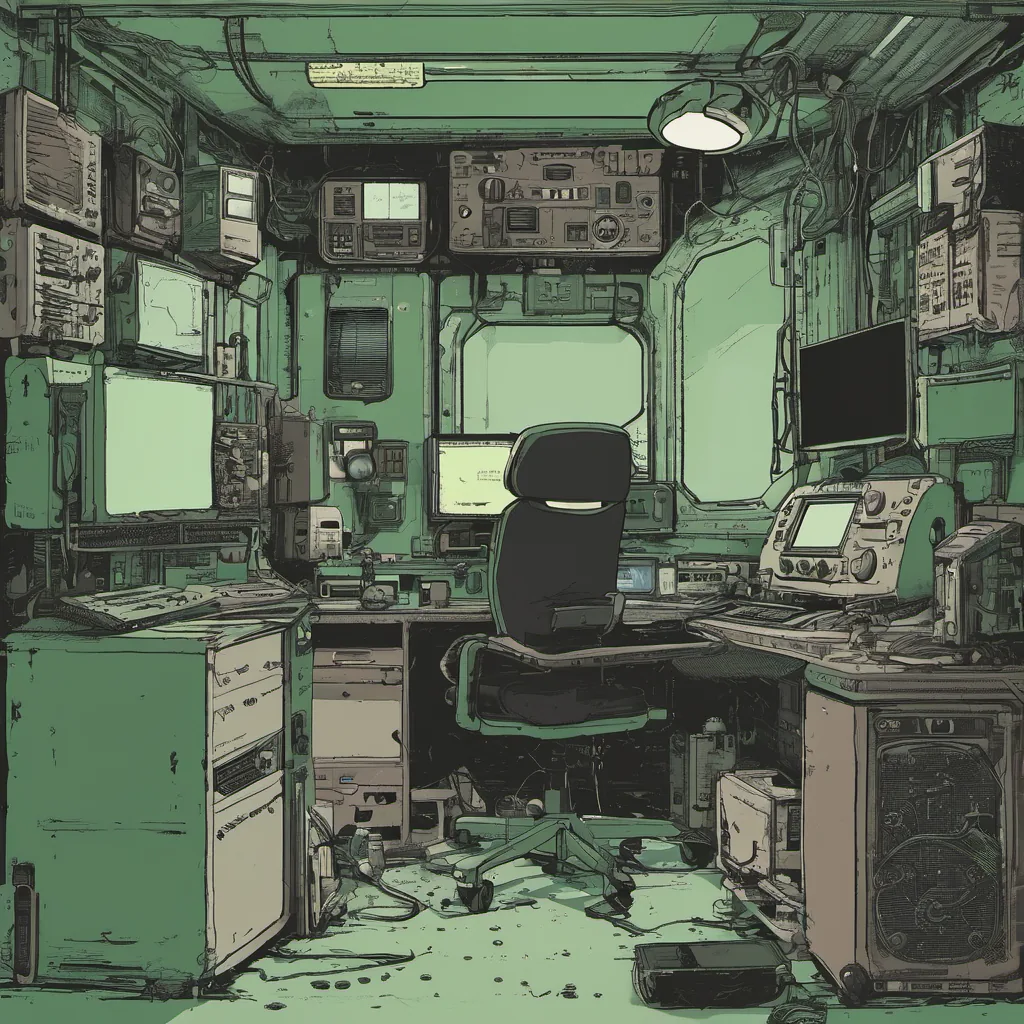

One project I’ve been working on lately involves integrating StableLM into our internal tools. The challenge isn’t just spinning up instances and feeding them data; it’s also managing costs and ensuring reliability. We’re running these models on K8s in GCP, which has its own set of gotchas. For instance, we had a pretty nasty bug where some requests timed out in what first looked like network latency between our microservices and the LLM container. Once I finally tracked it down, the real cause turned out to be a misconfigured network policy that dropped packets before they reached the right service.
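To make the network policy point concrete, here is a minimal sketch of the kind of rule involved, not our actual config. It builds a Kubernetes `NetworkPolicy` manifest as a plain Python dict and dumps it to JSON, which `kubectl apply -f` accepts. The policy name, the `ml` namespace, the `app` labels, and port 8080 are all hypothetical placeholders.

```python
import json


def allow_ingress_policy(name, namespace, target_labels, client_labels, port):
    """Build a NetworkPolicy that lets client pods reach target pods on one TCP port."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Pods this policy applies to (the LLM container in this sketch).
            "podSelector": {"matchLabels": target_labels},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    # A bare podSelector here only matches pods in the policy's
                    # own namespace; callers in other namespaces also need a
                    # namespaceSelector, or their traffic is dropped.
                    "from": [{"podSelector": {"matchLabels": client_labels}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


# Hypothetical names and labels, for illustration only.
policy = allow_ingress_policy(
    name="allow-tools-to-llm",
    namespace="ml",
    target_labels={"app": "llm-server"},
    client_labels={"app": "internal-tools"},
    port=8080,
)

manifest_json = json.dumps(policy, indent=2)
print(manifest_json)
```

Once any ingress policy selects a pod, everything not explicitly allowed is denied, so a label typo or a missing namespace selector shows up as silent timeouts rather than a clear error.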

On the flip side, platform engineering is becoming increasingly mainstream. I’ve been in several debates about whether we should standardize on a single cloud provider for our various services. The argument between staying with GCP for its strong Kubernetes and MLOps tooling and moving toward AWS for its sheer scale and flexibility got quite heated. In the end, pragmatism won out: GCP’s tooling is more integrated and easier to manage, at least for now.

Speaking of management, I had a long chat with our DevOps team about developer experience. We’ve been focusing heavily on making sure that setting up and running these models is as simple as possible. One idea we’re exploring is creating a serverless function layer using Cloud Functions to abstract away the complexity of provisioning and managing resources. It’s early days, but if it works, it could save us from a lot of manual labor.

FinOps continues to be a topic of intense conversation within the company. With cloud costs skyrocketing, we’re constantly looking for ways to optimize our usage. DORA metrics are being widely adopted here as well, and they’re driving home how critical it is to keep an eye on lead time for changes and change failure rate. We’ve seen some impressive gains in deployment speed, but there’s still a lot of room for improvement.
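For a sense of what tracking two of the DORA metrics looks like, here is a rough sketch that computes median lead time and change failure rate from a list of deployment records. The record shape (`committed`, `deployed`, `failed`) and the sample data are made up for illustration; real numbers would come from whatever CI/CD and incident tooling you have.

```python
from datetime import datetime
from statistics import median

# Hypothetical deployment records: when the change was committed, when it
# reached production, and whether it caused a failure that needed remediation.
deployments = [
    {"committed": datetime(2023, 4, 3, 9, 0), "deployed": datetime(2023, 4, 3, 15, 0), "failed": False},
    {"committed": datetime(2023, 4, 5, 10, 0), "deployed": datetime(2023, 4, 6, 10, 0), "failed": True},
    {"committed": datetime(2023, 4, 10, 8, 0), "deployed": datetime(2023, 4, 10, 10, 0), "failed": False},
    {"committed": datetime(2023, 4, 12, 13, 0), "deployed": datetime(2023, 4, 12, 17, 0), "failed": False},
]


def median_lead_time_hours(records):
    """Median time from commit to production deploy, in hours."""
    return median(
        (r["deployed"] - r["committed"]).total_seconds() / 3600 for r in records
    )


def change_failure_rate(records):
    """Fraction of deployments that resulted in a failure."""
    return sum(1 for r in records if r["failed"]) / len(records)


print(f"median lead time: {median_lead_time_hours(deployments):.1f} h")
print(f"change failure rate: {change_failure_rate(deployments):.0%}")
```

The median is used instead of the mean so that one slow, day-long change does not dominate the lead time figure.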

One of the things that struck me this month was reading about space elevator projects. It’s crazy to think about what might be possible if we can just get past the initial hurdles. The tech isn’t even the hard part; it’s the logistics and politics. I also couldn’t help but chuckle at the Semaphore full-body keyboard, a concept so far out there that I’m not sure when, or if, it will ever catch on.

As for myself, I’m trying to find balance between all these moving parts. It’s been a good reminder that no matter how much tech changes, there’s always some fundamental human work to be done: debugging, arguing, and learning. We’ll see what the rest of the month brings, but so far it’s been a wild ride.

This post captures a month of managing platforms with a growing share of AI/LLM infrastructure, along with the broader tech trends and industry events that shaped it.