$ cat post/debugging-my-day-job:-a-y2k-aftermath-tale.md

Debugging My Day Job: A Y2K Aftermath Tale

April 3, 2000. I woke up to the sound of my old dial-up modem connecting to the internet, a screech that never failed to remind me how much I hated early mornings. The dot-com bubble had only just begun to deflate, and Linux was steadily taking over more and more server workloads. In my corner of the tech world, we were still mopping up Y2K issues and leaning on workhorses like Apache and Sendmail.

Today, I was facing a particularly gnarly bug in our core application that was acting up on some of our production servers. The app was responsible for processing payments, and it was crucial that it worked flawlessly. Our team had been hammering away at this issue all week, but today felt like the day we might finally get to the bottom of it.

The Setup

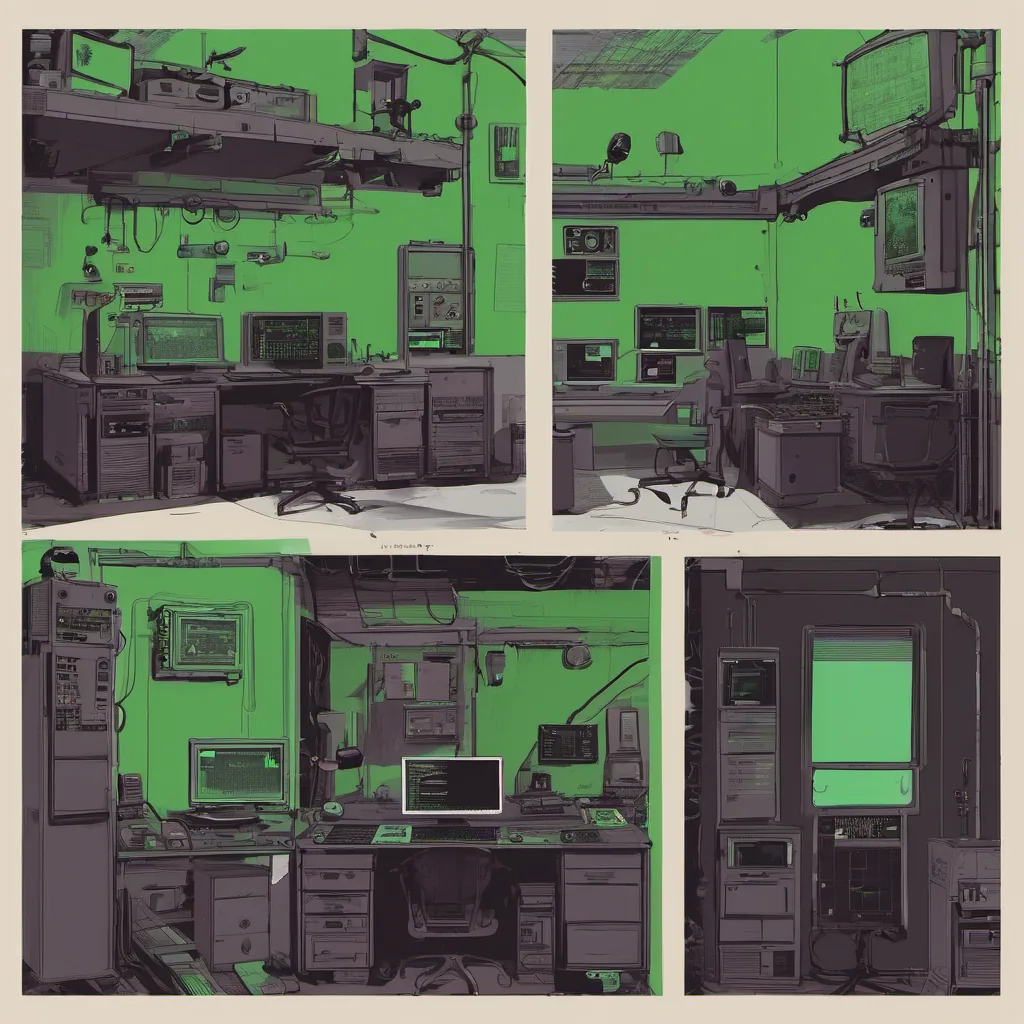

Our servers were running Red Hat Linux 5.2 on older AMD K6-2 processors. Performance tuning wasn’t exactly a top priority back then, and each machine had only about 128MB of RAM. Our application was written in Perl, which I still thought was the greatest language ever, except when it came to debugging. The codebase was a tangled mess of interconnected modules, and tracing what went wrong was like trying to untangle a bowl of spaghetti.

The Bug

The issue was sporadic. Sometimes, our application would freeze for minutes at a time, then resume normally. Other times, it would crash completely, spitting out strange error messages before vanishing into nothingness. After much head-scratching and countless grep commands, I finally found what looked like the culprit: an unhandled exception in one of our module dependencies.

I dove into the code, trying to make sense of how this could happen so randomly. The problem turned out to be a race condition within a subroutine that handled database queries. It seemed that under heavy load, the subroutine would sometimes return before the database query had completed, leading to inconsistent state and eventual deadlock.
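To make that failure mode concrete, here is a minimal sketch of the same kind of race. It is written in Python rather than the Perl the application used, and every name in it (`FakeQuery`, `fetch_buggy`, `fetch_fixed`) is hypothetical; it illustrates the pattern, not the original code.

```python
import threading


class FakeQuery:
    """Stand-in for a slow database query (hypothetical, for illustration)."""

    def __init__(self):
        self.result = None
        self.done = threading.Event()      # set once the result is available
        self._release = threading.Event()  # lets the caller decide when the "database" answers

    def start(self):
        # Run the query in the background, as a busy server might.
        worker = threading.Thread(target=self._run, daemon=True)
        worker.start()
        return worker

    def _run(self):
        self._release.wait()               # simulates the database being slow under load
        self.result = {"status": "paid"}
        self.done.set()

    def release(self):
        self._release.set()


def fetch_buggy(query):
    """Returns immediately, even if the query has not finished yet."""
    query.start()
    return query.result


def fetch_fixed(query, timeout=5.0):
    """Waits for completion and fails loudly instead of returning partial state."""
    query.start()
    if not query.done.wait(timeout):
        raise TimeoutError("query did not complete in time")
    return query.result
```

Calling `fetch_buggy` while the query is still held up returns `None`, which is the kind of incomplete state the rest of the program then trips over. `fetch_fixed` blocks until the result is ready or the timeout expires.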

The Fix

Fixing it wasn’t easy. I spent hours rewriting the offending subroutine, adding checks for incomplete data and ensuring that all operations were properly synchronized. Along the way, I learned to wrap risky calls in eval blocks and to die with meaningful messages, the kind of disciplined exception handling we definitely needed more of. (Try::Tiny, a module that makes this much cleaner, would not arrive on CPAN until years later.)

After making these changes, I pushed the code to our development server and watched it like a hawk. The application ran for hours without any issues—a huge relief. But I knew better than to declare victory just yet. We still had to deploy this change to production and hope that it held up under real-world conditions.

The Night Shift

That night, we went into full production support mode. I stayed late, monitoring the servers and keeping an eye out for any signs of trouble. For a while, everything seemed fine. But around 3 AM, our application started misbehaving again. This time, it wasn’t freezing; it was giving us a new set of error messages that made no sense.

I scrambled to my desk, pulling up the logs and trying to figure out what could be causing this new issue. After a few hours of frustration and one dead-end grep search after another, I finally noticed something: one of our load balancers had been acting up, sending incomplete requests to our application servers.

It turned out that even after all the work we’d done, there was still more to learn about how our infrastructure handled network traffic. After a bit more tweaking and some additional logging, we managed to stabilize things by ensuring that all requests were fully formed before being sent on.
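A rough sketch of what a “fully formed” check with extra logging might look like is below. It is in Python and assumes HTTP-style requests with a `Content-Length` header, which is an assumption rather than a detail of the setup described here; the function name and log messages are hypothetical as well.

```python
import logging

log = logging.getLogger("request_check")


def is_complete(raw: bytes) -> bool:
    """Return True only if the headers are terminated and the body is all there."""
    head, separator, body = raw.partition(b"\r\n\r\n")
    if not separator:
        log.warning("rejecting request: headers not terminated (%d bytes)", len(raw))
        return False

    declared = 0  # a request without Content-Length is treated as having no body
    for line in head.split(b"\r\n")[1:]:  # skip the request line
        name, _, value = line.partition(b":")
        if name.strip().lower() == b"content-length":
            try:
                declared = int(value.strip().decode("ascii"))
            except ValueError:
                log.warning("rejecting request: unreadable Content-Length %r", value)
                return False

    if declared < 0 or len(body) < declared:
        log.warning("rejecting request: body has %d of %d bytes", len(body), declared)
        return False
    return True
```

A request whose body is shorter than its declared length is rejected and logged, which leaves a trail in the logs the next time a truncated request shows up.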

Reflections

Looking back at this experience now, I can see how much has changed in the tech world since 2000. Back then, we didn’t have CI/CD pipelines or containerization; we just had a lot of raw hardware and hope that our code wouldn’t fall apart under stress. Debugging was a constant battle against Murphy’s Law, with every line of code potentially hiding a lurking bug.

Today, I appreciate the tools and practices that make my job easier—although sometimes it still feels like the spaghetti monster is just around the corner, ready to attack again. But for now, our application runs smoothly, handling payments without a hitch. And isn’t that what matters most?