$ cat post/grep-through-the-dark-log-/-i-traced-it-to-the-library-/-the-daemon-still-hums.md

grep through the dark log / I traced it to the library / the daemon still hums

Kubernetes Dominance and the Quest for Stability

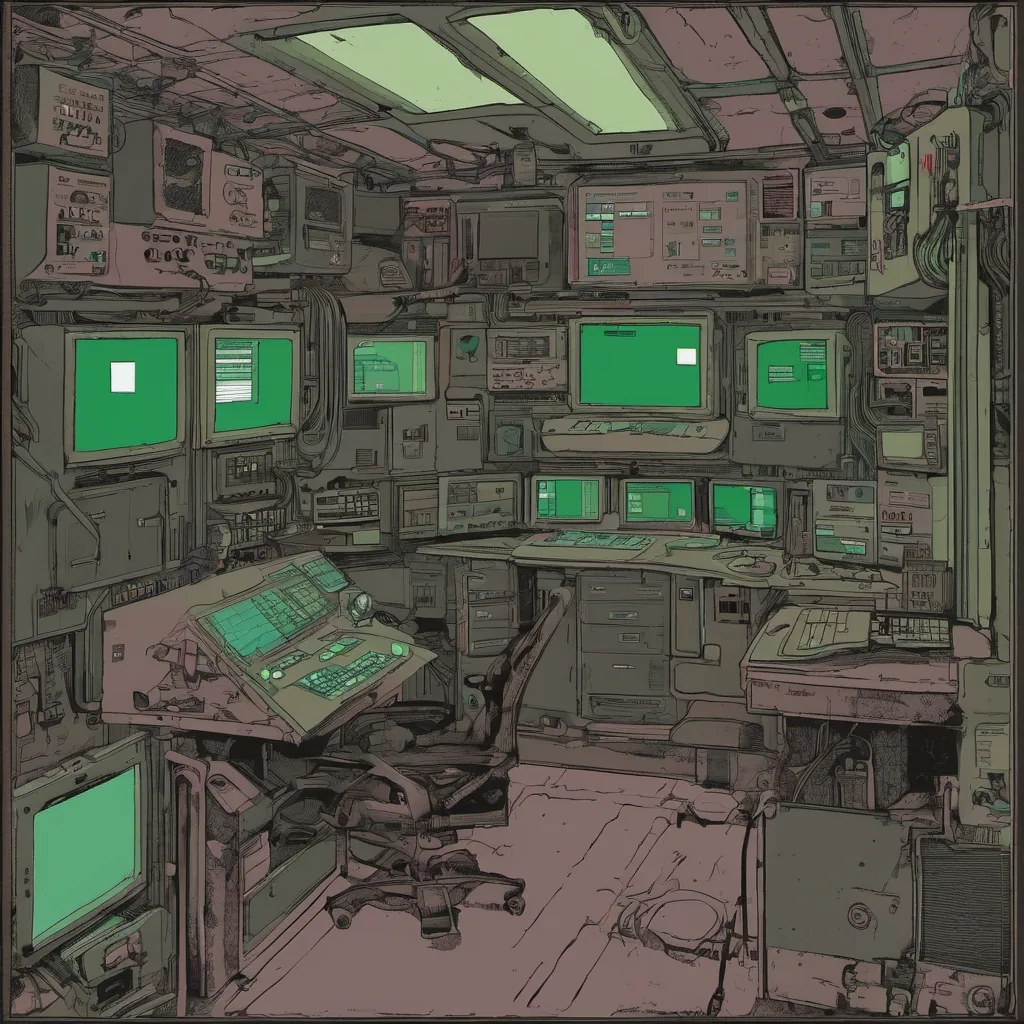

July 2, 2018. The sun was setting on another long San Francisco summer day as I sat in my home office, staring at a cluster of nodes that seemed to be just hanging out there, waiting for something—or someone—to make them do something useful.

It had been about six months since Kubernetes became the de facto standard for container orchestration. We had adopted it with great fanfare because everyone was talking about how wonderful it would be. And while it was certainly better than what we had been using before, I couldn’t help but feel that we were dealing with more moving parts than ever.

Our setup ran on Kubernetes, with Helm charts packaging the manifests for each application we deployed. But as the number of services grew, so did the complexity. We started seeing more and more deployments failing, rolling back, or just plain not working as expected.

One particular issue stood out to me: we had a service that kept failing during deployment. The logs were filled with cryptic errors like “failed to pull image,” but when I ran the same container locally on my laptop, it worked fine. It was driving me crazy!

I spent hours trying to figure out what was going wrong—was it an issue with Docker? With Kubernetes? Was it something in our environment that we had forgotten to set up properly?
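For readers chasing a similar "failed to pull image" error, here is a minimal sketch of one way to narrow it down. It is an illustration, not the script used at the time. It assumes you have saved the output of `kubectl get pods -o json` and want to list the containers stuck in the `ErrImagePull` or `ImagePullBackOff` waiting states. The function name and the sample pod data are hypothetical.

```python
# Waiting reasons Kubernetes reports when a container image cannot be pulled.
IMAGE_PULL_REASONS = {"ErrImagePull", "ImagePullBackOff"}


def find_image_pull_failures(pod_list):
    """Return (pod, container, image, reason, message) tuples for containers
    that are waiting because their image could not be pulled.

    `pod_list` is a dict shaped like the output of `kubectl get pods -o json`.
    """
    failures = []
    for pod in pod_list.get("items", []):
        pod_name = pod.get("metadata", {}).get("name", "<unknown>")
        statuses = pod.get("status", {}).get("containerStatuses") or []
        for cs in statuses:
            waiting = cs.get("state", {}).get("waiting")
            if waiting and waiting.get("reason") in IMAGE_PULL_REASONS:
                failures.append((
                    pod_name,
                    cs.get("name"),
                    cs.get("image"),
                    waiting["reason"],
                    waiting.get("message", ""),
                ))
    return failures


# Hypothetical sample data for illustration only; real input would come from
# json.load() on a saved `kubectl get pods -o json` dump.
SAMPLE_POD_LIST = {
    "items": [
        {
            "metadata": {"name": "orders-7d9f"},
            "status": {
                "containerStatuses": [
                    {
                        "name": "orders",
                        "image": "registry.example.com/orders:1.4.2",
                        "state": {
                            "waiting": {
                                "reason": "ImagePullBackOff",
                                "message": "Back-off pulling image",
                            }
                        },
                    }
                ]
            },
        },
        {
            "metadata": {"name": "billing-5c2a"},
            "status": {
                "containerStatuses": [
                    {
                        "name": "billing",
                        "image": "registry.example.com/billing:2.0.0",
                        "state": {"running": {"startedAt": "2018-07-02T18:00:00Z"}},
                    }
                ]
            },
        },
    ]
}

if __name__ == "__main__":
    for pod, container, image, reason, message in find_image_pull_failures(SAMPLE_POD_LIST):
        print(f"{pod}/{container}: {reason} for {image} ({message})")
```

When an image pulls fine on a laptop but not in the cluster, the difference is often in what the node can see: registry credentials, the exact tag, or network access to the registry. Listing the affected image names is a cheap first step before digging into any of those.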

After a particularly frustrating debugging session, I decided to take a break and clear my head. That’s when I stumbled upon a piece about teams collapsing hundreds of microservice “problem children” into just one superstar. It made me think: was all this complexity really necessary? Were we just adding layers of abstraction without gaining any real benefits?

But as much as it pained me to admit it, we were stuck with our current architecture for now. So I dove back into the problem.

I decided to take a closer look at how Helm was managing deployments. Maybe there was something wrong with our configuration or how we were deploying the images. After fiddling around with some changes, I managed to get the service up and running without any issues during deployment. But it still felt like there had to be a better way.

As I sat back and looked at all this work—writing YAML files for Helm, managing Kubernetes resources, and trying to keep everything in sync—I couldn’t help but wonder if we were overcomplicating things.

A few days later, I found myself reading about GitOps. The idea of using Git as a single source of truth for infrastructure configuration resonated with me: the desired state lives in a repository, and automation keeps the cluster in line with it. It felt like a simpler way to manage our deployments without having to juggle all the moving parts by hand. And since more and more teams seemed to be adopting it, maybe it was time for us to give it a try.

So I started researching how to implement GitOps in our environment. The idea of using tools like Flux or Argo CD seemed promising, but there were still so many questions—how would we handle rollbacks? How would we ensure that only the right changes made it into production?
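To make the rollback question concrete, here is a minimal sketch of the reconciliation idea behind GitOps. It is a toy model, not how Flux or Argo CD are implemented: the desired state is a plain dict standing in for what a Git commit declares, the live state is a dict standing in for the cluster, and every name in it (`plan_changes`, `reconcile`, `COMMITS`, the image tags) is hypothetical.

```python
def plan_changes(desired, live):
    """Compare desired state (from Git) with live state (from the cluster).

    Both arguments map a service name to the image it should run.
    Returns (to_apply, to_delete) as sorted lists of service names.
    """
    to_apply = sorted(name for name, spec in desired.items() if live.get(name) != spec)
    to_delete = sorted(name for name in live if name not in desired)
    return to_apply, to_delete


def reconcile(desired, live):
    """Return a new live state that matches the desired state."""
    to_apply, to_delete = plan_changes(desired, live)
    new_live = dict(live)
    for name in to_apply:
        new_live[name] = desired[name]   # create or update
    for name in to_delete:
        del new_live[name]               # prune what Git no longer declares
    return new_live


# Hypothetical history: each entry is the state declared by one commit.
COMMITS = [
    {"api": "example/api:1.0", "worker": "example/worker:1.0"},
    {"api": "example/api:1.1", "web": "example/web:1.0"},
]

if __name__ == "__main__":
    live = {}
    live = reconcile(COMMITS[1], live)   # deploy the latest commit
    print("after deploy:  ", live)
    live = reconcile(COMMITS[0], live)   # rollback: reconcile to the older commit
    print("after rollback:", live)
```

In this model a rollback is nothing special: reverting the commit changes the desired state, and the same reconcile step brings the cluster back. Gating what reaches production then becomes a question of who can merge to the branch being reconciled, which a sketch like this does not address.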

These thoughts kept swirling around in my head as I continued to work on other projects. But with each passing day, I found myself increasingly drawn back to this problem. The complexity of our Kubernetes setup was becoming more and more overwhelming.

On one particularly quiet afternoon, I sat down and started sketching out a plan for migrating to GitOps. It wasn’t easy—there were still so many unknowns—but something about it felt right. Maybe we could simplify things by focusing on a single source of truth and using automation tools to manage our deployments.

As the sun set over San Francisco once again, I left my desk feeling a mix of excitement and trepidation. The road ahead would be long and filled with challenges, but at least now I had a direction to follow.

In the coming weeks, I’ll be sharing more about how we tackled this transition and what lessons we learned along the way. For now, though, it’s time to put on my debugging hat once again and dive into some more YAML.

Until next time, Brandon