$ cat post/kubernetes-chaos:-a-tale-of-debugging-and-resilience.md

Kubernetes Chaos: A Tale of Debugging and Resilience

May 1, 2017. The day is slipping by, and with it comes what feels like the dawn of a new era in tech. Kubernetes has been making waves for months now, but today feels like a turning point. Helm is still in its early days, Istio has just hit version 0.1.6, and serverless is starting to catch the fancy of enterprises who can afford it.

I remember the excitement when we started experimenting with Kubernetes at work. The promise of a portable, self-healing container orchestration system was too good to pass up. But as with any new technology, there were growing pains. A few months in, our clusters started acting up. Pods would randomly crash and restart, and services would intermittently go down.

It was a Friday afternoon when I finally had time to dig into the logs and find out what was happening. The root cause was a simple network issue: one of the nodes in our cluster was misconfigured, causing it to occasionally drop packets. Kubernetes, being as robust as it is, tried to recover by restarting pods, but that created more chaos than stability.
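
In hindsight, a quick tally of restarts per node would have pointed at the bad machine much sooner. Here is a minimal sketch of that idea, assuming you have the parsed output of `kubectl get pods -o json` in hand. The function name `restarts_by_node` and the sample pod and node names are made up for illustration; this is not the script we actually ran.

```python
from collections import Counter


def restarts_by_node(pod_list):
    """Sum container restart counts per node.

    `pod_list` is the parsed JSON document from `kubectl get pods -o json`.
    """
    totals = Counter()
    for pod in pod_list.get("items", []):
        # Pods that have not been scheduled yet have no nodeName.
        node = pod.get("spec", {}).get("nodeName", "<unscheduled>")
        statuses = pod.get("status", {}).get("containerStatuses", [])
        totals[node] += sum(s.get("restartCount", 0) for s in statuses)
    return totals


# Hypothetical sample data; pod and node names are invented.
sample = {
    "items": [
        {"metadata": {"name": "web-1"}, "spec": {"nodeName": "node-a"},
         "status": {"containerStatuses": [{"restartCount": 0}]}},
        {"metadata": {"name": "web-2"}, "spec": {"nodeName": "node-b"},
         "status": {"containerStatuses": [{"restartCount": 7}]}},
        {"metadata": {"name": "api-1"}, "spec": {"nodeName": "node-b"},
         "status": {"containerStatuses": [{"restartCount": 3},
                                          {"restartCount": 2}]}},
        {"metadata": {"name": "api-2"}, "spec": {"nodeName": "node-a"},
         "status": {"containerStatuses": [{"restartCount": 1}]}},
    ]
}

# Print nodes from most to fewest restarts.
for node, count in restarts_by_node(sample).most_common():
    print(node, count)
```

A node that towers over the rest in this tally is not proof of a network fault, but it is a cheap first hint about where to look.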

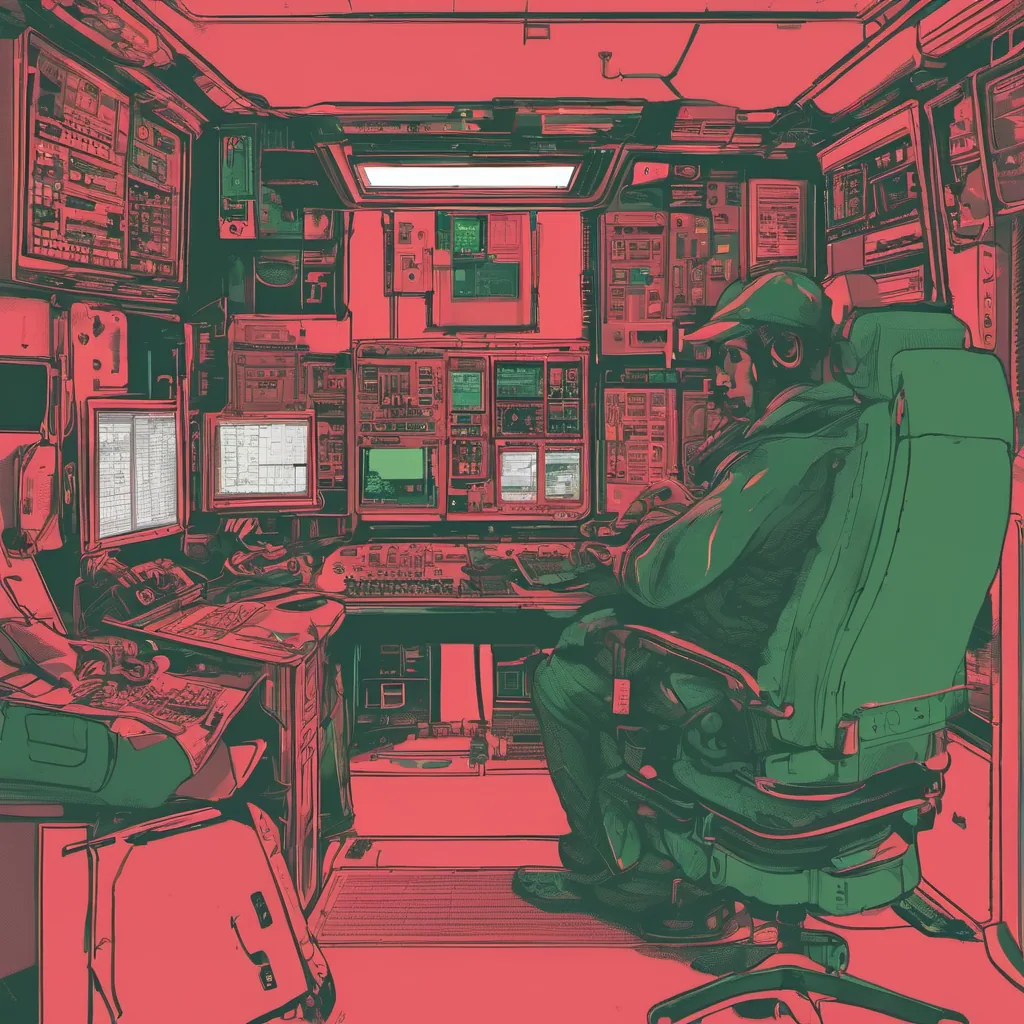

I spent hours troubleshooting this, pulling up the cluster’s configuration files and digging through Docker logs. I tried using node labels to keep workloads off the suspect node but couldn’t get it quite right. It felt like every tweak I made caused a ripple effect somewhere else in the system. Debugging Kubernetes is both an art and a science: a mix of patience and trial-and-error.

Eventually, after a series of small changes and lots of testing, we managed to stabilize the cluster. But the experience left me with a few key takeaways:

- Documentation: Kubernetes has fantastic documentation, but it can still be overwhelming for new users. I found myself repeatedly going back to the official docs, which are great once you know what to look for.

- Operator Patterns: As we moved more workloads into Kubernetes, I started exploring operators, which are custom controllers that manage complex resources. They help in managing stateful applications and simplify operations significantly.

- Resilience Design: Building resilient systems is crucial. It’s not just about having a high-availability architecture but also about understanding the failure modes of your system and how to recover from them.
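
The operator idea from the list above boils down to a reconcile loop: compare the desired state with what is actually running, then act on the difference. Below is a toy sketch of that loop in plain Python. It does not use any real operator framework or the Kubernetes API; `Action`, `reconcile`, `apply_actions`, and the `db-*` names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str  # "create" or "delete"
    name: str


def reconcile(desired, observed):
    """Return the actions that move the observed state toward the desired state."""
    desired, observed = set(desired), set(observed)
    actions = [Action("create", n) for n in sorted(desired - observed)]
    actions += [Action("delete", n) for n in sorted(observed - desired)]
    return actions


def apply_actions(observed, actions):
    """Apply actions to an in-memory stand-in for the cluster and return the new state."""
    state = set(observed)
    for action in actions:
        if action.kind == "create":
            state.add(action.name)
        else:
            state.discard(action.name)
    return state


# Hypothetical replica names for a stateful workload.
desired = {"db-0", "db-1", "db-2"}
observed = {"db-0", "db-3"}

actions = reconcile(desired, observed)
observed = apply_actions(observed, actions)

# A second pass finds nothing left to do, which is what makes the loop safe to repeat.
assert reconcile(desired, observed) == []
```

Because each pass only acts on the remaining difference, the loop can run again after a crash or a partial failure without doing harm. That property ties the operator point back to the resilience one.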

The weekend came, and as I stepped away from my computer, I couldn’t help but think about the other tech news happening around me:

- The accidental stopping of a global cyber attack highlighted the delicate balance between security and operations.

- CRISPR was making waves in genetic engineering, pushing the boundaries of what’s possible. It felt like we were at the same stage with containerization and orchestration.

- A crashed advertisement revealed the extent of facial recognition systems, raising ethical questions that tech leaders must ponder.

But for now, my focus is back on our Kubernetes clusters. Debugging is never fun, but it’s a critical part of building resilient infrastructure. I’m looking forward to the next challenge, knowing that each problem solved makes us stronger and more prepared for whatever comes our way.

Until then, here’s hoping we can keep the lights on, and maybe even enjoy some fireflies this summer.