a patch long applied / a crontab from two thousand two / the cron still fires

Debugging a Migration Disaster on June 1, 2009

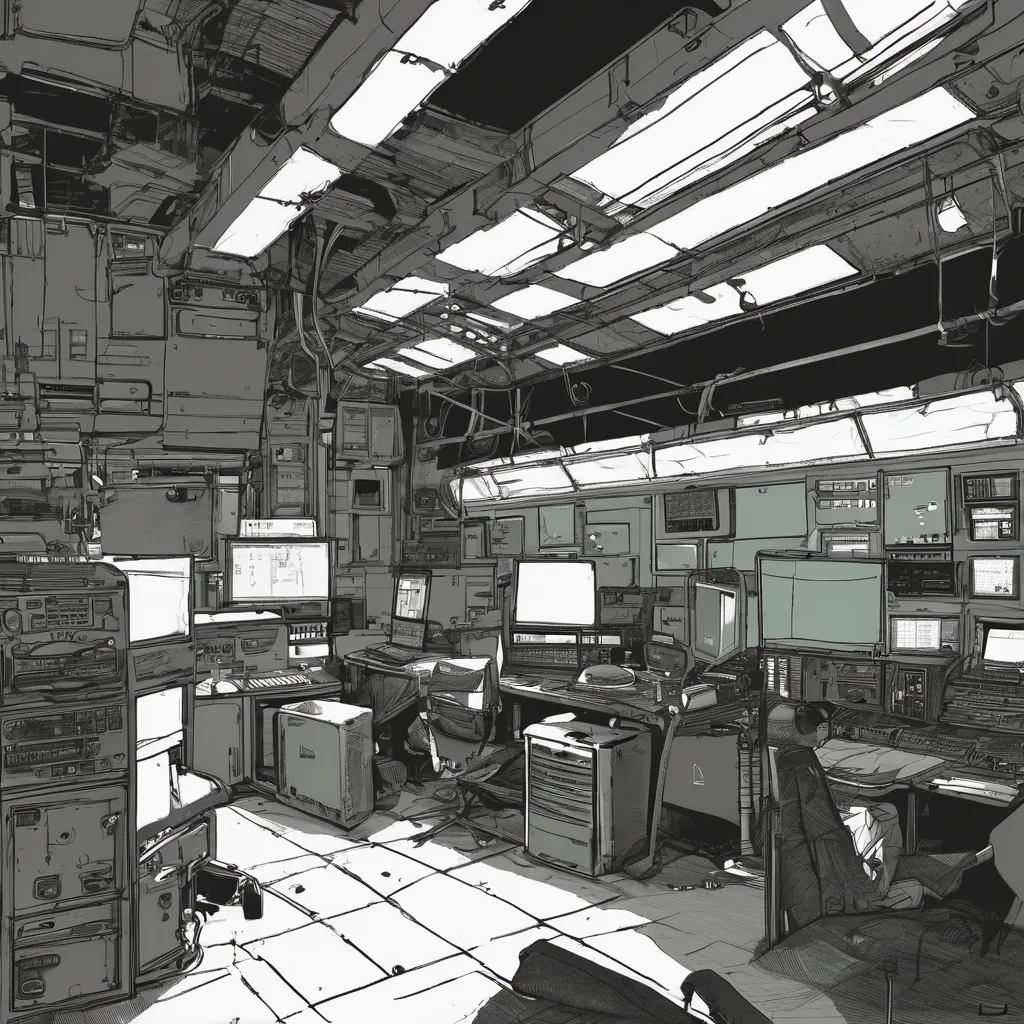

It was a typical Monday morning at the office, and I was sitting at my desk with my trusty monitor staring blankly back at me. The team had just finished a weekend sprint in which we added some exciting new features to our application. I decided to start the week by reviewing those changes and making sure they were solid before going live.

As I clicked through the code, everything looked good. The logic was straightforward and the tests passed, but something didn’t feel right. I had a nagging feeling that I should have taken more time with the migration scripts we were about to run. But what could go wrong, right? It wasn’t as if we had run into any issues so far.

I quickly fired up a local instance of our database to test out the migration scripts and everything looked perfect. So I went ahead and pushed them to production with a bit of trepidation but also a sense of confidence that things would be fine.

Sure enough, within an hour, alarms started going off in our monitoring tools. The application was slow—really slow. People were starting to complain about performance issues on Twitter and our support emails were flooding in. I knew we had a problem; the migration scripts must have done something unexpected to the schema or indexes.

I quickly jumped into production to investigate. As soon as I logged in, my first thought was: “How could this be so simple?” The logs showed that every request to the database was taking significantly longer than before. It was like someone had put a giant delay in front of each query.

After some digging, I realized that our migration had added an index to one of our tables without specifying the right columns. The index did not cover the columns our queries filtered on, so every query involving those columns fell back to a full table scan, causing massive performance issues. How could we have missed this? In my defense, it was a busy weekend and the team was tired, but still.
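A minimal sketch of this failure mode, assuming SQLite as a stand-in (the actual database isn't named here) and a hypothetical `orders` table with made-up column and index names. It compares the query plan when the index sits on the wrong column with the plan once the filtered column is indexed.

```python
import sqlite3

# Hypothetical schema and data; the real table is not described in this post.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT, created_at TEXT)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, status, created_at) VALUES (?, ?, ?)",
    [(i % 100, "open", "2009-06-01") for i in range(1000)],
)


def plan(conn, sql):
    """Return the human-readable detail of EXPLAIN QUERY PLAN as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    # The detail text is the last column of each plan row.
    return " | ".join(row[-1] for row in rows)


query = "SELECT * FROM orders WHERE customer_id = 42"

# Index on a column the query does not filter on: the planner cannot use it.
conn.execute("CREATE INDEX idx_orders_wrong ON orders (status)")
wrong_plan = plan(conn, query)

# Index on the column the query actually filters on.
conn.execute("DROP INDEX idx_orders_wrong")
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
right_plan = plan(conn, query)

print("wrong index:", wrong_plan)
print("right index:", right_plan)
```

With the misplaced index the plan reports a scan of the whole table. With the index on `customer_id` it reports a search using that index. Most databases have an equivalent `EXPLAIN` command, though the output format differs.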

The good news was that fixing it wasn’t too difficult. I rolled back the migration and ran a new one that created the index on the correct columns. After an hour of waiting for the index to build, things started coming back online. The support tickets slowly dwindled as users noticed performance improving.
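The rollback-then-fix step can be sketched as a reversible migration with a check on what the index actually covers. This is an illustration only: SQLite, the `orders` table, the `up`/`down` function names, and the index name are all assumptions, not the scripts we ran.

```python
import sqlite3


def up(conn):
    """Apply the corrected migration: index the column queries filter on."""
    conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")


def down(conn):
    """Roll the migration back by dropping the index."""
    conn.execute("DROP INDEX IF EXISTS idx_orders_customer")


def index_columns(conn, index_name):
    """Return the column names an index covers, in order (empty if missing)."""
    # PRAGMA index_info rows are (seqno, cid, name).
    return [row[2] for row in conn.execute(f"PRAGMA index_info({index_name})")]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT)"
)

up(conn)
cols_after_up = index_columns(conn, "idx_orders_customer")

down(conn)
cols_after_down = index_columns(conn, "idx_orders_customer")

print(cols_after_up, cols_after_down)
```

Checking the indexed columns right after the migration runs, ideally against a copy of production data, is a cheap way to catch a wrong column list before users do.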

Reflecting on this, I realized that while Git and Agile practices were spreading, the importance of thorough testing and code reviews couldn’t be overstated. We had been so focused on delivering new features quickly that we didn’t pay enough attention to the underlying infrastructure changes. This incident was a wake-up call for us to improve our processes.

The tech world in 2009 was changing rapidly, with GitHub and AWS EC2/S3 gaining traction and Hadoop becoming mainstream. We were living through some of those changes ourselves, but it was easy to get caught up in the excitement of new tools without thinking about the basics.

As we closed out the day, I couldn’t help but think about how much technology had advanced since Michael Jackson’s last big performance. It made me wonder what other lessons from that era were still relevant today. For now, though, it was just time to get some sleep and start fresh tomorrow.
