$ cat post/the-rollback-succeeded-/-we-merged-without-a-review-/-the-merge-was-final.md

the rollback succeeded / we merged without a review / the merge was final

Title: August 2022 Tech Journal: A Week of Surprises and Scrutiny

August 1, 2022. It feels like the whole world is talking about AI. Generative models were barely a blip when we shipped our latest feature, but now they’re everywhere. I can’t help but wonder whether this is just another fad or whether something fundamental has changed. Meanwhile, I’m still trying to come to terms with the news that physical buttons apparently outperform touchscreens in new cars.

The last week was packed with unexpected challenges and lessons learned. Here’s a quick rundown of what we tackled:

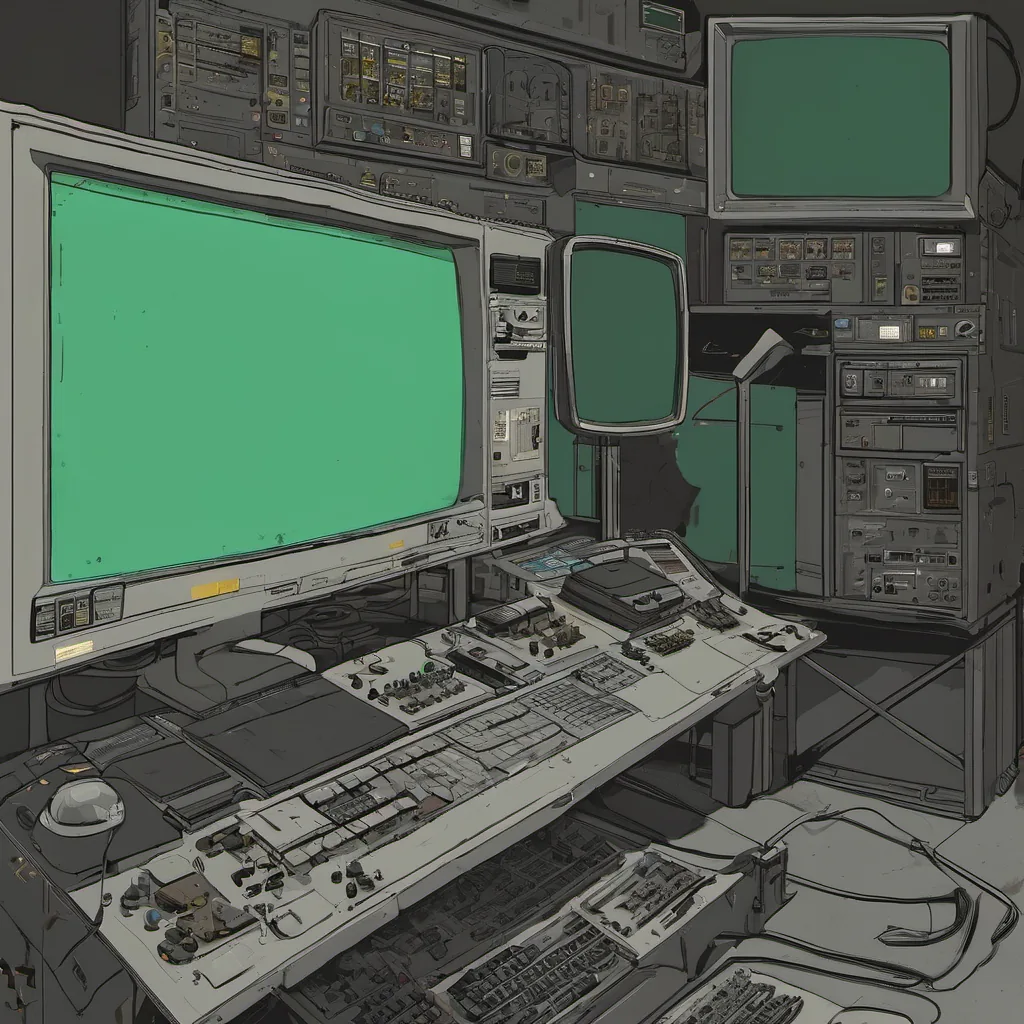

Serverless vs. Big Iron

One team is having a heated debate about whether to move their critical application from serverless functions to a monolith on a single big server. The argument centers on predictability, performance guarantees, and control over the hardware environment. I’ve seen both sides: serverless offers more flexibility but has its quirks, such as cold starts and execution time limits. A big server gives you full control but can be a pain in the ass to manage.

We ultimately decided to stick with serverless for now, as it aligns better with our current ops and cost goals. But every day we face some new issue that makes us question this choice. It’s like driving an old car—it works, but you’re always fixing something.

FinOps Demands

Cost pressure is real. Last week, a team got hit by a sudden $10k overage from AWS. It turns out their logging service was capturing every single API call and storing it indefinitely. We all sighed, knowing we’ve seen this story too many times. The team now has to implement FinOps principles more rigorously—monitoring costs, setting budgets, and optimizing usage.
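To make the "storing it indefinitely" problem concrete, here is a minimal Python sketch of how stored log volume grows with and without a retention window, plus a simple budget check. Everything in it is an assumption for illustration: the `LogCostModel` fields, the ingest volume, the flat per-GB price, and the 80% warning threshold are hypothetical and are not the figures from the incident or from any AWS price list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LogCostModel:
    """Hypothetical inputs for a back-of-the-envelope log storage estimate."""
    gb_ingested_per_day: float
    storage_price_per_gb_month: float   # assumed flat rate, not a real price
    retention_days: Optional[int]       # None means logs are kept forever


def stored_gb(model: LogCostModel, day: int) -> float:
    """GB held in storage on a given day (day 1 is the first day of logging)."""
    if model.retention_days is None:
        window = day                    # nothing ever expires
    else:
        window = min(day, model.retention_days)
    return model.gb_ingested_per_day * window


def monthly_storage_cost(model: LogCostModel, day: int) -> float:
    """Monthly storage bill if the volume held on `day` is billed for a month."""
    return stored_gb(model, day) * model.storage_price_per_gb_month


def budget_status(spend: float, budget: float, warn_ratio: float = 0.8) -> str:
    """Classify spend against a budget: 'ok', 'warn', or 'over'."""
    if spend >= budget:
        return "over"
    if spend >= warn_ratio * budget:
        return "warn"
    return "ok"


if __name__ == "__main__":
    forever = LogCostModel(50.0, 0.03, retention_days=None)
    capped = LogCostModel(50.0, 0.03, retention_days=30)
    for label, model in (("no retention", forever), ("30-day retention", capped)):
        cost = monthly_storage_cost(model, day=365)
        print(label, round(cost, 2), budget_status(cost, budget=500.0))
```

With no retention the stored volume grows linearly forever, while a retention window caps it after the first `retention_days` days. That cap is what makes the bill predictable enough to set a budget against.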

This is the reality of cloud ops: you can’t just set it and forget it. You need to be constantly vigilant, especially with AI models that can easily eat up resources if left unchecked.

DORA Metrics

Our dev team has been focusing on the four DORA metrics: deployment frequency, lead time for changes, mean time to recovery (MTTR), and change failure rate. We’re seeing improvements but still have room for growth. One of the biggest takeaways is that our deployment process could be far more automated. Manual steps are error-prone and slow every release down.
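As a rough illustration of how the four metrics listed above can be derived from deployment records, here is a small Python sketch. The `Deployment` record and the `dora_summary` function are hypothetical names invented for this example, not our actual tooling. It also makes a simplifying assumption: recovery time is measured from the failed deployment to the moment service was restored, whereas a real pipeline would more likely use incident start and end times.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import List, Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # caused an incident or rollback?
    restored_at: Optional[datetime] = None   # when service recovered, if it failed


def dora_summary(deploys: List[Deployment], period_days: int) -> dict:
    """Compute the four DORA metrics over a reporting period."""
    if not deploys or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys
    ]
    failures = [d for d in deploys if d.failed]
    # Assumption: recovery is measured from the failed deploy to restoration.
    recovery_hours = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deploys) / period_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": len(failures) / len(deploys),
        "mttr_hours": mean(recovery_hours) if recovery_hours else None,
    }


if __name__ == "__main__":
    t0 = datetime(2022, 8, 1, 9, 0)
    hour, day = timedelta(hours=1), timedelta(days=1)
    sample = [
        Deployment(t0, t0 + 2 * hour),
        Deployment(t0, t0 + 4 * hour, failed=True, restored_at=t0 + 5 * hour),
        Deployment(t0 + day, t0 + day + 6 * hour),
    ]
    print(dora_summary(sample, period_days=7))
```

The median is used for lead time so that a single slow change does not dominate the number. MTTR stays a plain mean because that is what the name says, though the two can tell quite different stories on a small sample.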

We’ve also been working on improving MTTR by refining our monitoring tools. Incidents are frustrating when they happen, but each one is valuable practice for tightening our response times.

The Big [Censored] Theory

There was a heated discussion about whether we should move some of our services to a single large server as suggested in one of the Hacker News stories. I’m not entirely convinced this is a good idea yet. While it might simplify management, it could also introduce scalability issues and reduce fault isolation.

We need to weigh the benefits against potential downsides carefully before making such a significant change. For now, we’re keeping an eye on our services’ performance and capacity needs while exploring other options like hybrid cloud setups or better resource allocation strategies within our current architecture.

Wrapping Up

Overall, it’s been a busy and reflective week. Tech is always evolving, but the fundamentals—like cost management, reliability, and continuous improvement—remain crucial. I’m looking forward to seeing how AI shapes our work in the coming months and years. For now, let’s focus on what we can control: making our systems better day by day.

That’s it for August 1st. Let’s see where this month takes us!